ESET’s Jake Moore used smart glasses, deepfakes and face swaps to ‘hack’ widely-used facial recognition systems – and he’ll demo it all at RSAC 2026

13 Mar 2026


Facial recognition is increasingly embedded in everything from airport boarding gates to bank onboarding flows. The widely-held assumption is that a face is difficult to fake and that matching a live face to a trusted source is a reliable identity signal.

Jake Moore, ESET Global Cybersecurity Advisor, recently put this assumption through several practical stress tests. His experiments showed that this powerful technology can be both misused and defeated.

In one test, Jake used a pair of modified off-the-shelf smart glasses that can identify people in real time. He walked through a public space, captured people’s faces and compared them against publicly available online data sources, with identity matches returned within seconds. The names and social media profiles were pulled from nothing more than people’s glances.

This capability could come in handy if, say, a conference attendee struggles to remember people’s names, but it’s far less palatable when you consider what someone with ill intentions could do with that information.

The second demo had a different spin. It went after financial services, turning a fraud prevention system against itself. Using AI-generated images and freely available software, Jake created a fictitious face to open an actual bank account. The bank’s facial recognition and eKYC (electronic know your customer) platform accepted it as a real person.

After proving the point, Jake closed the account and shared all the information with the bank, which has since shut down that specific method of identity abuse. But one broader question remains: how many financial institutions may still be vulnerable to this kind of attack?

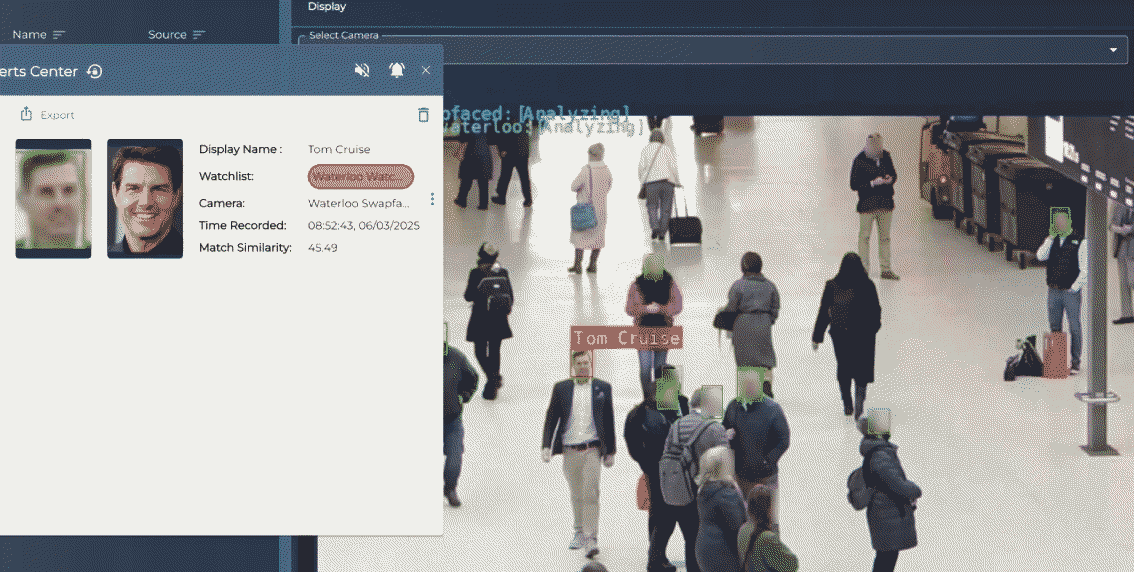

Finally, Jake added himself to a facial recognition watchlist at a busy train station in London. He then walked through the monitored area while running real-time face swap software that overlaid Tom Cruise’s likeness onto Jake’s own in the camera feed. The system, which can be used by the UK police, never recognized or flagged him. It was as if he simply wasn’t there, and anyone actively searching for him on CCTV would have seen the actor instead.

There’s much more to these experiments than we can cover here – they’re all part of Jake’s talk at RSAC 2026, which takes place in San Francisco from March 23rd-26th, 2026. If you’re at the conference, consider attending the talk – after all, seeing this all work against an in-production system in a live environment is different from ‘just’ reading about it. To learn more, including about other ESET talks at the conference, visit this website.

The big picture

Facial recognition systems are being deployed with an implicit trust that doesn’t match their actual resilience when someone tries to break them – even when the attacker uses only off-the-shelf consumer hardware and easily available software, just as Jake did. Identity verification that depends solely on a face match clearly carries more risk than most people and organizations realize.

The experiments also send a message to vendors of facial recognition systems and to anyone responsible for identity verification systems. Among other things, the systems should be tested in attack simulation settings and under other adversarial conditions. The technology behind facial recognition is fragile in ways that matter when someone attempts to subvert it.