This submit reveals how Amazon EMR 7.12 could make your Apache Spark and Iceberg workloads as much as 4.5x quicker efficiency.

The Amazon EMR runtime for Apache Spark offers a high-performance runtime setting with full API compatibility with open supply Apache Spark and Apache Iceberg. Amazon EMR on EC2, Amazon EMR Serverless, Amazon EMR on Amazon EKS, Amazon EMR on AWS Outposts and AWS Glue use the optimized runtimes.

Our benchmarks present Amazon EMR 7.12 runs TPC-DS 3 TB workloads 4.5x quicker than open supply Spark 3.5.6 with Iceberg 1.10.0.

Efficiency enhancements embrace optimizations for metadata caching, parallel I/O, adaptive question planning, knowledge sort dealing with, and fault tolerance. There have been additionally some Iceberg particular regressions round knowledge scans that we recognized and stuck.

These optimizations allow you to match Parquet efficiency on Amazon EMR whereas conserving the important thing options of Iceberg key options: ACID transactions, time journey, and schema evolution.

Benchmark outcomes in comparison with open supply

To evaluate the efficiency of the Spark engine with the Iceberg desk format, we carried out benchmark exams utilizing the 3 TB TPC-DS dataset, model 2.13, a preferred trade commonplace benchmark. Benchmark exams for the Amazon EMR runtime for Apache Spark and Apache Iceberg have been performed on Amazon EMR 7.12 EC2 clusters in comparison with open supply Apache Spark 3.5.6 and Apache Iceberg 1.10.0 on EC2 clusters.

Be aware: Our outcomes derived from the TPC-DS dataset should not instantly akin to the official TPC-DS outcomes because of setup variations.

The setup directions and technical particulars can be found in our GitHub repository. To reduce the affect of exterior catalogs like AWS Glue and Hive, we used the Hadoop catalog for the Iceberg tables. This makes use of the underlying file system, particularly Amazon S3, because the catalog. We will outline this setup by configuring the property spark.sql.catalog.. The actual fact tables used the default partitioning by the date column, which differ from 200–2,100 partitions. No precalculated statistics have been used for these tables.

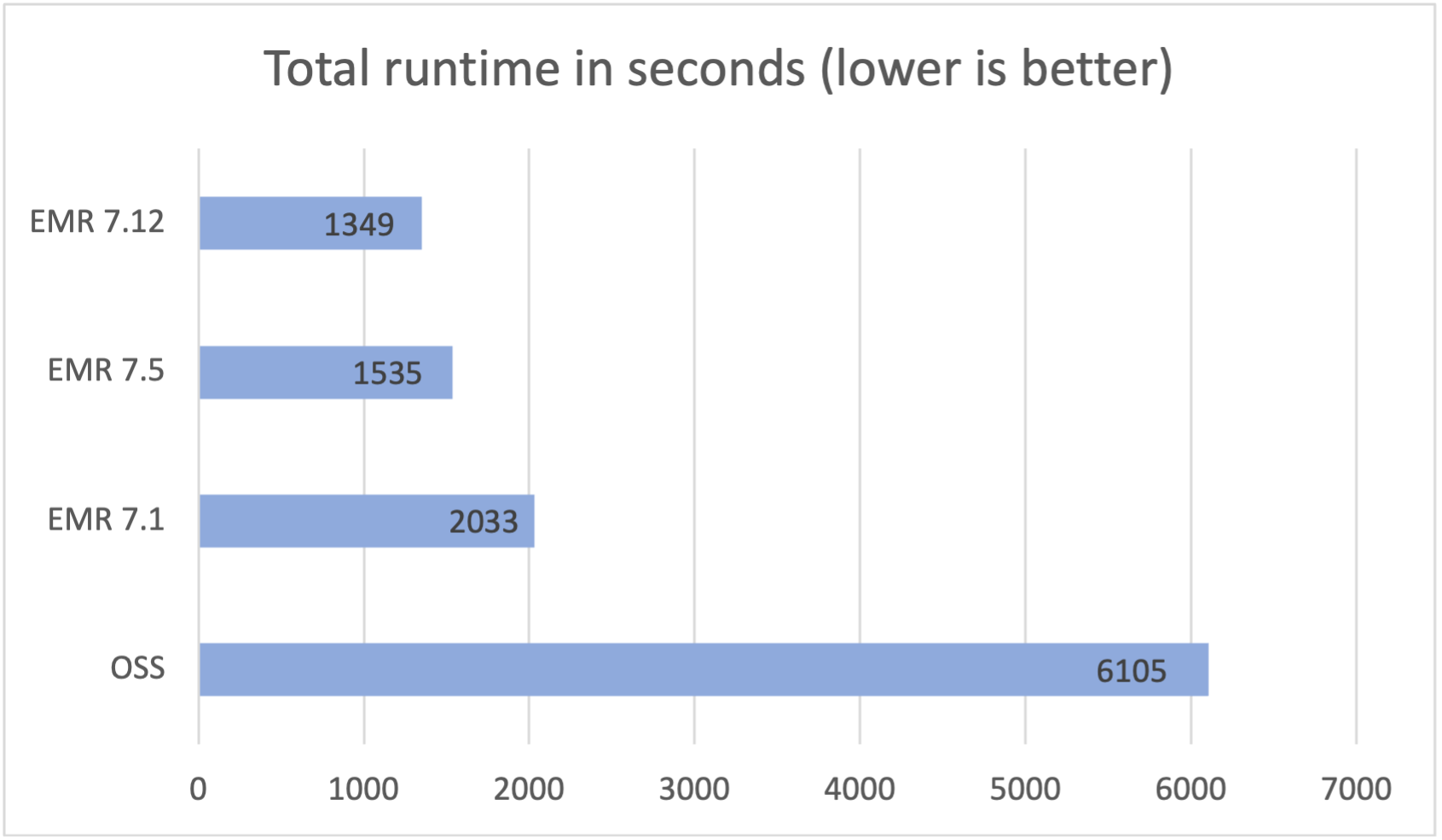

We ran a complete of 104 SparkSQL queries in 3 sequential rounds, and the typical runtime of every question throughout these rounds was taken for comparability. The typical runtime for the three rounds on Amazon EMR 7.12 with Iceberg enabled was 0.37 hours, demonstrating a 4.5x velocity enhance in comparison with open supply Spark 3.5.6 and Iceberg 1.10.0. The next determine presents the whole runtimes in seconds.

The next desk summarizes the metrics.

| Metric | Amazon EMR 7.12 on EC2 | Amazon EMR 7.5 on EC2 | Open supply Apache Spark 3.5.6 and Apache Iceberg 1.10.0 |

| Common runtime in seconds | 1349.62 | 1535.62 | 6113.92 |

| Geometric imply over queries in seconds | 7.45910 | 8.30046 | 22.31854 |

| Value* | $4.81 | $5.47 | $17.65 |

*Detailed price estimates are mentioned later on this submit.

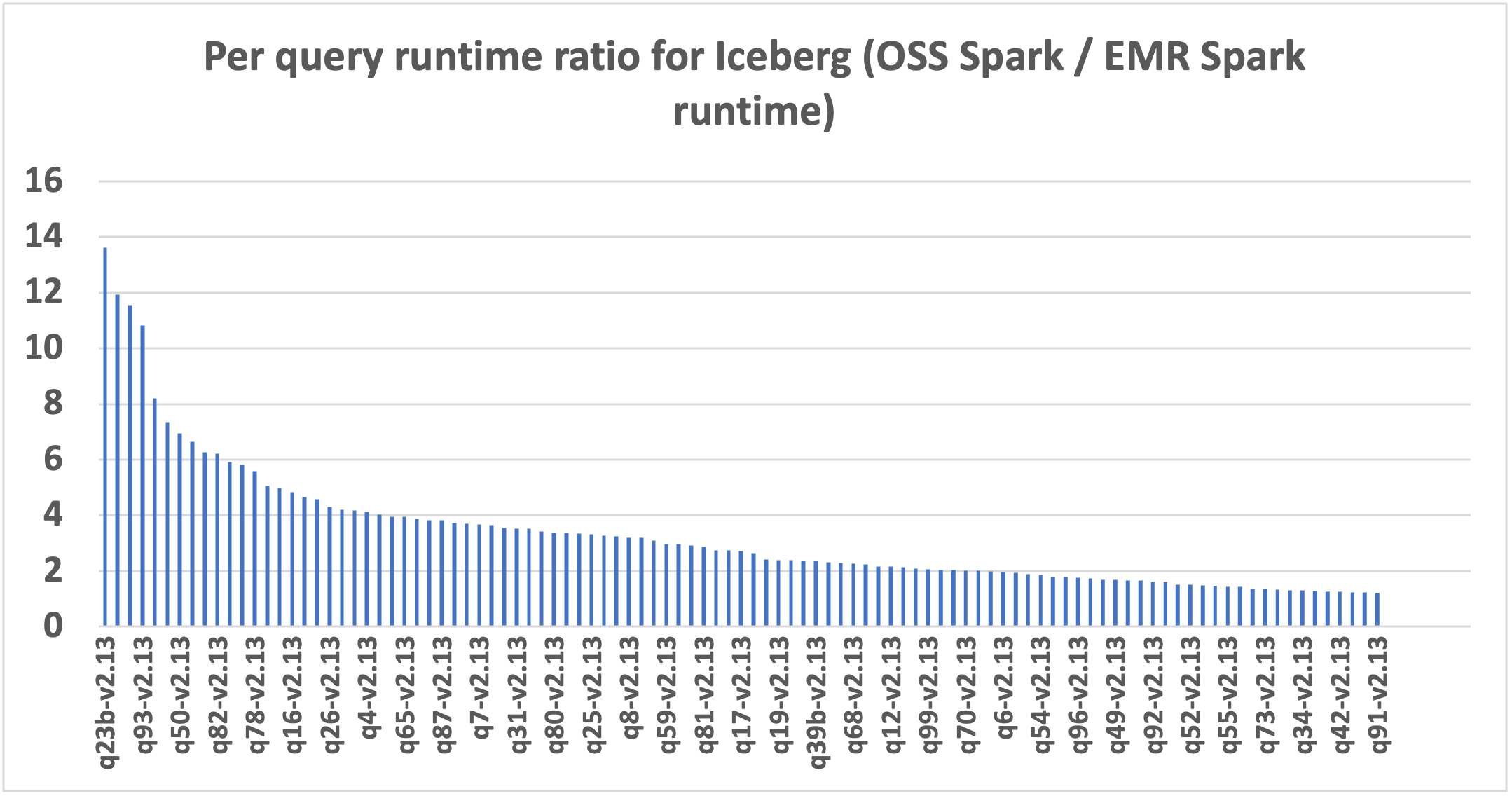

The next chart demonstrates the per-query efficiency enchancment of Amazon EMR 7.12 relative to open supply Spark 3.5.6 and Iceberg 1.10.0. The extent of the speedup varies from one question to a different, with the quickest as much as 13.6x quicker for q23b, with Amazon EMR outperforming open supply Spark with Iceberg tables. The horizontal axis arranges the TPC-DS 3TB benchmark queries in descending order based mostly on the efficiency enchancment seen with Amazon EMR, and the vertical axis depicts the magnitude of this speedup as a ratio.

Value comparability breakdown

Our benchmark offers the whole runtime and geometric imply knowledge to evaluate the efficiency of Spark and Iceberg in a posh, real-world resolution assist state of affairs. For extra insights, we additionally study the associated fee side. We calculate price estimates utilizing formulation that account for EC2 On-Demand cases, Amazon Elastic Block Retailer (Amazon EBS), and Amazon EMR bills.

- Amazon EC2 price (consists of SSD price) = variety of cases * r5d.4xlarge hourly charge * job runtime in hours

- 4xlarge hourly charge = $1.152 per hour

- Root Amazon EBS price = variety of cases * Amazon EBS per GB-hourly charge * root EBS quantity measurement * job runtime in hours

- Amazon EMR price = variety of cases * r5d.4xlarge Amazon EMR price * job runtime in hours

- 4xlarge Amazon EMR price = $0.27 per hour

- Complete price = Amazon EC2 price + root Amazon EBS price + Amazon EMR price

The calculations reveal that the Amazon EMR 7.12 benchmark yields a 3.6x price effectivity enchancment over open supply Spark 3.5.6 and Iceberg 1.10.0 in working the benchmark job.

| Metric | Amazon EMR 7.12 | Amazon EMR 7.5 | Open supply Apache Spark 3.5.6 and Apache Iceberg 1.10.0 |

| Runtime in seconds | 1349.62 | 1535.62 | 6113.92 |

|

Variety of EC2 cases (Consists of major node) |

9 | 9 | 9 |

| Amazon EBS Measurement | 20gb | 20gb | 20gb |

|

Amazon EC2 (Complete runtime price) |

$3.89 | $4.42 | $17.61 |

| Amazon EBS price | $0.01 | $0.01 | $0.04 |

| Amazon EMR price | $0.91 | $1.04 | $0 |

| Complete price | $4.81 | $5.47 | $17.65 |

| Value financial savings | Amazon EMR 7.12 is 3.6x higher | Amazon EMR 7.5 is 3.2x higher | Baseline |

Along with the time-based metrics mentioned up to now, knowledge from Spark occasion logs present that Amazon EMR scanned roughly 4.3x much less knowledge from Amazon S3 and 5.3x fewer data than the open supply model within the TPC-DS 3 TB benchmark. This discount in Amazon S3 knowledge scanning contributes on to price financial savings for Amazon EMR workloads.

Run open supply Apache Spark benchmarks on Apache Iceberg tables

We used separate EC2 clusters, every geared up with 9 r5d.4xlarge cases, for testing each open supply Spark 3.5.6 and Amazon EMR 7.12 for Iceberg workload. The first node was geared up with 16 vCPU and 128 GB of reminiscence, and the 8 employee nodes collectively had 128 vCPU and 1024 GB of reminiscence. We performed exams utilizing the Amazon EMR default settings to showcase the everyday consumer expertise and minimally adjusted the settings of Spark and Iceberg to keep up a balanced comparability.

The next desk summarizes the Amazon EC2 configurations for the first node and eight employee nodes of sort r5d.4xlarge.

| EC2 Occasion | vCPU | Reminiscence (GiB) | Occasion storage (GB) | EBS root quantity (GB) |

| r5d.4xlarge | 16 | 128 | 2 x 300 NVMe SSD | 20 GB |

Conditions

The next conditions are required to run the benchmarking:

- Utilizing the directions within the emr-spark-benchmark GitHub repository, arrange the TPC-DS supply knowledge in your S3 bucket and in your native laptop.

- Construct the benchmark software following the steps supplied in Steps to construct spark-benchmark-assembly software and replica the benchmark software to your S3 bucket. Alternatively, copy spark-benchmark-assembly-3.5.6.jar to your S3 bucket.

- Create Iceberg tables from the TPC-DS supply knowledge. Comply with the directions on GitHub to create Iceberg tables utilizing the Hadoop catalog. For instance, the next code makes use of an Amazon EMR 7.12 cluster with Iceberg enabled to create the tables:

Be aware: The Hadoop catalog warehouse location and database identify from the previous step. We use the identical Iceberg tables to run benchmarks with Amazon EMR 7.12 and open supply Spark.

This benchmark software is constructed from the department tpcds-v2.13_iceberg. In the event you’re constructing a brand new benchmark software, swap to the right department after downloading the supply code from the GitHub repository.

Create and configure a YARN cluster on Amazon EC2

To match Iceberg efficiency between Amazon EMR on Amazon EC2 and open supply Spark on Amazon EC2, comply with the directions within the emr-spark-benchmark GitHub repository to create an open supply Spark cluster on Amazon EC2 utilizing Flintrock with 8 employee nodes.

Primarily based on the cluster choice for this check, the next configurations are used:

Be sure that to switch the placeholder

Run the TPC-DS benchmark with Apache Spark 3.5.6 and Apache Iceberg 1.10.0

Full the next steps to run the TPC-DS benchmark:

- Log in to the open supply cluster major node utilizing

flintrock login $CLUSTER_NAME. - Submit your Spark job:

- Select the right Iceberg catalog warehouse location and database that has the created Iceberg tables.

- The outcomes are created in

s3://./benchmark_run - You may monitor progress in

/media/ephemeral0/spark_run.log.

Summarize the outcomes

After the Spark job finishes, retrieve the check outcome file from the output S3 bucket at s3://. This may be accomplished both via the Amazon S3 console by navigating to the desired bucket location or through the use of the Amazon Command Line Interface (AWS CLI). The Spark benchmark software organizes the information by making a timestamp folder and putting a abstract file inside a folder labeled abstract.csv. The output CSV information comprise 4 columns with out headers:

- Question identify

- Median time

- Minimal time

- Most time

With the information from 3 separate check runs with 1 iteration every time, we will calculate the typical and geometric imply of the benchmark runtimes.

Run the TPC-DS benchmark with Amazon EMR runtime for Apache Spark

Many of the directions are much like Steps to run Spark Benchmarking with just a few Iceberg-specific particulars.

Conditions

Full the next prerequisite steps:

- Run

aws configureto configure the AWS CLI shell to level to the benchmarking AWS account. Confer with Configure the AWS CLI for directions. - Add the benchmark software JAR file to Amazon S3.

Deploy Amazon EMR cluster and run the benchmark job

Full the next steps to run the benchmark job:

- Use the AWS CLI command as proven in Deploy EMR on EC2 Cluster and run benchmark job to deploy an Amazon EMR on EC2 cluster. Be sure that to allow Iceberg. See Create an Iceberg cluster for extra particulars. Select the right Amazon EMR model, root quantity measurement, and similar useful resource configuration because the open supply Flintrock setup. Confer with create-cluster for an in depth description of the AWS CLI choices.

- Retailer the cluster ID from the response. We’d like this for the subsequent step.

- Submit the benchmark job in Amazon EMR utilizing

add-stepsfrom the AWS CLI:- Substitute

- The benchmark software is at

s3://./spark-benchmark-assembly-3.5.6.jar - Select the right Iceberg catalog warehouse location and database that has the created Iceberg tables. This needs to be the identical because the one used for the open supply TPC-DS benchmark run.

- The outcomes will probably be in

s3://./benchmark_run

- Substitute

Summarize the outcomes

After the step is full, you’ll be able to see the summarized benchmark outcome at s3:// in the identical method because the earlier run and compute the typical and geometric imply of the question runtimes.

Clear up

To assist forestall future prices, delete the sources you created by following the directions supplied within the Cleanup part of the GitHub repository.

Abstract

Amazon EMR optimizes the runtime for Spark when used with Iceberg tables, attaining 4.5x quicker efficiency than open supply Apache Spark 3.5.6 and Apache Iceberg 1.10.0 with Amazon EMR 7.12 on TPC-DS 3 TB, v2.13. This represents a big development from Amazon EMR 7.5, which delivered 3.6x quicker efficiency and closes the hole to parquet efficiency on Amazon EMR so clients can use the advantages of Iceberg and not using a efficiency penalty.

We encourage you to maintain updated with the most recent Amazon EMR releases to totally profit from ongoing efficiency enhancements.

To remain knowledgeable, subscribe to the RSS feed for the AWS Large Information Weblog, the place you could find updates on the Amazon EMR runtime for Spark and Iceberg, in addition to recommendations on configuration finest practices and tuning suggestions.

In regards to the authors

Atul Felix Payapilly is a software program improvement engineer for Amazon EMR at Amazon Net Providers.

Atul Felix Payapilly is a software program improvement engineer for Amazon EMR at Amazon Net Providers.

Akshaya KP is a software program improvement engineer for Amazon EMR at Amazon Net Providers.

Akshaya KP is a software program improvement engineer for Amazon EMR at Amazon Net Providers.

Hari Kishore Chaparala is a software program improvement engineer for Amazon EMR at Amazon Net Providers.

Hari Kishore Chaparala is a software program improvement engineer for Amazon EMR at Amazon Net Providers.

Giovanni Matteo is the Senior Supervisor for the Amazon EMR Spark and Iceberg group.

Giovanni Matteo is the Senior Supervisor for the Amazon EMR Spark and Iceberg group.