AI-driven drone from the University of Klagenfurt uses an IDS uEye camera for real-time, object-relative navigation, enabling safer, more efficient and more precise inspections.

The inspection of critical infrastructure such as power plants, bridges or industrial complexes is essential to ensure its safety, reliability and long-term functionality. Traditional inspection methods always require people to work in areas that are difficult to access or dangerous. Autonomous mobile robots offer great potential for making inspections more efficient, safer and more accurate. Uncrewed aerial vehicles (UAVs) such as drones in particular have become established as promising platforms, as they can be used flexibly and can reach even hard-to-access areas from the air. One of the biggest challenges here is to navigate the drone precisely relative to the objects to be inspected in order to reliably capture high-resolution image data or other sensor data.

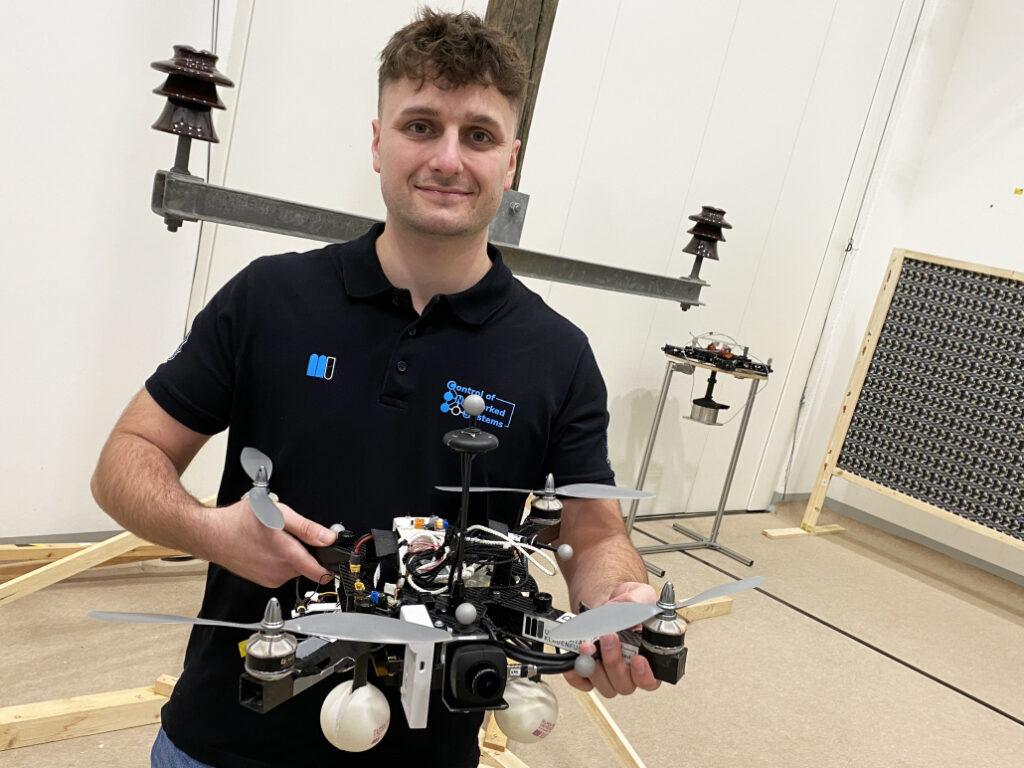

A research group at the University of Klagenfurt has designed a real-time capable drone based on object-relative navigation using artificial intelligence. Also on board: a USB3 Vision industrial camera from the uEye LE family from IDS Imaging Development Systems GmbH.

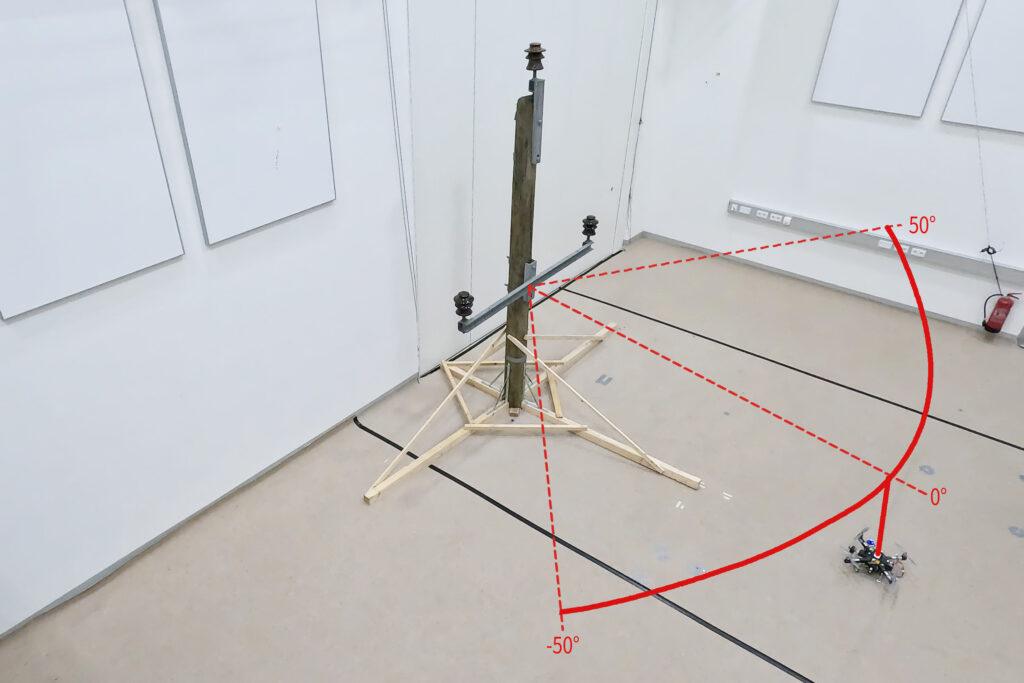

As part of the research project, which was funded by the Austrian Federal Ministry for Climate Action, Environment, Energy, Mobility, Innovation and Technology (BMK), the drone must autonomously recognise what is a power pole and what is an insulator on the power pole. It will fly around the insulator at a distance of three meters and take pictures. “Precise localisation is important such that the camera recordings can also be compared across multiple inspection flights,” explains Thomas Georg Jantos, PhD student and member of the Control of Networked Systems research group at the University of Klagenfurt. The prerequisite for this is that object-relative navigation must be able to extract so-called semantic information about the objects in question from the raw sensory data captured by the camera. Semantic information makes raw data, in this case the camera images, “understandable” and makes it possible not only to capture the environment, but also to correctly identify and localise relevant objects.

In this case, this means that an image pixel is not only understood as an independent colour value (e.g. an RGB value), but as part of an object, e.g. an insulator. In contrast to conventional GNSS (Global Navigation Satellite System), this approach provides not only a position in space, but also a precise relative position and orientation with respect to the object to be inspected (e.g. “Drone is located 1.5 m to the left of the upper insulator”).
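To make the idea of an object-relative position concrete, here is a minimal Python sketch. It assumes, purely for illustration, that a pose estimator returns the insulator's pose in the camera frame as a rotation matrix `R_co` and a translation `t_co`; these names, the frame conventions and the example numbers are assumptions and are not taken from the Klagenfurt system. Inverting that pose gives the camera position expressed in the insulator's own frame.

```python
def camera_position_in_object_frame(R_co, t_co):
    """Return the camera origin expressed in the object frame.

    Assumed convention (hypothetical): a point p_o in the object frame
    maps to the camera frame as p_c = R_co * p_o + t_co. Setting p_c = 0
    (the camera origin) gives p_o = -R_co^T * t_co.
    """
    return [
        -sum(R_co[k][i] * t_co[k] for k in range(3))
        for i in range(3)
    ]


# Illustrative values only: the object frame is rotated by 90 degrees
# about the z-axis relative to the camera frame, and the object origin
# lies 1.5 m along the camera's y-axis.
R_CO = [
    [0.0, -1.0, 0.0],
    [1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0],
]
T_CO = [0.0, 1.5, 0.0]

position = camera_position_in_object_frame(R_CO, T_CO)
```

With these example values the camera sits at -1.5 m along the object's x-axis. If the object's x-axis is taken to point to the right of the insulator, that corresponds to a statement such as "1.5 m to the left of the insulator", independent of where the insulator stands in a global coordinate system.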

The key requirement is that image processing and data interpretation must run with minimal latency so that the drone can adapt its navigation and interaction to the specific conditions and requirements of the inspection task in real time.

Semantic information through intelligent image processing

Object recognition, object classification and object pose estimation are performed using artificial intelligence in image processing. “In contrast to GNSS-based inspection approaches using drones, our AI with its semantic information enables the infrastructure to be inspected from certain reproducible viewpoints,” explains Thomas Jantos. “In addition, the chosen approach does not suffer from the usual GNSS problems such as multi-pathing and shadowing caused by large infrastructures or valleys, which can lead to signal degradation and thus to safety risks.”

How much AI fits into a small quadcopter?

The hardware setup consists of a TWINs Science Copter platform equipped with a Pixhawk PX4 autopilot, an NVIDIA Jetson Orin AGX 64GB DevKit as on-board computer and a USB3 Vision industrial camera from IDS. “The challenge is to get the artificial intelligence onto the small helicopters. The computers on the drone are still too slow compared to the computers used to train the AI. Even after the first successful tests, this is still the subject of current research,” says Thomas Jantos, describing the problem of further optimising the high-performance AI model for use on the on-board computer.

The camera, on the other hand, delivers good basic data right away, as the tests in the university’s own drone hall show. When selecting a suitable camera model, it was not just a question of meeting the requirements in terms of speed, size, protection class and, last but not least, price. “The camera’s capabilities are essential for the inspection system’s innovative AI-based navigation algorithm,” says Thomas Jantos. He opted for the U3-3276LE C-HQ model, a space-saving and cost-effective project camera from the uEye LE family. The integrated Sony Pregius IMX265 sensor is probably the best CMOS image sensor in the 3 MP class and enables a resolution of 3.19 megapixels (2064 x 1544 px) with a frame rate of up to 58.0 fps. The 1/1.8″ sensor’s global shutter, which, unlike a rolling shutter, does not produce any ‘distorted’ images at these short exposure times, is decisive for the performance of the sensor. “To ensure a safe and robust inspection flight, high image quality and frame rates are essential,” Thomas Jantos emphasises. As a navigation camera, the uEye LE provides the embedded AI with the comprehensive image data that the on-board computer needs to calculate the relative position and orientation with respect to the object to be inspected. Based on this information, the drone is able to correct its pose in real time.
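As a quick plausibility check of the sensor figures quoted above, the following Python sketch recomputes the pixel count and estimates the raw data rate at full resolution and maximum frame rate. The value of one byte per pixel (8-bit raw output) is an assumption made for this illustration and is not stated in the article; other pixel formats would scale the result accordingly.

```python
def raw_data_rate_mb_per_s(width, height, fps, bytes_per_pixel=1):
    """Uncompressed image data rate in megabytes (10^6 bytes) per second.

    bytes_per_pixel=1 is an assumption (8-bit raw), not a figure
    from the article.
    """
    return width * height * fps * bytes_per_pixel / 1e6


# Figures from the text: 2064 x 1544 px at up to 58.0 fps.
WIDTH, HEIGHT, MAX_FPS = 2064, 1544, 58.0

pixels = WIDTH * HEIGHT        # total pixels per frame
megapixels = pixels / 1e6      # rounds to the 3.19 MP quoted above
rate = raw_data_rate_mb_per_s(WIDTH, HEIGHT, MAX_FPS)
```

Under this assumption the camera would deliver roughly 185 MB of raw image data per second at full resolution, which gives a feel for the data volume the on-board computer has to ingest and for why on-camera downsampling can be attractive.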

The IDS camera is connected to the on-board computer via a USB3 interface. “With the help of the IDS peak SDK, we can integrate the camera and its functionalities very easily into the ROS (Robot Operating System) and thus into our drone,” explains Thomas Jantos. IDS peak also enables efficient raw image processing and simple adjustment of recording parameters such as auto exposure, auto white balancing, auto gain and image downsampling.

To ensure a high level of autonomy, control, mission management, safety monitoring and data recording, the researchers use the source-available CNS Flight Stack on the on-board computer. The CNS Flight Stack includes software modules for navigation, sensor fusion and control algorithms and enables the autonomous execution of reproducible and customisable missions. “The modularity of the CNS Flight Stack and the ROS interfaces enable us to seamlessly integrate our sensors and the AI-based ‘state estimator’ for position detection into the entire stack and thus realise autonomous UAV flights. The functionality of our approach is being analysed and developed using the example of an inspection flight around a power pole in the drone hall at the University of Klagenfurt,” explains Thomas Jantos.

Precise, autonomous alignment through sensor fusion

The high-frequency control signals for the drone are generated from the data of the IMU (Inertial Measurement Unit). Sensor fusion with camera data, LIDAR or GNSS (Global Navigation Satellite System) enables real-time navigation and stabilisation of the drone, for example for position corrections or precise alignment with inspection objects. For the Klagenfurt drone, the IMU of the PX4 is used as a dynamic model in an EKF (Extended Kalman Filter). The EKF estimates where the drone should be now based on the last known position, speed and attitude. New data (e.g. from IMU, GNSS or camera) is then recorded at up to 200 Hz and incorporated into the state estimation process.

The camera captures raw images at 50 fps and an image size of 1280 x 960 px. “This is the maximum frame rate that we can achieve with our AI model on the drone’s onboard computer,” explains Thomas Jantos. When the camera is started, an automatic white balance and gain adjustment are performed once, while the automatic exposure control remains switched off. The EKF compares the prediction and measurement and corrects the estimate accordingly. This ensures that the drone remains stable and can maintain its position autonomously with high precision.
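The predict-and-correct cycle described above can be illustrated with a heavily simplified Python sketch. It is not the CNS Flight Stack's estimator: it is a linear, one-dimensional Kalman filter on position and velocity, whereas the real EKF works on the full nonlinear pose. The class name `PositionFilter1D`, the noise values and the hover scenario are assumptions chosen for illustration; only the 200 Hz and 50 Hz rates come from the text.

```python
from dataclasses import dataclass

IMU_RATE_HZ = 200     # prediction rate, from the text
CAMERA_RATE_HZ = 50   # camera measurement rate, from the text


@dataclass
class PositionFilter1D:
    """Linear Kalman filter on [position, velocity] along one axis."""
    p: float = 0.0    # position estimate [m]
    v: float = 0.0    # velocity estimate [m/s]
    ppp: float = 1.0  # covariance entry var(p)
    ppv: float = 0.0  # covariance entry cov(p, v)
    pvv: float = 1.0  # covariance entry var(v)

    def predict(self, accel, dt, accel_var=0.05):
        """Propagate the state using an IMU acceleration sample."""
        self.p += self.v * dt + 0.5 * accel * dt * dt
        self.v += accel * dt
        # P <- F P F^T + G q G^T with F = [[1, dt], [0, 1]], G = [dt^2/2, dt]
        g0, g1 = 0.5 * dt * dt, dt
        ppp = self.ppp + 2 * dt * self.ppv + dt * dt * self.pvv
        ppv = self.ppv + dt * self.pvv
        self.ppp = ppp + g0 * g0 * accel_var
        self.ppv = ppv + g0 * g1 * accel_var
        self.pvv = self.pvv + g1 * g1 * accel_var

    def correct(self, measured_p, meas_var=0.01):
        """Fuse a camera-derived position measurement (H = [1, 0])."""
        s = self.ppp + meas_var                  # innovation variance
        k0, k1 = self.ppp / s, self.ppv / s      # Kalman gain
        innovation = measured_p - self.p         # measurement - prediction
        self.p += k0 * innovation
        self.v += k1 * innovation
        # P <- (I - K H) P, written out per entry using the old values
        ppp = (1 - k0) * self.ppp
        ppv = (1 - k0) * self.ppv
        pvv = self.pvv - k1 * self.ppv
        self.ppp, self.ppv, self.pvv = ppp, ppv, pvv


def run_demo(true_position=3.0, seconds=1.0):
    """Hover scenario: zero acceleration, noise-free camera measurements."""
    kf = PositionFilter1D()
    dt = 1.0 / IMU_RATE_HZ
    steps_per_frame = IMU_RATE_HZ // CAMERA_RATE_HZ  # 4 IMU steps per image
    for step in range(1, int(seconds * IMU_RATE_HZ) + 1):
        kf.predict(accel=0.0, dt=dt)
        if step % steps_per_frame == 0:
            kf.correct(measured_p=true_position)
    return kf
```

Calling `run_demo()` starts the filter with a deliberately wrong position of 0 m and lets the camera measurements pull the estimate towards the true distance of 3 m while the uncertainty shrinks. The design point this mirrors is that the fast IMU drives the prediction between images, and each slower camera measurement corrects the accumulated drift.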

Outlook

“With regard to research in the field of mobile robots, industrial cameras are necessary for a variety of applications and algorithms. It is important that these cameras are robust, compact, lightweight, fast and have a high resolution. On-device pre-processing (e.g. binning) is also very important, as it saves valuable computing time and resources on the mobile robot,” emphasises Thomas Jantos.

With corresponding features, IDS cameras are helping to set a new standard in the autonomous inspection of critical infrastructures in this promising research approach, which significantly increases safety, efficiency and data quality.

The Control of Networked Systems (CNS) research group is part of the Institute for Intelligent System Technologies. It is involved in teaching in the English-language Bachelor’s and Master’s programs “Robotics and AI” and “Information and Communications Engineering (ICE)” at the University of Klagenfurt. The group’s research focuses on control engineering, state estimation, path and motion planning, modeling of dynamic systems, numerical simulations and the automation of mobile robots in a swarm.

Model used: USB3 Vision industrial camera U3-3276LE Rev.1.2

Camera family: uEye LE

Image rights: Alpen-Adria-Universität (aau) Klagenfurt

© 2025 IDS Imaging Development Systems GmbH
