Instruction-based image editing models are impressive at following prompts. But when edits involve physical interactions, they often fail to respect real-world laws. In their paper “From Statics to Dynamics: Physics-Aware Image Editing with Latent Transition Priors,” the authors introduce PhysicEdit, a framework that treats image editing as a physical state transition rather than a static transformation between two images. This shift improves realism in physics-heavy scenarios.

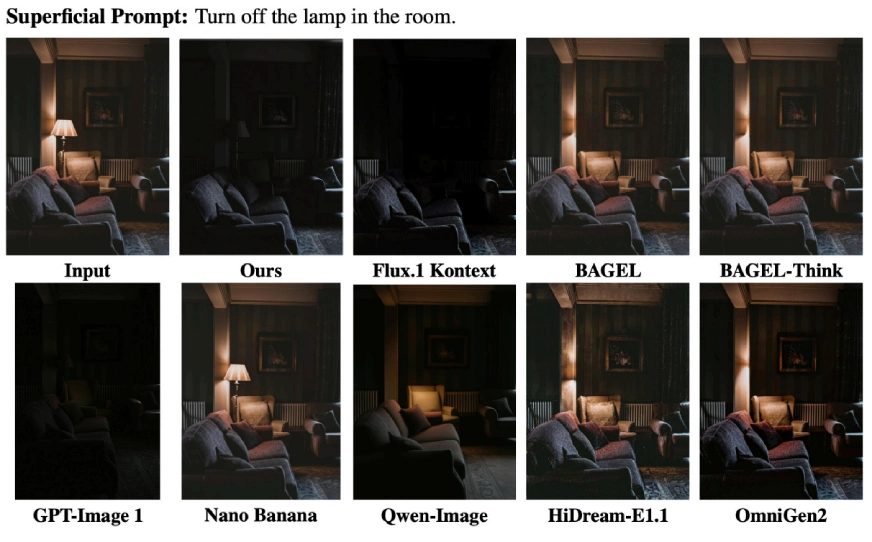

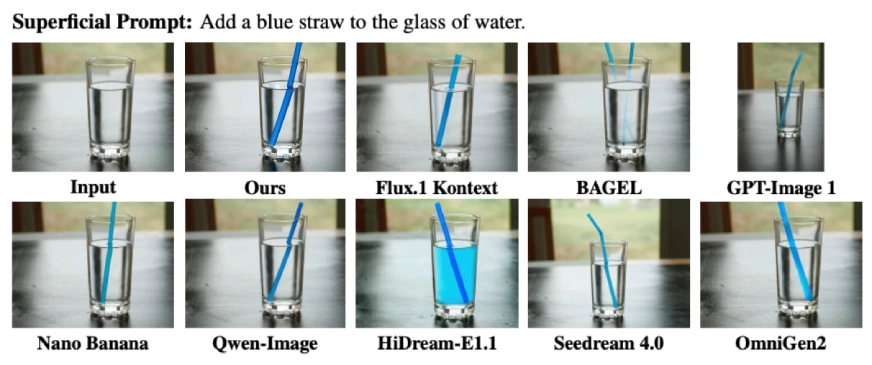

AI Image Generation Failures

You generate a room with a lamp and ask the model to turn it off. The lamp switches off, but the lighting in the room barely changes. Shadows remain inconsistent. The instruction is followed, but illumination physics is ignored.

Now insert a straw into a glass of water. The straw appears in the glass but remains perfectly straight instead of bending due to refraction. The edit looks correct at first glance, yet it violates optical physics. These are exactly the failures PhysicEdit aims to fix.

The Problem with Current Image Editing Models

Most instruction-based editing models follow a simple setup.

- You provide a source image.

- You provide an editing instruction.

- The model generates a modified image.

This works well for semantic edits like:

- Change the shirt color to blue

- Replace the dog with a cat

- Remove the chair

However, this setup treats editing as a static mapping between two images. It does not model the process that leads from the initial state to the final state.

This becomes a problem in physics-heavy scenarios such as:

- Insert a straw into a glass of water

- Let the ball fall onto the cushion

- Turn off the lamp

- Freeze the soda can

These edits require understanding how physical laws affect the scene over time. Without modeling that transition, the system often produces results that look plausible at first glance but break under closer inspection.

From Static Mapping to Physical State Transitions

PhysicEdit proposes a different formulation.

Instead of directly predicting the final image from the source image and instruction, it treats the instruction as a physical trigger. The source image represents the initial physical state of the scene. The final image represents the outcome after the scene evolves under physical laws.

In other words, editing is treated as a state evolution problem rather than a direct transformation.

This distinction matters.

Traditional editing datasets only provide the starting image and the final image. The intermediate steps are missing. As a result, the model learns what the output should look like, but not how the scene should physically evolve to reach that state.

PhysicEdit addresses this limitation by learning from videos.
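
The gap between the two kinds of supervision can be sketched as a pair of data structures. This is only an illustration: the class names, fields, and frame file names below are hypothetical and do not come from the paper or its code.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StaticEditPair:
    """Conventional supervision: only the two endpoint images (hypothetical type)."""
    source_image: str
    instruction: str
    target_image: str


@dataclass
class TransitionSample:
    """Video-based supervision: endpoints plus the frames in between (hypothetical type)."""
    instruction: str
    frames: List[str]  # frame paths, ordered from initial state to final state

    @property
    def source_image(self) -> str:
        return self.frames[0]

    @property
    def target_image(self) -> str:
        return self.frames[-1]

    @property
    def intermediate_frames(self) -> List[str]:
        # Everything strictly between the first and last frame.
        return self.frames[1:-1]

    def to_static_pair(self) -> StaticEditPair:
        # Dropping the intermediate frames recovers the conventional setup.
        return StaticEditPair(self.source_image, self.instruction, self.target_image)


sample = TransitionSample(
    instruction="Insert a straw into a glass of water",
    frames=["frame_000.png", "frame_010.png", "frame_020.png", "frame_030.png"],
)
pair = sample.to_static_pair()
print(pair)
print(sample.intermediate_frames)
```

A static pair can always be derived from a transition sample, but not the other way around, which is the information a video adds.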

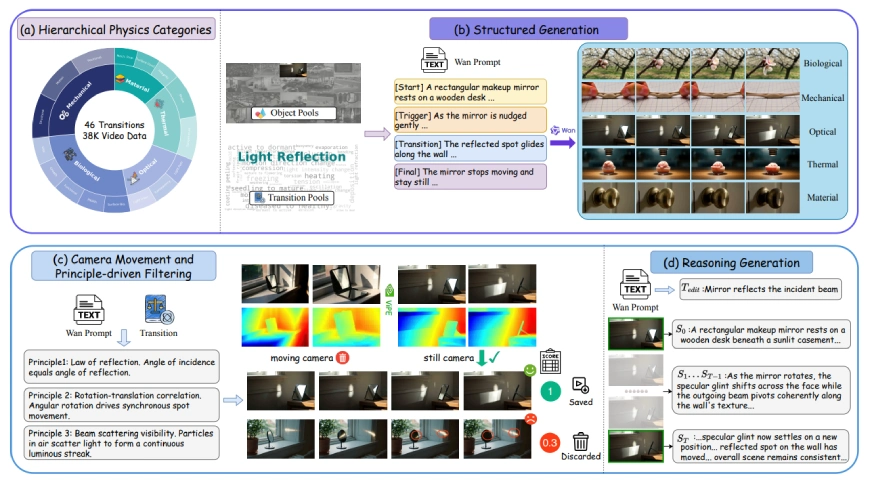

Introducing PhysicTran38K

To train a physics-aware editing model, the authors created a new dataset called PhysicTran38K. It contains roughly 38,000 video-instruction pairs focused specifically on physical transitions. The dataset covers five major domains:

- Mechanical

- Optical

- Biological

- Materials

- Thermal

Across these domains, it defines 16 sub-domains and 46 transition types. Examples include:

- Light reflection

- Refraction

- Deformation

- Freezing

- Melting

- Germination

- Hardening

- Collapse

Each video captures a full transition from an initial state to a final state, including the intermediate steps. The construction process is structured and filtered carefully:

- Videos are generated using prompts that explicitly define start state, trigger event, transition, and final state.

- Camera motion is filtered out so that pixel changes reflect physical evolution rather than viewpoint shifts.

- Physical principles are automatically verified to ensure consistency.

- Only transitions that pass these checks are retained.

This results in high-quality supervision for learning realistic physical dynamics.
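
The construction steps above can be pictured as a small filtering pipeline. The sketch below is a simplified illustration, not the authors' implementation: the `TransitionPrompt` and `Candidate` types, the `camera_motion` score, the `physics_verified` flag, and the `0.1` threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TransitionPrompt:
    """The four parts a generation prompt spells out (hypothetical type)."""
    start_state: str
    trigger_event: str
    transition: str
    final_state: str

    def to_text(self) -> str:
        return (
            f"Start: {self.start_state}. Trigger: {self.trigger_event}. "
            f"Transition: {self.transition}. End: {self.final_state}."
        )


@dataclass
class Candidate:
    """A generated video plus the results of the two checks (hypothetical type)."""
    prompt: TransitionPrompt
    video_path: str
    camera_motion: float    # assumed score, 0.0 means a static camera
    physics_verified: bool  # assumed output of an automatic physics check


def keep(candidate: Candidate, max_camera_motion: float = 0.1) -> bool:
    # Retain a clip only if the camera is (nearly) static AND the physics check passed.
    return candidate.camera_motion <= max_camera_motion and candidate.physics_verified


def filter_candidates(candidates: List[Candidate], max_camera_motion: float = 0.1) -> List[Candidate]:
    return [c for c in candidates if keep(c, max_camera_motion)]


melting = TransitionPrompt(
    start_state="an ice cube on a plate",
    trigger_event="the room warms up",
    transition="the ice gradually melts",
    final_state="a puddle of water on the plate",
)
candidates = [
    Candidate(melting, "clip_a.mp4", camera_motion=0.02, physics_verified=True),
    Candidate(melting, "clip_b.mp4", camera_motion=0.45, physics_verified=True),   # camera pans
    Candidate(melting, "clip_c.mp4", camera_motion=0.01, physics_verified=False),  # fails the check
]
kept = filter_candidates(candidates)
print(melting.to_text())
print([c.video_path for c in kept])
```

Both checks must pass for a clip to survive, which mirrors the last bullet: only transitions that clear every filter are retained.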

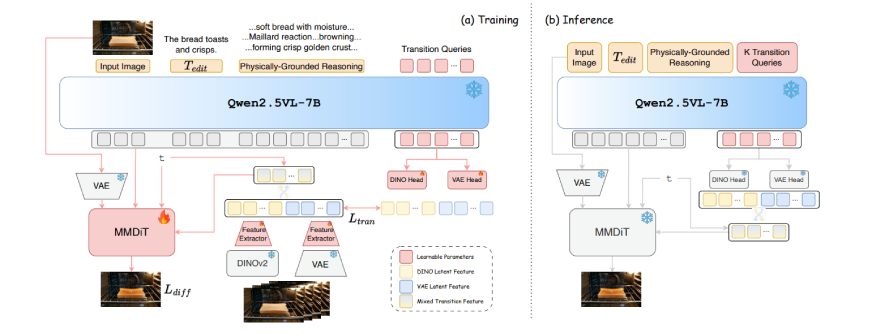

How PhysicEdit Works

PhysicEdit builds on top of Qwen-Image-Edit, a diffusion-based editing backbone. To incorporate physics, it introduces a dual-thinking mechanism with two components:

- Physically grounded reasoning

- Implicit visual thinking

These two streams complement each other and handle different aspects of physical realism.

Dual-Thinking: Reasoning and Visual Transition Priors

Physically Grounded Reasoning

PhysicEdit uses a frozen Qwen2.5-VL-7B model to generate structured reasoning before image generation begins.

Given the source image and instruction, it produces:

- The physical laws involved

- Constraints that must be respected

- A description of how the change should unfold

This reasoning trace becomes part of the conditioning context for the diffusion model. It ensures the edit respects causality and domain knowledge.

The reasoning model stays frozen during training, which helps preserve its general knowledge.
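
To make the shape of such a reasoning trace concrete, here is a minimal sketch of how its three parts could be assembled into conditioning text. The `ReasoningTrace` type, the `build_conditioning_text` helper, and the text layout are hypothetical, and the example trace is hand-written for illustration rather than actual model output.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReasoningTrace:
    """The three parts of the structured reasoning (hypothetical type)."""
    physical_laws: List[str]
    constraints: List[str]
    transition_description: str


def build_conditioning_text(instruction: str, trace: ReasoningTrace) -> str:
    # Flatten the trace into plain text that could be passed to the editor
    # together with the instruction. The layout here is an assumption.
    lines = [
        f"Instruction: {instruction}",
        "Physical laws: " + "; ".join(trace.physical_laws),
        "Constraints: " + "; ".join(trace.constraints),
        "Transition: " + trace.transition_description,
    ]
    return "\n".join(lines)


# Hand-written example trace, not real model output.
trace = ReasoningTrace(
    physical_laws=["refraction at the air-water interface"],
    constraints=["the straw stays rigid", "the water level rises slightly"],
    transition_description="the submerged part of the straw appears offset from the part above the surface",
)
conditioning = build_conditioning_text("Insert a straw into a glass of water", trace)
print(conditioning)
```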

Implicit Visual Thinking

Text reasoning alone cannot capture fine-grained visual effects such as:

- Subtle deformation

- Texture transitions during melting

- Light scattering

To address this, PhysicEdit introduces learnable transition queries.

These queries are trained using intermediate frames from the PhysicTran38K videos. Two encoders supervise them:

- DINOv2 features for structural information

- VAE features for texture-level detail

During training, the model aligns the transition queries with visual features extracted from intermediate states. At inference time, no intermediate frames are available. Instead, the learned transition queries act as distilled transition priors, guiding the model toward physically plausible outputs.
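
The alignment idea can be illustrated with a toy loss. The article does not state which loss functions are used, so the choices below (cosine distance for the structural features, mean squared error for the texture features, and a `texture_weight` factor) are assumptions made purely for illustration. Features are plain Python lists standing in for encoder outputs.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def cosine_distance(a: Vector, b: Vector) -> float:
    # 1 - cosine similarity; assumes both vectors are non-zero.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def mse(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def alignment_loss(
    structure_preds: Sequence[Vector],
    structure_targets: Sequence[Vector],
    texture_preds: Sequence[Vector],
    texture_targets: Sequence[Vector],
    texture_weight: float = 1.0,
) -> float:
    """Toy training objective: pull query outputs toward intermediate-frame features.

    structure_targets stand in for DINOv2-style features and texture_targets
    for VAE-style features of intermediate frames (assumed setup).
    """
    structure_term = sum(
        cosine_distance(p, t) for p, t in zip(structure_preds, structure_targets)
    ) / len(structure_preds)
    texture_term = sum(
        mse(p, t) for p, t in zip(texture_preds, texture_targets)
    ) / len(texture_preds)
    return structure_term + texture_weight * texture_term


# Perfectly aligned predictions give zero loss; misaligned ones are penalized.
aligned = alignment_loss([[3.0, 4.0]], [[3.0, 4.0]], [[0.5, 0.5]], [[0.5, 0.5]])
misaligned = alignment_loss([[1.0, 0.0]], [[0.0, 1.0]], [[0.0, 0.0]], [[1.0, 1.0]])
print(aligned, misaligned)
```

The targets appear only in the loss, which is why they are needed during training but not at inference: once trained, the queries are fixed parameters that carry the transition prior on their own.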

Why Video Matters for Learning Physics

With image-only supervision, the model sees only the initial and final states. With video supervision, it sees how the scene evolves step by step. This additional information constrains the learning process. It teaches the model not just what the result should look like, but how it should develop over time. PhysicEdit compresses this dynamic information into latent representations so that editing remains efficient and single-image based during inference.

Results on PICABench and KRISBench

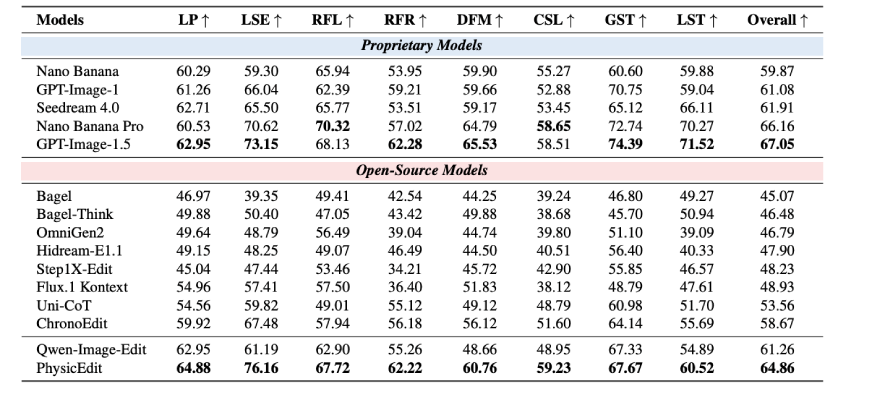

PhysicEdit was evaluated on two benchmarks:

PICABench Results

PICABench focuses on physical realism, including optics, mechanics, and state transitions. Compared to its backbone model, PhysicEdit improves overall physical realism by roughly 5.9%. The largest gains appear in categories requiring implicit dynamics, including:

- Light source effects

- Deformation

- Causality

- Refraction

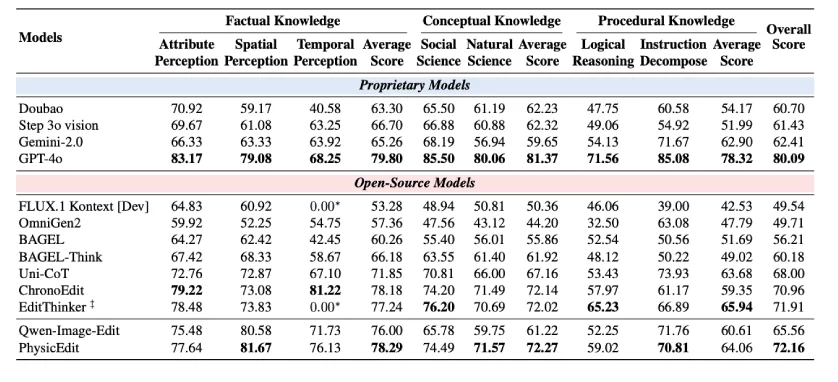

KRISBench Results

On KRISBench, which evaluates knowledge-grounded editing, PhysicEdit improves overall performance by around 10.1%. Improvements are particularly noticeable in:

- Temporal perception

- Natural science reasoning

These results suggest that modeling editing as state transitions improves both visual fidelity and physics-related reasoning.

Why This Matters for AI Systems

As generative models become more integrated into creative tools, augmented reality systems, and multimodal agents, physical plausibility becomes increasingly important. Visually inconsistent lighting, unrealistic deformation, or broken causality can reduce reliability and trust.

PhysicEdit demonstrates that:

- Physics can be learned effectively from video data

- Transition priors can be distilled into compact latent representations

- Text reasoning and visual supervision can work together

This represents a meaningful step toward more world-consistent generative models.

Conclusion

Most image editing models treat editing as a static transformation problem. PhysicEdit reframes it as a physical state transition problem. By combining video-based supervision, physically grounded reasoning, and learned transition priors, it produces edits that are not only semantically correct but also physically plausible. The dataset, code, and checkpoints are open-sourced, making them accessible to researchers and engineers who want to build more realistic editing systems. As generative AI continues to evolve, incorporating physical consistency may move from being a research innovation to a standard requirement.

Note: The source of all the images and information in this blog is the research paper.
