The flexibility of LLMs to execute instructions by plain language (e.g. English) has enabled agentic methods that may full a person question by orchestrating the precise set of instruments (e.g. ToolFormer, Gorilla). This, together with the current multi-modal efforts such because the GPT-4o or Gemini-1.5 mannequin, has expanded the realm of prospects with AI brokers. Whereas that is fairly thrilling, the massive mannequin dimension and computational necessities of those fashions typically requires their inference to be carried out on the cloud. This may create a number of challenges for his or her widespread adoption. At the beginning, importing information comparable to video, audio, or textual content paperwork to a 3rd get together vendor on the cloud, may end up in privateness points. Second, this requires cloud/Wi-Fi connectivity which isn’t at all times potential. For example, a robotic deployed in the actual world could not at all times have a secure connection. Moreover that, latency is also a problem as importing giant quantities of information to the cloud and ready for the response may decelerate response time, leading to unacceptable time-to-solution. These challenges could possibly be solved if we deploy the LLM fashions domestically on the edge.

Nevertheless, present LLMs like GPT-4o or Gemini-1.5 are too giant for native deployment. One contributing issue is that lots of the mannequin dimension finally ends up memorizing common details about the world into its parametric reminiscence which will not be crucial for a specialised downstream software. For example, in the event you ask a common factual query from these fashions like a historic occasion or well-known figures, they will produce the outcomes utilizing their parametric reminiscence, even with out having further context of their immediate. Nevertheless, it looks like this implicit memorization of coaching information into the parametric reminiscence is correlated with “emergent” phenomena in LLMs comparable to in-context studying and complicated reasoning, which has been the driving pressure behind scaling the mannequin dimension.

Nevertheless, this results in an intriguing analysis query:

Can a smaller language mannequin with considerably much less parametric reminiscence emulate such emergent skill of those bigger language fashions?

Attaining this is able to considerably cut back the computational footprint of agentic methods and thus allow environment friendly and privacy-preserving edge deployment. Our examine demonstrates that that is possible for small language fashions by coaching with specialised, high-quality information that doesn’t require recalling generic world information.

Such a system may significantly be helpful for semantic methods the place the AI agent’s position is to know the person question in pure language and, as an alternative of responding with a ChatGPT-type query reply response, orchestrate the precise set of instruments and APIs to perform the person’s command. For instance, in a Siri-like software, a person could ask a language mannequin to create a calendar invite with explicit attendees. If a predefined script for creating calendar objects already exists, the LLM merely must learn to invoke this script with the right enter arguments (comparable to attendees’ e mail addresses, occasion title, and time). This course of doesn’t require recalling/memorization of world information from sources like Wikipedia, however reasonably requires reasoning and studying to name the precise capabilities and to appropriately orchestrate them.

Our aim is to develop Small Language Fashions (SLM) which are able to complicated reasoning that could possibly be deployed securely and privately on the edge. Right here we are going to talk about the analysis instructions that we’re pursuing to that finish. First, we talk about how we are able to allow small open-source fashions to carry out correct perform calling, which is a key element of agentic methods. It seems that off-the-shelf small fashions have very low perform calling capabilities. We talk about how we tackle this by systematically curating high-quality information for perform calling, utilizing a specialised Mac assistant agent as our driving software. We then present that fine-tuning the mannequin on this top quality curated dataset, can allow SLMs to even exceed GPT-4-Turbo’s perform calling efficiency. We then present that this could possibly be additional improved and made environment friendly by a brand new Device RAG methodology. Lastly, we present how the ultimate fashions could possibly be deployed effectively on the edge with actual time responses.

Demo of TinyAgent-1B together with Whisper-v3 operating domestically deployed domestically on a Macbook M3 Professional. The framework is open sourced and obtainable at https://github.com/SqueezeAILab/TinyAgent

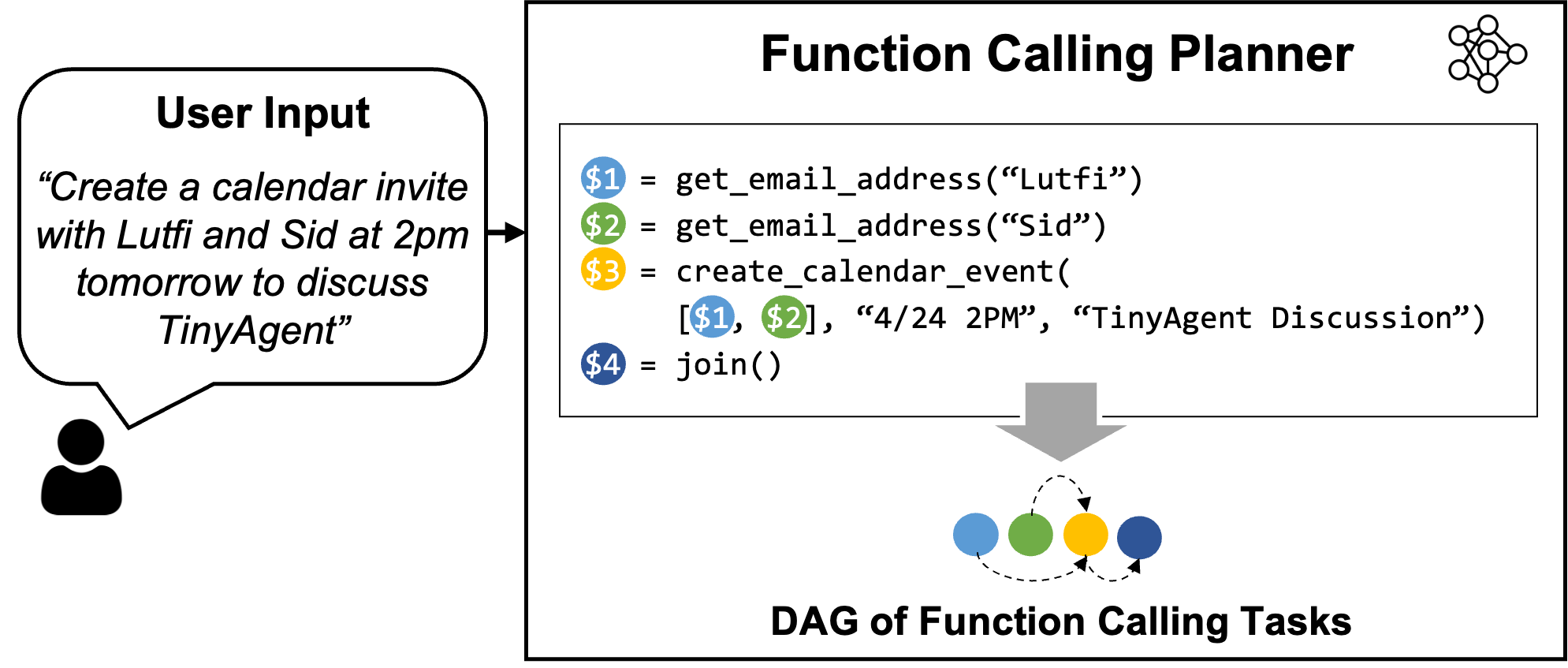

Determine 1: Overview of the LLMCompiler Perform Calling Planner. The Planner understands the person question and generates a sequence of duties with their inter-dependencies. These duties are then dispatched by the LLMCompiler framework to perform the person command. On this instance, Process $1 and $2 are fetched collectively to retrieve the e-mail addresses of Sid and Lutfi independently. After every process is carried out, the outcomes are forwarded to Process $3 which creates the calendar occasion. Earlier than executing Process $3, LLMCompiler replaces the placeholder variables (e.g., the variable $1 and $2 in Process $3) with precise values.

As talked about above, our primary curiosity is purposes the place the AI agent interprets the person question right into a sequence of perform calls to finish the duties. In such purposes, the mannequin doesn’t want to put in writing the perform definition itself because the capabilities (or APIs) are largely pre-defined and already obtainable. Due to this fact, what the mannequin must do is to find out (i) which capabilities to name, (ii) the corresponding enter arguments, and (iii) the precise order of calling these capabilities (i.e. perform orchestration) based mostly on the required interdependency throughout the perform calls.

The primary query is to seek out an efficient approach to equip SLMs to carry out perform calling. Giant fashions comparable to GPT-4 are capable of carry out perform calling, however how can this be achieved with open supply fashions? LLMCompiler is a current framework from our group that allows this by instructing the LLM to output a perform calling plan that features the set of capabilities that it must name together with the enter arguments and their dependencies (see the instance in Determine 1). As soon as this perform calling plan is generated, we are able to parse it and name every perform based mostly on the dependencies.

The vital half right here is to show the mannequin to create this perform calling plan with the precise syntax and dependency. The unique LLMCompiler paper solely thought-about giant fashions, comparable to LLaMA-2 70B, which have complicated reasoning capabilities to create the plan when supplied with enough directions of their prompts. Nevertheless, can smaller fashions be prompted the identical approach to output the right perform calling plan? Sadly, our experiments confirmed that off-the-shelf small fashions comparable to TinyLLaMA-1.1B (and even the bigger Wizard-2-7B mannequin) usually are not capable of output the right plans. The errors ranged from issues comparable to utilizing the mistaken set of capabilities, hallucinated names, mistaken dependencies, inconsistent syntax, and so forth.

That is reasonably anticipated as a result of these small fashions have been educated on generic datasets and primarily focused to realize good accuracy on common benchmarks which largely check the mannequin’s world information and common reasoning or fundamental instruction following functionality. To handle this, we explored if fine-tuning these fashions on a high-quality dataset specifically curated for perform calling and planning can enhance the accuracy of those small language fashions for a focused process, doubtlessly outperforming bigger fashions. Subsequent, we first talk about how we generated such a dataset, after which talk about the tremendous tuning method.

Determine 2: TinyAgent is an assistant that may work together with numerous MacOS purposes to help the person. The instructions may be given to it by both textual content by a highlight enter, or by voice.

As a driving software, we contemplate an area agentic system for Apple’s Macbook that solves person’s day-to-day duties, as proven in Determine 2. Significantly, the agent is provided with 16 completely different capabilities that may work together with completely different purposes on Mac, which incorporates:

- E mail: Compose a brand new e mail or reply to/ahead emails

- Contacts: Retrieve cellphone numbers or e mail addresses from the contacts database

- SMS: Ship textual content messages to contact(s)

- Calendar: Create calendar occasions with particulars comparable to title, time, attendees, and so forth.

- Notes: Create, open, or append content material to notes in numerous folders

- Reminder: Set reminders for numerous actions and duties

- File administration: Open, learn, or summarize paperwork in numerous file paths

- Zoom conferences: Schedule and manage Zoom conferences

Predefined Apple scripts exist for every of those capabilities/instruments, and all that the mannequin must do is to make the most of the predefined APIs and decide the precise perform calling plan to perform a given process, comparable to in Determine 1. However as mentioned beforehand, we want some information for evaluating and coaching small language fashions since their off-the-shelf perform calling functionality is subpar.

Creating handcrafted information with numerous perform calling plans is each difficult and never scalable. Nevertheless, we are able to curate artificial information utilizing an LLM like GPT-4-Turbo. Such an method is changing into a typical methodology the place a succesful LLM is instructed to generate information much like a given set of pattern examples or templates (see LLM2LLM and Self-Instruct). In our work, we used an identical method, however as an alternative of offering the LLM with generic person queries as templates, we offer it with numerous units of capabilities and instruct it to generate sensible person queries that require these capabilities to perform the duty, together with the related perform calling plan and enter arguments, like the instance proven in Determine 1. To confirm the validity of the generated information, we included sanity checks on the perform calling plan to guarantee that they kind a possible graph, and that the perform names and enter argument sorts are right. With this method, we created 80K coaching information, 1K validation information, and 1K testing information, with a complete price of solely ~$500.

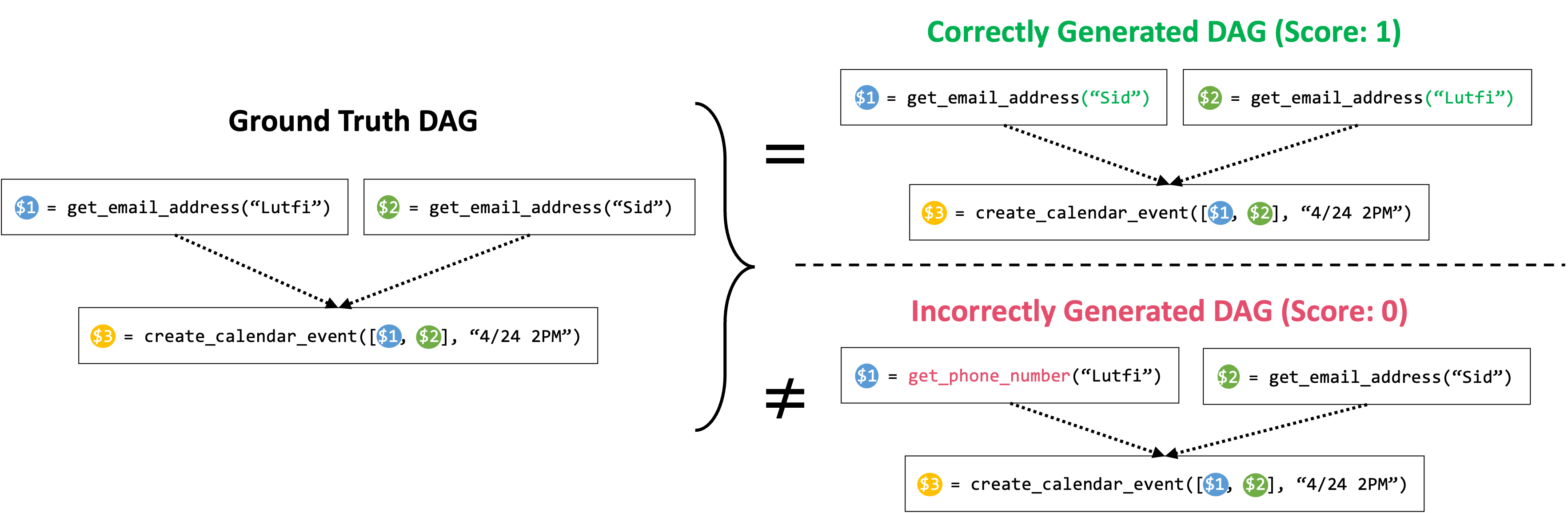

Determine 3: Graph Isomorphism Success Price. The mannequin scores successful fee of 1 provided that the DAG of its generated plan is isomorphic to the DAG of the bottom reality plan; and 0 in any other case. In above instance, for the highest case, though the order of the get_email_address calls are completely different from the bottom reality plan (the bottom reality plan will get the e-mail tackle of Lutfi earlier than Sid, and the generated plan will get the e-mail tackle of Sid earlier than Lutfi), because the two DAGs are isomorphic to one another, the plan will get 1 success fee. For the underside case, because the predicted DAG incorporates a mistaken node, similar to a mistaken perform name, the plan will get 0 success fee.

With our dataset in place, we are able to now proceed to fine-tune off-the-shelf SLMs to boost their perform calling functionality. We began with two base small fashions: TinyLlama-1.1B (instruct-32k model) and Wizard-2-7B. For fine-tuning these fashions, we first have to outline a metric to guage their efficiency. Our goal is for these fashions to precisely generate the precise plan, which entails not solely choosing the precise set of capabilities, but in addition appropriately orchestrating them in the precise order. Due to this fact, we outline successful fee metric that assigns 1 if each standards are met, and 0 in any other case. Checking whether or not the mannequin has chosen the precise set perform calls is simple. To moreover make sure that the orchestration of those capabilities is right, we assemble a Directed Acyclic Graph (DAG) of the perform calls based mostly on the dependencies, as proven in Determine 3, the place every node represents a perform name and a directed edge from node A to B represents their interdependency (i.e. perform B can solely be executed after the execution of perform A). Then we examine if this DAG is similar to that of the bottom reality plan to confirm the accuracy of the dependencies.

After defining our analysis metric, we utilized LoRA to fine-tune the fashions for 3 epochs utilizing a studying fee of 7e-5 over the 80K coaching examples, and chosen the very best checkpoint based mostly on validation efficiency. For fine-tuning, our immediate included not solely the descriptions of the bottom reality capabilities (i.e. capabilities used within the floor reality plan) but in addition different irrelevant capabilities as destructive samples. We discovered the destructive samples to be significantly efficient for educating the mannequin easy methods to choose acceptable instruments for a given question, therefore enhancing the post-training efficiency. Moreover, we additionally embody a number of in-context examples demonstrating how queries are translated right into a perform calling plans. These in-context examples are chosen by a Retrieval Augmented Technology (RAG) course of based mostly on the person question from the information within the coaching dataset.

Utilizing the above settings, we fine-tuned TinyLlama-1.1B/Wizard-2-7B fashions. After fine-tuning, the 1.1B mannequin improved the success fee from 12.71% to 78.89%, and the 7B mannequin efficiency improved from 41.25% to 83.09%, which is ~4% larger than GPT-4-Turbo.

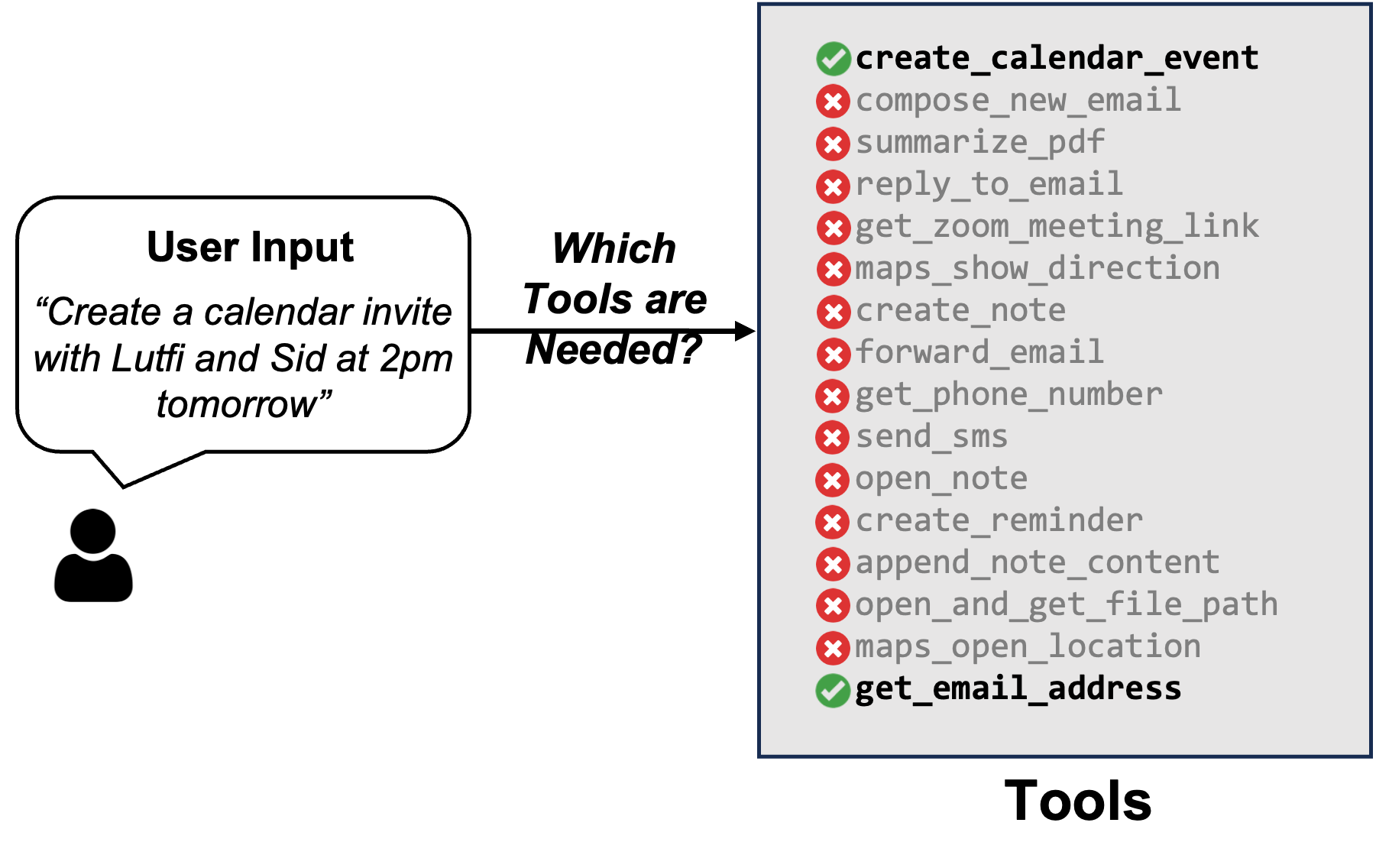

Determine 4: Environment friendly Device Choice Primarily based on Consumer Enter. Not all person inputs require all obtainable instruments; therefore, it’s crucial to pick out the precise set of instruments to reduce the immediate dimension and improve efficiency. On this case, the LLM solely wants the capabilities that get e mail addresses and create a calendar occasion in its immediate to perform its process.

Our major aim is to have the ability to deploy the TinyAgent mannequin domestically on a Macbook, which has restricted computational and reminiscence sources obtainable as in comparison with the GPUs that closed-source fashions like GPT are deployed on. To realize environment friendly efficiency with low latency we have to make sure that not solely the mannequin dimension is small, however that the enter immediate is as concise as potential. The latter is a crucial contributor to latency and computational useful resource consumption because of the quadratic complexity of consideration on sequence size.

The fine-tuned TinyAgent mannequin mentioned beforehand was fine-tuned with the outline of all obtainable instruments in its immediate. Nevertheless, that is fairly inefficient. We are able to considerably cut back the immediate dimension by solely together with the outline of related instruments based mostly on the person question. For example, contemplate the instance proven in Determine 4 above, the place the person is asking to create a calendar invite with two folks. On this case, the LLM solely wants the capabilities that get e mail addresses and create a calendar occasion in its immediate.

To make the most of this commentary, we have to decide which capabilities are required to perform the person’s command, which we confer with as Device RAG given its similarity with how Retrieval Augmented Technology (RAG) works. Nevertheless, there is a crucial subtlety. If we use a fundamental RAG methodology the place we compute the embedding of the person question and use that to retrieve the related instruments, we get very low efficiency. It’s because finishing a person’s question typically requires utilizing a number of auxiliary instruments which can be missed with a easy RAG methodology if the embedding of the auxiliary instrument isn’t much like the person question. For example, the instance proven in Determine 4 requires calling get_email_address perform although the person question is simply asking about making a calendar invitation.

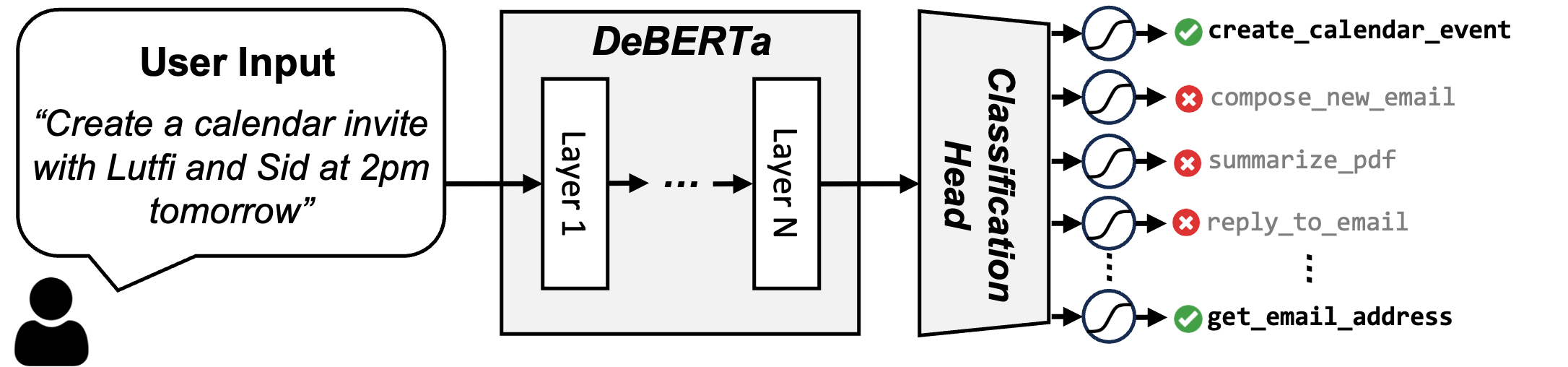

This may be addressed by treating the issue as a classification of which instruments are wanted. To that finish, we fine-tuned a DeBERTa-v3-small mannequin on the coaching information to carry out a 16-way classification as proven in Determine 5. The person question is given as an enter to this mannequin, after which we move the CLS token on the finish by a easy absolutely related layer of dimension 768×16 to rework it right into a 16 dimensional vector (which is the whole dimension of our instruments). The output of this layer is handed by a sigmoid layer to supply the chance of choosing every instrument. Throughout inference, we choose the instruments which have most likely larger than 50%, and in that case, we embody their description within the immediate. On common we observed that solely 3.97 instruments are retrieved with a recall of 0.998, whereas the essential RAG requires utilizing the highest 6 instruments to realize a instrument recall of 0.968.

Determine 5: Overview of our Device RAG scheme. We formulate instrument retrieval as a multi-label classification drawback. The person question is given as enter to the fine-tuned DeBERTa-v3-small mannequin, which outputs a 16-dimensional vector indicating instrument possibilities. Instruments with possibilities larger than 50% are chosen, averaging 3.97 instruments per question in comparison with 6 instruments in fundamental RAG.

We evaluated the mannequin efficiency after incorporating Device RAG. The outcomes are proven in Desk 1 beneath, the place we report the efficiency of the straightforward RAG system together with the fine-tuned DeBERTa method. As one can see, the DeBERTa based mostly Device RAG methodology achieves virtually good recall efficiency, improves the baseline accuracy, whereas decreasing the immediate dimension by ~2x tokens.

Desk 1: Comparability of TinyAgent efficiency with DeBERTa to Fundamental RAG and no RAG settings.

| Device RAG Methodology | Device Recall | Immediate Measurement (Tokens) | TinyAgent 1.1B Success Price (%) | TinyAgent 7B Success Price (%) |

|---|---|---|---|---|

| No RAG (all instruments within the immediate) | 1 | 2762 | 78.89 | 83.09 |

| Fundamental RAG | 0.949 (prime 3) | 1674 | 74.88 | 78.50 |

| Superb-tuned DeBERTa-v3-small (Ours) | 0.998 (instruments with >50% prob) | 1397 | 80.06 | 84.95 |

Deploying fashions on the edge, comparable to on client MacBooks, can nonetheless be difficult even for small fashions of O(1B) parameters, since loading the mannequin parameters can eat a big portion of the obtainable reminiscence. An answer to those points is quantization, which permits us to retailer the mannequin at a diminished bit precision. Quantization not solely reduces the storage necessities and mannequin footprint, but in addition cuts down the time and sources wanted to load mannequin weights into reminiscence, thereby decreasing the general inference latency as effectively (see this for extra data on quantization).

For extra environment friendly deployment of the fashions, we quantized the fashions into 4-bit with a bunch dimension of 32, which is supported by the llama.cpp framework with quantization conscious coaching. As proven in Desk 2, the 4-bit fashions end in 30% higher latency, together with a 4x discount within the mannequin dimension. We additionally discover slight accuracy enchancment which is because of the further fine-tuning with simulated quantization.

Desk 2: Latency, dimension, and success fee of TinyAgent fashions earlier than and after quantization. Latency is the end-to-end latency of the perform calling planner, together with the immediate processing time and era.

| Mannequin | Weight Precision | Latency (seconds) | Mannequin Measurement (GB) | Success Price (%) |

|---|---|---|---|---|

| GPT-3.5 | Unknown | 3.2 | Unknown | 65.04 |

| GPT-4-Turbo | Unknown | 3.9 | Unknown | 79.08 |

| TinyAgent-1.1B | 16 | 3.9 | 2.2 | 80.06 |

| TinyAgent-1.1B | 4 | 2.9 | 0.68 | 80.35 |

| TinyAgent-7B | 16 | 19.5 | 14.5 | 84.95 |

| TinyAgent-7B | 4 | 13.1 | 4.37 | 85.14 |

Under is the demo of the ultimate TinyAgent-1.1B mannequin deployed on a Macbook Professional M3 which you’ll truly obtain and set up in your Mac and check as effectively. It not solely runs the entire mannequin inference domestically in your laptop, however it additionally permits you to present instructions by audio. We course of the audio domestically as effectively utilizing the Whisper-v3 mannequin from OpenAI deployed domestically utilizing the whisper.cpp framework. The best shock for us was that the accuracy of the 1.1B mannequin exceeds that of GPT-4-Turbo, and is markedly quick whereas deployed domestically and privately on machine.

To summarize, we launched TinyAgent and confirmed that it’s certainly potential to coach a small language mannequin and use it to energy a semantic system that processes person queries. Particularly, we thought-about a Siri-like assistant for Mac as a driving software. The important thing elements for enabling it’s to (i) train off-the-shelf SLMs to carry out perform calling by LLMCompiler framework, (ii) curate top quality perform calling information for the duty at hand, (iii) fine-tune the off-the-shelf mannequin on the generated information, and (iv) allow environment friendly deployment by optimizing the immediate dimension by solely retrieving the mandatory instruments based mostly on the person question by a way known as ToolRAG, in addition to quantized mannequin deployment to cut back inference useful resource consumption. After these steps, our ultimate fashions achieved 80.06% and 84.95% for the TinyAgent1.1.B and 7B fashions which exceed GPT-4-Turbo’s success fee of 79.08% on this process.

We want to thank Apple for sponsoring this undertaking, in addition to help from Microsoft by Accelerating Basis Fashions Analysis Program. We additionally thank Sunjin Choi for his insights in vitality price related to native and cloud deployment. Our conclusions don’t essentially replicate the place or the coverage of our sponsors, and no official endorsement needs to be inferred.

BibTex for this put up:

@misc{tiny-agent,

title={TinyAgent: Perform Calling on the Edge},

creator={Erdogan, Lutfi Eren and Lee, Nicholas and Jha, Siddharth and Kim, Sehoon and Tabrizi, Ryan and Moon, Suhong and Hooper, Coleman and Anumanchipalli, Gopala and Keutzer, Kurt and Gholami, Amir},

howpublished={url{https://bair.berkeley.edu/weblog/2024/05/29/tiny-agent/}},

12 months={2024}

}