Lessons from building production AI systems that nobody talks about.

The conversation around AI agents has moved fast. A year ago, everyone was optimizing RAG pipelines. Now the discourse centers on context engineering, MCP/A2A protocols, agentic coding tools that read and manage entire codebases, and multi-agent orchestration patterns. The frameworks keep advancing.

After 18 months building the AI Assistant at Cisco Customer Experience (CX), we’ve learned that the challenges determining real-world success are rarely the ones getting attention. Our system uses multi-agent design patterns over structured enterprise data (mostly SQL, like most enterprises). The patterns that follow emerged from making that system actually useful to the business.

This post isn’t about the obvious. It’s about some of the unglamorous patterns that determine whether your system gets used or abandoned.

1. The Acronym Problem

Enterprise environments are dense with internal terminology. A single conversation might include ATR, MRR, and NPS, each carrying a specific internal meaning that differs from common usage.

To a foundation model, ATR might mean Average True Range or Annual Taxable Revenue. To our business users, it means Available to Renew. The same acronym can also mean completely different things within the company, depending on the context:

User: “Set up a meeting with our CSM to discuss the renewal strategy”

AI: CSM → Customer Success Manager (context: renewal)

User: “Check the CSM logs for that firewall issue”

AI: CSM → Cisco Security Manager (context: firewall)

NPS could be Net Promoter Score or Network Security Solutions, both completely valid depending on context. Without disambiguation, the model guesses. It guesses confidently. It guesses wrong.

The naive solution is to expand acronyms in your prompt. But this creates two problems. First, you need to know which acronyms need expansion (and LLMs hallucinate expansions confidently). Second, enterprise acronyms are often ambiguous even within the same organization.

We maintain a curated company-wide collection of over 8,000 acronyms with domain-specific definitions. Early in the workflow, before queries reach our domain agents, we extract potential acronyms, capture surrounding context for disambiguation, and look up the correct expansion.

50% of all queries asked by CX users to the AI Assistant contain one or more acronyms and receive disambiguation before reaching our domain agents.

The key detail: we inject definitions as context while preserving the user’s original terminology. By the time domain agents execute, acronyms are already resolved.
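The extract, disambiguate, and inject steps can be sketched roughly as follows. This is a minimal illustration, not our production code: the `GLOSSARY` structure, the keyword-overlap scoring, and the function names `resolve_acronyms` and `annotate` are all assumptions made for the example, and a real glossary would hold thousands of entries.

```python
import re

# Hypothetical glossary: acronym -> list of (expansion, context keywords).
GLOSSARY = {
    "CSM": [
        ("Customer Success Manager", {"renewal", "meeting", "account"}),
        ("Cisco Security Manager", {"firewall", "logs", "policy"}),
    ],
    "ATR": [("Available to Renew", set())],
}

# Candidate acronyms: standalone runs of 2 to 6 capital letters.
ACRONYM_RE = re.compile(r"\b[A-Z]{2,6}\b")


def resolve_acronyms(query: str) -> dict[str, str]:
    """Map each known acronym in the query to its best-matching expansion."""
    context = set(re.findall(r"[a-z]+", query.lower()))
    resolved = {}
    for acronym in ACRONYM_RE.findall(query):
        candidates = GLOSSARY.get(acronym)
        if not candidates:
            continue  # unknown token: leave it alone rather than guess
        # Pick the expansion whose keywords overlap most with the query words.
        expansion, _ = max(candidates, key=lambda c: len(c[1] & context))
        resolved[acronym] = expansion
    return resolved


def annotate(query: str) -> str:
    """Keep the user's wording intact and append definitions as context."""
    resolved = resolve_acronyms(query)
    if not resolved:
        return query
    notes = "; ".join(f"{a} = {e}" for a, e in resolved.items())
    return f"{query}\n[Acronym definitions: {notes}]"
```

Calling `annotate("Check the CSM logs for that firewall issue")` leaves the user's sentence untouched and appends a definition line, which is the property that matters: downstream agents see both the original term and its resolved meaning.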

2. The Clarification Paradox

Early in development, we built what seemed like a responsible system: when a user’s query lacked sufficient context, we asked for clarification. “Which customer are you asking about?” “What time period?” “Can you be more specific?”

Users didn’t like it, and clarification questions were often downvoted.

The problem wasn’t the questions themselves. It was the repetition. A user would ask about “customer sentiment,” receive a clarification request, provide a customer name, and then get asked about the time period. Three interactions to answer one question.

Research on multi-turn conversations shows a 39% performance degradation compared to single-turn interactions. When models take a wrong turn early, they rarely recover. Every clarification question is another turn where things can derail.

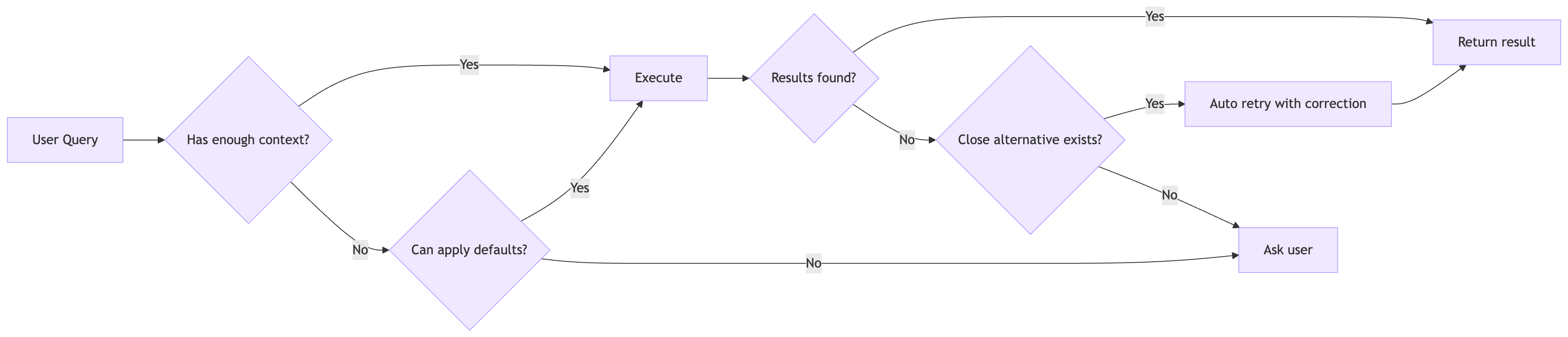

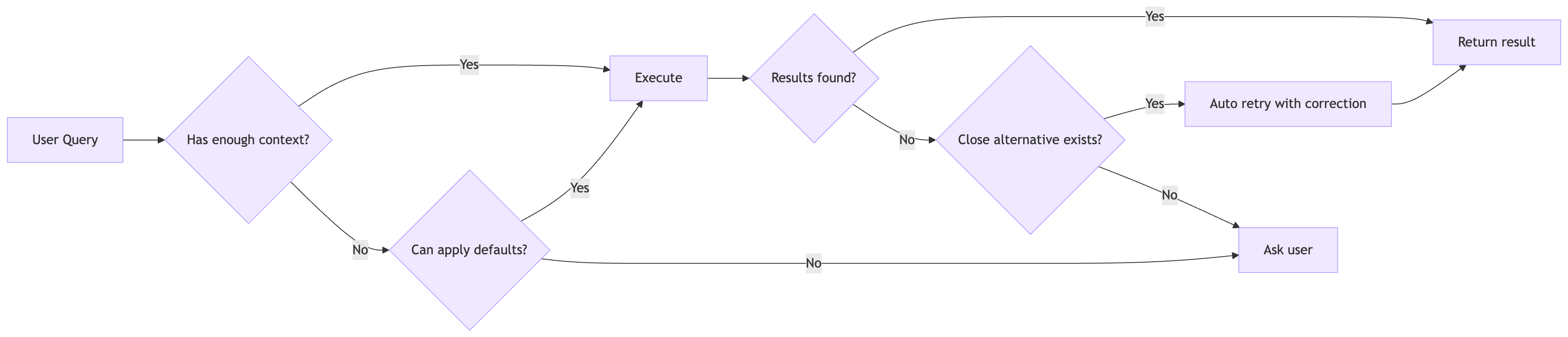

The fix was counterintuitive: classify clarification requests as a last resort, not a first instinct.

We implemented a priority system where “proceed with reasonable defaults” outranks “ask for more information.” If a user provides any useful qualifier (a customer name, a time period, a region), assume “all” for missing dimensions. Missing time period? Default to the next two fiscal quarters. Missing customer filter? Assume all customers within the user’s access scope.
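As a rough sketch of that priority rule, assuming a hypothetical `QueryFilters` structure and placeholder default labels (a real system would compute actual fiscal quarters and access scopes):

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class QueryFilters:
    customer: Optional[str] = None
    time_period: Optional[str] = None
    region: Optional[str] = None


# Hypothetical default labels; a real system would resolve these to values.
DEFAULTS = {
    "customer": "all customers in access scope",
    "time_period": "next two fiscal quarters",
    "region": "all regions",
}


def plan(filters: QueryFilters) -> dict:
    """Return a clarification request or filters completed with defaults."""
    provided = {k: v for k, v in vars(filters).items() if v is not None}
    if not provided:
        # Last resort: there is nothing to anchor the query on.
        return {"action": "clarify", "filters": {}, "assumed": []}
    # Record which dimensions were defaulted so the answer can disclose them.
    assumed = [k for k in DEFAULTS if k not in provided]
    completed = {**DEFAULTS, **provided}
    return {"action": "proceed", "filters": completed, "assumed": assumed}
```

Returning the `assumed` list alongside the filters is a deliberate choice in this sketch: it lets the response state which defaults were applied instead of silently widening the query.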

This is where intelligent reflection also helps tremendously: when an agent’s initial attempt returns limited results but a close alternative exists (say, a product name matching a slightly different variation), the system can automatically retry with the corrected input rather than bouncing a clarification question back to the user. The goal is to resolve ambiguity behind the scenes whenever possible, and to be transparent with users about which filters the agents used.
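A simplified version of that retry step might look like the sketch below. It assumes the empty-result case only, uses the standard library's `difflib.get_close_matches` for fuzzy matching as a stand-in for whatever matching a real system uses, and treats `run_query` and `known_products` as hypothetical inputs.

```python
from difflib import get_close_matches


def run_with_reflection(product: str, known_products: list, run_query) -> dict:
    """Run a query; on an empty result, retry once with the closest known name."""
    rows = run_query(product)
    if rows:
        return {"rows": rows, "used": product, "corrected": False}
    # Look for a single near match instead of asking the user to clarify.
    close = get_close_matches(product, known_products, n=1, cutoff=0.8)
    if close and close[0] != product:
        rows = run_query(close[0])
        if rows:
            # Report the corrected value so the response can disclose it.
            return {"rows": rows, "used": close[0], "corrected": True}
    return {"rows": [], "used": product, "corrected": False}
```

The `corrected` flag and `used` value exist so the final answer can tell the user which name was actually searched.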

Early versions asked for clarification on 30%+ of queries. After tuning the decision flow with intelligent reflection, that dropped below 10%.

Figure: Decision flow for clarification, with intelligent reflection

The key insight: users would rather receive a broader result set they can filter mentally than endure a clarification dialogue. The cost of showing slightly more data is lower than the cost of friction.

3. Guided Discovery Over Open-Ended Conversation

We added a feature called “Compass” that suggests a logical next question after each response. “Would you like me to break down customer sentiment by product line?”

Why not just ask the LLM to suggest follow-ups? Because a foundation model that doesn’t understand your business will suggest queries your system can’t actually handle. It will hallucinate capabilities. It will propose analysis that sounds reasonable but leads nowhere.

Compass grounds suggestions in actual system capabilities. Rather than generating open-ended suggestions (“Is there anything else you’d like to know?”), it proposes specific queries the system can definitely fulfill, aligned to business workflows the user cares about.
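To make the idea of grounding concrete, here is a small sketch in which follow-ups can only come from a registry of supported capabilities. It does not show the langgraph-compass API; the `Capability` class, the `CAPABILITIES` list, and `suggest_follow_ups` are hypothetical names invented for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capability:
    name: str
    requires: frozenset  # entities that must already be known in the conversation
    template: str        # a follow-up the system is known to support


# Hypothetical registry of things the system can actually answer.
CAPABILITIES = [
    Capability(
        "sentiment_by_product",
        frozenset({"customer"}),
        "Would you like me to break down customer sentiment for {customer} by product line?",
    ),
    Capability(
        "renewals_by_quarter",
        frozenset({"customer", "quarter"}),
        "Would you like to see renewals for {customer} in {quarter}?",
    ),
]


def suggest_follow_ups(entities: dict, already_run: set, limit: int = 1) -> list:
    """Propose follow-ups only from capabilities whose inputs are available."""
    suggestions = []
    for cap in CAPABILITIES:
        if cap.name in already_run:
            continue  # do not suggest what the user has just seen
        if not cap.requires.issubset(entities):
            continue  # missing inputs: the system could not fulfill this
        suggestions.append(cap.template.format(**entities))
        if len(suggestions) == limit:
            break
    return suggestions
```

Because every suggestion is rendered from a registered template, nothing the assistant proposes can point at a capability that does not exist.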

This serves two purposes. First, it helps users who don’t know what to ask next. Enterprise data systems are complex; business users often don’t know what data is available. Guided suggestions teach them the system’s capabilities through example. Second, it keeps conversations productive and on-rails.

Roughly 40% of multi-turn conversations within the AI Assistant include an affirmative follow-up, demonstrating how contextually relevant follow-up suggestions can improve user retention, conversation continuity, and information discovery.

We found this pattern valuable enough that we open-sourced a standalone implementation: langgraph-compass. The core insight is that follow-up generation should be decoupled from your main agent so it can be configured, constrained, and grounded independently.

4. Deterministic Security in Probabilistic Systems

Role-based access control cannot be delegated to an LLM.

The intuition might be to inject the user’s permissions into the prompt: “This user has access to accounts A, B, and C. Only return data from those accounts.” This doesn’t work. The model might follow the instruction. It might not. It might follow it for the first query and forget by the third. It can be jailbroken. It can be confused by adversarial input. Prompt-based identity is not identity enforcement.

The risk is subtle but severe: a user crafts a query that tricks the model into revealing data outside their scope, or the model simply drifts from the access rules mid-conversation. Compliance and audit requirements make this untenable. You cannot explain to an auditor that access control “usually works.”

Our RBAC implementation is entirely deterministic and completely opaque to the LLM. Before any query executes, we parse it and inject access control predicates in code. The model never sees these predicates being added; it never makes access decisions. It formulates queries; deterministic code enforces boundaries.
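One simple way to illustrate code-side enforcement is shown below. Note that this sketch swaps in a different technique from the one described above: instead of parsing the query, it wraps the model-written SQL in an outer query and filters with bound parameters. It assumes the inner query exposes an `account_id` column, and `run_scoped` and `demo_connection` are hypothetical helpers using Python's built-in `sqlite3` module.

```python
import sqlite3


def run_scoped(conn: sqlite3.Connection, model_sql: str, allowed_accounts: list) -> list:
    """Run model-written SQL with an access predicate added in code."""
    if not allowed_accounts:
        return []  # no scope means no rows, never "all rows"
    inner = model_sql.strip().rstrip(";")
    # One bound parameter per account; values never pass through the model.
    placeholders = ", ".join("?" for _ in allowed_accounts)
    scoped_sql = (
        f"SELECT * FROM ({inner}) AS q "
        f"WHERE q.account_id IN ({placeholders})"
    )
    return conn.execute(scoped_sql, list(allowed_accounts)).fetchall()


def demo_connection() -> sqlite3.Connection:
    """Build a tiny in-memory table for demonstration only."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE renewals (account_id TEXT, amount INTEGER)")
    conn.executemany(
        "INSERT INTO renewals VALUES (?, ?)",
        [("A", 100), ("B", 200), ("Z", 999)],
    )
    return conn
```

With `allowed_accounts=["A", "B"]`, a query over all renewals returns only the rows for A and B, and a query that explicitly targets account Z returns nothing, regardless of what the model wrote. A production version would also need a read-only connection and validation that the model's statement is a single SELECT, which this sketch leaves out.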

When access filtering produces empty results, we detect it and inform the user: “No records are visible with your current access permissions.” They know they’re seeing a filtered view, not a complete absence.

Liz Centoni, Cisco’s EVP of Customer Experience, has written about the broader framework for building trust in agentic AI, including governance by design and RBAC as foundational principles. These aren’t afterthoughts. They’re prerequisites.

5. Empty Results Need Explanations

When a database query returns no rows, your first instinct might be to tell the user “no data found.” This is almost always the wrong answer.

“No data found” is ambiguous. Does it mean the entity doesn’t exist? The entity exists but has no data for this time period? The query was malformed? The user doesn’t have permission to see the data?

Each scenario requires a different response. The third is a bug. The fourth is a policy that needs transparency (see the section above).

System-enforced filters (RBAC): The data exists, but the user doesn’t have permission to see it. The right response: “No records are visible with your current access permissions. Records matching your criteria exist in the system.” This is transparency, not an error.

User-applied filters: The user asked for something specific that doesn’t exist. “Show me upcoming subscription renewals for ACME Corp in Q3” returns empty because there are no renewals scheduled for that customer in that period. The right response explains what was searched: “I couldn’t find any subscriptions up for renewal for ACME Corp in Q3. This could mean there are no active subscriptions, or the data hasn’t been loaded yet.”

Query errors: The filter values don’t exist in the database at all. The user misspelled a customer name or used an invalid ID. The right response suggests corrections.

We handle this at multiple layers. When queries return empty, we analyze which filters eliminated records and whether the filter values exist in the database. When access control filtering produces zero results, we check whether results would exist without the filter. The synthesis layer is instructed to never say “the SQL query returned no results.”
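The three cases can be expressed as a small classifier. The sketch below assumes the two diagnostic inputs (which filter values are unknown to the database, and how many rows would match without the access filter) have already been computed elsewhere; the names `EmptyReason`, `classify_empty`, and `explain_empty` are hypothetical.

```python
from enum import Enum


class EmptyReason(Enum):
    UNKNOWN_VALUE = "unknown_value"        # filter value not in the database
    ACCESS_FILTERED = "access_filtered"    # rows exist but RBAC hides them
    NO_MATCHING_DATA = "no_matching_data"  # valid filters, nothing matches


def classify_empty(unknown_values: list, rows_without_access_filter: int) -> EmptyReason:
    """Decide why a query came back empty, checking the cheapest cause first."""
    if unknown_values:
        return EmptyReason.UNKNOWN_VALUE
    if rows_without_access_filter > 0:
        return EmptyReason.ACCESS_FILTERED
    return EmptyReason.NO_MATCHING_DATA


def explain_empty(reason: EmptyReason, searched: str, suggestions: tuple = ()) -> str:
    """Turn the reason into a user-facing message instead of 'no data found'."""
    if reason is EmptyReason.UNKNOWN_VALUE:
        hint = f" Did you mean: {', '.join(suggestions)}?" if suggestions else ""
        return f"I couldn't match {searched} to anything in the database.{hint}"
    if reason is EmptyReason.ACCESS_FILTERED:
        return (
            "No records are visible with your current access permissions. "
            "Records matching your criteria exist in the system."
        )
    return (
        f"I couldn't find any results for {searched}. This could mean there "
        "is no matching data, or the data hasn't been loaded yet."
    )
```

Keeping classification separate from wording means the synthesis layer only has to render a known reason, and never has to interpret a raw empty result set.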

This transparency builds trust. Users understand the system’s boundaries rather than suspecting it’s broken.

6. Personalization is Not Optional

Most enterprise AI is designed as a one-size-fits-all interface. But people expect an “assistant” to adapt to their unique needs and support their way of working. Pushing a rigid system without primitives for customization causes friction. Users try it, find it doesn’t fit their workflow, and abandon it.

We addressed this on several fronts.

Shortcuts allow users to define command aliases that expand into full prompts. Instead of typing out “Summarize renewal risk for ACME Corp, provide a two paragraph summary highlighting key risk factors that may impact likelihood of non-renewal of Meraki subscriptions”, a user can simply type /risk ACME Corp. We took inspiration from agentic coding tools like Claude Code that support slash commands, but built it for business users to help them get more done quickly. Power users create shortcuts for their weekly reporting queries. Managers create shortcuts for their team review patterns. The same underlying system serves different workflows without modification.
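Mechanically, a shortcut is little more than a named template. A minimal sketch, assuming a hypothetical per-user `SHORTCUTS` mapping and an `{args}` placeholder convention that we made up for this example:

```python
# Hypothetical per-user shortcut store: alias -> prompt template.
SHORTCUTS = {
    "risk": (
        "Summarize renewal risk for {args}, provide a two paragraph summary "
        "highlighting key risk factors that may impact likelihood of "
        "non-renewal of Meraki subscriptions"
    ),
}


def expand(message: str, shortcuts: dict) -> str:
    """Expand '/alias args' into its full prompt; pass other text through."""
    if not message.startswith("/"):
        return message
    name, _, args = message[1:].partition(" ")
    template = shortcuts.get(name)
    if template is None:
        return message  # unknown alias: do not guess, send as typed
    return template.format(args=args.strip())
```

Here `expand("/risk ACME Corp", SHORTCUTS)` produces the full renewal-risk prompt with "ACME Corp" substituted, while ordinary messages and unknown aliases are passed through unchanged.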

Based on production traffic, we’ve noticed that the most active shortcut users average 4+ uses per shortcut per day. Power users who create 5+ shortcuts generate 2-3x the query volume of casual users.

Scheduled prompts enable automated, asynchronous delivery of information. Instead of synchronous chat where users must remember to ask, tasks deliver insights on a schedule: “Every Monday morning, send me a summary of at-risk renewals for my territory.” This shifts the assistant from reactive to proactive.

Long-term memory remembers usage patterns and user behaviors across conversation threads. If a user always follows renewal risk queries with product adoption metrics, the system learns that pattern and recommends it. The goal is making AI feel truly personal, like it knows the user and what they care about, rather than starting fresh every session.

We track usage patterns across all these features. Heavily-used shortcuts indicate workflows that are worth optimizing and generalizing across the user community.

7. Carrying Context from the UI

Most AI assistants treat context as chat history. In dashboards with AI assistants, one of the challenges is context mismatch. Users may ask about a specific view, chart, or table they’re viewing, but the assistant usually sees only chat text and broad metadata, or performs queries that fall outside the scope the user switched from. The assistant doesn’t reliably know the exact live view behind the question. As filters, aggregations, and user focus change, responses become disconnected from what the user actually sees. For example, a user may apply a filter for assets that have reached end-of-support for multiple architectures or product types, but the assistant may still answer from a broader prior context.

We enabled an option in which UI context is explicit and continuous. Each AI turn is grounded in the exact view state of the selected dashboard content or even individual objects, not just conversation history. This gives the assistant precise situational awareness and keeps answers aligned with the user’s current screen. Users are made aware that they are within their view context when they switch to the assistant window.
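One way to picture explicit view context is as a structured payload attached to every turn. The sketch below is an assumption about shape only: the `ViewState` fields and the `build_turn` helper are hypothetical and do not describe our actual schema.

```python
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class ViewState:
    dashboard: str
    filters: dict = field(default_factory=dict)
    selected_object: Optional[str] = None


def build_turn(question: str, view: Optional[ViewState]) -> dict:
    """Attach the current view state to the turn so agents can scope queries."""
    turn = {"question": question}
    if view is not None:
        # Sent with every turn, so a filter change is reflected immediately.
        turn["view_context"] = asdict(view)
    return turn
```

Because the view state travels with each turn rather than living in chat history, a question like "Which of these are highest risk?" can be resolved against the filters currently on screen.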

For users, the biggest gain is accuracy they can verify quickly. Answers are tied to the exact view they’re looking at, so responses feel relevant instead of generic. It also reduces friction: fewer clarification loops, and smoother transitions when switching between dashboard views and objects. The assistant feels less like a separate chat tool and more like an extension of the interface.

8. Building AI with AI

We develop these agentic systems using AI-assisted workflows. It’s about encoding a senior software engineer’s knowledge into machine-readable patterns that any new team member, human or AI, can follow.

We maintain rules that define code conventions, architectural patterns, and domain-specific requirements. These rules are always active during development, ensuring consistency regardless of who writes the code. For complex tasks, we maintain command files that break multi-step operations into structured sequences. These are shared across the team, so a new developer can pick things up quickly and contribute effectively from day one.

Features that previously required multi-week sprint cycles now ship in days.

The key insight: the value isn’t necessarily in AI’s general intelligence or in which state-of-the-art model you use. It’s in the encoded constraints that channel that intelligence toward useful outputs. A general-purpose model without context writes generic code. The same model with access to project conventions and example patterns writes code that fits the codebase.

There’s a moat in building a project as AI-native from the start. Teams that treat AI assistance as infrastructure, that invest in making their codebase legible to AI tools, move faster than teams that bolt AI on as an afterthought.

Conclusion

None of these patterns are technically sophisticated. They’re obvious in hindsight. The challenge isn’t knowing them; it’s prioritizing them over more exciting work.

It’s tempting to chase the latest protocol or orchestration framework. But users don’t care about your architecture. They care whether the system helps them do their job and is evolving quickly to bring efficiency to more parts of their workflow.

The gap between “technically impressive demo” and “actually useful tool” is filled with many of these unglamorous patterns. The teams that build lasting AI products are the ones willing to do the boring work well.

These patterns emerged from building a production AI Assistant at Cisco’s Customer Experience organization. None of this would exist without the team of architects, engineers, and designers who argued about the right abstractions, debugged the edge cases, and kept pushing until the system actually worked for real users.