Ever felt lost in messy folders, too many scripts, and unorganized code? That chaos only slows you down and makes the data science journey harder. Organized workflows and project structures are not just nice-to-have, because they affect the reproducibility, collaboration, and understanding of what’s happening in the project. In this blog, we’ll explore the best practices and look at a sample project to guide your upcoming projects. Without further ado, let’s look at some of the important frameworks, the common practices, and how to improve them.

Popular Data Science Workflow Frameworks for Project Structure

Data science frameworks provide a structured way to define and maintain a clear data science project structure, guiding teams from problem definition to deployment while improving reproducibility and collaboration.

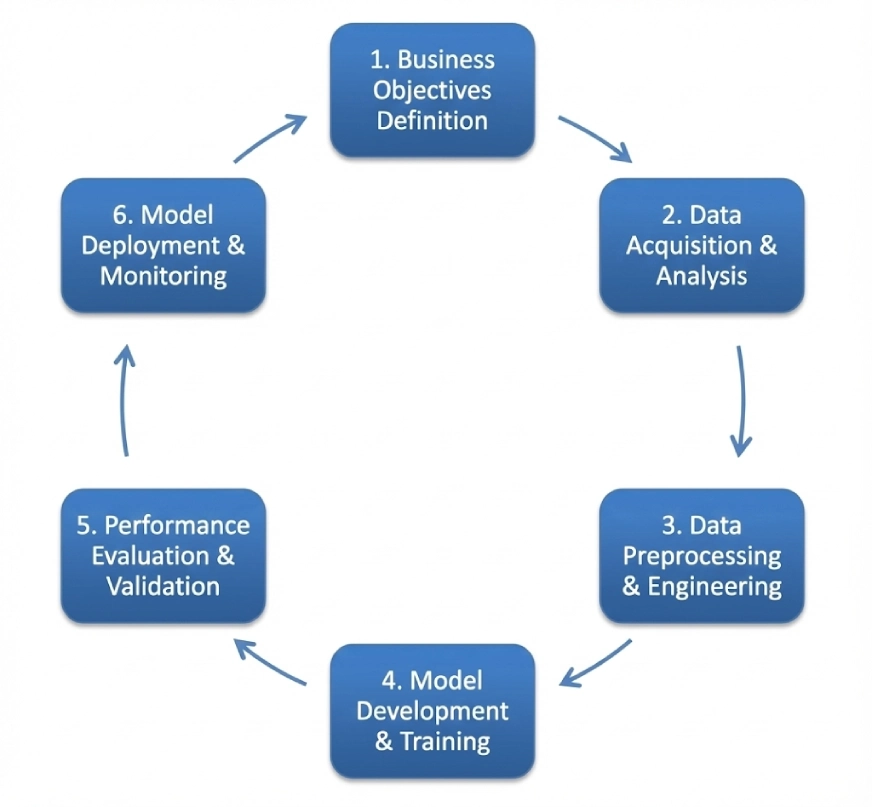

CRISP-DM

CRISP-DM stands for Cross-Industry Standard Process for Data Mining. It follows a cyclic, iterative structure that includes:

- Business Understanding

- Data Understanding

- Data Preparation

- Modeling

- Evaluation

- Deployment

This framework can be used as a standard across multiple domains, though the order of its steps is flexible: you can move backward as well as forward instead of following a strictly one-way flow. We’ll look at a project using this framework later in this blog.

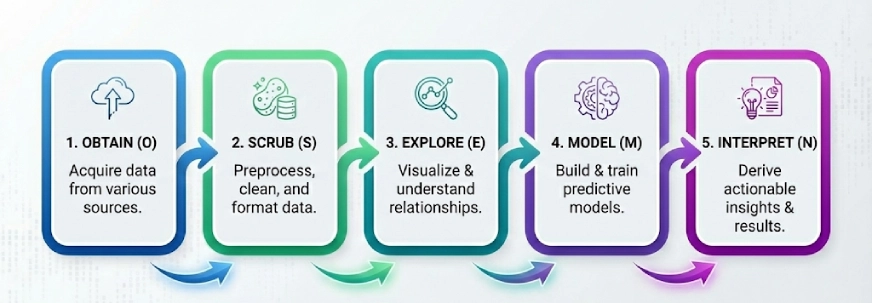

OSEMN

OSEMN is another popular framework in the world of data science. The idea here is to break complex problems into five steps and solve them one at a time. The five steps of OSEMN (pronounced “awesome”) are:

- Obtain

- Scrub

- Explore

- Model

- Interpret

Note: The ‘N’ in “OSEMN” is the N in iNterpret.

We follow these five logical steps: “Obtain” the data, “Scrub” or preprocess it, “Explore” it with visualizations to understand the relationships within the data, and then “Model” it so the inputs can be used to predict the outputs. Finally, we “Interpret” the results and find actionable insights.

KDD

KDD, or Knowledge Discovery in Databases, consists of several processes that aim to turn raw data into knowledge. Here are the steps in this framework:

- Selection

- Pre-Processing

- Transformation

- Data Mining

- Interpretation/Evaluation

It’s worth mentioning that people often refer to KDD as Data Mining, but Data Mining is only the specific step where algorithms are used to find patterns, whereas KDD covers the entire lifecycle from start to finish.

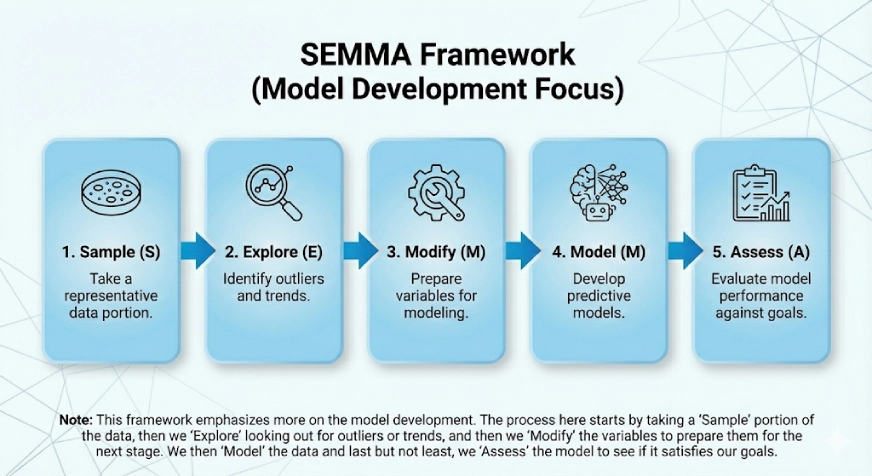

SEMMA

This framework puts more emphasis on model development. The name SEMMA comes from the logical steps in the framework, which are:

- Sample

- Explore

- Modify

- Model

- Assess

The process starts by taking a “Sample” portion of the data. We then “Explore” it, looking for outliers or trends, and “Modify” the variables to prepare them for the next stage. After that we “Model” the data and, last but not least, “Assess” the model to see if it satisfies our goals.

Common Practices that Need to be Improved

Improving these practices is essential for maintaining a clean and scalable data science project structure, especially as projects grow in size and complexity.

1. The issue with “Paths”

People often hardcode absolute paths like pd.read_csv("C:/Users/Name/Downloads/data.csv"). This is fine while testing things out in a Jupyter Notebook, but when used in the actual project it breaks the code for everyone else.

The Fix: Always use relative paths with the help of libraries like “os” or “pathlib”. Alternatively, you can choose to add the paths in a config file (for instance: DATA_DIR=/home/ubuntu/path).
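As a minimal sketch of this fix, the helper below builds file paths from a project root and lets a DATA_DIR environment variable override the default location. The function names and the data/raw folder layout are assumptions made for illustration, not part of any particular project.

```python
import os
from pathlib import Path


def resolve_data_dir(project_root: Path) -> Path:
    """Return the data directory, preferring the DATA_DIR environment variable.

    Falls back to <project_root>/data when DATA_DIR is not set.
    """
    override = os.environ.get("DATA_DIR")
    if override:
        return Path(override)
    return project_root / "data"


def raw_file(project_root: Path, name: str) -> Path:
    """Build the path to a file under a (hypothetical) raw/ subfolder."""
    return resolve_data_dir(project_root) / "raw" / name
```

In a script, the project root is usually derived from the script's own location (for example, Path(__file__).resolve().parents[1]) so the code works no matter where it is cloned, and the resulting path can be passed straight to pd.read_csv.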

2. The Cluttered Jupyter Notebook

Sometimes people use a single Jupyter Notebook with 100+ cells containing imports, EDA, cleaning, modeling, and visualization. This makes it nearly impossible to test or version control.

The Fix: Use Jupyter Notebooks only for exploration and stick to Python scripts for automation. Once a cleaning function works, add it to a src/processing.py file, and then you can import it into the notebook. This adds modularity and reusability, and it also makes testing and understanding the notebook a lot simpler.
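To illustrate, here is a rough sketch of what a function moved into src/processing.py might look like. The function name, its arguments, and the use of plain dictionaries (to keep the example dependency-free) are assumptions; a real project would more likely operate on a pandas DataFrame.

```python
# src/processing.py (module path taken from the text; contents are illustrative)
from typing import Dict, Iterable, List, Sequence


def clean_records(records: Iterable[Dict], required: Sequence[str]) -> List[Dict]:
    """Drop rows missing a required field and strip whitespace from string values."""
    cleaned = []
    for row in records:
        # Treat None and blank strings as missing values
        if any(row.get(col) is None or str(row.get(col)).strip() == "" for col in required):
            continue
        cleaned.append({k: v.strip() if isinstance(v, str) else v for k, v in row.items()})
    return cleaned
```

The notebook then only needs `from src.processing import clean_records`, and the same function can be covered by an ordinary unit test.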

3. Version the Code, not the Data

Git can struggle with large CSV files. People often push data to GitHub, which can take a lot of time and also cause other complications.

The Fix: Use Data Version Control (DVC for short). It’s like Git but for data.

4. Not Providing a README for the Project

A repository can contain great code, but without instructions on how to install dependencies or run the scripts it can be chaotic.

The Fix: Make sure you always write a good README.md that explains how to set up the environment, where and how to get the data, and how to run the model and other important scripts.

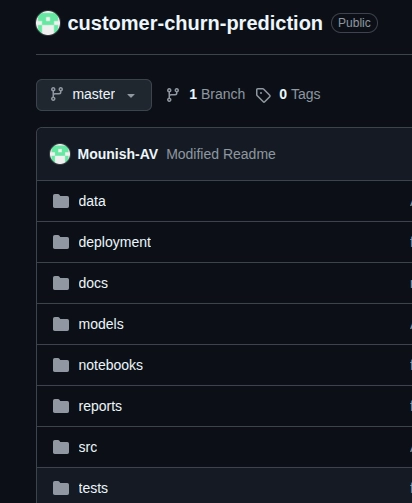

Building a Customer Churn Prediction System [Sample Project]

Using the CRISP-DM framework, I’ve created a sample project called “Customer Churn Prediction System”. Let’s walk through the whole process and its steps by taking a closer look at it.

Here’s the GitHub link to the repository.

Note: This is a sample project, crafted to show how to implement the framework and follow a standard procedure.

Applying CRISP-DM Step by Step

- Business Understanding: Here we need to define what we’re actually trying to solve. In our case it’s spotting customers who are likely to churn. We set clear targets for the system, 85%+ accuracy and 80%+ recall, and the business goal here is to retain the customers.

- Data Understanding: In our case we use the Telco Customer Churn dataset. We have to look into the descriptive statistics, check the data quality, look for missing values (and think about how to handle them), see how the target variable is distributed, and finally explore the correlations between the variables to see which features matter.

- Data Preparation: This step can take time but needs to be done carefully. Here we clean the messy data, deal with the missing values and outliers, create new features if required, encode the categorical variables, split the dataset into training (70%), validation (15%), and test (15%) sets, and finally normalize the features for our models.

- Modeling: In this crucial step, we start with a simple model or baseline (logistic regression in our case), then experiment with other models like Random Forest and XGBoost to achieve our business goals. We then tune the hyperparameters.

- Evaluation: Here we figure out which model works best for us and meets our business goals. In our case we need to look at precision, recall, F1-scores, ROC-AUC curves, and the confusion matrix. This step helps us pick the final model for our goal.

- Deployment: This is where we actually start using the model. Here we can use FastAPI or any other alternative, containerize it with Docker for scalability, and set up monitoring for tracking purposes.
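The 70/15/15 split from the Data Preparation step could be sketched roughly as follows. This is a dependency-free illustration, not the sample project's actual code: the function name and the seed are assumptions, and a real churn project would probably use a library splitter with stratification on the target, since churn labels are often imbalanced.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def train_val_test_split(
    rows: Sequence[T], train: float = 0.70, val: float = 0.15, seed: int = 42
) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle rows reproducibly and cut them into train/validation/test parts.

    Whatever is left after the train and validation shares becomes the test set.
    """
    if not (0 < train < 1 and 0 < val < 1 and train + val < 1):
        raise ValueError("train and val must be fractions that sum to less than 1")
    shuffled = list(rows)
    # A seeded Random instance keeps the split identical across runs
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train)
    n_val = round(len(shuffled) * val)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )
```

Fixing the seed matters for reproducibility: anyone rerunning the project gets the same three subsets, so reported metrics can be compared across experiments.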

Clearly, using a step-by-step process gives the project a clear path. During project development you can also make use of progress trackers, and GitHub’s version control can certainly help. Data Preparation needs careful attention, since it won’t need many revisions if done right; if any issue arises after deployment, it can be fixed by going back to the modeling phase.
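One way to tie the Evaluation step back to the targets set during Business Understanding (85%+ accuracy and 80%+ recall) is to check them in code. The sketch below computes the metrics from raw binary labels using only the standard library; the names Metrics, evaluate, and meets_targets are assumptions for illustration, and a real project would typically rely on a metrics library instead.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Metrics:
    accuracy: float
    precision: float
    recall: float
    f1: float


def evaluate(y_true: Sequence[int], y_pred: Sequence[int]) -> Metrics:
    """Compute binary classification metrics, treating label 1 as 'churned'."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    total = len(pairs)
    return Metrics(
        accuracy=(tp + tn) / total if total else 0.0,
        precision=tp / (tp + fp) if (tp + fp) else 0.0,
        recall=tp / (tp + fn) if (tp + fn) else 0.0,
        f1=2 * tp / (2 * tp + fp + fn) if (2 * tp + fp + fn) else 0.0,
    )


def meets_targets(m: Metrics, min_accuracy: float = 0.85, min_recall: float = 0.80) -> bool:
    """Check the business targets quoted in the text."""
    return m.accuracy >= min_accuracy and m.recall >= min_recall
```

A model that fails this check is a signal to return to the modeling phase, which is exactly the kind of backward move CRISP-DM allows.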

Conclusion

As mentioned at the start of the blog, organized workflows and project structures are not just nice-to-have, they’re a must. With CRISP-DM, OSEMN, KDD, or SEMMA, a step-by-step process keeps projects clear and reproducible. Also, don’t forget to use relative paths, keep Jupyter Notebooks for exploration, and always write a good README.md. Always remember that development is an iterative process, and having a clear, structured framework for your projects will ease your journey.

Frequently Asked Questions

A. Reproducibility in data science means being able to obtain the same results using the same dataset, code, and configuration settings. A reproducible project ensures that experiments can be verified, debugged, and improved over time. It also makes collaboration easier, as other team members can run the project without inconsistencies caused by environment or data differences.

A. Model drift occurs when a machine learning model’s performance degrades because real-world data changes over time. This can happen due to changes in user behavior, market conditions, or data distributions. Monitoring for model drift is essential in production systems to ensure models remain accurate, reliable, and aligned with business objectives.

A. A virtual environment isolates project dependencies and prevents conflicts between different library versions. Since data science projects often rely on specific versions of Python packages, using virtual environments ensures consistent results across machines and over time. This is critical for reproducibility, deployment, and collaboration in real-world data science workflows.

A. A data pipeline is a series of automated steps that move data from raw sources to a model-ready format. It typically includes data ingestion, cleaning, transformation, and storage.
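The four stages named in this answer can be sketched as small functions chained together. Everything here is hypothetical: the column names, the "Yes"/"No" churn encoding, and the choice to "store" the result as a JSON string instead of writing to a file or database are assumptions made to keep the example short and self-contained.

```python
import csv
import io
import json
from typing import Dict, List


def ingest(raw_csv: str) -> List[Dict[str, str]]:
    """Ingestion: parse raw CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(raw_csv)))


def clean(rows: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Cleaning: drop rows with any missing or blank value."""
    return [r for r in rows if all(v is not None and v.strip() for v in r.values())]


def transform(rows: List[Dict[str, str]]) -> List[Dict[str, object]]:
    """Transformation: encode the (hypothetical) churn column as 1/0."""
    return [{**r, "churn": 1 if r["churn"].strip().lower() == "yes" else 0} for r in rows]


def store(rows: List[Dict[str, object]]) -> str:
    """Storage: serialize to JSON; a real pipeline would persist this somewhere."""
    return json.dumps(rows)


def run_pipeline(raw_csv: str) -> str:
    """Run the stages in order so every run processes data the same way."""
    return store(transform(clean(ingest(raw_csv))))
```

Keeping each stage as its own function means any step can be tested or swapped out without touching the others.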
