LLMs like ChatGPT, Claude, and Gemini, are sometimes thought-about clever as a result of they appear to recall previous conversations. The mannequin acts as if it obtained the purpose, even after you made a follow-up query. That is the place LLM reminiscence is useful. It permits a chatbot to return to the purpose of what “it” or “that” means. Most LLMs are stateless by default. Due to this fact, every new consumer question is handled independently, with no data of previous exchanges.

Nevertheless, LLM reminiscence works very in a different way from human reminiscence. This reminiscence phantasm is among the fundamental elements that decide how trendy AI programs are perceived as being helpful in real-world functions. The fashions don’t “recall” within the typical method. As a substitute, they use architectural mechanisms, context home windows, and exterior reminiscence programs. On this weblog, we are going to talk about how LLM reminiscence features, the assorted forms of reminiscence which might be concerned, and the way present programs assist fashions in remembering what is absolutely necessary.

What’s Reminiscence in LLMs?

Reminiscence in LLMs is an idea that permits LLMs to make use of earlier info as a foundation for creating new responses. Basically, the time period “established reminiscence” defines how constructed reminiscences work inside LLMs, in comparison with established reminiscence in people, the place established reminiscence is used rather than established reminiscence as a system of storing and/ or recalling experiences.

As well as, the established reminiscence idea provides to the general functionality of LLMs to detect and higher perceive the context, the connection between previous exchanges and present enter tokens, in addition to the appliance of not too long ago discovered patterns to new circumstances by way of an integration of enter tokens into established reminiscence.

Since established reminiscence is continually developed and utilized primarily based on what was discovered throughout prior interactions, info derived from established reminiscence permits a considerably extra complete understanding of context, earlier message exchanges, and new requests in comparison with the normal use of LLMs to reply to requests in the identical method as with present LLM strategies of operation.

What Does Reminiscence Imply in LLMs?

The massive language mannequin (LLM) reminiscence permits the usage of prior data in reasoning. The data could also be linked to the present immediate. Previous dialog although is pulled from exterior information sources. Reminiscence doesn’t indicate that the mannequin has continuous consciousness of all the data. Slightly, it’s the mannequin that produces its output primarily based on the supplied context. Builders are continually pouring within the related info into every mannequin name, thus creating reminiscence.

Key Factors:

- The LLM reminiscence function permits for retaining outdated textual content and using it in new textual content era.

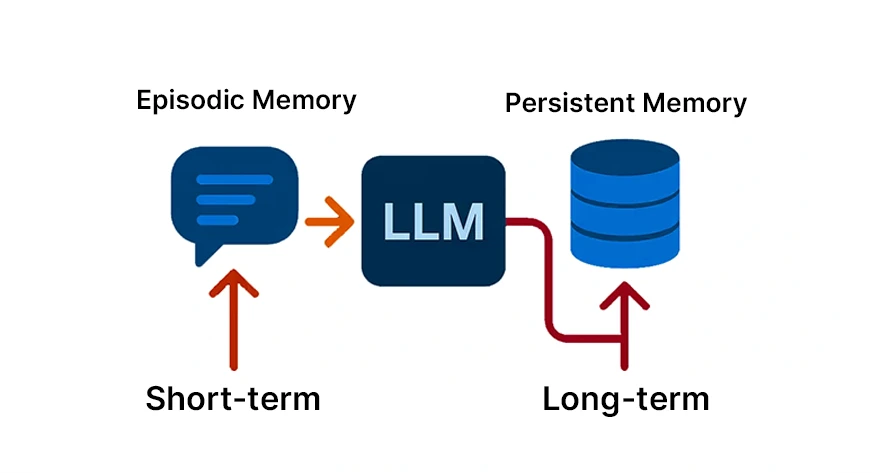

- The reminiscence can final for a short while (just for the continued dialog) or a very long time (enter throughout consumer periods), as we are going to present all through the textual content.

- To people, it could be like evaluating the short-term and long-term reminiscence in our brains.

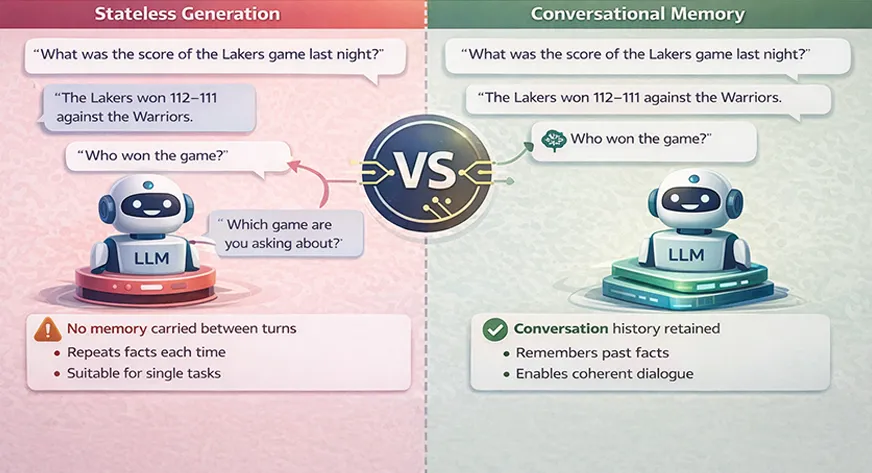

Reminiscence vs. Stateless Era

In a regular scenario, an LLM doesn’t retain any info between calls. For example, “every incoming question is processed independently” if there are not any express reminiscence mechanisms in place. This means that in answering the query “Who received the sport?” an LLM wouldn’t take into account that “the sport” was beforehand referred to. The mannequin would require you to repeat all necessary info each single time. Such a stateless character is usually appropriate for single duties, nevertheless it will get problematic for conversations or multi-step duties.

In distinction, reminiscence programs permit for this example to be reversed. The inclusion of conversational reminiscence signifies that the LLM’s inputs include the historical past of earlier conversations, which is usually condensed or shortened to suit the context window. Consequently, the mannequin’s reply can depend on the earlier exchanges.

Core Elements of LLM Reminiscence

The reminiscence of LLM operates by way of the collaboration of assorted layers. The mannequin that’s fashioned by these components units the boundaries of the data a mannequin can take into account, the time it lasts, and the extent to which it influences the ultimate outcomes with certainty. The data of such elements empowers the engineers to create programs which might be scalable and preserve the identical stage of significance.

Context Window: The Working Reminiscence of LLMs

The context window defines what number of tokens an LLM can course of directly. It acts because the mannequin’s short-term working reminiscence.

Every little thing contained in the context window influences the mannequin’s response. As soon as tokens fall outdoors this window, the mannequin loses entry to them totally.

Challenges with Massive Context Home windows

Longer context home windows enrich reminiscence capability however pose a sure subject. They increase the bills for computation, trigger a delay, and in some circumstances, scale back the standard of the eye paid. The fashions could not be capable of successfully discriminate between salient and non-salient with the rise within the context size.

For instance, if an 8000-token context window mannequin is used, then it is going to be in a position to perceive solely the most recent 8000 tokens out of the dialogue, paperwork, or directions mixed. Every little thing that goes past this should be both shortened or discarded. The context window includes all that you simply transmit to the mannequin: system prompts, your complete historical past of the dialog, and any related paperwork. With an even bigger context window, extra attention-grabbing and complicated conversations can happen.

Parametric vs Non-Parametric Reminiscence in LLMs

Once we say reminiscence in LLM, it may be considered when it comes to the place it’s saved. We make a distinction between two sorts of reminiscence: parametric and non-parametric. Now we’ll talk about it briefly.

- Parametric reminiscence means the data that was saved within the mannequin weights throughout the coaching section. This could possibly be a combination of assorted issues, comparable to language patterns, world data, and the flexibility to purpose. That is how a GPT mannequin might have labored with historic info as much as its coaching cutoff date as a result of they’re saved within the parametric reminiscence.

- Non-parametric reminiscence is maintained outdoors of the mannequin. It consists of databases, paperwork, embeddings, and dialog historical past which might be all added on-the-fly. Non-parametric reminiscence is what trendy LLM programs closely rely upon to offer each accuracy and freshness. For instance, a data base in a vector database is non-parametric. As it may be added to or corrected at any cut-off date, the mannequin can nonetheless entry info from it throughout the inference course of.

Kinds of LLM Reminiscence

LLM reminiscence is a time period used to discuss with the identical idea, however in numerous methods. The commonest solution to inform them aside is by the short-term (contextual) reminiscence and the long-term (persistent) reminiscence. The opposite perspective takes phrases from cognitive psychology: semantic reminiscence (data and info), episodic reminiscence (occasions), and procedural reminiscence (appearing). We’ll describe every one.

Contextual Reminiscence or Quick-Time period Reminiscence

Quick-term reminiscence, also called contextual, is the reminiscence that accommodates the data that’s at present being talked about. It’s the digital counterpart of your short-term recall. Any such reminiscence is normally stored within the current context window or a dialog buffer.

Key Factors:

- The current questions of the consumer and the solutions of the mannequin are saved in reminiscence throughout the session. There isn’t a long-lasting reminiscence. Usually, this reminiscence is eliminated after the dialog, except it’s saved.

- It is rather quick and doesn’t eat a lot reminiscence. It doesn’t want a database or sophisticated infrastructure. It’s merely the tokens within the present immediate.

- It will increase coherence, i.e., the mannequin “understands” what was not too long ago mentioned and may precisely discuss with it utilizing phrases comparable to “he” or “the earlier instance”.

For example, a assist chatbot might keep in mind that the shopper had earlier inquired a few defective widget, after which, inside the identical dialog, it might ask the shopper if he had tried rebooting the widget. That’s short-term reminiscence going into motion.

Persistent Reminiscence or Lengthy-Time period Reminiscence

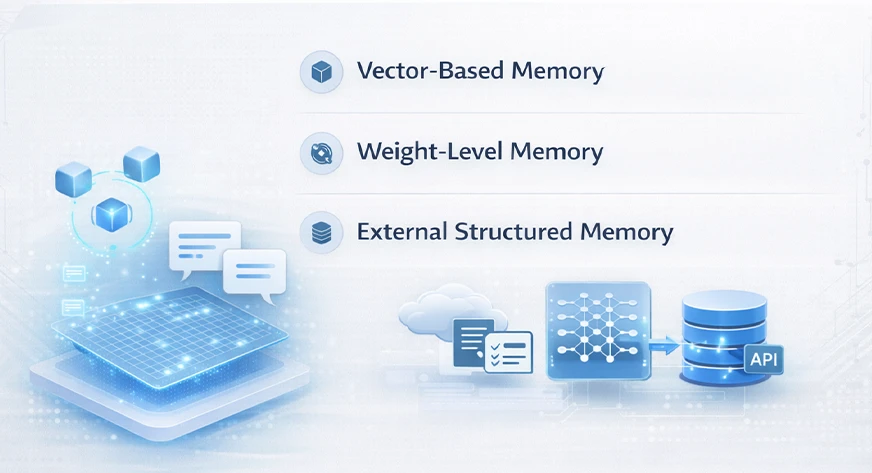

Persistent reminiscence is a function that constantly exists in trendy computing programs and historically retains info by way of numerous consumer periods. Among the many several types of system retains are consumer preferences, utility information, and former interactions. As a matter of truth, builders should depend on exterior sources like databases, caches, or vector shops for a short lived answer, as fashions should not have the flexibility to retailer these internally, thus, long-term reminiscence simulation.

For example, an AI writing assistant that might neglect that your most popular tone is “formal and concise” or which tasks you wrote about final week. Whenever you return the subsequent day, the assistant nonetheless remembers your preferences. To implement such a function, builders normally undertake the next measures:

- Embedding shops or vector databases: They hold paperwork or info within the type of high-dimensional vectors. The massive language mannequin (LLM) is able to conducting a semantic search on these vectors to acquire reminiscences which might be related.

- High quality-tuned fashions or reminiscence weights: In sure setups, the mannequin is periodically fine-tuned or up to date to encode the brand new info supplied by the consumer long-term. That is akin to embedding reminiscence into the weights.

- Exterior databases and APIs: Structured information (like consumer profiles) is saved in a database and fetched as wanted.

Vector Databases & Retrieval-Augmented Era (RAG)

A significant methodology for executing long-term reminiscence is vector databases together with retrieval-augmented era (RAG). RAG is a way that locations the era section of the LLM together with the retrieval section, dynamically combining them in an LLM method.

In a RAG system, when the consumer submits a question, the system first makes use of the retriever to scan an exterior data retailer, normally a vector database, for pertinent information. The retriever identifies the closest entries to the question and fetches these corresponding textual content segments. The following step is to insert these retrieved segments into the context window of the LLM as supplementary context. The LLM gives the reply primarily based on the consumer’s enter in addition to the retrieved information. RAG affords vital benefits:

- Grounded solutions: It combats hallucination by counting on precise paperwork for solutions.

- Up-to-date information: It grants the mannequin entry to recent info or proprietary information with out going by way of your complete retraining course of.

- Scalability: The mannequin isn’t required to carry every thing in reminiscence directly; it retrieves solely what is critical.

For instance, allow us to take an AI that summarizes analysis papers. RAG might allow it to get related tutorial papers, which might then be fed to the LLM. This hybrid system merges transitional reminiscence with lasting reminiscence, yielding tremendously highly effective outcomes.

Episodic, Semantic & Procedural Reminiscence in LLMs

Cognitive science phrases are steadily utilized by researchers to characterize LLM reminiscence. They steadily categorize reminiscence into three varieties: semantic, episodic, and procedural reminiscence:

- Semantic Reminiscence: This represents the stock or storage of info and common data pertaining to the mannequin. One sensible side of that is that it includes exterior data bases or doc shops. The LLM could have gained intensive data throughout the coaching section. Nevertheless, the most recent or most detailed info are in databases.

- Episodic Reminiscence: It includes particular person occasions or dialogue historical past. An LLM makes use of its episodic reminiscence to maintain monitor of what simply happened in a dialog. This reminiscence gives the reply to inquiries like “What was spoken earlier on this session?”

- Procedural Reminiscence: That is the algorithm the mannequin has acquired on how you can act. Within the case of LLM, procedural reminiscence accommodates the system immediate and the foundations or heuristics that the mannequin is given. For instance, instructing the mannequin to “At all times reply in bullet factors” or “Be formal” is equal to setting the procedural reminiscence.

How LLM Reminiscence Works in Actual Programs

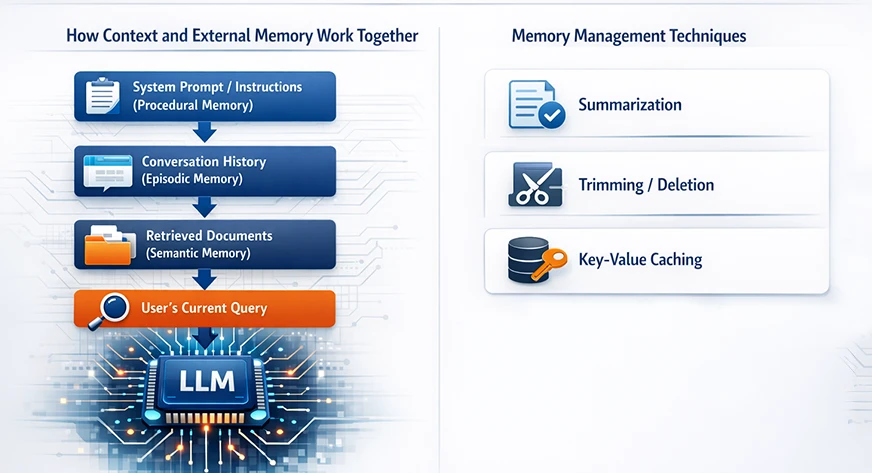

In creating an LLM system with reminiscence capabilities, the builders incorporate the context and the exterior storage within the mannequin’s structure in addition to within the immediate design.

How Context and Exterior Reminiscence Work Collectively

The reminiscence of huge language fashions isn’t considered a unitary ingredient. Slightly, it outcomes from the mixed interactivity of consideration, embeddings, and exterior retrieval programs. Sometimes, it accommodates:

- A system immediate or directions (a part of procedural reminiscence).

- The dialog historical past (contextual/episodic reminiscence).

- Any retrieved exterior paperwork (semantic/persistent reminiscence).

- The consumer’s present question.

All this info is then merged into one immediate that’s inside the context window.

Reminiscence Administration Strategies

The mannequin could be simply defeated by uncooked reminiscence, even when the structure is nice. Engineers make use of numerous strategies to manage the reminiscence in order that the mannequin stays environment friendly:

- Summarization: As a substitute of preserving complete transcripts of prolonged discussions, the system can do a abstract of the sooner components of the dialog at common intervals.

- Trimming/Deletion: Essentially the most fundamental method is to do away with messages which might be outdated or not related. For example, while you exceed the preliminary 100 messages in a chat, you possibly can do away with the oldest ones if they’re now not wanted. Hierarchical Group: Reminiscence could be organized by subject or time. For instance, the older conversations could be categorized by subject after which stored as a narrative, whereas the brand new ones are stored verbatim.

- Key-Worth Caching: On the mannequin’s aspect, Transformers apply a way named KV (key-value) caching. KV caching doesn’t improve the mannequin’s data, nevertheless it makes the lengthy context sequence era quicker by reusing earlier computations.

Challenges & Limitations of LLM Reminiscence

The addition of reminiscence to giant language fashions is a big benefit, nevertheless it additionally comes with a set of recent difficulties. Among the many high issues are the price of computation, hallucinations, and privateness points.

Computational Bottlenecks & Prices

Reminiscence is each extremely efficient and really expensive. Each the lengthy context home windows and reminiscence retrieval are the principle causes for requiring extra computation. To offer a tough instance, doubling the context size roughly quadruples the computation for the eye layers of the Transformer. In actuality, each further token or reminiscence lookup makes use of each GPU and CPU energy.

Hallucination & Context Misalignment

One other subject is the hallucination. This case arises when the LLM provides out improper info that’s nonetheless convincing. For example, if the exterior data base has outdated and outdated information, the LLM could current an outdated truth as if it had been new. Or, if the retrieval step fetches a doc that’s solely loosely associated to the subject, the mannequin could find yourself deciphering it into a solution that’s totally totally different.

Privateness & Moral Concerns

Protecting dialog historical past and private information creates severe issues concerning privateness. If an LLM retains consumer preferences or details about the consumer that’s of a private or delicate nature, then such information should be handled with the very best stage of safety. Truly, the designers need to observe the laws (comparable to GDPR) and the practices which might be thought-about greatest within the business. Which means they need to get the consumer’s consent for reminiscence, holding the minimal doable information, and ensuring that one consumer’s reminiscences are by no means blended with one other’s.

Additionally Learn: What’s Mannequin Collapse? Examples, Causes and Fixes

Conclusion

LLM reminiscence isn’t just one function however moderately a complicated system that has been designed with nice care. It mimics good recall by merging context home windows, exterior retrieval, and architectural design choices. The fashions nonetheless preserve their fundamental core of being stateless, however the present reminiscence programs give them an impression of being persistent, contextual, and adaptive.

With the developments in analysis, LLM reminiscence will more and more develop into extra human-like in its effectivity, selectivity, and reminiscence traits. A deep comprehension of the working of those programs will allow the builders to create AI functions that may be capable of bear in mind what’s necessary, with out the drawbacks of precision, value, or belief.

Continuously Requested Questions

A. LLMs don’t bear in mind previous conversations by default. They’re stateless programs that generate responses solely from the data included within the present immediate. Any obvious reminiscence comes from dialog historical past or exterior information that builders explicitly cross to the mannequin.

A. LLM reminiscence refers back to the strategies used to offer giant language fashions with related previous info. This contains context home windows, dialog historical past, summaries, vector databases, and retrieval programs that assist fashions generate coherent and context-aware responses.

A. A context window defines what number of tokens an LLM can course of directly. Reminiscence is broader and contains how previous info is saved, retrieved, summarized, and injected into the context window throughout every mannequin name.

A. Retrieval-Augmented Era (RAG) improves LLM reminiscence by retrieving related paperwork from an exterior data base and including them to the immediate. This helps scale back hallucinations and permits fashions to make use of up-to-date or personal info with out retraining.

A. Most LLMs are stateless by design. Every request is processed independently except exterior reminiscence programs are used. Statefulness is simulated by storing and re-injecting dialog historical past or retrieved data with each request.

Login to proceed studying and luxuriate in expert-curated content material.

![How Does LLM Reminiscence Work? [Explained in 2 Minutes] How Does LLM Reminiscence Work? [Explained in 2 Minutes]](https://i0.wp.com/cdn.analyticsvidhya.com/wp-content/uploads/2026/01/LLM.webp?w=696&resize=696,0&ssl=1)