This can be a visitor submit by Jeffrey Wang, Co-Founder and Chief Architect at Amplitude in partnership with AWS.

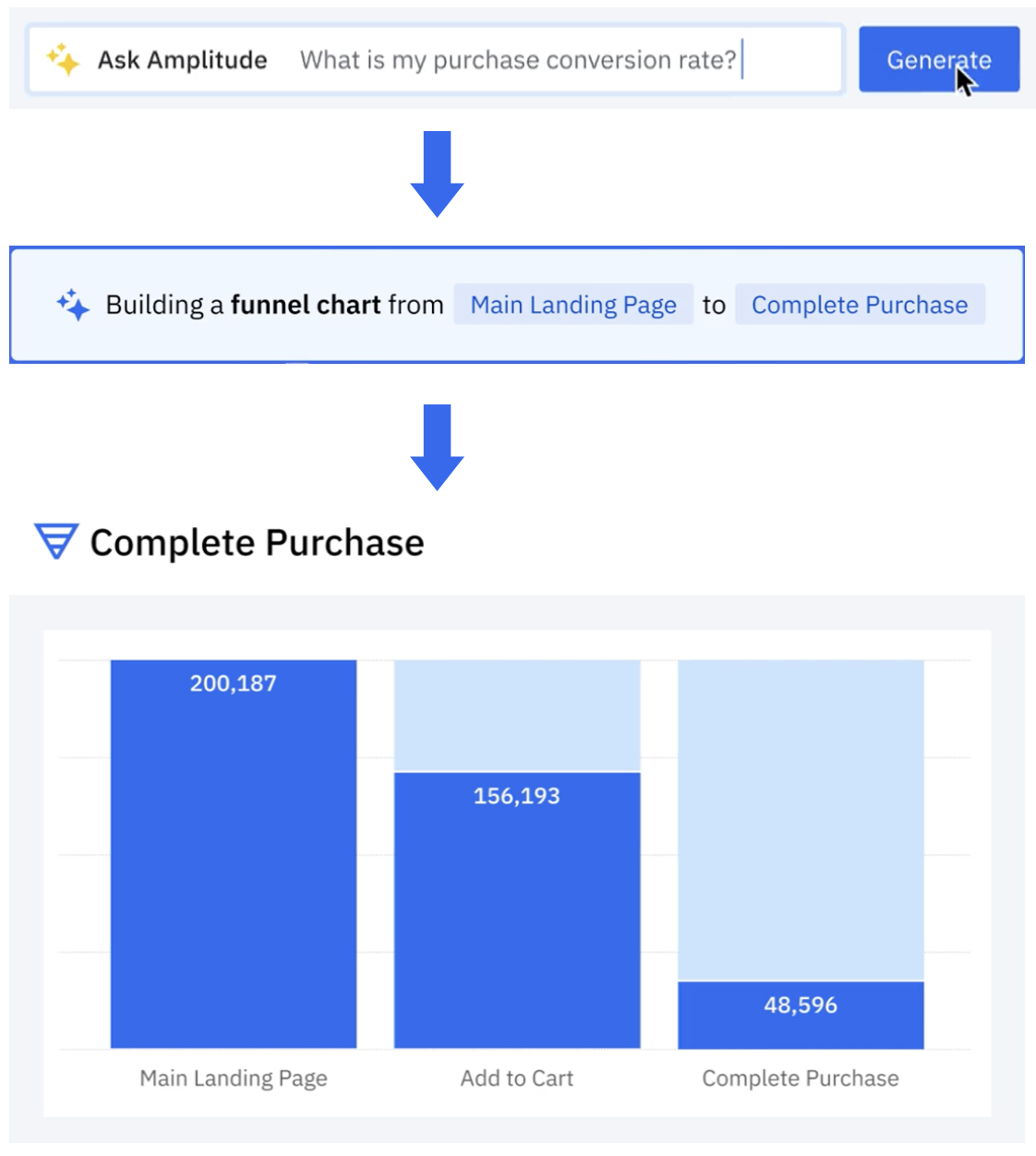

Amplitude is a product and buyer journey analytics platform. Our prospects needed to ask deep questions on their product utilization. Ask Amplitude is an AI assistant that makes use of massive language fashions (LLMs). It combines schema search and content material search to supply a custom-made, correct, low latency, pure language-based visualization expertise to finish prospects. Ask Amplitude has data of a person’s product, taxonomy, and language to border an evaluation. It makes use of a collection of LLM prompts to transform the person’s query right into a JSON definition that may be handed to a customized question engine. The question engine then renders a chart with the reply, as illustrated within the following determine.

Amplitude’s search structure advanced to scale, simplify, and cost-optimize for our prospects, by implementing semantic search and Retrieval Augmented Era (RAG) powered by Amazon OpenSearch Service. On this submit, we stroll you thru Amplitude’s iterative architectural journey and discover how we deal with a number of essential challenges in constructing a scalable semantic search and analytics platform.

Our major focus was on enabling semantic search capabilities and pure language chart technology at scale, whereas implementing a cheap multi-tenant system with granular entry controls. A key goal was optimizing the end-to-end search latency to ship fast outcomes. We additionally tackled the problem of empowering finish prospects to securely search and use their present charts and content material for extra subtle analytical inquiries. Moreover, we developed options to deal with real-time knowledge synchronization at scale, ensuring fixed updates to incoming knowledge could possibly be processed whereas sustaining persistently low search latency throughout your entire system.

RAG and vector search with Ask Amplitude

Let’s take a quick take a look at why Ask Amplitude makes use of RAG. Amplitude collects omnichannel buyer knowledge. Our finish prospects ship knowledge on person actions which can be carried out of their platforms. These actions are recorded as user-generated occasions. For instance, within the case of retail and ecommerce prospects, the sorts of person occasions embody “product search,” “add to cart,” “checked out,” “transport choice,” “buy,” and extra. These occasions assist outline the shopper’s database schema, outlining the tables, columns, and relationships between them. Let’s think about a person query comparable to “How many individuals used 2-day transport?” The LLM wants to find out which parts of the captured person occasions are pertinent to formulating an correct response to the question. When customers ask a query to Ask Amplitude, step one is to filter the related occasions from OpenSearch Service. Fairly than feeding all occasion knowledge to the LLM, we take a extra selective method for each price and accuracy causes. As a result of LLM utilization is billed primarily based on token depend, sending full occasion knowledge could be unnecessarily costly. Extra importantly, offering an excessive amount of context can degrade the LLM’s efficiency—when confronted with 1000’s of schema parts, the mannequin struggles to reliably determine and concentrate on the related data. This data overload can distract the LLM from the core query, probably resulting in hallucinations or inaccurate responses. This is the reason RAG is the popular method. To retrieve essentially the most related objects from the product utilization schema, a vector search is carried out. That is efficient even in conditions when the query won’t discuss with the precise phrases which can be within the buyer’s schema. The next sections stroll via the iterations of Amplitude’s search journey.

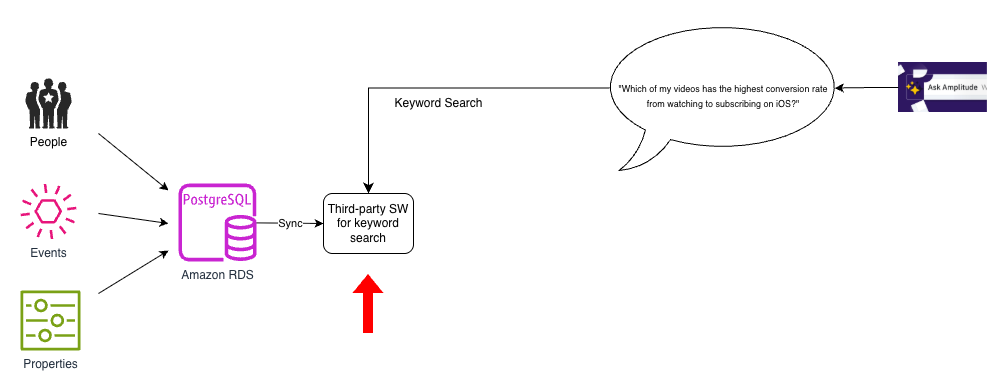

Preliminary answer: No semantic search

We used Amazon Relational Database Service (Amazon RDS) for PostgreSQL as the first database to retailer our folks, occasions, and properties knowledge. Nevertheless, as the next diagram exhibits, we had a separate, third-party retailer to implement key phrase search. We had to usher in knowledge from PostgreSQL to this third-party search index and preserve it up to date.

This structure was easy however had two key shortcomings: there have been no pure language capabilities in our search index, and the search index supported solely key phrase search.

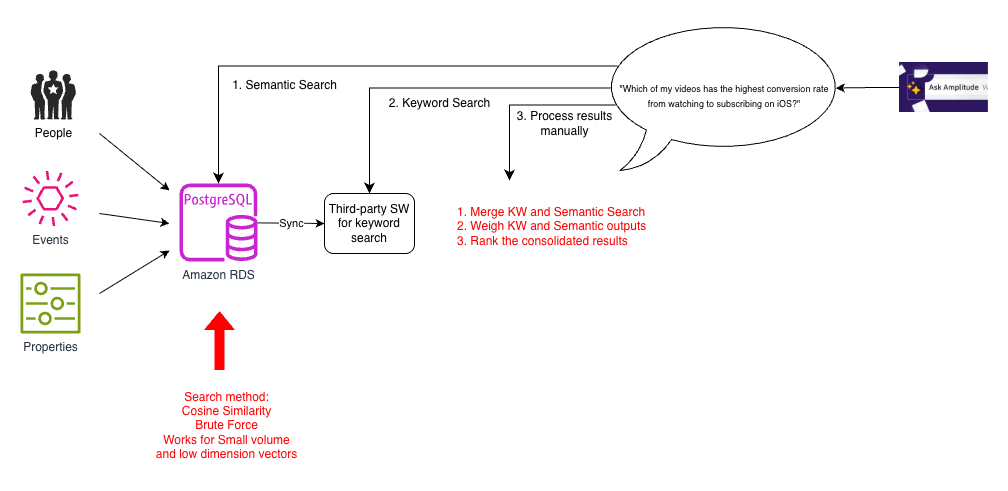

Iteration 1: Brute drive cosine similarity

To enhance our search functionality, we thought-about a number of prototypes. As a result of knowledge volumes for many prospects weren’t very massive, it was fast to construct a vector search prototype utilizing PostgreSQL. We remodeled person interplay knowledge into vector embeddings and used array cosine similarity to compute similarity metrics throughout the dataset. This alleviated the necessity for customized similarity computation. The vector embeddings captured nuanced person conduct patterns utilizing PostgreSQL capabilities with out further infrastructure overhead. That is typically referred to as the brute drive methodology, the place an incoming question is matched towards all embeddings to seek out its high (Ok) neighbors by a distance measure (cosine similarity on this case). The next diagram illustrates this structure.

Enabling semantic search was a giant enchancment over conventional seek for customers who may use completely different phrases to discuss with the identical ideas, comparable to “hours of video streamed” or “complete watch time”. Nevertheless, though this labored for small datasets, it was gradual as a result of the brute drive methodology needed to compute cosine similarity for all pairs of vectors. This was amplified because the variety of parts within the occasions schema, the complexity of questions, and expectations of high quality grew. Moreover, Ask Amplitude solutions wanted to mix each semantic and key phrase search. To help this, every search question needed to be carried out as a three-step course of involving a number of calls to separate databases:

- Retrieve the semantic search outcomes from PostgreSQL.

- Retrieve the key phrase search outcomes from our search index.

- Within the utility, semantic search outcomes and key phrase search outcomes have been mixed utilizing pre-assigned weights, and this output was dispatched to the Ask Amplitude UI.

This multi-step guide method made the search course of extra advanced.

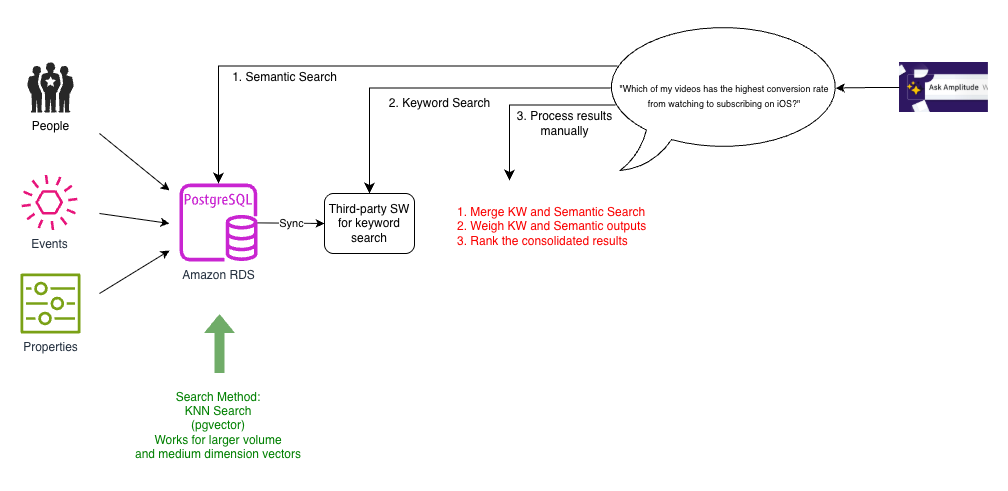

Iteration 2: ANN search with pgvector

As Amplitude’s buyer base grew, Ask Amplitude wanted to scale to accommodate extra prospects and bigger schemas. The objective was not simply to reply the query at hand, however to show the person find out how to construct an end-to-end evaluation by guiding them iteratively. To this finish, the embeddings wanted to retailer and index contextually wealthy semantic content material. The crew experimented with larger, greater dimensionality embeddings and had anecdotal observations of vector dimensionality showing to affect the effectiveness of the retrieval. One other requirement was to help multilingual embeddings.

To help a extra scalable k-NN search, the crew switched to pgvector, a PostgreSQL extension that gives highly effective functionalities for with vectors in high-dimensional house. The next diagram illustrates this structure.

Pgvector was in a position to help k-nearest neighbor (k-NN) similarity seek for bigger dimensionality vectors. Because the variety of vectors grew, we switched to indexes that allowed approximate nearest neighbor (ANN) search, comparable to HNSW and IVFFlat.

For purchasers with bigger schemas, calculating brute drive cosine similarity was gradual and costly. We discovered a efficiency distinction after we moved to ANN enabled by pgvector. Nevertheless, we nonetheless wanted to cope with the complexity launched by the three-step technique of querying PostgreSQL for semantic search, a separate search index for key phrase search, after which stitching all of it collectively.

Iteration 3: Twin sync to key phrase and semantic search with OpenSearch Service

Because the variety of prospects grew, so did the variety of schemas. There have been lots of of thousands and thousands of schema entries within the database, so we sought a performant, scalable, and cost-effective answer for k-NN search. We explored OpenSearch Service and Pinecone. We selected OpenSearch Service as a result of we may mix key phrase and vector search capabilities. This was handy for 4 causes:

- Easier structure – Positioning semantic search as a functionality in an present search answer, as we noticed in OpenSearch Service, makes for an easier structure than treating it as a separate specialised service.

- Decrease-latency search – The power to successfully set up and catalog search knowledge was elementary to how we generated solutions. Augmenting semantic search to our present pipeline by combining each into one question offered decrease latency querying.

- Decreased want for knowledge synchronization – Conserving the database in sync with the search index was essential to the accuracy and high quality of solutions. With the options that we checked out, we must preserve two synchronization pipelines, one for key phrase search index and the opposite for a semantic search index, complicating the structure and growing the probabilities of experiencing out-of-sync outcomes between key phrase and semantic search outcomes. Synchronizing them into one place was simpler than synchronizing them into a number of locations after which combining the indicators at question time. With a mixed key phrase and vector search capabilities of OpenSearch Service, we now wanted to synchronize just one major database on PostgreSQL with the search index.

- Minimized efficiency affect to supply knowledge updates – We discovered that synchronizing knowledge to a different search index is a fancy downside as a result of our dataset modifications continually. With each new buyer, we had lots of of updates each second. We had to ensure the latency of those updates wasn’t impacted by the sync course of. Collocating search knowledge with vector embeddings obviated the necessity for a number of sync processes. This helped us keep away from further latency within the major database, as a result of sync processes encroaching upon database replace site visitors.

Though our earlier third-party search engine specialised in quick ecommerce search, this wasn’t aligned with Amplitude’s particular wants. By migrating to OpenSearch Service, we simplified our structure by decreasing two synchronization processes to 1. We phased out the present search platform step by step. This meant we briefly continued to have two synchronization processes, one with present platform and one other to the mixed key phrase and semantic search index on OpenSearch Service, as proven within the following diagram.

Along with the professionals of k-NN search recognized within the earlier iteration, shifting to OpenSearch Service helped us notice three key advantages:

- Decreased latency – As an alternative of collocating the embeddings with major knowledge, we have been in a position to collocate with our search index. The search index is the place our utility wanted to run our queries to select person occasions which can be related to the query being requested and ship this as context despatched to the LLM. As a result of the search textual content, metadata, and embeddings have been multi function place, we would have liked just one hop for all our search necessities, thereby enhancing latency.

- Decreased compute energy – We had wherever between 5,000–20,000 parts within the person occasions schema. We didn’t have to ship your entire schema to the LLM, as a result of every person question required solely 20–50 related parts. With the environment friendly filtering capabilities of OpenSearch Service, we have been in a position to slim down the vector search house by utilizing tenant-specific metadata, considerably decreasing compute necessities throughout our multi-tenant atmosphere.

- Improved scalability – With OpenSearch Service, we may benefit from further capabilities comparable to HNSW product quantization (PQ) and byte quantization. Byte quantization made it potential to deal with the size of thousands and thousands of vector entries with minimal discount in recall, however with enchancment to price and latency.

Nevertheless, on this interim answer, our knowledge wasn’t absolutely migrated to OpenSearch Service but. We nonetheless had the previous pipeline together with the brand new pipeline, and needed to carry out twin syncing. This was solely non permanent, as we phased out the previous search index, and the previous pipeline served as a baseline to match with by way of efficiency and recall.

Iteration 4: Hybrid search with OpenSearch Service

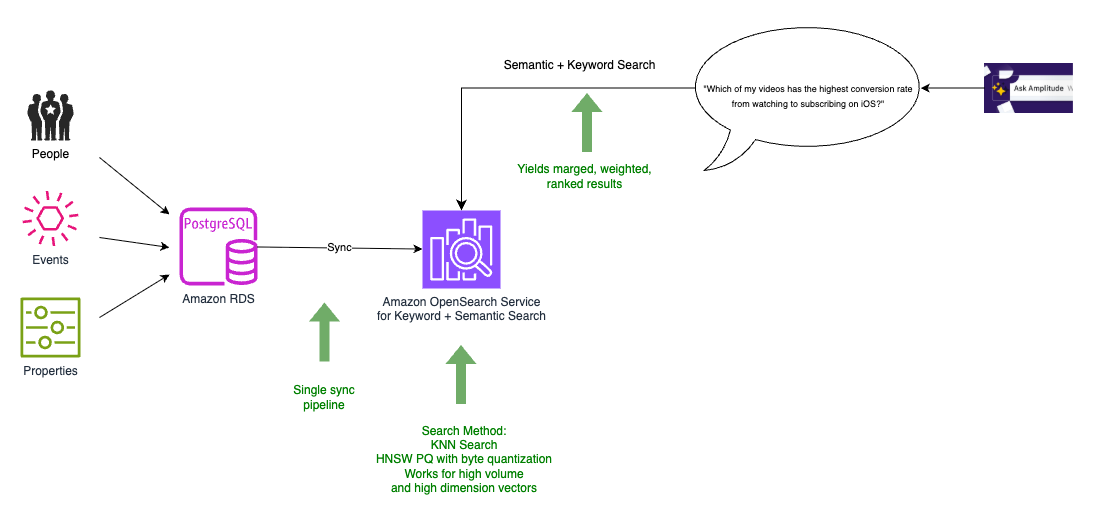

Within the remaining structure, we have been in a position to migrate all our knowledge to OpenSearch Service, which additionally served as our vector database, as proven within the following diagram.

We now needed to carry out only one knowledge synchronization from the PostgreSQL database to the mixed search and vector index, permitting the assets on the database to concentrate on transactional site visitors. OpenSearch Service offers merging, weighting, and rating of the search outcomes as a part of the identical question. This obviated the necessity to implement them as a separate module in our utility, successfully leading to a single, scalable hybrid search (mixed keyword-based (lexical) search and vector-based (semantic) search). With OpenSearch Service, we may additionally experiment with the brand new integration with Amazon Personalize.

Evolving RAG to attract upon user-generated content material

Our prospects needed to ask deeper questions on their product utilization that couldn’t be answered simply by trying on the schema (the construction and names of the info columns) alone. Merely realizing the column names in a database doesn’t essentially reveal the that means, values, or correct interpretation of that knowledge. The schema alone offers an incomplete image. A naïve method could be to index and search all knowledge values as an alternative of looking simply the schema. Amplitude avoids this for scalability causes. The cardinality and quantity of occasion knowledge (probably trillions of occasion information) makes indexing all values price prohibitive. Amplitudes hosts about 20 million charts and dashboards throughout all Amplitude prospects. This user-generated content material is effective. We noticed that we will higher perceive the that means and context by analyzing how different customers have beforehand visualized knowledge.For instance, if a person asks about “2-day transport,” Amplitude first checks if the info schema accommodates columns with related names like “transport” or “transport methodology”. If such columns exist, it then examines the potential values in these columns to seek out values associated to 2-day transport. Amplitude additionally searches user-created content material (charts, dashboards, and extra) to see if anybody else on the firm has already visualized knowledge associated to 2-day transport. In that case, it could use that present chart as a reference for find out how to correctly filter and analyze the info to reply the query. To go looking this content material effectively, Amplitude employs a hybrid method combining key phrase and vector similarity (semantic) searches. For tenant isolation and pruning, we use metadata to filter by buyer first, after which vector search.

Conclusion

On this submit, we confirmed you ways Amplitude constructed Ask Amplitude, an AI assistant utilizing OpenSearch Service as a vector database to allow pure language queries of product analytics knowledge. We advanced our system via 4 iterations, in the end consolidating key phrase and semantic search into OpenSearch Service, which simplified our structure from a number of sync pipelines to 1, diminished question latency by combining search operations, and enabled environment friendly multi-tenant vector search at scale utilizing options like HNSW PQ and byte quantization. We prolonged the system past schema search to index 20 million user-generated charts and dashboards, utilizing hybrid search to supply richer context for answering buyer questions on product utilization.

As pure language interfaces grow to be more and more prevalent, Amplitude’s iterative journey demonstrates the potential for harnessing LLMs and RAG utilizing vector databases comparable to OpenSearch Service to unlock wealthy conversational buyer experiences. By step by step transitioning to a unified search answer that mixes key phrase and semantic vector search capabilities, Amplitude overcame scalability and efficiency challenges whereas decreasing structure complexity. The ultimate structure utilizing OpenSearch Service enabled environment friendly multi-tenancy and fine-grained entry management and likewise facilitated low-latency hybrid search. Amplitude is ready to ship extra pure and intuitive analytics capabilities to its prospects by producing deeper insights and contextualizing knowledge.

To be taught extra about how Ask Amplitude helps you categorical Amplitude-related ideas and questions in pure language, discuss with Ask Amplitude. To get began with OpenSearch Service as a vector database, discuss with Amazon OpenSearch Service as a Vector Database.

Concerning the authors