AI agents are reshaping software development, from writing code to carrying out complex instructions. Yet LLM-based agents are prone to errors and often perform poorly on complicated, multi-step tasks. Reinforcement learning (RL) is an approach where AI systems learn to make optimal decisions by receiving rewards or penalties for their actions, improving through trial and error. RL can help agents improve, but it typically requires developers to extensively rewrite their code. This discourages adoption, even though the data these agents generate could significantly boost performance through RL training.

To address this, a research team from Microsoft Research Asia – Shanghai has introduced Agent Lightning. This open-source framework makes AI agents trainable through RL by separating how agents execute tasks from model training, allowing developers to add RL capabilities with virtually no code modification.

Capturing agent behavior for training

Agent Lightning converts an agent’s experience into a format that RL can use by treating the agent’s execution as a sequence of states and actions, where each state captures the agent’s status and each LLM call is an action that moves the agent to a new state.

This approach works for any workflow, no matter how complex. Whether it involves multiple collaborating agents or dynamic tool use, Agent Lightning breaks it down into a sequence of transitions. Each transition captures the LLM’s input, output, and reward (Figure 1). This standardized format means the data can be used for training without any additional steps.
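As a minimal sketch of what such a transition record might look like, the snippet below converts an ordered log of LLM calls into (input, output, reward) records. The `Transition` class, the `trace_to_transitions` helper, the `prompt`/`response` keys, and the sample episode are all hypothetical names chosen for illustration; they are not Agent Lightning's actual data model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Transition:
    """One LLM call viewed as an RL step (hypothetical structure)."""
    llm_input: str                   # the prompt the agent sent, derived from its state
    llm_output: str                  # the model's response, i.e. the action
    reward: Optional[float] = None   # filled in later; here only the last step gets one


def trace_to_transitions(calls: List[dict], final_reward: float) -> List[Transition]:
    """Turn an ordered log of LLM calls into transitions.

    Assumes each log entry has 'prompt' and 'response' keys and that the
    environment only scores the finished task.
    """
    transitions = [Transition(c["prompt"], c["response"]) for c in calls]
    if transitions:
        transitions[-1].reward = final_reward  # terminal reward on the last call
    return transitions


# A made-up two-call text-to-SQL episode.
calls = [
    {"prompt": "Write SQL: count users", "response": "SELECT COUNT(*) FROM user"},
    {"prompt": "Check SQL: SELECT COUNT(*) FROM user", "response": "Table is 'users'; rewrite."},
]
episode = trace_to_transitions(calls, final_reward=1.0)
```

Because each record stands alone, the same structure covers single-agent and multi-agent workflows: every call, whichever agent made it, simply becomes one more entry in the list.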

Hierarchical reinforcement learning

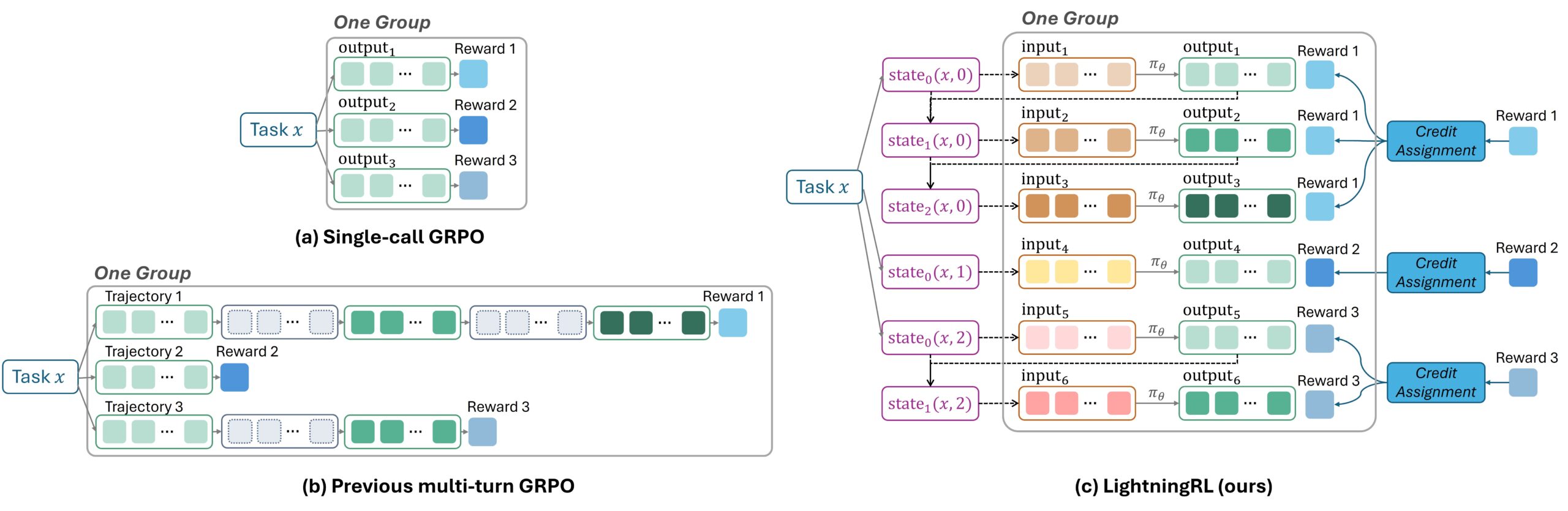

Traditional RL training for agents that make multiple LLM requests involves stitching together all content into one long sequence and then identifying which parts should be learned and which ignored during training. This approach is difficult to implement and can create excessively long sequences that degrade model performance.
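To make the stitching approach concrete, here is a rough sketch of how a single long sequence and its loss mask could be built. Whitespace splitting stands in for a real tokenizer, and the `stitch_with_mask` helper and the sample segments are assumptions made for this illustration only.

```python
from typing import List, Tuple


def stitch_with_mask(segments: List[Tuple[str, bool]]) -> Tuple[List[str], List[int]]:
    """Concatenate segments into one sequence plus a per-token loss mask.

    Each segment is (text, generated_by_llm). Tokens the LLM produced get
    mask 1 (learned); prompts and tool outputs get mask 0 (ignored).
    """
    tokens: List[str] = []
    mask: List[int] = []
    for text, generated_by_llm in segments:
        piece = text.split()  # stand-in for a real tokenizer
        tokens.extend(piece)
        mask.extend([1 if generated_by_llm else 0] * len(piece))
    return tokens, mask


segments = [
    ("user: count the users", False),
    ("SELECT COUNT(*) FROM users", True),
    ("tool: 42", False),
    ("There are 42 users", True),
]
tokens, mask = stitch_with_mask(segments)
```

Even in this toy case the sequence grows with every call and tool result, and the mask has to track who produced each token, which hints at why the approach becomes hard to maintain for longer workflows.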

Instead, Agent Lightning’s LightningRL algorithm takes a hierarchical approach. After a task completes, a credit assignment module determines how much each LLM request contributed to the outcome and assigns it a corresponding reward. These independent steps, now paired with their own reward scores, can be used with any existing single-step RL algorithm, such as Proximal Policy Optimization (PPO) or Group Relative Policy Optimization (GRPO) (Figure 2).
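The two levels can be sketched as follows. The credit assignment rule used here, giving every call in an episode the same final reward, is only one simple choice assumed for illustration, and the article does not specify which rule LightningRL uses. The second function shows a GRPO-style group-relative advantage, computed by standardizing rewards across several attempts at the same task. Both function names are hypothetical.

```python
from statistics import mean, pstdev
from typing import List


def assign_credit(num_calls: int, final_reward: float) -> List[float]:
    """Assumed rule: every LLM call in the episode receives the final reward."""
    return [final_reward] * num_calls


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantage: standardize rewards within a group of attempts."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Three attempts at the same task, with 2, 3, and 1 LLM calls respectively.
episode_rewards = [1.0, 0.0, 1.0]
calls_per_episode = [2, 3, 1]

advantages = group_relative_advantages(episode_rewards)

# Each call inherits its episode's advantage and becomes an independent sample.
per_call = [
    assign_credit(n, adv) for n, adv in zip(calls_per_episode, advantages)
]
```

After this step, every call is a short, self-contained training sample with its own score, which is the form single-step algorithms such as PPO and GRPO already expect.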

This design offers several benefits. It remains fully compatible with widely used single-step RL algorithms, allowing existing training methods to be applied without modification. Organizing data as a sequence of independent transitions lets developers flexibly construct the LLM input as needed, supporting complex behaviors like agents that use multiple tools or work with other agents. Additionally, by keeping sequences short, the approach scales cleanly and keeps training efficient.

Agent Lightning as middleware

Agent Lightning serves as middleware between RL algorithms and agent environments, providing modular components that enable scalable RL through standardized protocols and well-defined interfaces.

An agent runner manages the agents as they complete tasks. It distributes work and collects and stores the results and progress data. It operates separately from the LLMs, enabling them to run on different resources and scale to support multiple agents running concurrently.

An algorithm trains the models and hosts the LLMs used for inference and training. It orchestrates the overall RL cycle, managing which tasks are assigned, how agents complete them, and how models are updated based on what the agents learn. It typically runs on GPU resources and communicates with the agent runner through shared protocols.

The LightningStore serves as the central repository for all data exchanges within the system. It provides standardized interfaces and a shared format, ensuring that the different components can work together and enabling the algorithm and agent runner to communicate effectively.

All RL cycles follow two steps: (1) Agent Lightning collects agent execution data (called “spans”) and stores it in the data store; (2) it then retrieves the required data and sends it to the algorithm for training. Through this design, the algorithm can delegate tasks asynchronously to the agent runner, which completes them and reports the results back (Figure 4).
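The two-step cycle can be sketched with Python's standard `asyncio` library. This is a toy model under stated assumptions, not the real LightningStore interface: `InMemoryStore`, `agent_runner`, `algorithm`, and the span fields are hypothetical, and the "agent" simply upper-cases its input.

```python
import asyncio
from typing import Dict, List


class InMemoryStore:
    """Hypothetical stand-in for the central store: a task queue plus collected spans."""

    def __init__(self) -> None:
        self.tasks: asyncio.Queue = asyncio.Queue()
        self.spans: List[Dict] = []


async def agent_runner(store: InMemoryStore) -> None:
    """Pull tasks, run a toy agent, and write one span per task back to the store."""
    while True:
        task = await store.tasks.get()
        answer = task["question"].upper()  # placeholder for real agent work
        store.spans.append({"task_id": task["id"], "output": answer, "reward": 1.0})
        store.tasks.task_done()


async def algorithm(store: InMemoryStore, questions: List[str]) -> List[Dict]:
    """Step 1: enqueue tasks and wait for spans. Step 2: fetch them for training."""
    for i, q in enumerate(questions):
        store.tasks.put_nowait({"id": i, "question": q})
    await store.tasks.join()    # the runner works while the algorithm waits
    batch = list(store.spans)   # data a trainer would consume
    store.spans.clear()
    return batch


async def main() -> List[Dict]:
    store = InMemoryStore()
    runner = asyncio.create_task(agent_runner(store))
    batch = await algorithm(store, ["what is rl?", "what is a span?"])
    runner.cancel()
    return batch


batch = asyncio.run(main())
```

The algorithm and the runner never call each other directly; they only read from and write to the store, which is what lets each side be replaced or scaled without touching the other.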

One key advantage of this approach is its algorithmic flexibility. The system makes it easy for developers to customize how agents learn, whether they’re defining different rewards, capturing intermediate data, or experimenting with different training approaches.

Another advantage is resource efficiency. Agentic RL systems are complex, integrating agentic systems, LLM inference engines, and training frameworks. By separating these components, Agent Lightning makes this complexity manageable and allows each part to be optimized independently.

A decoupled design allows each component to use the hardware that suits it best. The agent runner can use CPUs while model training uses GPUs. Each component can also scale independently, improving efficiency and making the system easier to maintain. In practice, developers can keep their existing agent frameworks and switch model calls to the Agent Lightning API without changing their agent code (Figure 5).
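The idea of redirecting model calls while leaving agent logic untouched can be illustrated with a small wrapper. This is a sketch of the general pattern only: `RecordingLLM`, `my_agent`, and `fake_model` are hypothetical names, and none of them belong to the Agent Lightning API.

```python
from typing import Callable, Dict, List


def my_agent(llm: Callable[[str], str], question: str) -> str:
    """Existing agent logic: two model calls, unaware of any training system."""
    draft = llm(f"Answer briefly: {question}")
    return llm(f"Polish this answer: {draft}")


class RecordingLLM:
    """Hypothetical wrapper that forwards calls to a backend and logs each one."""

    def __init__(self, backend: Callable[[str], str]) -> None:
        self.backend = backend
        self.spans: List[Dict[str, str]] = []

    def __call__(self, prompt: str) -> str:
        response = self.backend(prompt)
        self.spans.append({"input": prompt, "output": response})
        return response


def fake_model(prompt: str) -> str:
    """Toy backend so the example runs without a real LLM."""
    return f"<reply to: {prompt}>"


traced = RecordingLLM(fake_model)
result = my_agent(traced, "What is a span?")
```

Here `my_agent` is identical whether it receives `fake_model` or the traced wrapper; only the object passed in as the model changes, and the recorded calls become available as training data.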

Evaluation across three real-world scenarios

Agent Lightning was tested on three distinct tasks, achieving consistent performance improvements across all scenarios (Figure 6):

Text-to-SQL (LangChain): In a system with three agents handling SQL generation, checking, and rewriting, Agent Lightning simultaneously optimized two of them, significantly improving the accuracy of generating executable SQL from natural language queries.

Retrieval-augmented generation (OpenAI Agents SDK implementation): On the multi-hop question-answering dataset MuSiQue, which requires querying a large Wikipedia database, Agent Lightning helped the agent generate more effective search queries and reason better from retrieved content.

Mathematical QA and tool use (AutoGen implementation): For complex math problems, Agent Lightning trained LLMs to more accurately determine when and how to call the tool and integrate the results into their reasoning, increasing accuracy.

Enabling continuous agent improvement

By simplifying RL integration, Agent Lightning can make it easier for developers to build, iterate, and deploy high-performance agents. We plan to expand Agent Lightning’s capabilities to include automatic prompt optimization and additional RL algorithms.

The framework is designed to serve as an open platform where any AI agent can improve through real-world practice. By bridging existing agentic systems with reinforcement learning, Agent Lightning aims to help create AI systems that learn from experience and improve over time.