Try taking a picture of each of North America’s roughly 11,000 tree species, and you’ll have a mere fraction of the millions of photos within nature image datasets. These massive collections of snapshots, ranging from butterflies to humpback whales, are a great research tool for ecologists because they provide evidence of organisms’ unique behaviors, rare conditions, migration patterns, and responses to pollution and climate change.

While comprehensive, nature image datasets aren’t yet as useful as they could be. It’s time-consuming to search these databases and retrieve the images most relevant to your hypothesis. You’d be better off with an automated research assistant, or perhaps artificial intelligence systems called multimodal vision language models (VLMs). They’re trained on both text and images, making it easier for them to pinpoint finer details, like the specific trees in the background of a photo.

But just how well can VLMs assist nature researchers with image retrieval? A team from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), University College London, iNaturalist, and elsewhere designed a performance test to find out. Each VLM’s task: locate and reorganize the most relevant results within the team’s “INQUIRE” dataset, composed of 5 million wildlife pictures and 250 search prompts from ecologists and other biodiversity experts.

On the lookout for that particular frog

In these evaluations, the researchers found that larger, more advanced VLMs, which are trained on far more data, can sometimes get researchers the results they want to see. The models performed reasonably well on straightforward queries about visual content, like identifying debris on a reef, but struggled significantly with queries requiring expert knowledge, like identifying specific biological conditions or behaviors. For example, VLMs found examples of jellyfish on the beach with relative ease, but struggled with more technical prompts like “axanthism in a green frog,” a condition that limits a frog’s ability to produce yellow pigment in its skin.

Their findings indicate that the models need much more domain-specific training data to process difficult queries. MIT PhD student Edward Vendrow, a CSAIL affiliate who co-led work on the dataset in a new paper, believes that with exposure to more informative data, VLMs could one day be great research assistants. “We want to build retrieval systems that find the exact results scientists seek when monitoring biodiversity and analyzing climate change,” says Vendrow. “Multimodal models don’t quite understand more complex scientific language yet, but we believe that INQUIRE will be an important benchmark for tracking how they improve in comprehending scientific terminology and ultimately helping researchers automatically find the exact images they need.”

The team’s experiments illustrated that larger models tended to be more effective for both simpler and more intricate searches due to their expansive training data. They first used the INQUIRE dataset to test whether VLMs could narrow a pool of 5 million images to the top 100 most-relevant results (a step known as “ranking”). For straightforward search queries like “a reef with manmade structures and debris,” relatively large models like SigLIP found matching images, while smaller CLIP models struggled. According to Vendrow, larger VLMs are “only starting to be useful” at ranking tougher queries.
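To make the ranking step concrete, here is a minimal sketch. It assumes, as CLIP-style models such as CLIP and SigLIP do, that the text query and each image are mapped to embedding vectors and compared by cosine similarity. The toy three-dimensional embeddings and the function names are hypothetical; this is not the INQUIRE team’s code.

```python
import heapq
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_top_k(query_emb: Sequence[float],
               image_embs: Sequence[Sequence[float]],
               k: int = 100) -> List[Tuple[int, float]]:
    """Return (image_index, score) pairs for the k images most similar to the query.

    heapq.nlargest avoids fully sorting the collection, which matters when
    the pool holds millions of images.
    """
    scored = ((i, cosine(query_emb, emb)) for i, emb in enumerate(image_embs))
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])


# Hypothetical toy embeddings standing in for real model outputs.
query = [1.0, 0.0, 0.0]
images = [[0.9, 0.1, 0.0], [0.0, 1.0, 0.0], [0.7, 0.0, 0.7], [-1.0, 0.0, 0.0]]
print([i for i, _ in rank_top_k(query, images, k=2)])  # [0, 2]
```

In a real system the embeddings would come from the model itself, and a nearest-neighbor index would usually replace the linear scan over 5 million images.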

Vendrow and his colleagues also evaluated how well multimodal models could re-rank those 100 results, reordering them by how pertinent each image was to a search. In these tests, even huge models trained on more curated data, like GPT-4o, struggled: GPT-4o’s precision score was only 59.6 percent, and that was the highest score achieved by any model.
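The re-ranking stage and its scoring can be sketched as follows. The article does not say exactly which precision metric produced the 59.6 percent figure, so the two shown here, precision@k and average precision@k, are common choices offered as assumptions. The image IDs, the second-stage scores, and the relevance labels are all hypothetical.

```python
from typing import Callable, List, Sequence, Set


def rerank(candidates: Sequence[str], score: Callable[[str], float]) -> List[str]:
    """Reorder first-stage candidates, best first, by a second-stage relevance score."""
    return sorted(candidates, key=score, reverse=True)


def precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top-k results that annotators labeled relevant."""
    if k <= 0:
        return 0.0
    return sum(1 for item in ranked[:k] if item in relevant) / k


def average_precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Average of precision@i over each rank i <= k that holds a relevant item.

    Normalized by min(k, number of relevant items), one common convention.
    """
    hits = 0
    total = 0.0
    for i, item in enumerate(ranked[:k], start=1):
        if item in relevant:
            hits += 1
            total += hits / i
    denom = min(k, len(relevant))
    return total / denom if denom else 0.0


# Hypothetical data: first-stage order, second-stage scores, and annotator labels.
first_stage = ["img_a", "img_c", "img_b", "img_d"]
vlm_scores = {"img_a": 0.2, "img_b": 0.9, "img_c": 0.4, "img_d": 0.8}
relevant = {"img_b", "img_d"}

reranked = rerank(first_stage, vlm_scores.__getitem__)
print(reranked)                                       # ['img_b', 'img_d', 'img_c', 'img_a']
print(precision_at_k(first_stage, relevant, 2))       # 0.0
print(precision_at_k(reranked, relevant, 2))          # 1.0
print(average_precision_at_k(reranked, relevant, 4))  # 1.0
```

Re-ranking can only reorder the candidates the first stage returned. It cannot recover a relevant image that ranking left out of the top 100, so both stages limit the final result.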

The researchers presented these results at the Conference on Neural Information Processing Systems (NeurIPS) earlier this month.

Asking for INQUIRE

The INQUIRE dataset includes search queries based on discussions with ecologists, biologists, oceanographers, and other experts about the types of images they’d look for, including animals’ unique physical conditions and behaviors. A team of annotators then spent 180 hours searching the iNaturalist dataset with these prompts, carefully combing through roughly 200,000 results to label 33,000 matches that fit the prompts.

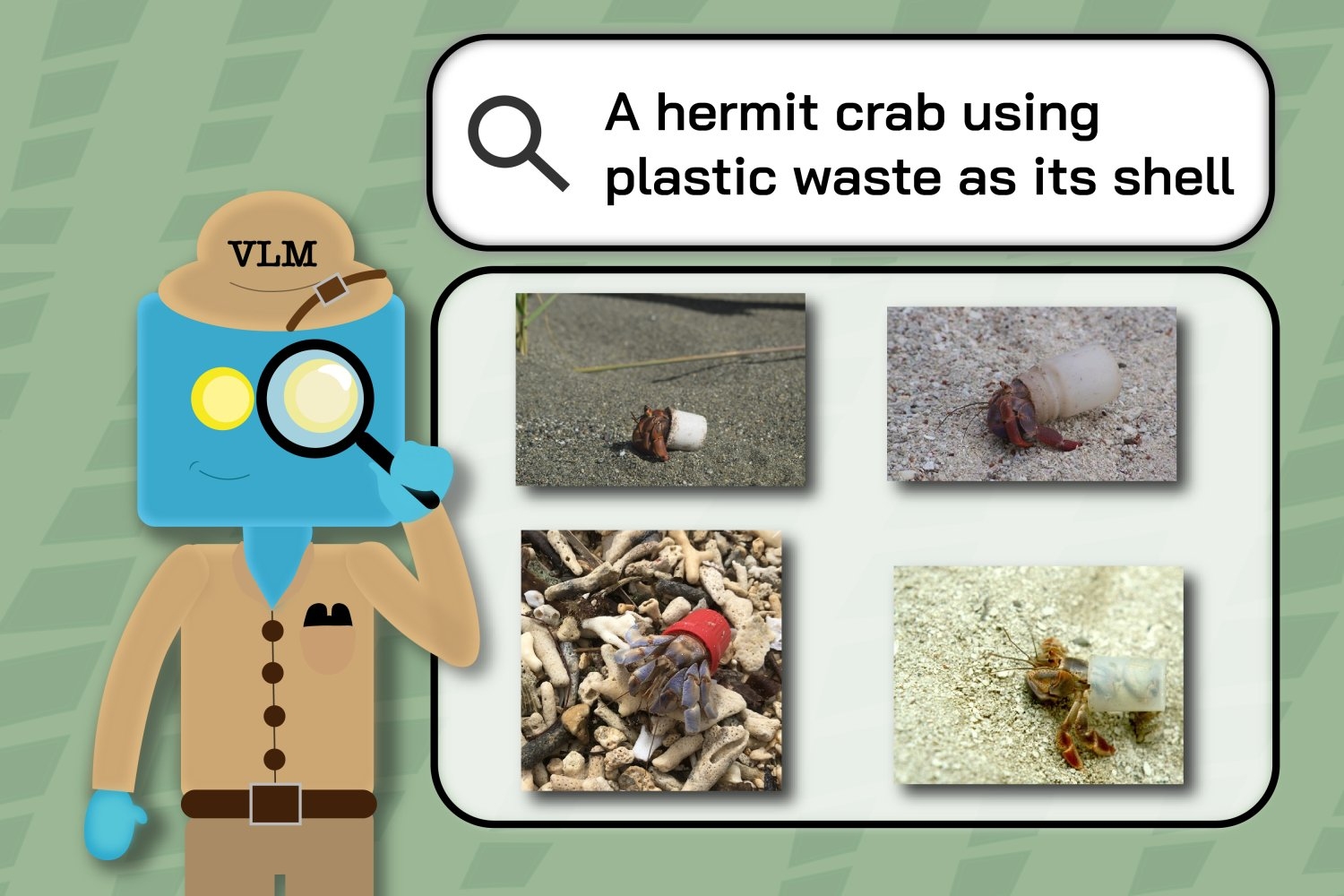

For instance, the annotators used queries like “a hermit crab using plastic waste as its shell” and “a California condor tagged with a green ‘26’” to identify the subsets of the larger image dataset that depict these specific, rare events.

Then, the researchers used the same search queries to see how well VLMs could retrieve iNaturalist images. The annotators’ labels revealed when the models struggled to understand scientists’ keywords, since their results included images previously tagged as irrelevant to the search. For example, VLMs’ results for “redwood trees with fire scars” sometimes included images of trees without any markings.

“This is careful curation of data, with a focus on capturing real examples of scientific inquiries across research areas in ecology and environmental science,” says Sara Beery, the Homer A. Burnell Career Development Assistant Professor at MIT, a CSAIL principal investigator, and co-senior author of the work. “It has proved vital to expanding our understanding of the current capabilities of VLMs in these potentially impactful scientific settings. It has also outlined gaps in current research that we can now work to address, particularly for complex compositional queries, technical terminology, and the fine-grained, subtle differences that delineate categories of interest for our collaborators.”

“Our findings imply that some vision models are already precise enough to aid wildlife scientists with retrieving some images, but many tasks are still too difficult for even the largest, best-performing models,” says Vendrow. “Although INQUIRE is focused on ecology and biodiversity monitoring, the wide variety of its queries means that VLMs that perform well on INQUIRE are likely to excel at analyzing large image collections in other observation-intensive fields.”

Inquiring minds want to see

Taking their project further, the researchers are working with iNaturalist to develop a query system to better help scientists and other curious minds find the images they actually want to see. Their working demo allows users to filter searches by species, enabling quicker discovery of relevant results like, say, the diverse eye colors of cats. Vendrow and co-lead author Omiros Pantazis, who recently received his PhD from University College London, also aim to improve the re-ranking system by augmenting current models to provide better results.

University of Pittsburgh Associate Professor Justin Kitzes highlights INQUIRE’s ability to uncover secondary data. “Biodiversity datasets are rapidly becoming too large for any individual scientist to review,” says Kitzes, who wasn’t involved in the research. “This paper draws attention to a difficult and unsolved problem, which is how to effectively search through such data with questions that go beyond simply ‘who is here’ to ask instead about individual characteristics, behavior, and species interactions. Being able to efficiently and accurately uncover these more complex phenomena in biodiversity image data will be critical to fundamental science and real-world impacts in ecology and conservation.”

Vendrow, Pantazis, and Beery wrote the paper with iNaturalist software engineer Alexander Shepard, University College London professors Gabriel Brostow and Kate Jones, University of Edinburgh associate professor and co-senior author Oisin Mac Aodha, and University of Massachusetts at Amherst Assistant Professor Grant Van Horn, who served as co-senior author. Their work was supported, in part, by the Generative AI Laboratory at the University of Edinburgh, the U.S. National Science Foundation/Natural Sciences and Engineering Research Council of Canada Global Center on AI and Biodiversity Change, a Royal Society Research Grant, and the Biome Health Project funded by the World Wildlife Fund United Kingdom.