In this article, you’ll learn to design, scale, and secure tool calling in AI agents so that the layer connecting model reasoning to real-world action holds up in production.

Topics we will cover include:

- How the tool calling protocol separates model reasoning from deterministic execution, and why that boundary matters.

- How to write tool definitions, error handling, and parallelization strategies that stay reliable as your agent scales.

- How to manage tool catalog size, secure agentic systems, and evaluate tool calls beyond end-to-end task success.

Introduction

Most AI agent failures don’t trace back to bad reasoning. The model understands the task, then calls the wrong tool, passes malformed arguments, gets back an unhandled error, and produces a wrong answer anyway. The reasoning layer gets the attention; the tool layer is where production incidents actually happen.

Tool calling, also called function calling, is what bridges a language model’s reasoning to real-world action. Without it, agents are capped by training data: no live queries, no external systems, no side effects. With it, an agent can search the web, call APIs, run code, retrieve documents, and trigger transactions in any system that exposes an interface.

Getting this right means understanding the full stack, not just the happy path. This article covers:

- Understanding the tool calling protocol and why the execution boundary matters

- Writing definitions and error handling that hold up in production

- Scaling tool catalogs and parallelizing calls without sacrificing accuracy

- Securing agentic systems and evaluating beyond end-to-end task success

Each step covers when the concept applies, what trade-offs it carries, and what goes wrong when you skip it.

Step 1: Understanding the Tool Calling Protocol

Tool calling in AI agents works as a simple loop: the model decides what action is needed, and your system executes it.

First, you define the tools by giving the model a list with clear names, purposes, and structured input/output schemas. This sets the boundaries of what the agent can do.

When a user sends a request, the model reads it and decides whether it can answer directly or needs to use a tool. If a tool is required, it selects the most relevant one and produces a structured JSON payload with the tool name and arguments. From there, your application takes over:

- The system receives the tool call and validates the input

- It executes the actual function or API

- It handles errors and formats the result

That result is then sent back to the model, which uses it to continue reasoning and generate the final answer. More importantly, the model does not execute anything. Your application code receives the payload, validates it, runs the logic, and returns the result as new context.

The boundary matters. The model is a non-deterministic reasoner proposing actions; your code is the deterministic layer that executes and validates them. Letting the model guess at argument formats, skipping result feedback, or omitting validation blurs this contract in ways that cause silent failures at scale.
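The receive, validate, execute, and return steps can be sketched in a few lines. This is a minimal illustration, not any provider’s SDK: the payload shape (`name` and `arguments` keys), the `get_weather` tool, and the error codes are all assumptions made for the example.

```python
import json
from typing import Any, Callable


def get_weather(city: str) -> dict[str, Any]:
    """Hypothetical tool: a stand-in for a real API call."""
    return {"city": city, "temp_c": 21}


# Registry mapping tool names to (function, expected argument names and types).
TOOLS: dict[str, tuple[Callable[..., Any], dict[str, type]]] = {
    "get_weather": (get_weather, {"city": str}),
}


def handle_tool_call(payload: str) -> dict[str, Any]:
    """Validate a model-proposed call, execute it, and return a result for the model."""
    try:
        call = json.loads(payload)
    except json.JSONDecodeError:
        return {"error": "invalid_json"}
    if not isinstance(call, dict):
        return {"error": "invalid_payload"}

    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        return {"error": "unknown_tool", "name": name}

    func, schema = TOOLS[name]
    # The model only proposes arguments; the deterministic layer checks them.
    if not isinstance(args, dict) or set(args) != set(schema):
        return {"error": "invalid_arguments", "expected": sorted(schema)}
    for key, expected_type in schema.items():
        if not isinstance(args[key], expected_type):
            return {"error": "invalid_argument_type", "argument": key}

    try:
        return {"result": func(**args)}
    except Exception as exc:  # surface failures as data, never as a crash
        return {"error": "tool_failed", "detail": str(exc)}
```

Whatever `handle_tool_call` returns, success or error, is what gets appended to the conversation as new context for the model’s next step.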

Step 2: Writing Tool Definitions as Contracts

Tool definitions are the biggest lever on whether your agent uses tools correctly. Vague descriptions produce wrong selections; loose parameter types produce bad arguments.

Strong definitions have three parts:

- A precise purpose statement including scope and conditions: “Search the web for current or time-sensitive information; do not use this for questions answerable from training data” beats “Search the web.”

- Typed and constrained parameters: prefer enums over open strings, use natural identifiers the model can infer from context, and add explicit format examples where needed.

- A clear output contract: what the tool returns, in what shape, and what partial or empty results look like, so the model reasons from signal rather than void.

Overlapping tools need explicit decision boundaries; if you have knowledge_base_search and web_search, each description must make the split obvious. Also include negative guidance; telling the model when not to call a tool prevents unnecessary invocations that add latency and burn tokens.
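As an illustration of the three parts, here is one way a `web_search` definition might look. The `name` / `description` / `parameters` layout follows the JSON Schema style that many function-calling APIs use, but the exact wrapper differs by provider, so treat the field names, the `recency` parameter, and the `check_arguments` helper as assumptions for this sketch.

```python
from typing import Any

WEB_SEARCH_TOOL: dict[str, Any] = {
    "name": "web_search",
    # Purpose, scope, negative guidance, and output contract in one description.
    "description": (
        "Search the web for current or time-sensitive information. "
        "Do not use this for questions answerable from training data; "
        "use knowledge_base_search for internal documentation. "
        "Returns an object with a 'results' list; an empty list means no matches."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "query": {
                "type": "string",
                "description": "Search terms, e.g. 'python 3.13 release date'.",
            },
            # An enum constrains the model far more than an open string would.
            "recency": {"type": "string", "enum": ["day", "week", "month", "any"]},
        },
        "required": ["query", "recency"],
    },
}


def check_arguments(tool: dict[str, Any], args: dict[str, Any]) -> list[str]:
    """Return a list of problems with the arguments; an empty list means they pass."""
    problems: list[str] = []
    params = tool["parameters"]
    for name in params["required"]:
        if name not in args:
            problems.append(f"missing required argument: {name}")
    for name, value in args.items():
        spec = params["properties"].get(name)
        if spec is None:
            problems.append(f"unexpected argument: {name}")
        elif "enum" in spec and value not in spec["enum"]:
            problems.append(f"{name} must be one of {spec['enum']}")
    return problems
```

Returning the problem list to the model, rather than silently coercing the input, gives it a concrete message to correct against on the next attempt.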

Step 3: Building Error Handling Into the Tool Layer

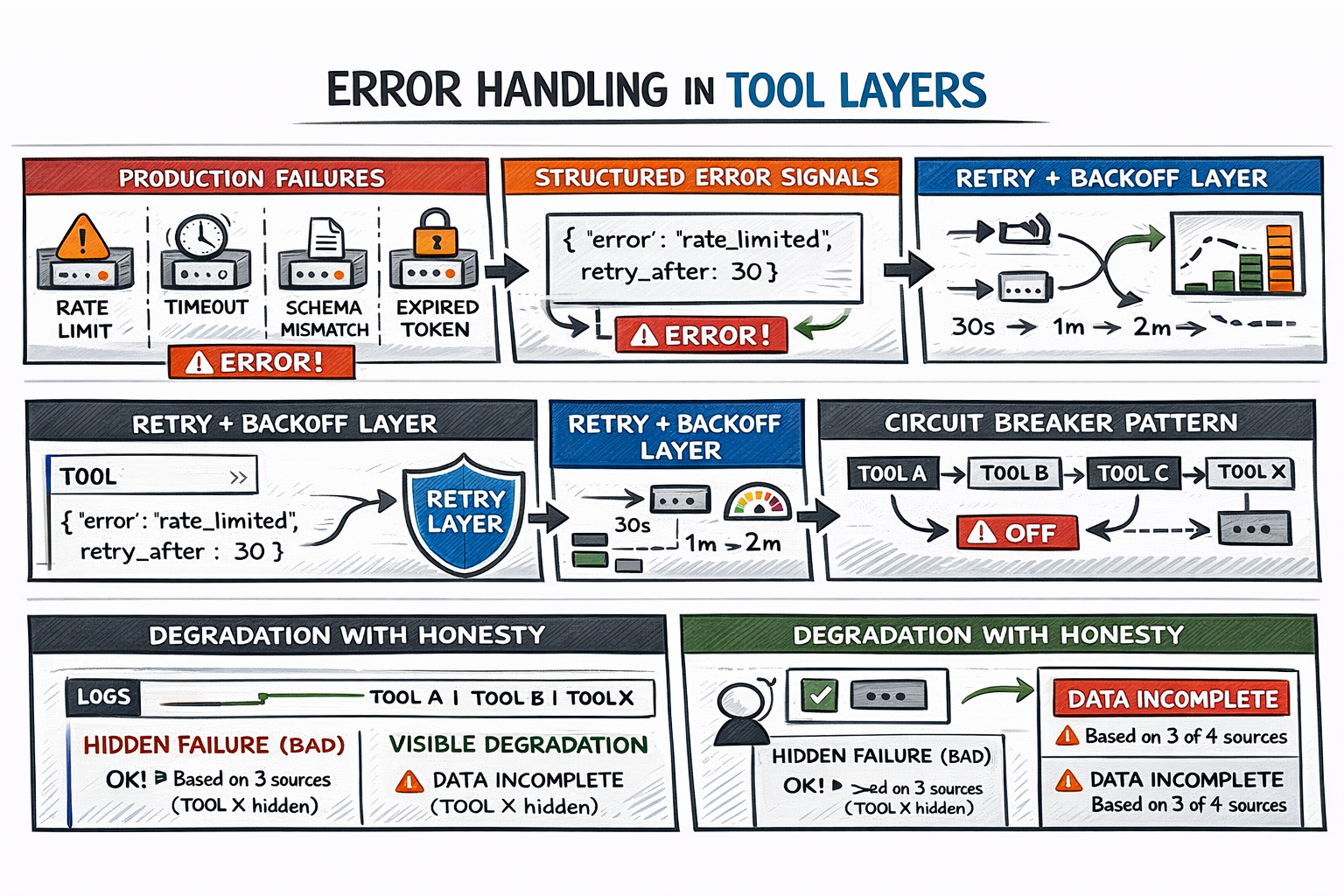

In practice, APIs rate-limit, time out, and change schemas, and OAuth tokens expire. A tool returning an empty array is worse than one returning a structured error; at least the error gives the model something to reason from.


Three practices cover the failure surface:

- Typed, interpretable error signals: an error of the form `{"error": "rate_limited", "retry_after": 30}` tells the model exactly what happened and what to do next.

- Transparent transient-failure handling: network blips and rate limits should be absorbed by the tool layer with exponential backoff, not surfaced raw to the reasoning loop.

- Circuit breakers for persistent failures: once a failure threshold is crossed, the tool stops being called and the model is explicitly informed it’s unavailable.

That last point is critical: the model should always know when a tool fails. An agent that answers from three out of four data sources and says so is far more useful than one that fills gaps with hallucinated content.
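The three practices can be combined in a small wrapper. The sketch below is deliberately simplified: `TransientError`, `GuardedTool`, and the error codes are hypothetical names. The breaker here also never closes once it opens; a production breaker would normally add a cool-down period before trying the tool again.

```python
import time
from typing import Any, Callable


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""


class GuardedTool:
    """Wrap a tool with retries, exponential backoff, and a simple circuit breaker."""

    def __init__(
        self,
        func: Callable[..., Any],
        max_retries: int = 3,
        base_delay: float = 0.5,
        failure_threshold: int = 3,
        sleep: Callable[[float], Any] = time.sleep,
    ) -> None:
        self.func = func
        self.max_retries = max_retries
        self.base_delay = base_delay
        self.failure_threshold = failure_threshold
        self.sleep = sleep  # injectable so tests do not have to wait
        self.consecutive_failures = 0

    def call(self, **kwargs: Any) -> dict[str, Any]:
        # Circuit open: stop calling the tool and tell the model explicitly.
        if self.consecutive_failures >= self.failure_threshold:
            return {"error": "tool_unavailable", "reason": "circuit_open"}

        for attempt in range(self.max_retries):
            try:
                result = self.func(**kwargs)
            except TransientError:
                # Absorb transient failures; delay doubles each attempt.
                if attempt < self.max_retries - 1:
                    self.sleep(self.base_delay * 2**attempt)
            except Exception as exc:
                # Non-transient failure: no retry, but still a typed error.
                self.consecutive_failures += 1
                return {"error": "tool_failed", "detail": str(exc)}
            else:
                self.consecutive_failures = 0
                return {"result": result}

        self.consecutive_failures += 1
        return {"error": "upstream_unavailable", "attempts": self.max_retries}
```

Every path returns a dictionary, so the reasoning loop always receives either a result or a typed error it can report to the user.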

Step 4: Parallelizing Tool Calls Strategically

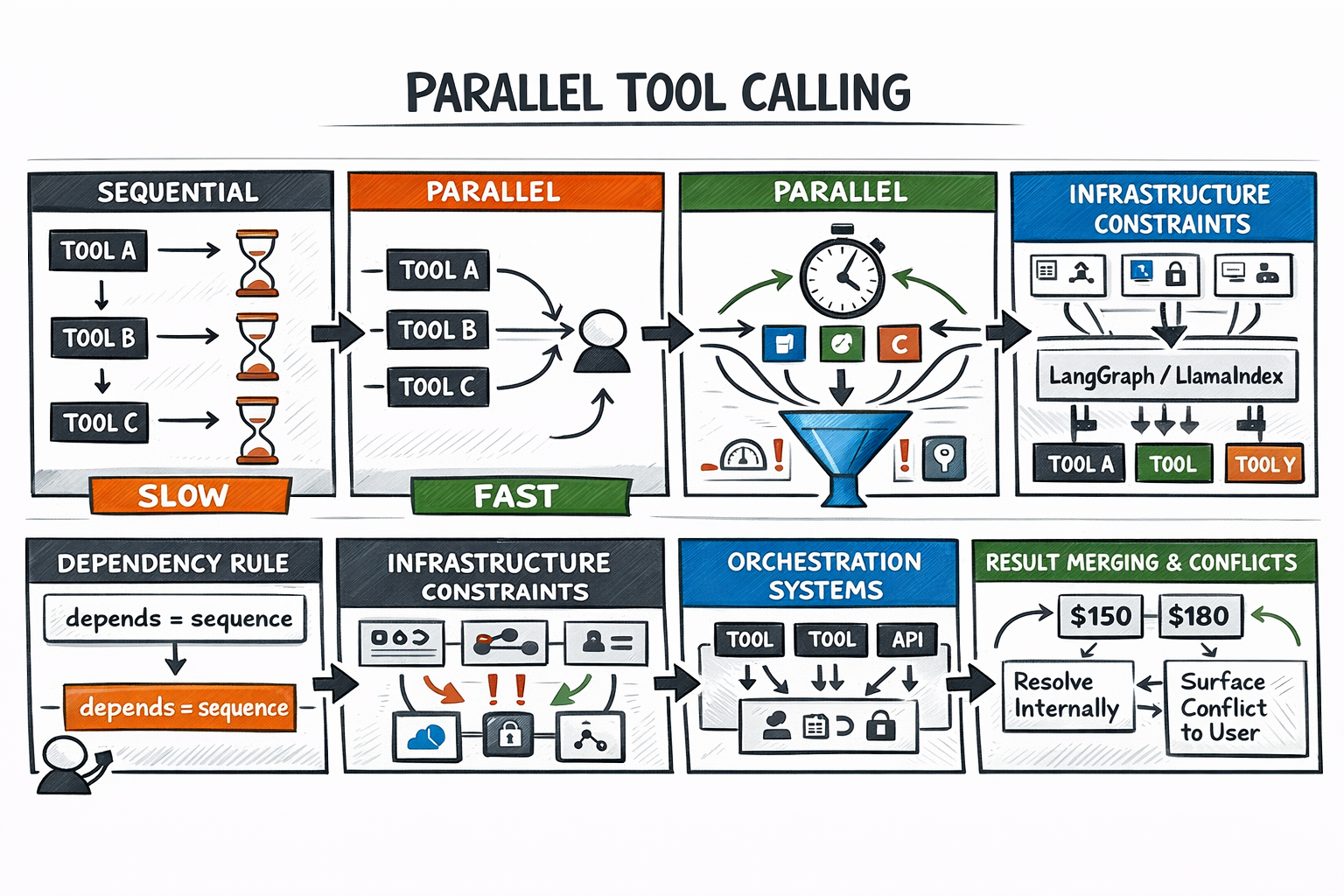

Sequential execution is the safe default, but it has a cost. When tools don’t depend on each other’s outputs, serializing them is pure latency with no benefit, so those calls can run in parallel.

The decision rule is dependency:

- If tool B needs tool A’s output as input, they’re sequential.

- If both can be called with what’s already known, they’re candidates for parallel dispatch.

Your agent orchestration framework handles the dispatch mechanics. The harder problem is infrastructure: parallel calls compete for the same rate-limit headroom, connection pools, and auth tokens at the same time. These constraints are invisible in sequential execution and surface only under concurrency.


Output merging is the other failure mode. Parallel results come back independently, and the model must synthesize them. If they conflict, the model needs a defined resolution strategy: either surfacing the conflict to the user or applying a priority rule.
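For independent calls, a bounded parallel dispatch might look like the sketch below, using `asyncio.gather` with a semaphore to cap in-flight requests. The two tools and the `max_concurrency` default are illustrative assumptions; a sensible cap depends on your actual rate limits and connection pool sizes.

```python
import asyncio
from typing import Any, Awaitable, Callable


async def run_parallel(
    calls: dict[str, Callable[[], Awaitable[Any]]],
    max_concurrency: int = 4,
) -> dict[str, dict[str, Any]]:
    """Run independent tool calls concurrently, capping how many are in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def guarded(name: str, make_call: Callable[[], Awaitable[Any]]):
        async with semaphore:
            try:
                return name, {"result": await make_call()}
            except Exception as exc:
                # One failed call must not discard the results of the others.
                return name, {"error": "tool_failed", "detail": str(exc)}

    pairs = await asyncio.gather(*(guarded(n, c) for n, c in calls.items()))
    return dict(pairs)


# Hypothetical tools standing in for real network calls.
async def fetch_weather() -> dict[str, Any]:
    await asyncio.sleep(0.01)
    return {"temp_c": 21}


async def fetch_calendar() -> dict[str, Any]:
    await asyncio.sleep(0.01)
    raise TimeoutError("calendar API timed out")


if __name__ == "__main__":
    print(asyncio.run(run_parallel({"weather": fetch_weather, "calendar": fetch_calendar})))
```

Keying results by tool name keeps partial failures visible, so the model can say which source was unavailable instead of guessing at its contents.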

Step 5: Managing Tool Catalog Size

Giving agents more tools than they need degrades selection accuracy predictably. A model choosing from five clearly scoped tools significantly outperforms one scanning fifty. Large catalogs also consume input tokens that would otherwise be available for reasoning context.

The scalable solution is dynamic tool loading: retrieving a semantically relevant subset per task via vector similarity over tool descriptions, rather than registering everything upfront. Where dynamic loading isn’t practical, consistent naming prefixes group tools by domain, turning a flat search into a two-step “which category, then which tool” decision.

Audit for redundancy. Two tools that do nearly the same thing for nominally different reasons create a confusion surface every time the model chooses between them. Consolidate or differentiate; there’s no middle ground that works in production. Here’s a useful test: if you can’t articulate in one sentence why an agent would pick tool A over tool B, the boundary isn’t clear enough to ship.
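The retrieval step behind dynamic tool loading can be sketched as follows. To stay self-contained, this example swaps in a toy bag-of-words vector where a real system would use a learned embedding model; the catalog entries and the `select_tools` helper are hypothetical. Note the domain prefixes (`billing_`, `calendar_`) in the tool names.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts. Assumption: a stand-in for a real model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


# Hypothetical catalog: tool name -> description.
CATALOG = {
    "billing_get_invoice": "Fetch a customer invoice by invoice number",
    "billing_refund_payment": "Refund a payment to a customer card",
    "calendar_create_event": "Create a meeting event on a calendar",
    "web_search": "Search the web for current news and information",
}


def select_tools(task: str, k: int = 2) -> list[str]:
    """Return the k tool names whose descriptions best match the task."""
    task_vec = embed(task)
    ranked = sorted(
        CATALOG,
        key=lambda name: cosine(task_vec, embed(CATALOG[name])),
        reverse=True,
    )
    return ranked[:k]
```

Only the selected subset would then be passed to the model as tool definitions for that turn, which keeps both the choice set and the token cost small.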

Step 6: Designing for Security and Blast Radius

In production, agents trigger real transactions, send real emails, and modify real data. The blast radius of an autonomous error by a tool-calling agent is always larger than it looked in a demo.

Two threat surfaces require deliberate design:

- Scope creep through permissions: tools should carry minimal access for their function. Read-only tools are inherently safer, and write operations with irreversible consequences should be gated behind a human approval step. Pausing to surface a proposed action and require confirmation is a valid architecture choice, not a limitation.

- Prompt injection: malicious content embedded in tool outputs may attempt to redirect the agent’s subsequent behavior. Sanitizing tool results before they re-enter the reasoning context is the standard countermeasure.

The OWASP Top 10 for LLM Applications covers the full threat taxonomy for agentic systems. For any agent calling tools in production, reviewing these categories before deployment is time well spent.
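Both threat surfaces can be addressed at the execution boundary. The sketch below shows an approval gate for write tools and a wrapper that marks tool output as untrusted data. `ToolSpec`, `execute`, and `wrap_untrusted` are hypothetical names, and delimiting plus truncating output is only a partial mitigation for prompt injection, not a complete defense.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class ToolSpec:
    func: Callable[..., Any]
    writes: bool  # True if the tool has side effects that are hard to undo


def execute(
    spec: ToolSpec,
    args: dict[str, Any],
    approve: Callable[[dict[str, Any]], bool],
) -> dict[str, Any]:
    """Run read-only tools directly; route write tools through an approval callback."""
    if spec.writes and not approve(args):
        return {"error": "approval_denied"}
    return {"result": spec.func(**args)}


def wrap_untrusted(text: str, max_chars: int = 2000) -> str:
    """Truncate tool output and delimit it so it is presented as data, not instructions."""
    # Strip any embedded closing tag so the content cannot escape the wrapper.
    clipped = text.replace("</tool_output>", "")[:max_chars]
    return f'<tool_output untrusted="true">\n{clipped}\n</tool_output>'
```

In a real deployment, `approve` would pause the agent and show the proposed action to a person; here it is just a callback so the control flow stays visible.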

Step 7: Evaluating Tool Calls and Iterating on Definitions

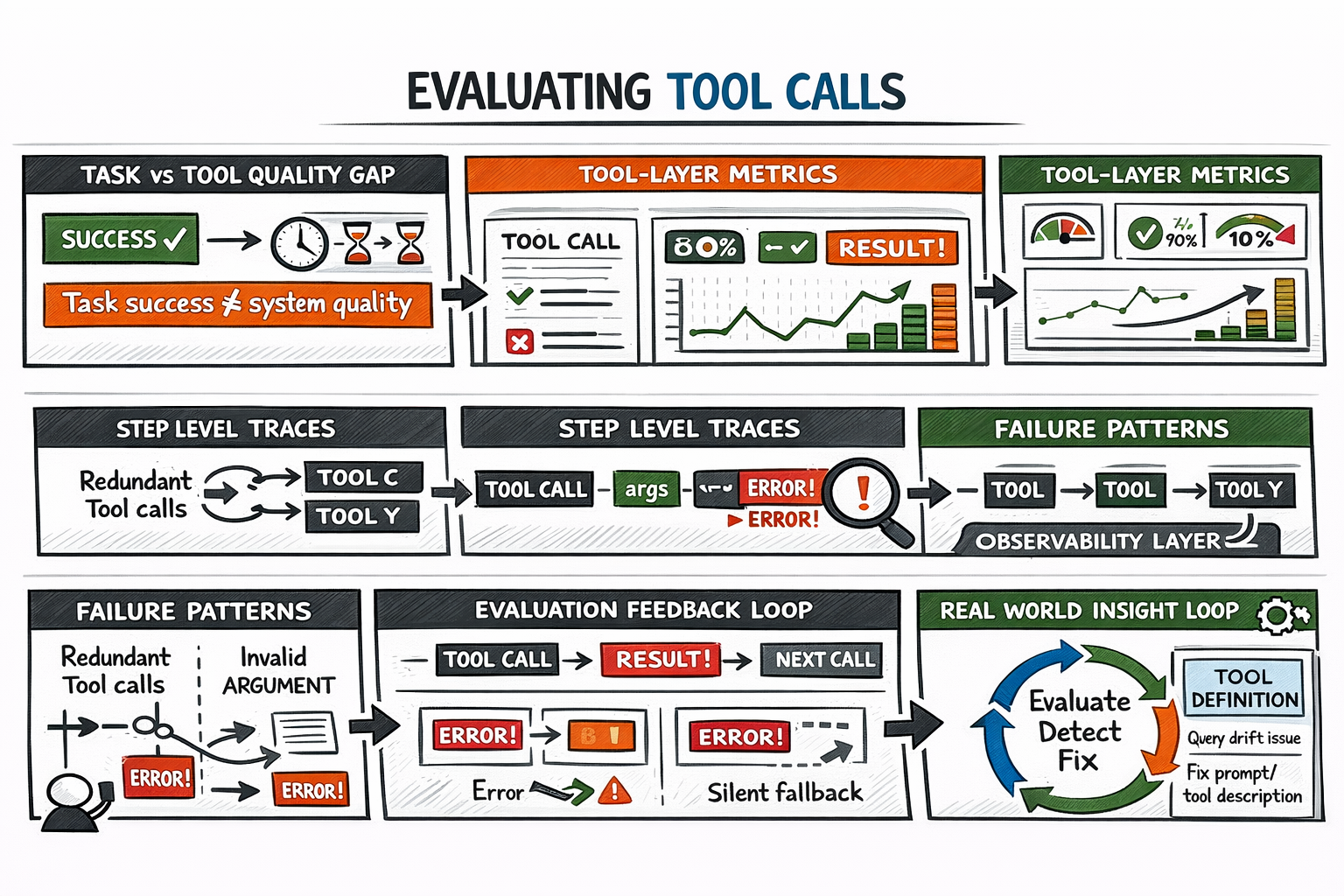

End-to-end task accuracy hides tool-layer problems. An agent can complete a task correctly while making inefficient tool selections, incurring unnecessary token costs, or silently recovering from earlier errors. These patterns show up as latency, cost overruns, and reliability failures under load.

Tool-specific evaluation tracks what matters: correct tool selection rate, first-attempt argument validity, error propagation into final outputs, and recovery quality. This requires step-level traces: logs capturing each tool call, its arguments, its result, and the subsequent reasoning step. Without traces, debugging a production failure is guesswork.


Definitions should evolve from evaluation signals: high rates of redundant calls usually indicate scope problems; frequent invalid arguments usually indicate descriptions needing clarification or examples.

The iteration loop: build an evaluation set covering known failure modes → instrument for observability → run it → identify highest-frequency failures → update definitions or error handling → repeat.

Read “How to Evaluate Tool-Calling Agents” by Arize AI and “Tool evaluation” in the Claude Cookbook to learn more.
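As a rough sketch of the "identify highest-frequency failures" step, the function below computes two of the metrics named above from step-level traces. The `ToolStep` record and its fields are assumptions for this example; real traces would come from your own logging or observability stack.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Any


@dataclass
class ToolStep:
    expected_tool: str  # label from the evaluation set
    called_tool: str    # what the agent actually called
    args_valid: bool    # passed schema validation on the first attempt


def summarize(steps: list[ToolStep]) -> dict[str, Any]:
    """Compute selection accuracy, argument validity, and the most common confusions."""
    total = len(steps)
    if total == 0:
        return {"selection_accuracy": 0.0, "arg_validity": 0.0, "top_confusions": []}

    correct = sum(s.called_tool == s.expected_tool for s in steps)
    valid = sum(s.args_valid for s in steps)
    # Count (expected, called) pairs for wrong selections to find overlapping tools.
    confusions = Counter(
        (s.expected_tool, s.called_tool)
        for s in steps
        if s.called_tool != s.expected_tool
    )
    return {
        "selection_accuracy": correct / total,
        "arg_validity": valid / total,
        "top_confusions": confusions.most_common(3),
    }
```

A confusion pair that keeps recurring, such as `knowledge_base_search` being expected but `web_search` being called, points directly at the two descriptions that need a sharper boundary.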

Summary

The tool layer is where agentic systems meet the real world. Here’s a practical pattern that works: define explicit contracts, handle failures at the source, constrain scope to what’s necessary, and measure what matters before optimizing for it.

Here’s a summary of what we’ve covered:

| Step | Significance |
|---|---|
| Understanding the Tool Calling Protocol | Establishes the separation between model reasoning and execution. Prevents silent failures by enforcing validation, structured inputs, and proper feedback loops. |
| Writing Tool Definitions as Contracts | Ensures correct tool selection and argument formatting through precise descriptions, constrained inputs, and clear output schemas. Reduces ambiguity and misuse. |
| Building Error Handling Into the Tool Layer | Improves reliability by handling API failures, rate limits, and timeouts with structured errors, retries, and circuit breakers, enabling the model to respond intelligently. |
| Parallelizing Tool Calls Strategically | Reduces latency by executing independent tools concurrently while managing infrastructure constraints and ensuring proper result merging and conflict resolution. |
| Managing Tool Catalog Size | Maintains high selection accuracy by limiting tool choices, using dynamic loading, and eliminating redundancy to reduce confusion and token overhead. |
| Designing for Security and Blast Radius | Protects systems by enforcing least privilege, requiring human approval for critical actions, and mitigating prompt injection through output sanitization. |
| Evaluating Tool Calls and Iterating on Definitions | Enables continuous improvement through metrics like tool selection accuracy, argument validity, and error handling, supported by step-level tracing and iterative refinement. |

Agent orchestration frameworks and the MCP ecosystem handle substantial infrastructure complexity, but the design decisions (what tools to expose, how to describe them, what permissions to grant, how to handle errors) require deliberate judgment that tooling can’t substitute for.