Amazon OpenSearch Service now brings application monitoring, native Amazon Managed Service for Prometheus integration, and AI agent tracing together in OpenSearch UI's observability workspace. You can query Prometheus metrics with PromQL alongside logs and traces stored in Amazon OpenSearch Service, trace an AI agent's full reasoning chain all the way down to the failing tool name, and drill from a service-level health view to the exact span that caused a checkout failure, all without leaving the interface.

In this post, we walk through two real-world scenarios using the OpenTelemetry sample app: a multi-agent travel planner dealing with slow processing, and a checkout flow quietly failing on one microservice. We chase each one to its root cause using these new capabilities.

Scenario 1: An underperforming AI agent

Your multi-agent travel planner is live and users start reporting slow responses. With the new AI agent tracing capability in Amazon OpenSearch Service, you can trace the agent's full processing path to pinpoint exactly where things went wrong.

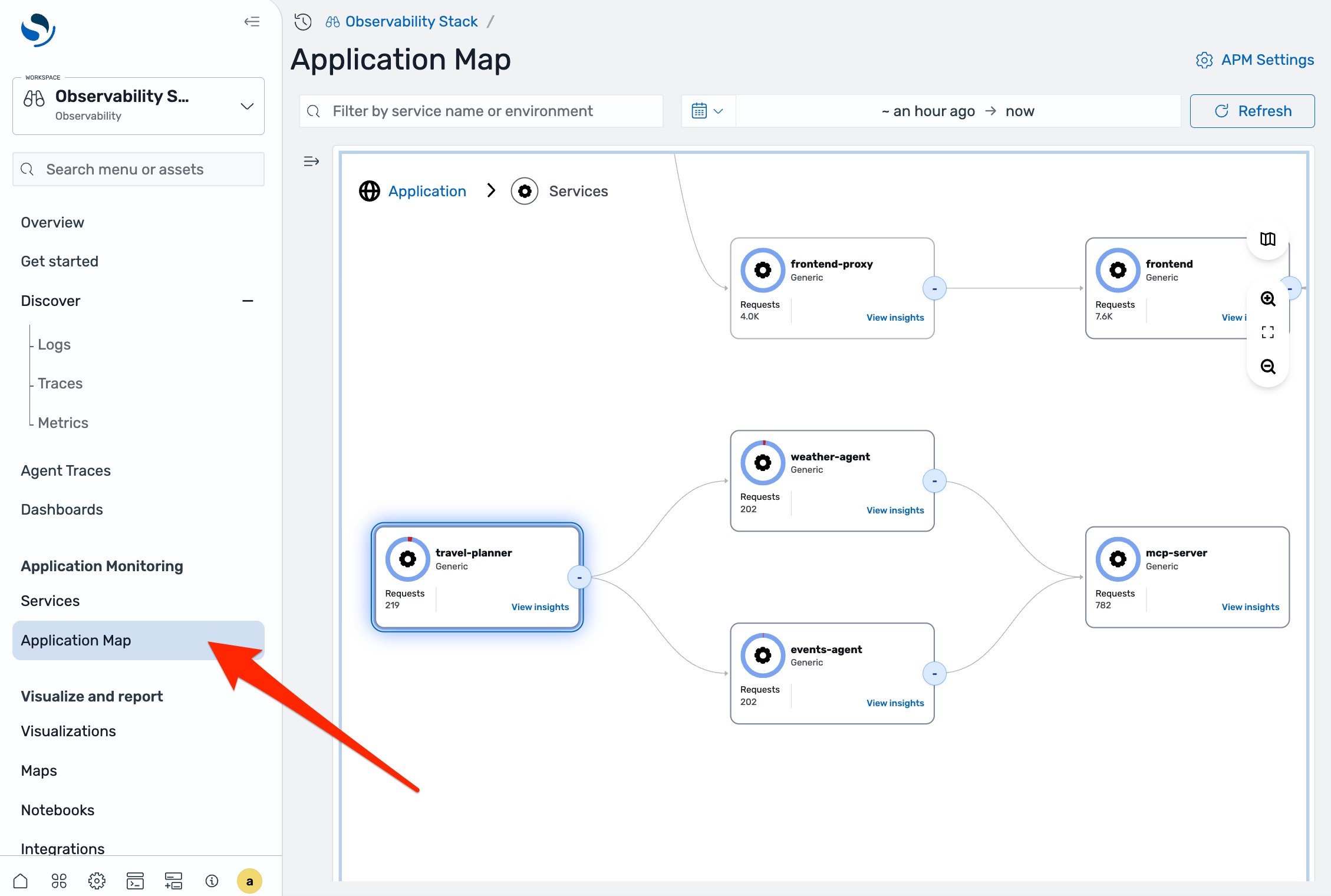

In any observability workspace in OpenSearch UI, navigate to Application Map in the left navigation pane.

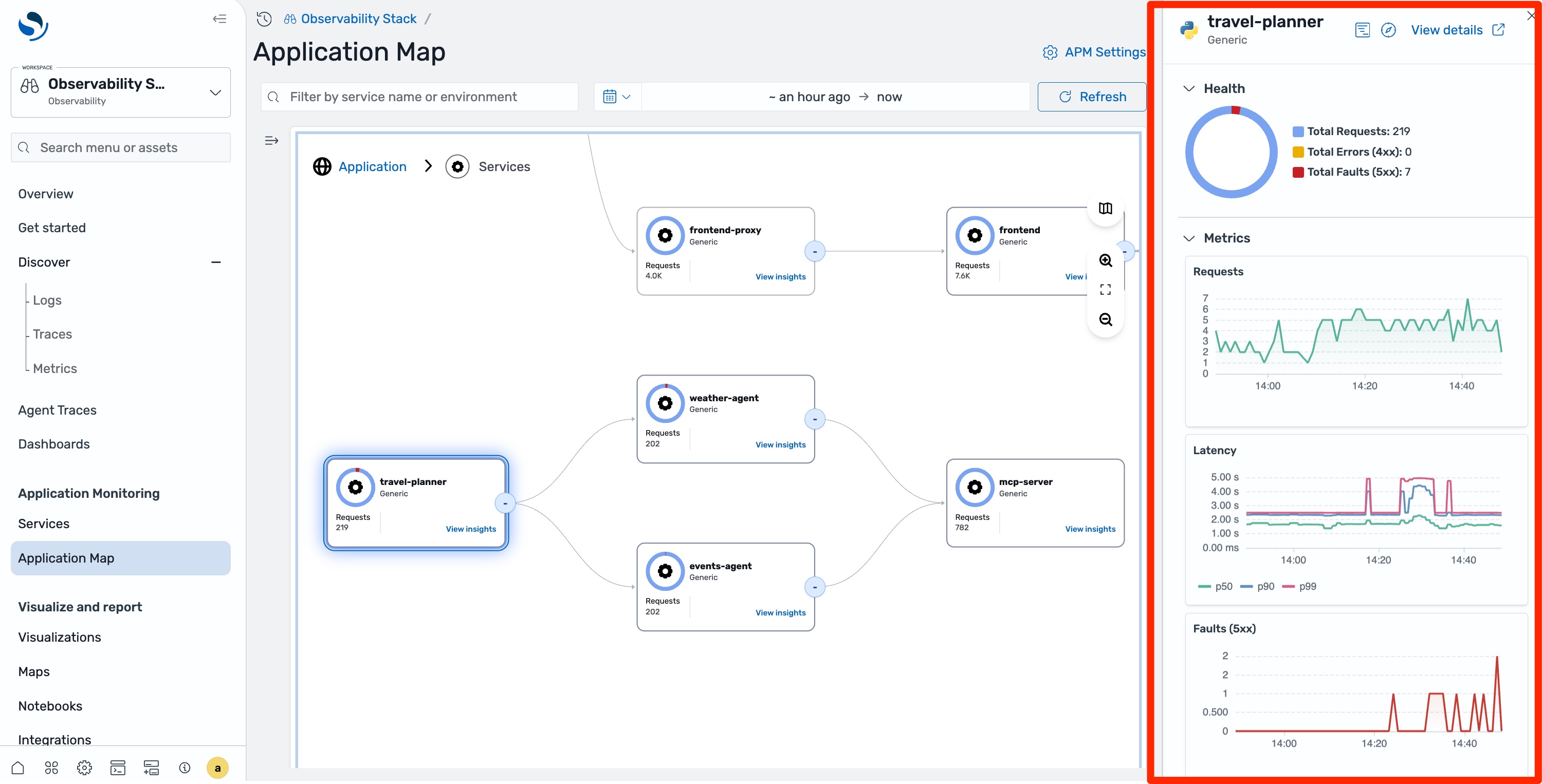

You can see the full topology of your system, including the travel agent and the sub-agents it calls. The travel agent node shows elevated latency and occasional errors. Select it, and the side panel confirms that latency is up, but the latency chart shows intermittent spikes rather than consistent degradation.

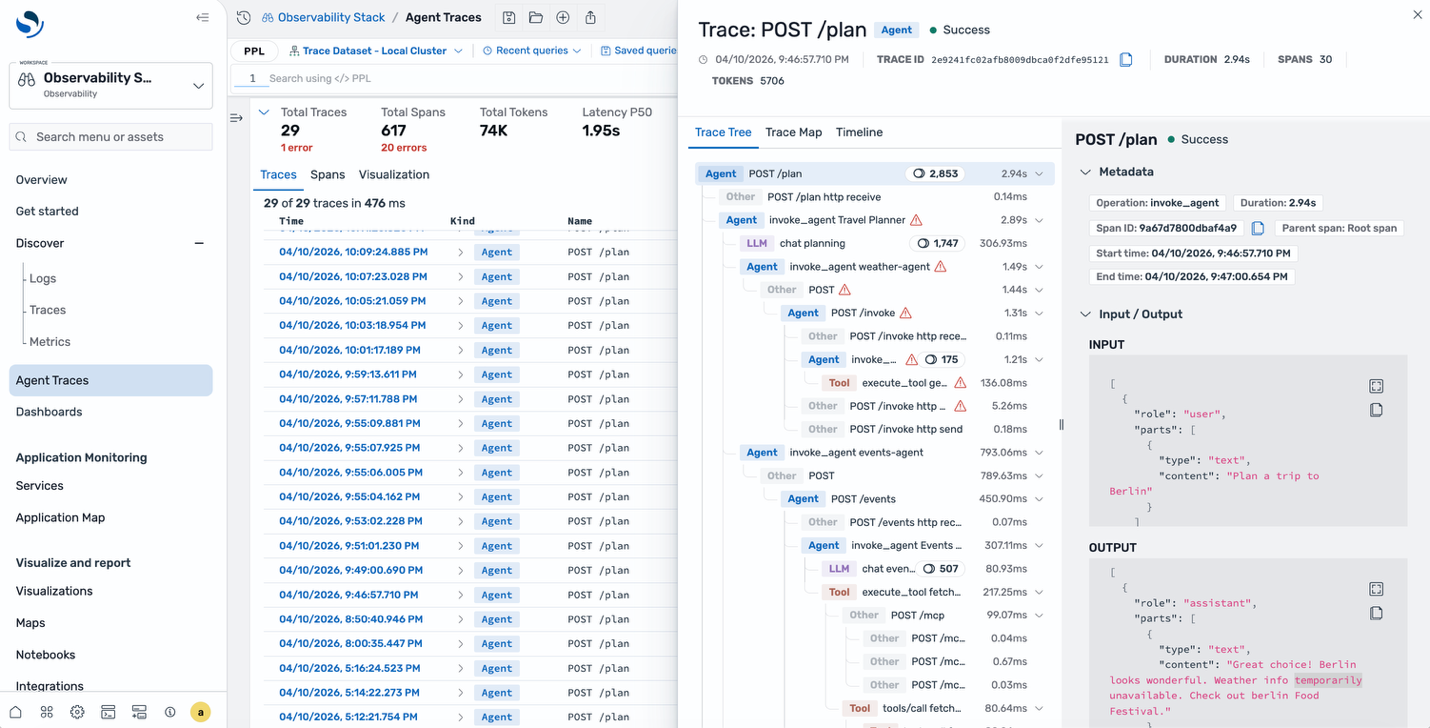

The application map tells you something is wrong, but understanding why an AI agent is underperforming requires seeing its reasoning chain. Select Agent Traces in the left navigation pane, then filter by service name and time range.

Select one of the traces to see the trace tree. Unlike a traditional span waterfall, this view is organized around the agent's reasoning chain: the root agent span, the LLM calls it made, the tools it invoked, and how they nested, with each step color-coded by type. The trace map provides a visual directed graph of the same execution. You can see which model was called, how many input and output tokens were consumed, and the exact messages sent to and received from the model.

A tool call inside the weather agent errored out. The agent then spent additional time reasoning about the failure before returning a partial response, which explains the intermittent latency spikes and occasional faults.

Why this matters for AI agents

Agents make autonomous decisions based on LLM responses, tool results, and chained reasoning. Unlike traditional microservices with deterministic code paths, agent behavior varies across executions. Without semantic tracing that captures these AI-specific signals, root-cause analysis is guesswork. The trace tree surfaced the model name, token counts, and failing tool call because the travel planner was instrumented with OpenTelemetry's generative AI semantic conventions. The next section describes how.

Instrumenting AI agents

OpenTelemetry auto-instrumentation enriches spans with well-known attributes for HTTP, database, and gRPC calls. AI agents need a different set of attributes, such as which LLM was called, how many tokens were consumed, and which tools were invoked, that standard instrumentation doesn't cover.

The OpenTelemetry gen_ai semantic conventions define standard attributes for these signals, including gen_ai.operation.name, gen_ai.usage.input_tokens, gen_ai.request.model, and gen_ai.tool.name. When Amazon OpenSearch Service receives spans with these attributes, it categorizes them by operation type (agent, LLM, tool, embeddings, retrieval) and renders the agent trace tree and trace map views.
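To make the categorization step concrete, here is a minimal sketch that groups spans by their gen_ai.operation.name attribute. The attribute keys come from the gen_ai semantic conventions named above, but the mapping table, the `categorize` helper, and the sample spans are illustrative assumptions. This is not the service's actual logic, and the mapping is deliberately partial.

```python
from collections import Counter

# Assumed mapping from gen_ai.operation.name values to display categories.
# The grouping is illustrative only; unknown operations fall back to "other".
CATEGORY_BY_OPERATION = {
    "invoke_agent": "agent",
    "create_agent": "agent",
    "chat": "llm",
    "text_completion": "llm",
    "execute_tool": "tool",
    "embeddings": "embeddings",
}


def categorize(span_attributes: dict) -> str:
    """Return a display category for one span's attribute dictionary."""
    operation = span_attributes.get("gen_ai.operation.name")
    return CATEGORY_BY_OPERATION.get(operation, "other")


# Hypothetical span attributes, shaped like what an instrumented agent might emit.
spans = [
    {"gen_ai.operation.name": "invoke_agent"},
    {
        "gen_ai.operation.name": "chat",
        "gen_ai.request.model": "gpt-4o",
        "gen_ai.usage.input_tokens": 412,
        "gen_ai.usage.output_tokens": 58,
    },
    {"gen_ai.operation.name": "execute_tool", "gen_ai.tool.name": "get_weather"},
    {"http.request.method": "GET"},  # a span without gen_ai attributes
]

counts = Counter(categorize(s) for s in spans)
total_input_tokens = sum(s.get("gen_ai.usage.input_tokens", 0) for s in spans)
print(dict(counts), total_input_tokens)
```

Spans without any gen_ai.* attributes land in the fallback category, which is roughly why ordinary HTTP or database spans would not show up as agent, LLM, or tool steps.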

The Python SDK provides one way to generate these spans. To send traces to Amazon OpenSearch Ingestion, configure the SDK with AWS Signature Version 4 (SigV4) authentication. The AWSSigV4OTLPExporter cryptographically signs each HTTP request to help prevent unauthorized data ingestion. The calling identity needs an IAM policy that grants osis:Ingest on your pipeline's ARN. Credentials are resolved through the standard AWS credential provider chain.

    from opensearch_genai_observability_sdk_py import register, AWSSigV4OTLPExporter

    # Sign each OTLP request with SigV4 for the OpenSearch Ingestion ("osis") service.
    exporter = AWSSigV4OTLPExporter(
        endpoint="https://pipeline.us-east-1.osis.amazonaws.com/v1/traces",
        service="osis",
        region="us-east-1",
    )

    # Register the exporter under this service name.
    register(service_name="my-agent", exporter=exporter)
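The IAM requirement described above can be sketched as a small policy document. The example below is a hedged illustration, not an official template: the `build_ingest_policy` helper is hypothetical, and the account ID and pipeline name in the ARN are placeholders to replace with your own.

```python
import json


def build_ingest_policy(pipeline_arn: str) -> dict:
    """Build an identity-based policy that allows ingestion into one pipeline."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "osis:Ingest",
                "Resource": pipeline_arn,
            }
        ],
    }


# Placeholder ARN: the account ID and pipeline name are made up for illustration.
policy = build_ingest_policy(
    "arn:aws:osis:us-east-1:123456789012:pipeline/my-pipeline"
)
policy_json = json.dumps(policy, indent=2)
print(policy_json)
```

Scoping the resource to a single pipeline ARN, rather than a wildcard, keeps the calling identity limited to the pipeline it actually writes to.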

Use the @observe decorator to trace agent functions and enrich() to add model metadata:

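    # Assumes observe, Op, and enrich are exported by the same package as register.
    from opensearch_genai_observability_sdk_py import observe, Op, enrich

    @observe(op=Op.EXECUTE_TOOL)
    def get_weather(city: str) -> dict:
        # Stubbed tool result; a real tool would call a weather API.
        return {"city": city, "temp": 22, "condition": "sunny"}

    @observe(op=Op.INVOKE_AGENT)
    def assistant(query: str) -> str:
        # Attach model metadata to the current agent span.
        enrich(model="gpt-4o", provider="openai")
        data = get_weather("Paris")
        return f"{data['condition']}, {data['temp']}C"

    result = assistant("What is the weather?")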

The SDK also supports auto-instrumentation for OpenAI, Anthropic, Amazon Bedrock, LangChain, LlamaIndex, and others. Because the instrumentation is built on OpenTelemetry standards, any agent framework that emits spans with gen_ai.* attributes is compatible with OpenSearch UI.
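For intuition about how decorator-based tracing produces a nested trace tree like the one in Scenario 1, here is a toy sketch that uses only the standard library. It is not the SDK's implementation: `traced`, `ROOTS`, and `render` are hypothetical names, and the failing weather tool is simulated.

```python
import contextvars
import functools

# The currently active span, so nested calls attach to their parent.
_current = contextvars.ContextVar("current_span", default=None)
ROOTS = []  # top-level spans collected in this process


def traced(op: str):
    """Toy decorator: records one span dict per call, nested by call order."""
    def wrap(fn):
        @functools.wraps(fn)
        def inner(*args, **kwargs):
            span = {"name": fn.__name__, "op": op, "error": None, "children": []}
            parent = _current.get()
            (parent["children"] if parent is not None else ROOTS).append(span)
            token = _current.set(span)
            try:
                return fn(*args, **kwargs)
            except Exception as exc:
                span["error"] = repr(exc)  # mark the failing step
                raise
            finally:
                _current.reset(token)
        return inner
    return wrap


@traced("execute_tool")
def get_weather(city: str) -> dict:
    # Simulated tool failure.
    raise TimeoutError(f"weather lookup timed out for {city}")


@traced("invoke_agent")
def assistant(query: str) -> str:
    try:
        data = get_weather("Paris")
        return f"{data['condition']}, {data['temp']}C"
    except TimeoutError:
        return "Weather is unavailable right now."  # partial response


def render(span: dict, depth: int = 0) -> list:
    """Flatten the span tree into indented lines, flagging failed spans."""
    flag = " [ERROR]" if span["error"] else ""
    lines = [f"{'  ' * depth}{span['op']}: {span['name']}{flag}"]
    for child in span["children"]:
        lines.extend(render(child, depth + 1))
    return lines


answer = assistant("What is the weather?")
tree_lines = render(ROOTS[0])
print("\n".join(tree_lines))
```

The agent span itself succeeds while its child tool span carries the error, which mirrors the partial-response pattern described in Scenario 1.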

Scenario 2: Investigating a microservice issue

AI agents are only one part of most production environments. The same interface surfaces telemetry from conventional microservices, where the troubleshooting workflow follows a more familiar path.

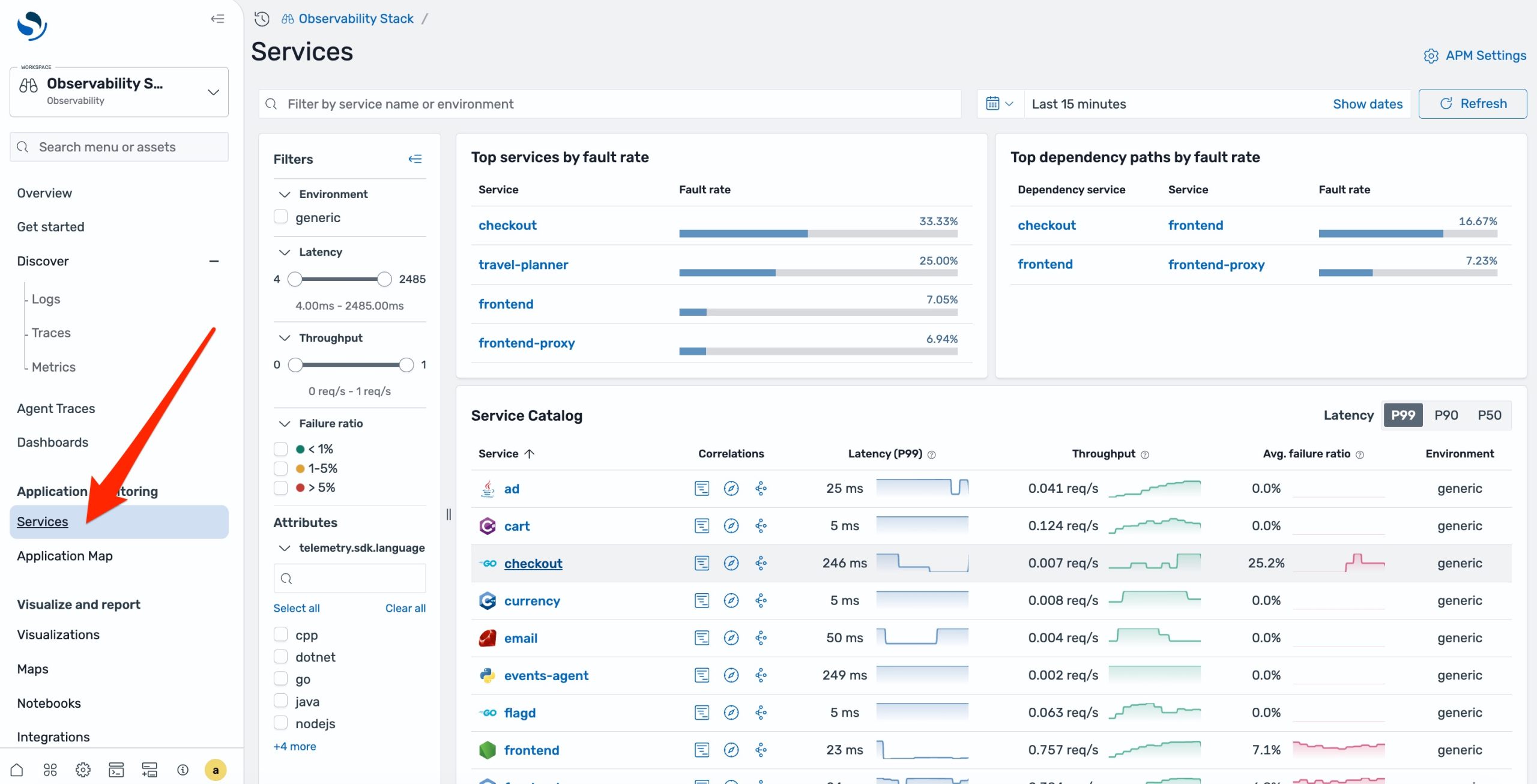

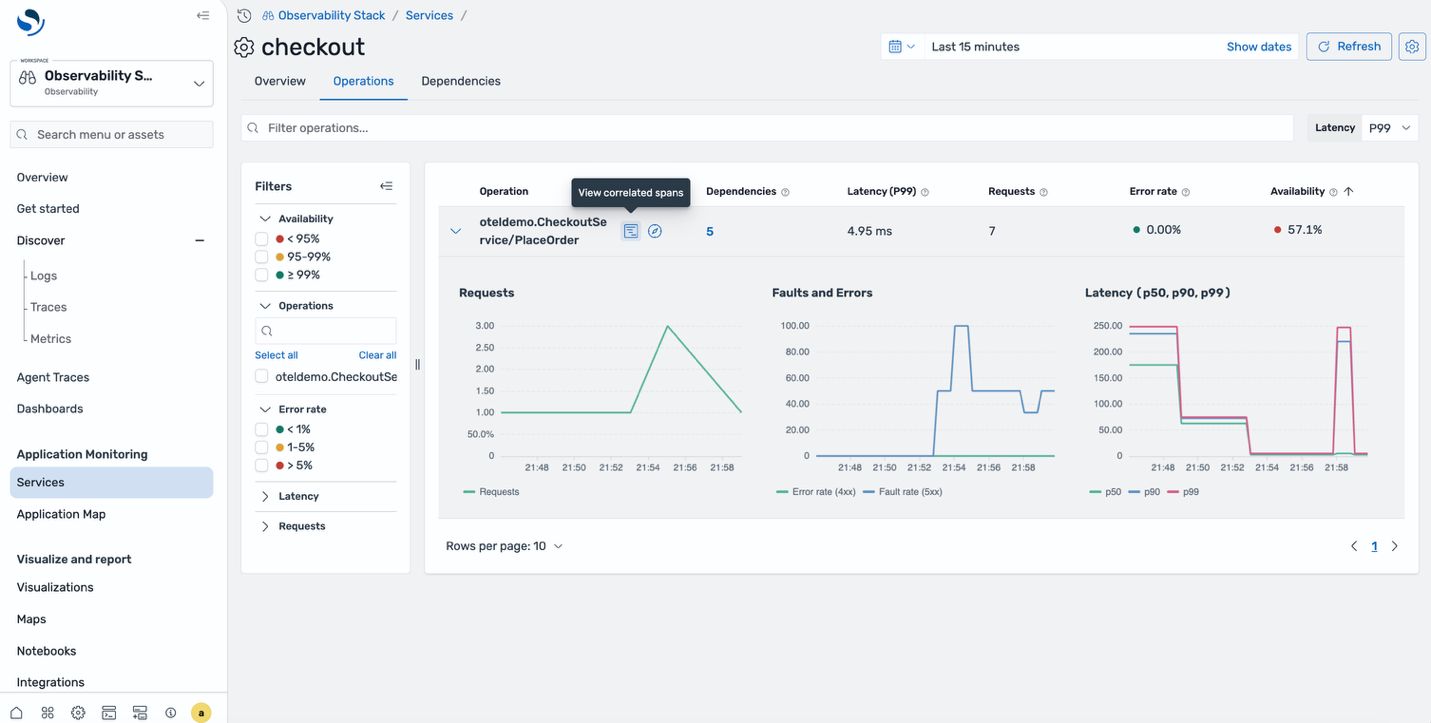

Your ecommerce checkout starts paging during a busy traffic window. From OpenSearch UI, navigate to APM Services in the left navigation pane. Every instrumented service is listed alongside its health indicators. The checkout service shows an elevated error rate.

Select the affected service. The detail view shows Request, Error, and Duration (RED) metrics: request rate is climbing, fault rate has spiked in the last 15 minutes, and p99 duration has doubled. You can see exactly when the degradation started.
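As a rough illustration of what the RED metrics summarize, the sketch below derives request rate, error rate, and p99 duration from a list of spans. The `red_metrics` helper, the span fields, and the sample data are assumptions made for this example. The service computes these metrics for you, and its exact percentile method may differ from the nearest-rank method used here.

```python
def red_metrics(spans: list, window_seconds: float) -> dict:
    """Compute request rate, error rate, and p99 duration for one time window."""
    if not spans:
        return {"request_rate": 0.0, "error_rate": 0.0, "p99_ms": 0.0}
    durations = sorted(s["duration_ms"] for s in spans)
    errors = sum(1 for s in spans if s["error"])
    # Nearest-rank percentile: ceil(0.99 * n), computed with integer arithmetic.
    rank = (99 * len(durations) + 99) // 100
    return {
        "request_rate": len(spans) / window_seconds,  # requests per second
        "error_rate": errors / len(spans),            # fraction of failed requests
        "p99_ms": durations[rank - 1],
    }


# Hypothetical spans: 95 fast successes and 5 slow failures in a 50-second window.
spans = (
    [{"duration_ms": 100 + i, "error": False} for i in range(95)]
    + [{"duration_ms": 900 + i, "error": True} for i in range(5)]
)
metrics = red_metrics(spans, window_seconds=50)
print(metrics)
```

Note how a small share of slow, failing requests dominates the p99 value even though most requests remain fast, which is why p99 is a useful early signal of degradation.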

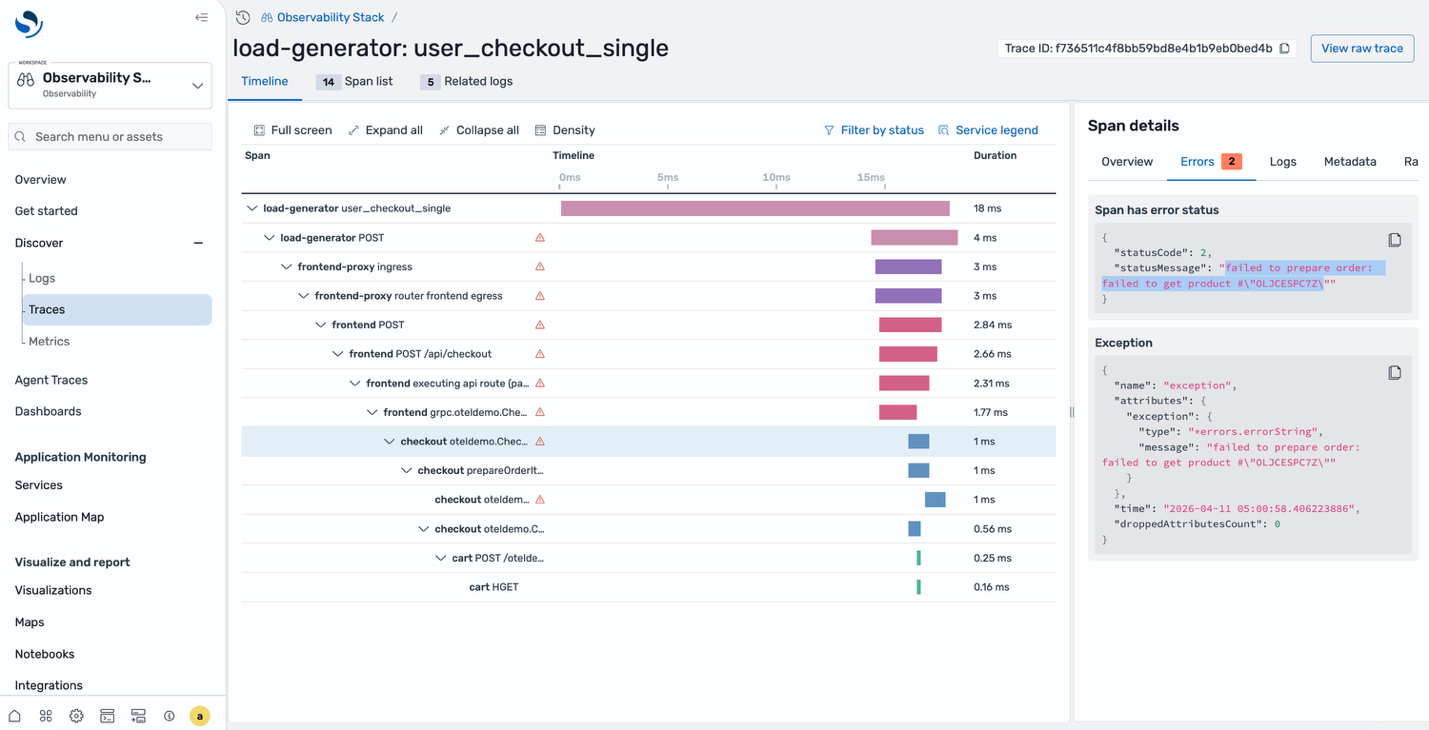

Drill into the correlated spans for the affected time window. The span list shows multiple failed requests, all hitting the same endpoint. Select one to see the full trace waterfall. The checkout service called prepareOrder, which failed trying to retrieve a product from the catalog. The error message in the span details tells you exactly what went wrong. That's your root cause.

Checking the infrastructure with PromQL

In both scenarios, the natural next question is whether the problem originates in the application or in the infrastructure beneath it. With the new Amazon Managed Service for Prometheus integration, you can answer that question without leaving OpenSearch UI.

Prometheus metrics are now queryable directly from the same workspace using native PromQL syntax, alongside the logs and traces you've already been navigating.

For the database timeout in Scenario 2, run a PromQL query to check the database instance's read/write throughput for the same time window. For the agent latency issue in Scenario 1, check the LLM endpoint's response time metrics to see if the slowness originates from the model provider.
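The two checks above might translate into PromQL expressions like the ones this sketch builds. The metric names (`db_read_bytes_total`, `llm_request_duration_seconds`) and label selectors are hypothetical, so substitute whatever your exporters actually emit. Only the PromQL functions `rate`, `sum`, and `histogram_quantile` are standard.

```python
def per_second_rate(counter: str, selector: str, window: str = "5m") -> str:
    """PromQL for the summed per-second rate of a counter metric."""
    return f"sum(rate({counter}{{{selector}}}[{window}]))"


def p99_latency(histogram: str, selector: str, window: str = "5m") -> str:
    """PromQL for the 99th-percentile latency of a histogram metric."""
    return (
        "histogram_quantile(0.99, "
        f"sum by (le) (rate({histogram}_bucket{{{selector}}}[{window}])))"
    )


# Scenario 2: database read throughput (hypothetical metric and label names).
db_read_query = per_second_rate("db_read_bytes_total", 'instance="catalog-db"')

# Scenario 1: LLM endpoint response time (hypothetical metric and label names).
llm_p99_query = p99_latency("llm_request_duration_seconds", 'provider="openai"')

print(db_read_query)
print(llm_p99_query)
```

Either printed expression can be pasted into a PromQL query editor and scoped to the same time window as the failing spans.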

This is a key architectural decision: metrics continue to live in Amazon Managed Service for Prometheus, logs and traces continue to live in Amazon OpenSearch Service, and neither signal is copied or warehoused into a second store. Each backend remains the single store for the data type it's purpose-built to handle, while OpenSearch UI federates queries across both at runtime. The cost, retention, and operational model of each store stay intact while the troubleshooting workflow collapses into a single interface.

To configure the OpenTelemetry Collector and OpenSearch Ingestion pipelines that route metrics into Amazon Managed Service for Prometheus, see Ingesting application telemetry.

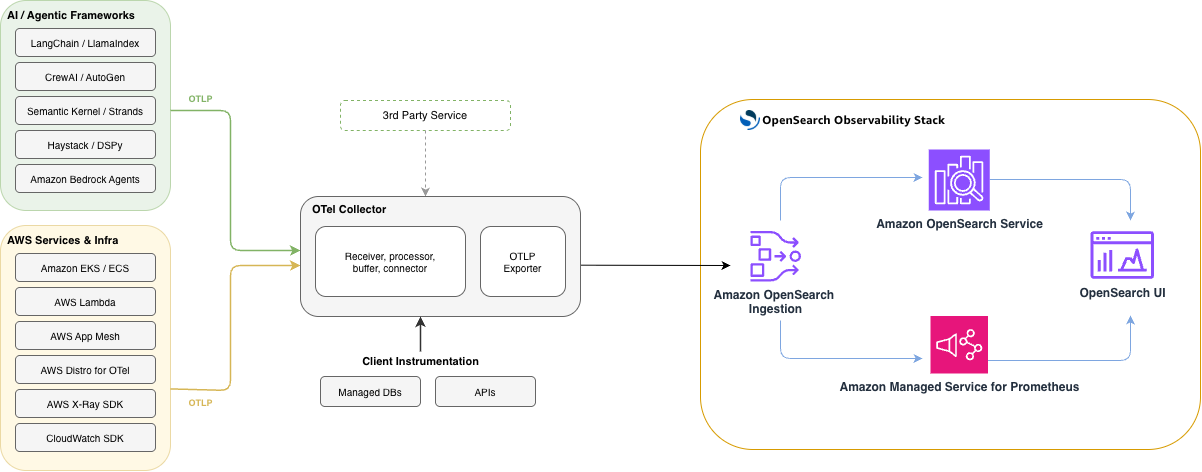

How it's wired together

The following diagram shows the end-to-end architecture. Applications instrumented with OpenTelemetry send traces, logs, and metrics over OTLP to Amazon OpenSearch Ingestion. OpenSearch Ingestion routes each signal to the appropriate store: traces and logs land in Amazon OpenSearch Service, while metrics flow into Amazon Managed Service for Prometheus. OpenSearch UI then queries both stores to render the Application Map, Services catalog, Agent Traces, and Metrics views.

The entire experience rests on open-source foundations: Prometheus for metrics, OpenSearch for logs and traces, and OpenTelemetry for instrumentation. Teams already running an OpenTelemetry collector can adopt it by updating the collector's export configuration to point at Amazon OpenSearch Ingestion, with no proprietary agents or rewritten instrumentation required.

Getting started

To enable these capabilities, log in to OpenSearch UI's observability workspace, select the gear icon in the bottom-left corner to open Settings and setup, and verify that the Observability:apmEnabled toggle is on under the Observability section. OpenSearch UI is available at no additional charge for Amazon OpenSearch Service customers.

Explore locally first. The OpenSearch Observability Stack gives you a fully configured environment, including application monitoring, agent tracing, and Prometheus integration, running on your machine with a single install command. It ships with sample instrumented services, including a multi-agent travel planner, so you can explore the full workflow with real telemetry data out of the box.

For AI agent development. Agent Health is an open-source, evaluation-driven observability tool designed for local development. It gives you execution flow graphs, token tracking, and tool invocation visibility right in your development loop, before you push to production.

For production. The Python SDK provides one-line setup and decorator-based tracing with gen_ai semantic conventions, with auto-instrumentation support for OpenAI, Anthropic, Amazon Bedrock, LangChain, LlamaIndex, and others. See the Amazon OpenSearch Service documentation and the Amazon Managed Service for Prometheus integration guide for the full managed experience.

About the authors