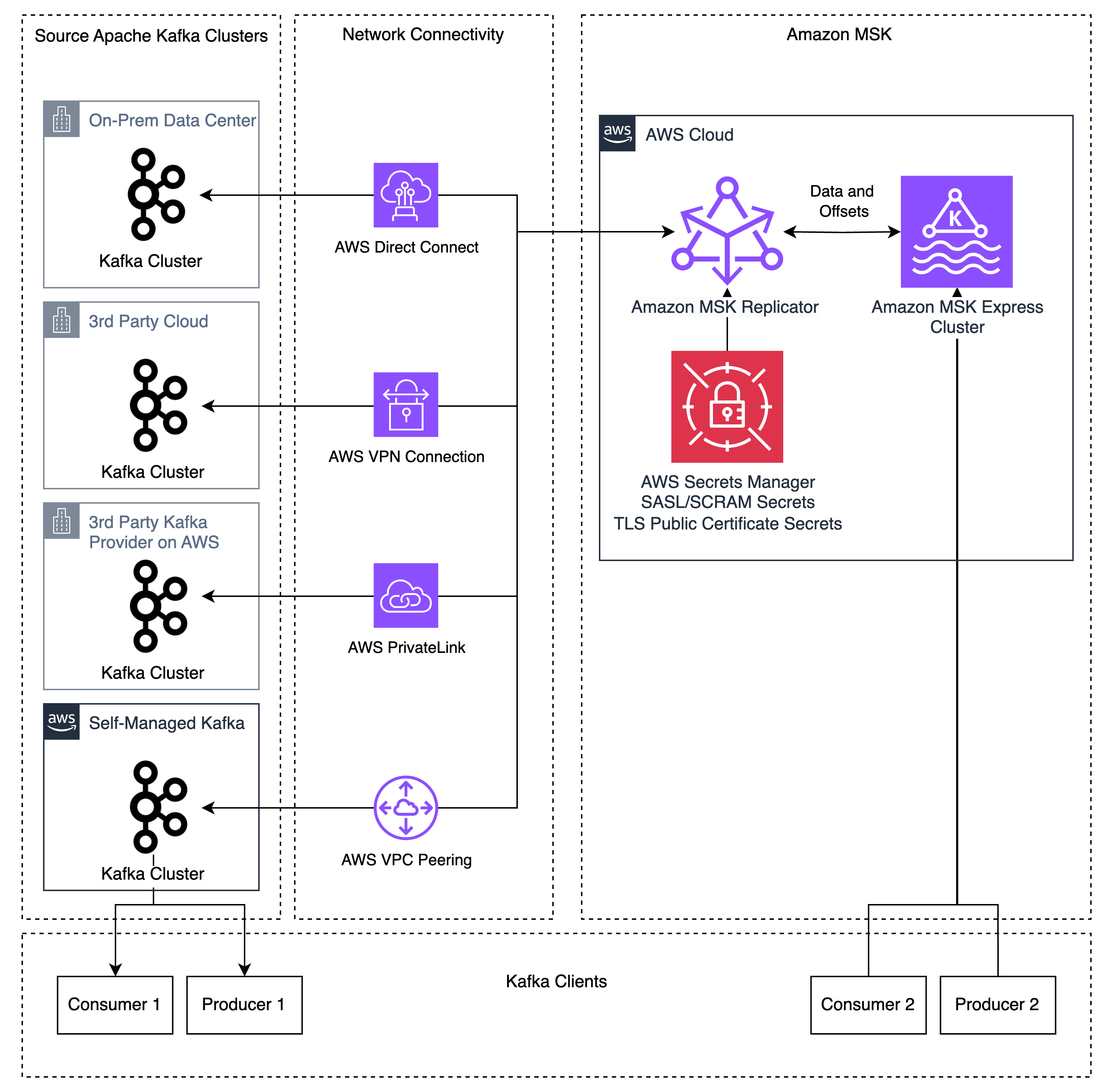

Migrating Apache Kafka workloads to the cloud often involves managing complex replication infrastructure, coordinating application cutovers with extended downtime windows, and maintaining deep expertise in open-source tools like Apache Kafka’s MirrorMaker 2 (MM2). These challenges slow down migrations and increase operational risk. Amazon MSK Replicator addresses these challenges, enabling you to migrate your Kafka deployments (referred to as “external” Kafka clusters) to Amazon MSK Express brokers with minimal operational overhead and reduced downtime. MSK Replicator supports data migration from Kafka deployments (version 2.8.1 or later) that have SASL/SCRAM authentication enabled, including Kafka clusters running on-premises, on AWS, or on other cloud providers, as well as Kafka-protocol-compatible services like Confluent Platform, Aiven, Redpanda, WarpStream, or AutoMQ when configured with SASL/SCRAM authentication.

In this post, we walk you through how to replicate Apache Kafka data from your external Apache Kafka deployments to Amazon MSK Express brokers using MSK Replicator. You’ll learn how to configure authentication on your external cluster, establish network connectivity, set up bidirectional replication, and monitor replication health to achieve a low-downtime migration.

How it works

MSK Replicator is a fully managed serverless service that replicates topics, configurations, and offsets from cluster to cluster. It alleviates the need to manage complex infrastructure or configure open-source tools.

Before MSK Replicator, customers used tools like MM2 for migrations. These tools lack bidirectional topic replication when using the same topic names, which forces complex application architectures that consume different topics on different clusters. Custom replication policies in MM2 can allow identical topic names, but MM2 still lacks bidirectional offset replication because the MM2 architecture requires producers and consumers to run on the same cluster to replicate offsets. This led to complex migrations that required either migrating consumers before producers or big-bang migrations that move all applications at once. When customers run into issues during the migration, the rollback process is error-prone and introduces large amounts of duplicate message processing because of the lack of consumer group offset synchronization. These approaches create risk and complexity that make migrations difficult to manage.

MSK Replicator addresses these problems by supporting bidirectional replication of data and enhanced consumer group offset synchronization. MSK Replicator copies topics and offsets from an external Kafka cluster to MSK, allowing you to preserve the same topic and consumer group names on both clusters. MSK Replicator also supports creating a second Replicator instance for bidirectional replication of both data and enhanced offset synchronization, allowing producers and consumers to run independently on different Kafka clusters. Data published or consumed on the Amazon MSK cluster is replicated back to the external cluster by the second Replicator. This works regardless of the order in which producers and consumers are migrated, so you don’t need to worry about dependencies between applications.

Because MSK Replicator provides bidirectional data replication and enhanced consumer group offset synchronization, you can move producers and consumers at your own pace without data loss. This reduces migration complexity, allowing you to migrate applications between your external Kafka cluster and Amazon MSK regardless of order. If you run into problems during the migration, enhanced offset synchronization allows you to roll back changes by moving applications back to the external Kafka cluster, where they restart from the latest checkpoint from the Amazon MSK cluster.

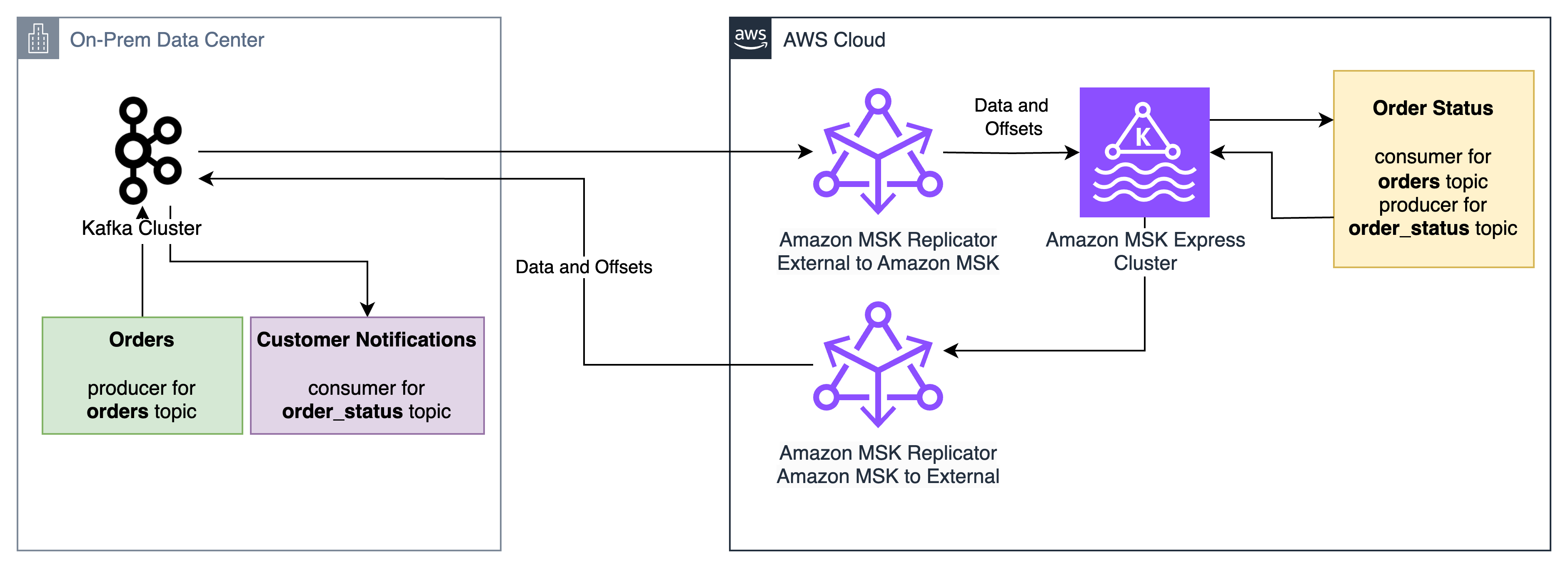

For example, consider three applications:

- The “Orders” application, which accepts incoming orders and writes them to the orders Kafka topic

- The “Order status” application, which reads from the orders Kafka topic and writes status updates to the order_status topic

- The “Customer notification” application, which reads from the order_status topic and notifies customers when the status changes

MSK Replicator enables these applications to be migrated between an on-premises Apache Kafka cluster and an Amazon MSK Express cluster with low downtime and no data loss, regardless of order. The “Order status” application can migrate first, receive orders from the on-premises “Orders” application, and send status updates to the on-premises “Customer notification” application. If issues arise during the migration, the “Order status” application can roll back to the on-premises cluster, and its consumer group offsets for the orders topic will be ready for it to pick up from where it left off on the Amazon MSK cluster.

MSK Replicator supports data distribution across hybrid and multi-cloud environments for analytics, compliance, and business continuity. It can also be configured for disaster recovery scenarios where Amazon MSK Express serves as a resilient target for your external Kafka clusters.

If you are currently using MM2 for replication, see Amazon MSK Replicator and MirrorMaker2: Choosing the right replication strategy for Apache Kafka disaster recovery and migrations to understand which solution best fits your use case.

Solution overview

MSK Replicator supports Kafka deployments running version 2.8.1 or later as a source, including third-party managed Kafka services, self-managed Kafka, and on-premises or third-party cloud-hosted Kafka. MSK Replicator automatically handles data transfer, uses SASL/SCRAM authentication with SSL encryption, and maintains consumer group positions across both clusters. If you don’t use SASL/SCRAM today, you can configure it as a new listener used only by MSK Replicator, so that existing clients keep using their existing authentication mechanisms alongside MSK Replicator.

Prerequisites

To follow along with this walkthrough, you need the following resources in place:

Setting up replication

Step 1: Configure network connectivity

You can set up network connectivity between your external Kafka cluster and your AWS VPC using methods such as AWS Direct Connect for dedicated network connections, AWS Site-to-Site VPN for encrypted connections over the internet, and AWS VPC peering or AWS Transit Gateway for connections between AWS VPCs. Verify that IP routing and DNS resolution are properly configured between your external cluster and AWS.

To verify IP routing and DNS resolution, connect to your external Kafka cluster from within your VPC by using the Kafka CLI to list topics on the external cluster. If you can list topics from your VPC using the Kafka CLI, DNS resolution and IP routing are working. If it fails, work with your network admins to troubleshoot network connectivity issues.
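When the Kafka CLI check fails, it can help to separate DNS problems from routing or firewall problems before involving the network team. The following is a minimal Python sketch using only the standard library. The function names are illustrative and not part of any Kafka or AWS tooling, and the check does not exercise SASL/SCRAM or TLS, so it complements the topic-listing test rather than replacing it.

```python
import socket


def parse_bootstrap_servers(bootstrap: str) -> list[tuple[str, int]]:
    """Split a 'host1:port,host2:port' string into (host, port) pairs."""
    pairs = []
    for entry in bootstrap.split(","):
        entry = entry.strip()
        if not entry:
            continue
        host, _, port = entry.rpartition(":")
        if not host or not port.isdigit():
            raise ValueError(f"expected host:port, got {entry!r}")
        pairs.append((host, int(port)))
    return pairs


def check_broker(host: str, port: int, timeout: float = 5.0) -> str:
    """Return 'ok', 'dns-failure', or 'tcp-failure' for one broker."""
    try:
        # Step 1: can the name be resolved from inside the VPC?
        socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    except socket.gaierror:
        return "dns-failure"
    try:
        # Step 2: can a TCP connection be opened to the broker port?
        with socket.create_connection((host, port), timeout=timeout):
            return "ok"
    except OSError:
        return "tcp-failure"
```

Run from a host inside the VPC, calling `check_broker` for each pair returned by `parse_bootstrap_servers("your-broker-host:9096")` reports which stage fails for each broker.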

Step 2: Configure external cluster

In this step, you’ll set up authentication on your external Kafka cluster and store the credentials in AWS Secrets Manager so that MSK Replicator can connect securely.

Configure authentication

Using the external cluster admin user, configure SASL/SCRAM authentication for MSK Replicator using SHA-256 or SHA-512 on your external Kafka cluster. Create a SASL/SCRAM user for MSK Replicator and give the user the following ACL permissions:

- Topic operations – Alter, AlterConfigs, Create, Describe, DescribeConfigs, Read, Write

- Group operations – Read, Describe

- Cluster operations – Create, ClusterAction, Describe, DescribeConfigs
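As an illustration, the permissions above could be turned into `kafka-acls.sh` invocations with a small helper like the one below. This is a sketch under assumptions: the principal name `msk-replicator`, the `admin.properties` file, and the choice to grant on all topics and groups with the `*` wildcard are placeholders, so narrow the resource names if your security policy requires it.

```python
import shlex

# Kafka ACL operation names for the permissions listed above.
ACL_OPERATIONS = {
    "topic": ["Alter", "AlterConfigs", "Create", "Describe",
              "DescribeConfigs", "Read", "Write"],
    "group": ["Read", "Describe"],
    "cluster": ["Create", "ClusterAction", "Describe", "DescribeConfigs"],
}


def build_acl_commands(bootstrap: str, principal: str,
                       command_config: str = "admin.properties") -> list[list[str]]:
    """Build one kafka-acls.sh argument list per resource type."""
    commands = []
    for resource, operations in ACL_OPERATIONS.items():
        cmd = [
            "bin/kafka-acls.sh",
            "--bootstrap-server", bootstrap,
            "--command-config", command_config,
            "--add",
            "--allow-principal", f"User:{principal}",
        ]
        for operation in operations:
            cmd += ["--operation", operation]
        if resource == "cluster":
            cmd.append("--cluster")
        else:
            # Assumption: grant on every topic/group via the literal wildcard.
            cmd += [f"--{resource}", "*"]
        commands.append(cmd)
    return commands


# Print the commands for review instead of executing them.
for command in build_acl_commands("your-broker-host:9096", "msk-replicator"):
    print(shlex.join(command))
```

Printing the commands first keeps a human in the loop before any ACL is changed on the external cluster.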

Configure Secrets Manager

AWS Secrets Manager stores your SASL/SCRAM credentials securely so that MSK Replicator can retrieve them at runtime. The secret must use JSON format and have the following keys:

- username – The SCRAM username that you configured in the authentication step above

- password – The SCRAM password that you configured in the authentication step above

- certificate – The public root CA certificate (the top-level certificate authority that issued your cluster’s TLS certificate) and the intermediate CA chain (intermediate certificates between the root and your cluster’s certificate), used for SSL handshakes with the external cluster

Optionally, you can create separate secrets for the SCRAM credentials and the SSL certificate. This approach is useful when secrets for SCRAM credentials and certificates are provisioned in different stages, such as in Infrastructure as Code (IaC) pipelines.
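A short sketch of assembling the single-secret JSON payload follows. The key names are the ones described above, spelled here as `username`, `password`, and `certificate`; confirm the exact spelling against the MSK Replicator documentation before use. The PEM sanity check and the function name are additions for illustration only.

```python
import json

PEM_HEADER = "-----BEGIN CERTIFICATE-----"


def build_replicator_secret(username: str, password: str, ca_chain_pem: str) -> str:
    """Return the JSON string to store as the secret value."""
    if not username or not password:
        raise ValueError("username and password must be non-empty")
    if PEM_HEADER not in ca_chain_pem:
        # Illustrative check only: catches pasting a file path instead of PEM text.
        raise ValueError("ca_chain_pem does not look like PEM-encoded certificates")
    return json.dumps({
        "username": username,
        "password": password,
        "certificate": ca_chain_pem,
    })
```

The returned string can be supplied as the secret value when you create the secret. Avoid printing or logging it, because it contains the SCRAM password.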

Retrieve the cluster ID

As the admin user, use the Kafka CLI tools to retrieve the cluster ID of your external cluster. Run the following command, replacing your-broker-host:9096 with the address of one of your external cluster’s bootstrap servers:

    bin/kafka-cluster.sh cluster-id --bootstrap-server your-broker-host:9096 --config admin.properties

The command returns a cluster ID string such as lkc-abc123. Take note of this value because you will need it when creating the replicator in Step 4.

Step 3: Create your MSK Express target cluster

With your external cluster configured, you can now set up the target. Create an Amazon MSK Express cluster with IAM authentication enabled. Make sure that the cluster is in subnets that have access to AWS Secrets Manager endpoints. See Get started using Amazon MSK for more information on creating an MSK cluster.

Step 4: Create the replicator

Now that both clusters are ready, you can connect them by setting up the MSK Replicator with the appropriate IAM role and replication configuration.

Set up an IAM role for MSK Replicator

MSK Replicator needs an IAM role to interact with your MSK Express cluster and retrieve secrets. Set up a service execution IAM role with a trust policy allowing kafka.amazonaws.com and attach the AWSMSKReplicatorExecutionRole permissions policy. Take note of the role ARN for creating the replicator.
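For reference, a trust policy that lets the kafka.amazonaws.com service principal assume the role has the standard IAM shape sketched below. The helper name is illustrative, and the sketch omits optional condition keys that your organization may require.

```python
import json


def build_trust_policy(service_principal: str = "kafka.amazonaws.com") -> dict:
    """Return an IAM trust policy allowing the service to assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": service_principal},
                "Action": "sts:AssumeRole",
            }
        ],
    }


# Serialize for use as the role's trust (assume-role) policy document.
print(json.dumps(build_trust_policy(), indent=2))
```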

Create and attach a policy for accessing your Secrets Manager secrets and reading/writing data in your MSK cluster. See Creating roles and attaching policies (console) for more information on creating IAM roles and policies.

The following is an example policy for reading and writing data to your MSK cluster and reading KMS-encrypted Secrets Manager secrets:

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Sid": "SecretsManagerAccess",
          "Effect": "Allow",
          "Action": [
            "secretsmanager:GetSecretValue",
            "secretsmanager:DescribeSecret"
          ],
          "Resource": [
            "",
            ""
          ]
        },
        {
          "Sid": "KMSDecrypt",
          "Effect": "Allow",
          "Action": "kms:Decrypt",
          "Resource": ""
        },
        {
          "Sid": "TargetClusterAccess",
          "Effect": "Allow",
          "Action": [
            "kafka-cluster:Connect",
            "kafka-cluster:DescribeCluster",
            "kafka-cluster:AlterCluster",
            "kafka-cluster:DescribeClusterDynamicConfiguration",
            "kafka-cluster:AlterClusterDynamicConfiguration",
            "kafka-cluster:DescribeTopic",
            "kafka-cluster:CreateTopic",
            "kafka-cluster:AlterTopic",
            "kafka-cluster:DescribeTopicDynamicConfiguration",
            "kafka-cluster:AlterTopicDynamicConfiguration",
            "kafka-cluster:WriteData",
            "kafka-cluster:WriteDataIdempotently",
            "kafka-cluster:ReadData",
            "kafka-cluster:DescribeGroup",
            "kafka-cluster:AlterGroup"
          ],
          "Resource": [
            "arn:aws:kafka:::cluster/*/*",
            "arn:aws:kafka:::topic//*",
            "arn:aws:kafka:::group/*/*"
          ]
        },
        {
          "Sid": "CloudWatchLogsAccess",
          "Effect": "Allow",
          "Action": [
            "logs:CreateLogStream",
            "logs:PutLogEvents",
            "logs:DescribeLogStreams"
          ],
          "Resource": ""
        }
      ]
    }

Create the replicator for external to MSK replication

Use the AWS CLI, API, or console to create your replicator. Here’s an example using the AWS CLI:

    aws kafka create-replicator \
      --replicator-name external-to-msk \
      --service-execution-role-arn "arn:aws:iam::123456789012:role/MSKReplicatorRole" \
      --kafka-clusters file://./kafka-clusters.json \
      --replication-info-list file://./replication-info.json \
      --log-delivery file://./log-delivery.json \
      --region us-east-1

The kafka-clusters.json file defines the source and target Kafka cluster connection information, replication-info.json specifies which topics to replicate and how to handle consumer group offset synchronization, and log-delivery.json specifies the CloudWatch logging configuration. The following tables describe the required parameters:

CLI inputs:

| CLI Parameter | Description | Example |
|---|---|---|
| replicator-name | The name of the replicator | external-to-msk |
| service-execution-role-arn | The ARN for the service execution IAM role you created | arn:aws:iam::123456789012:role/MSKReplicatorRole |
| kafka-clusters | The Kafka cluster connection information | See below |
| replication-info-list | The replication configuration | See below |
| log-delivery | The logging configuration | See below |

Key kafka-clusters.json inputs:

| CLI Parameter | Description | Example |
|---|---|---|
| ApacheKafkaClusterId | The cluster ID retrieved in Step 2 | lkc-abc123 |
| RootCaCertificate | The Secrets Manager ARN containing the public CA certificate and intermediate CA chain | arn:aws:secretsmanager: |
| MskClusterArn | The ARN for the MSK Express cluster | arn:aws:kafka: |
| SecretArn | The Secrets Manager ARN containing the SASL/SCRAM username and password | arn:aws:secretsmanager: |
| SecurityGroupIds | The security group IDs for MSK Replicator | sg-0123456789abcdef0 |

Key replication-info.json inputs:

| CLI Parameter | Description | Example |
|---|---|---|
| TargetCompressionType | The compression type to use for replicating data | LZ4 |
| TopicsToReplicate | The list of topics to replicate (use [".*"] for all topics) | ["my-topic"] |
| ConsumerGroupsToReplicate | The list of consumer groups to replicate | ["my-group"] |
| StartingPosition | The point in the Kafka topics to begin replication from (either EARLIEST or LATEST) | EARLIEST |
| ConsumerGroupOffsetSyncMode | Whether or not to use enhanced bidirectional consumer group offset synchronization | ENHANCED |

Note that StartingPosition is set to EARLIEST in the configuration below, which means the replicator starts reading from the oldest available offset on each topic. This is the recommended setting for migrations to avoid data loss.

Key log-delivery.json inputs:

| CLI Parameter | Description | Example |
|---|---|---|
| Enabled | Enables or disables CloudWatch logging | true |
| LogGroup | The name of the CloudWatch Logs log group to log to | /msk/replicator/my-replicator |

Additional log delivery methods for Amazon S3 and Amazon Data Firehose are supported. In this post, we use CloudWatch logging.

The configs should look like the following for external to MSK replication.

kafka-clusters.json:

    [
      {
        "ApacheKafkaCluster": {
          "ApacheKafkaClusterId": "lkc-abc123",
          "BootstrapBrokerString": "broker1.example.com:9096"
        },
        "ClientAuthentication": {
          "SaslScram": {
            "Mechanism": "SHA512",
            "SecretArn": "arn:aws:secretsmanager:::secret:my-creds"
          }
        },
        "EncryptionInTransit": {
          "EncryptionType": "TLS",
          "RootCaCertificate": "arn:aws:secretsmanager:::secret:my-cert"
        }
      },
      {
        "AmazonMskCluster": {
          "MskClusterArn": "arn:aws:kafka:::cluster/my-cluster/abc-123"
        },
        "VpcConfig": {
          "SecurityGroupIds": ["sg-0123456789abcdef0"],
          "SubnetIds": ["subnet-abc123", "subnet-abc124", "subnet-abc125"]
        }
      }
    ]

replication-info.json:

    [
      {
        "SourceKafkaClusterId": "lkc-abc123",
        "TargetKafkaClusterArn": "arn:aws:kafka:::cluster/my-cluster/abc-123",
        "TargetCompressionType": "LZ4",
        "TopicReplication": {
          "TopicsToReplicate": ["my-topic"],
          "CopyTopicConfigurations": true,
          "CopyAccessControlListsForTopics": true,
          "DetectAndCopyNewTopics": true,
          "StartingPosition": {"Type": "EARLIEST"},
          "TopicNameConfiguration": {"Type": "IDENTICAL"}
        },
        "ConsumerGroupReplication": {
          "ConsumerGroupsToReplicate": ["my-group"],
          "SynchroniseConsumerGroupOffsets": true,
          "DetectAndCopyNewConsumerGroups": true,
          "ConsumerGroupOffsetSyncMode": "ENHANCED"
        }
      }
    ]

log-delivery.json:

    {
      "ReplicatorLogDelivery": {
        "CloudWatchLogs": {
          "Enabled": true,
          "LogGroup": ""
        }
      }
    }

Configure bidirectional replication from MSK to the external cluster

To enable bidirectional replication, create a second replicator that replicates in the opposite direction. Use the same IAM role and network configuration from Step 4, but swap the source and target: in a new msk-to-external-replication-info.json file, replace SourceKafkaClusterId with TargetKafkaClusterId and TargetKafkaClusterArn with SourceKafkaClusterArn, so that the MSK cluster ARN becomes the source and the external cluster ID becomes the target. Then create the second replicator with that file.
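One way to derive the reverse file from the forward one is sketched below. It applies only the field swap described in the paragraph above and carries every other setting over unchanged, which is an assumption: review the topic, consumer group, and starting-position settings before using the result. The function name and the file handling suggested afterward are illustrative.

```python
import copy
import json


def reverse_replication_info(forward: list[dict]) -> list[dict]:
    """Swap source and target so the MSK cluster replicates to the external cluster."""
    reverse = []
    for entry in forward:
        rest = copy.deepcopy(entry)  # leave the caller's data untouched
        external_cluster_id = rest.pop("SourceKafkaClusterId")
        msk_cluster_arn = rest.pop("TargetKafkaClusterArn")
        reverse.append({
            "SourceKafkaClusterArn": msk_cluster_arn,
            "TargetKafkaClusterId": external_cluster_id,
            **rest,
        })
    return reverse


# Example with the placeholder values used in this post.
forward = [{
    "SourceKafkaClusterId": "lkc-abc123",
    "TargetKafkaClusterArn": "arn:aws:kafka:::cluster/my-cluster/abc-123",
    "TargetCompressionType": "LZ4",
}]
print(json.dumps(reverse_replication_info(forward), indent=2))
```

In practice you would load replication-info.json with `json.load`, pass the list through this function, and write the result to msk-to-external-replication-info.json. With that file in place, the second replicator can be created with the command that follows.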

    aws kafka create-replicator \
      --replicator-name msk-to-external \
      --service-execution-role-arn "arn:aws:iam::123456789012:role/MSKReplicatorRole" \
      --kafka-clusters file://./kafka-clusters.json \
      --replication-info-list file://./msk-to-external-replication-info.json \
      --log-delivery file://./log-delivery.json \
      --region us-east-1

Monitoring replication health

Monitor your replication using Amazon CloudWatch metrics. Three key metrics to understand are MessageLag, SumOffsetLag, and ReplicationLatency. MessageLag measures how far behind the replicator is from the external cluster in terms of messages not yet replicated, while SumOffsetLag measures how far behind a consumer group is from the latest message in a topic. ReplicationLatency is the amount of latency between the source and target clusters in data replication. When all three reach a sustained low level, your clusters are fully synchronized for both data and consumer group offsets.

To troubleshoot MSK Replicator replication or errors, use the CloudWatch logs to get more details about the health of the replicator. MSK Replicator logs status and troubleshooting information that can help diagnose issues like connectivity, authentication, and SSL errors.

Note that replication is asynchronous, so there will be some lag during replication. The lag reaches zero once a client is shut down during migration to the target cluster. This takes about 30 seconds under normal operations, allowing a low-downtime migration without data loss. If your lag is continually increasing or doesn’t reach a sustained low level, this indicates that you have insufficient partitions for high-throughput replication. Refer to Troubleshoot MSK Replicator for more information on troubleshooting replication throughput and lag.

Key metrics include:

- MessageLag – Monitors the sync between the MSK Replicator and the source cluster. MessageLag indicates the lag between the messages produced to the source cluster and messages consumed by the replicator. It is not the lag between the source and target cluster.

- ReplicationLatency – Time taken for data to replicate from source to target cluster (ms)

- ReplicatorThroughput – Average number of bytes replicated per second

- ReplicatorFailure – Number of failures the replicator is experiencing

- KafkaClusterPingSuccessCount – Connection health indicator (1 = healthy, 0 = unhealthy)

- ConsumerGroupCount – Total consumer groups being synchronized

- ConsumerGroupOffsetSyncFailure – Failures during offset synchronization

- AuthError – Number of connections with failed authentication per second, by cluster

- ThrottleTime – Average time in ms a request was throttled by brokers, by cluster

- SumOffsetLag – Aggregated offset lag across partitions for a consumer group on a topic (MSK cluster-level metric)

For more details on these metrics, see the MSK Replicator metrics documentation.

Your applications are ready to migrate when the following conditions are met. For most workloads, you should expect these metrics to stabilize within a few hours of starting replication. High-throughput clusters may take longer depending on topic volume and partition count.

- ReplicatorFailure = 0

- ConsumerGroupOffsetSyncFailure = 0

- KafkaClusterPingSuccessCount = 1 for both source and target clusters

- MessageLag < 1,000

  - Your sustained lag may be lower or higher depending on your throughput per partition, message size, and other factors

  - Sustained high message lag usually indicates insufficient partitions for high-throughput replication

- ReplicationLatency < 90 seconds

  - Your sustained latency may be lower or higher depending on your throughput per partition, message size, and other factors

  - Sustained high latency usually indicates insufficient partitions for high-throughput replication

- SumOffsetLag is at a sustained low level on both clusters

  - Offset values on the two clusters may not be numerically identical.

  - MSK Replicator translates offsets between clusters so that consumers resume from the correct position, but the raw offset numbers can differ because of how offset translation works. What matters is that SumOffsetLag is at a sustained low level.

- ConsumerGroupCount (MSK) = Expected count (external cluster)

  - If ConsumerGroupCount is zero or doesn’t match the expected count, there is an issue in the Replicator configuration or a permissions issue preventing consumer group synchronization
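The numeric conditions above can be expressed as a simple readiness check. The sketch below assumes you have already collected the latest metric values (for example, from CloudWatch) into a small data structure; the class and field names are illustrative. SumOffsetLag is left out because the guidance gives no fixed threshold for it, only “a sustained low level”, and the 1,000 and 90-second limits are exposed as parameters because your sustained values may differ.

```python
from dataclasses import dataclass


@dataclass
class ReplicatorMetrics:
    replicator_failure: float
    offset_sync_failure: float
    ping_success_source: float
    ping_success_target: float
    message_lag: float
    replication_latency_ms: float  # the metric is reported in milliseconds
    consumer_group_count: int
    expected_consumer_group_count: int


def readiness_blockers(m: ReplicatorMetrics,
                       max_message_lag: float = 1_000,
                       max_latency_ms: float = 90_000) -> list[str]:
    """Return the unmet conditions; an empty list means ready to migrate."""
    blockers = []
    if m.replicator_failure != 0:
        blockers.append("ReplicatorFailure is not 0")
    if m.offset_sync_failure != 0:
        blockers.append("ConsumerGroupOffsetSyncFailure is not 0")
    if m.ping_success_source != 1 or m.ping_success_target != 1:
        blockers.append("KafkaClusterPingSuccessCount is not 1 on both clusters")
    if m.message_lag >= max_message_lag:
        blockers.append(f"MessageLag {m.message_lag} is not below {max_message_lag}")
    if m.replication_latency_ms >= max_latency_ms:
        blockers.append(
            f"ReplicationLatency {m.replication_latency_ms} ms is not below {max_latency_ms} ms"
        )
    if (m.consumer_group_count == 0
            or m.consumer_group_count != m.expected_consumer_group_count):
        blockers.append("ConsumerGroupCount does not match the expected count")
    return blockers
```

Because the conditions refer to sustained levels, evaluate the check over several consecutive data points rather than a single sample.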

Migrating your applications

With bidirectional consumer offset synchronization, you can migrate your producers and consumers regardless of order. Start by monitoring replication metrics until they reach the target values described in the previous section. Then migrate your applications (producers or consumers) to use the MSK Express cluster endpoints and verify that they’re producing and consuming as expected. If you encounter issues, you can roll back by switching applications back to the external cluster. Consumer offset synchronization makes sure that your applications resume from their last committed position regardless of which cluster they connect to.

For a comprehensive, hands-on walkthrough of the end-to-end migration process, explore the MSK Migration Workshop, which provides step-by-step guidance for migrating your Kafka workloads to Amazon MSK.

Security considerations

MSK Replicator uses SASL/SCRAM authentication with SSL encryption for secure data transfer between your external cluster and AWS. The solution supports both publicly trusted certificates and private or self-signed certificates. Credentials are stored securely in AWS Secrets Manager, and the target MSK Express cluster uses IAM authentication for access control.

When configuring security, keep the following in mind:

- Make sure that the IAM role you create in Step 4 follows the principle of least privilege. Attach only AWSMSKReplicatorExecutionRole and an IAM policy for Secrets Manager with least-privilege access to read secret values, and avoid adding broader permissions.

- Verify that your Secrets Manager secret is encrypted with an AWS KMS key that the MSK Replicator service execution role has permission to decrypt.

- Confirm that the security groups assigned to MSK Replicator allow outbound traffic to your external cluster’s broker ports (typically 9096 for SASL/SCRAM with TLS) and to the MSK Express cluster.

- Rotate your SASL/SCRAM credentials periodically and update the corresponding Secrets Manager secret. MSK Replicator picks up the new credentials automatically on the next connection attempt.

Under the AWS shared responsibility model, AWS is responsible for securing the underlying infrastructure that runs MSK Replicator, including the compute, storage, and networking resources. You’re responsible for configuring authentication mechanisms (SASL/SCRAM), managing credentials in AWS Secrets Manager, configuring network security (security groups and VPC settings), implementing IAM policies following least privilege, and rotating credentials. For more information, see Security in Amazon MSK in the Amazon MSK Developer Guide.

Cleanup

To avoid ongoing charges, delete the resources you created during this walkthrough. Start by deleting the replicators, because they depend on the other resources:

aws kafka delete-replicator --replicator-arn

After both replicators are deleted, you can remove the following resources if they were created solely for this walkthrough:

- The MSK Express cluster (deleting a cluster also removes its stored data, so verify that your applications have fully migrated before proceeding)

- The Secrets Manager secrets containing your SASL/SCRAM credentials and certificates

- The IAM role and policies created for MSK Replicator

You can verify that a replicator has been fully deleted by running aws kafka list-replicators and confirming it no longer appears in the output.
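That check can be scripted. The sketch below parses the JSON printed by the list command and looks for a replicator by name. The `Replicators` and `ReplicatorName` field names are assumptions based on the shape of the list response, so compare them with your own CLI output; the sketch also ignores pagination.

```python
import json


def replicator_still_listed(list_output: str, replicator_name: str) -> bool:
    """Return True if the named replicator appears in the list output."""
    data = json.loads(list_output)
    return any(
        summary.get("ReplicatorName") == replicator_name
        for summary in data.get("Replicators", [])
    )
```

For example, capture the output of the list command in JSON form and pass it to `replicator_still_listed(output, "external-to-msk")`; a result of `False` for both replicator names indicates that cleanup of the replicators is complete.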

Conclusion

Amazon MSK Replicator simplifies the process of migrating to Amazon MSK Express brokers and establishing hybrid Kafka architectures. The fully managed service alleviates the operational complexity of managing replication, while bidirectional consumer offset synchronization enables flexible, low-risk application migration.

Next steps

To get started using MSK Replicator to migrate applications to MSK Express brokers, use the MSK Migration Workshop for a hands-on, end-to-end migration walkthrough. The Amazon MSK Replicator documentation includes detailed configuration information to help you configure MSK Replicator for your use case. From there, use MSK Replicator to migrate your Apache Kafka workloads to MSK Express brokers.

Once your migration is complete, consider exploring multi-Region replication patterns for disaster recovery, or integrating your MSK Express cluster with AWS analytics services such as Amazon Data Firehose and Amazon Athena. If you need help planning your migration, reach out to your AWS account team, AWS Support, or AWS Professional Services.

About the authors