Developers must navigate changing safety rules while preparing consumer robots, say Cooley experts. Source: Haris, AI, via Adobe Stock

The consumer robotics market is exploding – with the humanoid robotics segment alone projected to approach $34 billion by 2030. Humanoid robots that can perform household tasks, artificial intelligence-powered companions for elder care, autonomous lawn maintenance systems, and interactive educational robots are moving from prototypes to production.

Major retailers are scrambling for innovative products to meet surging demand – with 65% of U.S. households already using AI-powered devices. The technology offers great promise. The market is hungry for it. And companies now face a critical strategic decision as they race to bring their innovative products to market: how to navigate fundamentally different regulatory approaches in their key markets.

EU and U.S. take divergent approaches

The European Union and U.S. have so far chosen opposite paths for regulating AI-powered consumer products. The EU Machinery Regulation, which replaces the EU Machinery Directive and takes full effect in January 2027, creates baseline requirements for selling robots in Europe, including those incorporating AI.

The EU AI Act establishes a comprehensive ex-ante framework with considerably more regulatory clarity than the U.S. offers. The AI Act’s risk-based classification system provides defined categories and defined obligations, particularly for AI deemed to be “high risk.” Robotics incorporating AI will often fall into this category where the AI acts as a safety component.

On the other hand, the U.S. currently has no single, national regulatory framework for AI. Instead, individual states have adopted varying approaches, including passing new guardrails on AI such as Colorado’s AI Act, Texas’ Responsible AI Governance Act (HB 1709), and California’s Transparency in Frontier Artificial Intelligence Act.

The Federal Trade Commission (FTC) and state attorneys general are establishing AI boundaries through case-by-case enforcement under existing legal frameworks, including actions under existing consumer protection authority. And the Consumer Product Safety Commission (CPSC) is in wait-and-see mode on consumer robotics while participating in related voluntary standards efforts.

Recent policy developments signal potential shifts in the federal approach. Executive Order 14179, issued in January 2025, revoked the previous administration’s comprehensive AI order and established a new framework emphasizing private-sector innovation and reduced regulatory barriers.

The order directs agencies to eliminate policies that unduly restrict AI development while maintaining focus on national security and international competitiveness. This signals a regulatory philosophy favoring market-driven development over prescriptive federal frameworks.

Legislative efforts are also under way that could further shape the federal landscape. Sen. Marsha Blackburn (R-Tenn.) has proposed a national policy framework for AI that would, among other things, seek to codify elements of the executive order’s approach and potentially preempt certain state AI laws. If enacted, it could significantly alter the patchwork of state-level requirements companies currently face.

The current U.S. environment presents both challenges and opportunities for consumer robotics manufacturers and developers of AI-enabled products. The lack of clear ex-ante rules creates uncertainty, particularly for companies accustomed to defined compliance frameworks.

However, it also creates space for product development responsive to market needs rather than predetermined regulatory categories. Working with experienced advisors – including legal counsel specializing in product safety, privacy, and AI regulation – is essential for navigating U.S. market entry.

Three strategic compliance priorities

1. Product safety standards

Industry safety standards for consumer robots have initially drawn from automotive and industrial robot rules. This approach has considerable merit, as these standards are time-tested.

However, this approach also has important limitations. Most importantly, the hazard scenarios contemplated by these standards do not always align with potential risks for in-home robot use, especially around vulnerable populations, such as children, older users, and individuals with disabilities.

In the industrial setting, for instance, risk is primarily managed by separation between humans and robots, which is the exact opposite of what is intended for in-home use. Because risk management will be different in many of these scenarios, consensus efforts are under way to develop and enhance meaningful baseline consumer robot safety standards that reasonably address in-home risk and give companies more of the design and development clarity they seek and need.

Companies should start, at a minimum, by monitoring the development of consensus standards for robotics and AI within organizations such as the International Organization for Standardization (ISO), as well as the National Institute of Standards and Technology (NIST). NIST has been actively developing AI-related frameworks and guidance, including its AI Risk Management Framework. Companies can also engage directly through their national standards delegation.

Companies should also develop a baseline framework that identifies any relevant mandatory requirements and maps to a reasonable hybrid from among the adjacent consensus standards. This development standards map will not be identical for every company, as it will be pegged to product design and risk tolerance. But whatever choices are made, they must be reasonable, well articulated and well documented to better withstand future legal and compliance scrutiny.

The current absence of federal mandatory safety standards for consumer robotics or AI in consumer products reflects the CPSC’s traditional approach of allowing industry-led development to proceed first. This differs significantly from the EU’s top-down regulatory approach, where many consumer robots will be required to undergo third-party conformity assessment under the Machinery Regulation and AI Act. The current U.S. policy environment favoring private-sector innovation suggests continued reliance on industry-led guidelines rather than prescriptive federal requirements.

Further, the traditional CPSC and EU jurisdictional boundary between software and hardware is evolving, with AI in consumer products increasingly likely to be treated as integrated component parts subject to product safety jurisdiction.

When a robot’s AI makes a decision that affects physical product behavior, the software cannot be meaningfully separated from the hardware for regulatory purposes. Companies should apply product-safety rigor to their AI systems, implementing thorough testing across both software and hardware components.

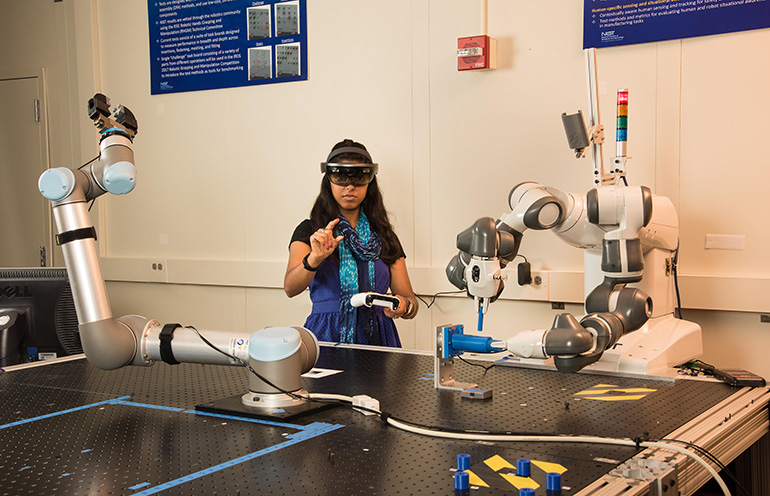

NIST has studied human-robot interaction. Credit: Earl Bukoff, NIST

2. Transparency about AI use and data practices

Transparency has become a priority focus for both regulators and the plaintiffs’ bar, creating significant considerations for companies bringing AI-powered products to market.

Consumer robotics presents unique disclosure challenges because these products interact closely with users in home environments, collecting operational data while employing AI systems that may not be immediately transparent to users. The FTC has brought enforcement actions against multiple companies regarding AI representations, and this enforcement activity is expected to continue as AI adoption expands across industries.

State attorneys general have similarly pursued AI-related investigations under existing consumer protection statutes. For example, in August 2025, Texas opened an investigation into AI chatbots related to potential deceptive trade practices and misleading mental health marketing. Likewise, in January 2026, California opened an investigation into nonconsensual sexually explicit material and deepfakes produced using a leading AI platform.

“AI Litigation 2.0” focuses significantly on how companies communicate about their AI capabilities and data practices to consumers. Indeed, “AI washing” – making exaggerated or unsubstantiated claims about a product’s AI capabilities – has become a distinct enforcement priority for the FTC, as demonstrated by recent actions against companies overstating the role or effectiveness of AI in their products.

The approach is straightforward: Describe AI capabilities with specificity and accuracy. Provide clear explanations of what the AI does, what data it processes, how long that data is retained, and how information is protected. While there is room for accessible language that communicates value to consumers and investors, broad or ambiguous characterizations can invite questions and potential challenges.

For companies deploying AI-powered consumer products at scale, thoughtful disclosure practices can serve multiple strategic purposes – building consumer trust, managing regulatory and litigation risks, and establishing defensible positions should questions arise. Companies that invest in clear, substantiated communications about their AI capabilities position themselves advantageously in an evolving regulatory and litigation environment.

The U.S. government has cracked down on deceptive AI claims. Source: FTC

3. Bias and discrimination prevention

Algorithmic bias and discrimination have become central concerns for AI regulators, particularly at the state level. State legislatures have enacted laws directly targeting algorithmic discrimination.

For example, Colorado’s AI Act prohibits “algorithmic discrimination” and imposes obligations on deployers of high-risk AI systems to avoid differential treatment or impact on protected groups, while Texas’s Responsible AI Governance Act similarly addresses bias in automated decision-making. These state-level requirements create significant compliance obligations for companies deploying AI-powered consumer products.

At the federal level, the FTC has historically taken the position that AI systems resulting in discriminatory outcomes can violate existing consumer-protection laws, even without explicit intent to discriminate, though the current administration’s policy direction – emphasizing private-sector innovation and questioning prescriptive algorithmic discrimination frameworks – may temper near-term federal enforcement in this area.

State regulators and attorneys general, however, are increasingly scrutinizing AI-powered products for potential bias, particularly in applications affecting vulnerable populations.

For consumer robotics, this creates both compliance obligations and reputational risk. A companion robot that responds differently based on accent or speech patterns, a children’s educational robot that recognizes some skin tones better than others in visual interactions, or a household assistant with voice recognition that performs inconsistently across age groups or genders presents both regulatory and liability concerns. Robots designed to interact with vulnerable populations – notably children, elderly users or individuals with disabilities – must perform equitably across user groups.

Companies should develop robust testing protocols to evaluate AI performance across diverse populations during development, monitor for bias indicators in deployed systems, and establish processes to address performance disparities when identified.
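As a rough illustration of what one such testing step might look like, the sketch below compares a recognition model’s accuracy across user groups and flags any group that trails the best-performing one. The function names, the group labels, and the 5-percentage-point gap are hypothetical choices made for this example; they are not drawn from any statute, regulator guidance, or consensus standard, and an appropriate threshold would depend on the product and its risk profile.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute per-group accuracy from (group, correct) pairs.

    `records` is an iterable of tuples: a group label (hypothetical, e.g.
    an age band) and a bool saying whether the model's output was correct.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, correct in records:
        totals[group] += 1
        hits[group] += int(correct)
    return {group: hits[group] / totals[group] for group in totals}


def flag_disparities(rates, max_gap=0.05):
    """Return groups whose accuracy trails the best group by more than max_gap.

    max_gap is an illustrative assumption, not a legal or standards-based value.
    """
    best = max(rates.values())
    return sorted(g for g, rate in rates.items() if best - rate > max_gap)


if __name__ == "__main__":
    # Made-up test results for a voice-recognition feature.
    results = (
        [("adult", True)] * 9 + [("adult", False)] * 1
        + [("child", True)] * 7 + [("child", False)] * 3
    )
    rates = accuracy_by_group(results)
    print(rates)
    print(flag_disparities(rates))
```

The same comparison could be rerun periodically on data from deployed systems, with the results documented so that any disparity and the company’s response to it are on record.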

Robotics and AI developers should evaluate performance with diverse populations. Credit: SpaceOak, via Adobe Stock

Navigate standards compliance strategically

The U.S. regulatory landscape differs fundamentally from the EU’s. Where the EU may, on paper, provide greater clarity through its prescriptive framework – though questions remain about implementation – the U.S. offers flexibility but less certainty.

The current policy environment in the U.S. emphasizes market-driven innovation over prescriptive federal frameworks, but the specific implications for consumer robotics regulation remain unclear. Companies that invest in understanding these dynamics, engage with standards development processes, and work with experienced advisors can more effectively navigate this landscape while positioning themselves for success as it evolves.

The market opportunity is substantial, particularly for early entrants that can meet consumer demand for these products. Companies that build reasonable compliance capabilities now – addressing not just physical safety requirements but also disclosure practices, data governance and liability risk management – will be positioned to capitalize on strong consumer demand while better managing compliance, emerging regulations, and litigation risk across their key markets.

About the authors

Elliot Kaye is a partner at law firm Cooley LLP and former chairman of the U.S. Consumer Product Safety Commission (CPSC), where he served as the chief product safety official in the U.S. and as the agency’s leader in executing its mandate to protect the public from dangerous products.

During his tenure, Elliot modernized the agency, particularly the CPSC’s design, staffing and utilization of its compliance, investigatory and enforcement powers. At Cooley, he advises clients on the full product life cycle, with a particular focus on the intersection of artificial intelligence and consumer goods, especially robots.

William K. Pao is co-head of Cooley’s AI Task Force and a litigation partner at the firm with over 20 years of experience serving as a trusted advisor and first-chair trial lawyer for global companies leading technological and financial innovation. He guides clients through their most complex litigation and regulatory exposures and is widely regarded as a go-to attorney for emerging technologies, novel legal questions, and cross-border disputes.

Philip Brown is a special counsel at Cooley with over 15 years of experience in product safety and consumer law, including over a decade in federal government enforcement at the CPSC and FTC. At Cooley, he advises global clients on product compliance risks, enforcement exposure, and litigation strategy.
