Databricks operates at a scale where our internal logs and datasets are constantly changing: schemas evolve, new columns appear, and data semantics drift. This blog discusses how we use Databricks internally at Databricks to keep PII and other sensitive data correctly labeled as our platform changes.

To do this, we built LogSentinel, an LLM-powered data classification system on Databricks that tracks schema evolution, detects labeling drift, and feeds high-quality labels into our governance and security controls. We use MLflow to track experiments and monitor performance over time, and we’re integrating the best ideas from LogSentinel back into the Databricks Data Classification product so customers can benefit from the same approach.

Why this System Matters

This system is designed to move three concrete business levers for platform, data and security teams:

- Shorter compliance cycles: recurring review tasks that previously took weeks of analyst time are now completed in hours because columns are pre-labeled and pre-triaged before humans look at them.

- Lower operational risk: the system continuously detects labeling drift and schema changes, so sensitive fields are less likely to quietly slip through with incorrect or missing tags.

- Stronger policy enforcement: reliable labels now directly drive masking, access control, retention, and residency rules, turning what used to be “best-effort governance” into executable policy.

In practice, teams can plug new tables into a standard pipeline, monitor drift metrics and exceptions, and rely on the system to enforce PII and residency constraints without building a bespoke classifier for every domain.

System Architecture at a Glance

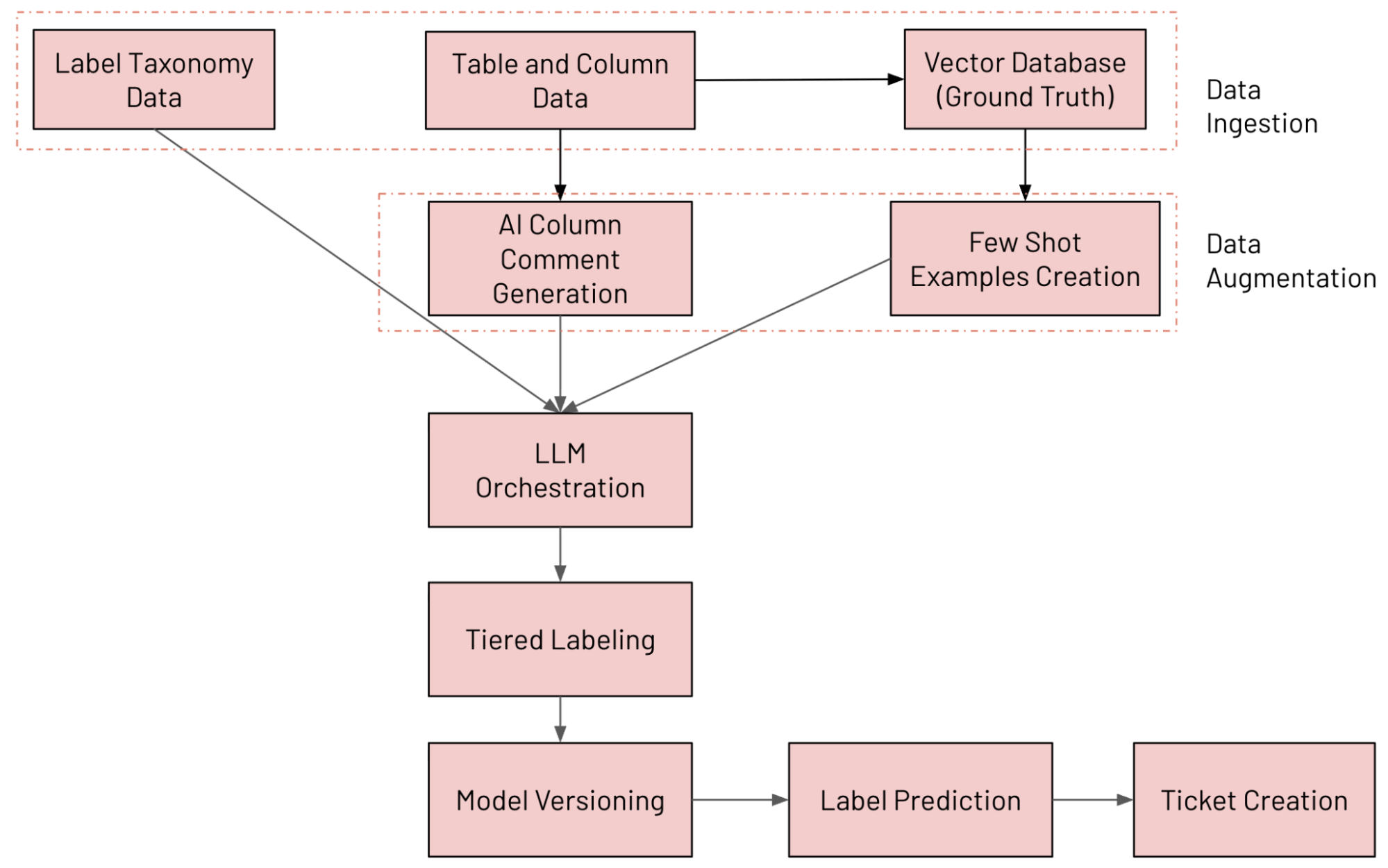

We built an LLM-powered column classification system on Databricks that continuously annotates tables using our internal data taxonomy, detects labeling drift, and opens remediation tickets when something looks wrong. The components of the system are outlined below (tracked and evaluated using MLflow):

- Data Ingestion: Ingesting diverse data sources (including Unity Catalog column data, label taxonomy data and ground truth data)

- Data Augmentation: Augmenting data using Databricks Vector Search and AI comment generation

- LLM Orchestration

- Tiered Labeling System

- Model Versioning: Running multiple models in parallel

- Label Prediction: Predicting the final label using a Mixture of Experts (MoE) approach

- Ticket Creation: Detecting violations and producing JIRA tickets

The end-to-end workflow is shown in the figure above.

Data Ingestion

For each log type or dataset to be annotated, we randomly sample values from every column and send the following metadata into the system: table name, column name, type, existing comment, and a small sample of values. To reduce LLM cost and improve throughput, multiple columns from the same table are batched together in a single request.
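The sampling and batching step can be sketched as follows. This is a minimal illustration, not the LogSentinel implementation: the `ColumnMetadata` class, the function names, the sample size `k`, and the `max_batch_size` limit are all assumptions introduced for the example.

```python
import random
from dataclasses import dataclass, asdict


@dataclass
class ColumnMetadata:
    # Fields mirror the metadata listed above; the class itself is hypothetical.
    table_name: str
    column_name: str
    column_type: str
    comment: str
    sample_values: list


def sample_column(table_name, column_name, column_type, comment, values, k=5, seed=None):
    """Randomly pick up to k non-null values from a column."""
    rng = random.Random(seed)
    non_null = [v for v in values if v is not None]
    picked = rng.sample(non_null, min(k, len(non_null)))
    return ColumnMetadata(table_name, column_name, column_type, comment, picked)


def batch_by_table(columns, max_batch_size=20):
    """Group columns of the same table into request payloads of bounded size."""
    by_table = {}
    for col in columns:
        by_table.setdefault(col.table_name, []).append(col)

    batches = []
    for table_name, cols in by_table.items():
        for start in range(0, len(cols), max_batch_size):
            chunk = cols[start:start + max_batch_size]
            batches.append({
                "table_name": table_name,
                "columns": [asdict(c) for c in chunk],
            })
    return batches
```

Each returned batch would become one LLM request, so columns from the same table share context while the request size stays bounded.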

Our taxonomy is defined using Protocol Buffers and currently includes more than 100 hierarchical data labels, with room for custom extensions when teams need additional categories. This gives governance and platform stakeholders a shared contract for what “PII” and “sensitive” mean beyond a handful of regexes.

Data Augmentation

Two augmentation strategies significantly improve classification quality:

- AI column comment generation: when comments are missing, we use Databricks AI-generated comments to synthesize concise, human-readable descriptions that help both the LLM and future table consumers.

- Few-shot example generation: we maintain a ground truth dataset and use both static examples and dynamic examples retrieved via Vector Search; for each column, we build an embedding from name, type, comment, and context, then retrieve the top-K similar labeled columns to include in the prompt.

Static prompting is best during early stages or when labeled data is limited, providing consistency and reproducibility. Dynamic prompting is more effective in mature systems, using vector search to pull similar examples and adapt to new schemas and data domains in large, diverse datasets.
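To make the dynamic few-shot idea concrete, here is a small self-contained sketch of top-K retrieval over labeled columns. It deliberately does not use the Databricks Vector Search API: a toy bag-of-tokens embedding and an in-memory cosine similarity stand in for a real embedding model and index, and the field names (`name`, `type`, `comment`, `label`) are assumptions made for the example.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: token counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse token-count vectors."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def column_text(col):
    """Build the text to embed from column name, type, and comment."""
    return " ".join([col["name"], col["type"], col.get("comment", "")])


def top_k_examples(query_col, labeled_cols, k=3):
    """Return up to k labeled columns most similar to the query column."""
    query_vec = embed(column_text(query_col))
    scored = [(cosine(query_vec, embed(column_text(c))), c) for c in labeled_cols]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [col for score, col in scored[:k] if score > 0]
```

The retrieved examples, together with their ground-truth labels, would then be formatted into the prompt ahead of the column being classified.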

LLM Orchestration

At the core of the system is a lightweight orchestration layer that manages LLM calls at production scale.

Key capabilities include:

- Multi-model routing across internally hosted LLMs (for example, Llama, Claude, and GPT-based models) with automatic fallback when a model is unavailable.

- Retry logic for transient failures and rate limits with exponential backoff.

- Validation hooks that detect empty, invalid, or hallucinated labels and re-run those cases with backup models.

- Batch processing that annotates multiple columns at once to optimize token usage without losing context.
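The retry, fallback, and validation behaviors listed above can be combined in a few lines. The sketch below is an assumption about how such a layer might look, not the production code: `TransientError`, the `(name, callable)` model list, and the default delays are hypothetical, and each model is represented by a plain function that takes a prompt and returns a label.

```python
import time


class TransientError(Exception):
    """Hypothetical marker for retryable failures such as timeouts or rate limits."""


def call_with_retry(model_fn, prompt, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call a model, retrying transient failures with exponential backoff."""
    for attempt in range(max_attempts):
        try:
            return model_fn(prompt)
        except TransientError:
            if attempt == max_attempts - 1:
                raise
            # Delay doubles on each retry: base_delay, 2 * base_delay, ...
            sleep(base_delay * 2 ** attempt)


def label_with_fallback(models, prompt, valid_labels, **retry_kwargs):
    """Try models in order; accept the first label that exists in the taxonomy."""
    for name, model_fn in models:
        try:
            label = call_with_retry(model_fn, prompt, **retry_kwargs)
        except TransientError:
            continue  # model unavailable after retries, move to the next one
        if label in valid_labels:
            return name, label
        # Empty, invalid, or hallucinated label: fall through to a backup model.
    return None, None
```

Passing `sleep` as a parameter keeps the backoff testable without real waiting; a caller that receives `(None, None)` would route the column to manual review.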

Tiered Labeling System

We predict three types of labels per column:

- Granular labels, drawn from a set of 100+ fine-grained options that power masking, redaction, and tight access controls.

- Hierarchical labels, which aggregate related granular labels into broader categories suitable for monitoring and reporting.

- Residency labels, which indicate whether data must remain in-region or can move cross-region, directly feeding data movement policies.

To keep predictions consistent and reduce hallucinations, we use a two-stage flow: a broad classification step assigns a high-level category, then a refinement step picks the specific label within that category. This mirrors how a human reviewer would first decide “this is workspace data” and then choose the specific workspace identifier label.
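A minimal sketch of the two-stage flow is shown below. The taxonomy entries are invented placeholders (the real taxonomy is defined in Protocol Buffers and is much larger), and `ask` is a hypothetical callable standing in for an LLM call that must pick one item from a list of options.

```python
# Hypothetical two-level taxonomy: category -> granular labels.
TAXONOMY = {
    "WORKSPACE_DATA": ["WORKSPACE_ID", "WORKSPACE_NAME"],
    "USER_PII": ["EMAIL", "PHONE_NUMBER"],
    "NON_SENSITIVE": ["NON_SENSITIVE"],
}


def choose(ask, column, options):
    """Ask the model to pick one option and reject anything outside the list."""
    answer = ask(column, options)
    if answer not in options:
        raise ValueError(f"model returned {answer!r}, which is not an allowed option")
    return answer


def classify_two_stage(ask, column, taxonomy=TAXONOMY):
    """Stage 1 picks a broad category; stage 2 picks a label within it."""
    category = choose(ask, column, sorted(taxonomy))
    label = choose(ask, column, taxonomy[category])
    return category, label


def keyword_stub(column, options):
    """Stand-in for an LLM call: picks the first option sharing a word with the column name."""
    words = set(column["name"].upper().split("_"))
    for option in options:
        if words & set(option.split("_")):
            return option
    return options[0]
```

Because the second stage only sees labels from the chosen category, the model selects from a handful of options rather than the full label set, which is what limits out-of-taxonomy answers in this sketch.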

Model Versioning and Label Prediction

Instead of relying on a single “best” configuration, each model setup is treated as an expert that competes to label a column.

Multiple model versions run in parallel with variations in:

- Primary and fallback LLM choices.

- Use of generated comments vs. raw metadata.

- Prompting technique (static vs. dynamic few-shot).

- Label granularity and taxonomy subsets.

Each expert produces a label and a confidence score between 0 and 100. The system then selects the label from the expert with the highest confidence, a Mixture-of-Experts style approach that improves accuracy and reduces the impact of occasional bad predictions from any one configuration.
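The selection rule described above amounts to an argmax over expert confidences. The following sketch assumes a simple `ExpertPrediction` record (a hypothetical name) and discards scores outside the 0 to 100 range before choosing; how ties and malformed scores are handled in the real system is not stated, so those choices are assumptions.

```python
from dataclasses import dataclass


@dataclass
class ExpertPrediction:
    expert: str       # name of the model configuration (hypothetical field)
    label: str        # predicted taxonomy label
    confidence: int   # self-reported score, expected in [0, 100]


def select_label(predictions):
    """Return the prediction with the highest valid confidence, or None."""
    valid = [p for p in predictions if 0 <= p.confidence <= 100]
    if not valid:
        return None
    # max() keeps the first of several equally confident experts.
    return max(valid, key=lambda p: p.confidence)
```

For example, given experts reporting `EMAIL` at 88, `NON_SENSITIVE` at 40, and `EMAIL` at 93, the function returns the third prediction.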

This design makes it safe to experiment: new models or prompt strategies can be introduced, run alongside existing ones, and evaluated on both metrics and downstream ticket volume before becoming the default.

Ticket Creation

The pipeline continuously compares existing schema annotations with LLM predictions to surface meaningful deviations.

Typical cases include:

- New columns added without any annotations.

- Existing annotations that no longer match the column’s content.

- Columns containing sensitive values that have been labeled as eligible for cross-region movement.

When the system detects a violation, it creates a policy entry and files a JIRA ticket for the owning team with context about the table, column, proposed label, and confidence. This turns data classification issues into an ongoing workflow that teams can track and resolve in the same way they track other production incidents.
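The three violation cases above can be expressed as a small comparison routine. This is an illustrative sketch only: the record fields (`current_label`, `predicted_label`, `predicted_sensitive`, `current_residency`), the reason strings, and the ticket dictionary shape are assumptions, and no actual JIRA API call is made.

```python
def find_violations(col):
    """Compare a column's existing annotation with the predicted one."""
    reasons = []
    if col["current_label"] is None:
        reasons.append("missing_annotation")
    elif col["current_label"] != col["predicted_label"]:
        reasons.append("label_mismatch")
    # Sensitive data currently marked as movable across regions.
    if col["predicted_sensitive"] and col["current_residency"] == "cross_region":
        reasons.append("sensitive_cross_region")
    return reasons


def build_tickets(columns):
    """Produce one ticket payload per column that has at least one violation."""
    tickets = []
    for col in columns:
        reasons = find_violations(col)
        if not reasons:
            continue
        tickets.append({
            "summary": f"Data label review: {col['table']}.{col['column']}",
            "table": col["table"],
            "column": col["column"],
            "reasons": reasons,
            "proposed_label": col["predicted_label"],
            "confidence": col["confidence"],
        })
    return tickets
```

Each payload carries the context the text describes (table, column, proposed label, confidence) and could be handed to whatever ticketing client a team already uses.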

Impact and Evaluation

The system was evaluated on 2,258 labeled samples, of which 1,010 contained PII and 1,248 were non-PII. On this dataset, it reached up to 92% precision and 95% recall for PII detection.
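For readers less familiar with the two metrics, the sketch below shows how precision and recall for the PII class are computed from ground-truth and predicted flags. The function name and the boolean representation are assumptions for illustration; it does not reproduce the evaluation data or results reported above.

```python
def precision_recall(y_true, y_pred):
    """Precision and recall for the positive (PII) class.

    y_true and y_pred are equal-length sequences of booleans,
    where True means the column contains PII.
    """
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    tp = sum(1 for t, p in zip(y_true, y_pred) if t and p)
    fp = sum(1 for t, p in zip(y_true, y_pred) if not t and p)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t and not p)
    # Precision: of the columns flagged as PII, how many really are PII.
    precision = tp / (tp + fp) if tp + fp else 0.0
    # Recall: of the columns that really are PII, how many were flagged.
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall
```

In a PII setting, recall reflects how much sensitive data is missed, while precision reflects how much reviewer time is spent on false alarms.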

More importantly for stakeholders, the deployment produced the operational outcomes that were needed:

- Manual review effort dropped from weeks to hours for each large-scale audit cycle because reviewers start from high-quality suggested labels rather than raw schemas.

- Labeling drift is now detected continuously as schemas evolve, instead of being discovered during an annual review.

- Alerts about sensitive data mis-labeled as safe are more targeted, so security teams can act quickly instead of triaging noisy rule-based scanners.

- Masking and residency policies are enforced at scale using the same label taxonomy that powers analytics and reporting.

Precision and recall act as guardrails, but the system is tuned around outcomes such as review time, drift detection latency, and the number of actionable tickets produced per week.

Conclusion

By combining taxonomy-driven labeling and an MoE-style evaluation framework, we’ve enabled existing engineering and governance workflows at Databricks, with experiments and deployments managed using MLflow. The system keeps labels fresh as schemas change, makes compliance reviews faster and more focused, and provides the enforcement hooks needed to apply masking and residency rules consistently across the platform.

The most exciting part of this work is integrating our internal learnings directly into the Data Classification product. As we operationalize and validate these techniques within LogSentinel, we incorporate them directly into Databricks Data Classification.

The same pattern (ingest metadata and samples, augment context, orchestrate multiple LLMs, and feed predictions into policy and ticketing systems) can be reused wherever a reliable, evolving understanding of data is required. By incorporating these insights within our core product offering, we’re enabling every organization to leverage their data intelligence for compliance and governance with the same precision and scale we do at Databricks.

Acknowledgements

This project was made possible through collaboration among multiple engineering teams. Thanks to Anirudh Kondaveeti, Sittichai Jiampojamarn, Zefan Xu, Li Yang, Xiaohui Solar, Dibyendu Karmakar, Chenen Liang, Viswesh Periyasamy, Chengzu Ou, Evion Kim, Matthew Hayes, Benjamin Ebanks, and Sudeep Srivastava for their support and contributions.