Once we discuss constructing AI knowledge facilities, east-west GPU materials typically steal the highlight. However there’s one other site visitors path that’s simply as vital: north-south connectivity. In as we speak’s AI environments, how your knowledge middle ingests knowledge and delivers outcomes at scale could make or break your AI technique.

Why north-south site visitors now issues most for AI at scale

AI is now not a siloed undertaking tucked away in an remoted cluster. Enterprises are quickly evolving to ship AI as a shared service, pulling in huge volumes of knowledge from exterior sources and serving outcomes to customers, functions, and downstream programs. This AI-driven site visitors generates the bursty, high-bandwidth north-south flows that characterize fashionable AI environments:

- Ingesting and preprocessing enormous datasets from object shops, knowledge lakes, or streaming platforms

- Loading and checkpointing giant fashions from high-performance storage

- Querying vector databases and have shops to supply context for retrieval-augmented technology (RAG) and agentic workflows

- Serving real-time inference to hundreds of concurrent customers or microservices

AI workloads amplify conventional north-south challenges; typically they arrive in unpredictable bursts, can transfer terabytes in minutes, and are extremely delicate to latency and jitter. Any stall leaves costly GPUs idle and elongates job completion instances, drives up prices, and diminishes returns on AI investments.

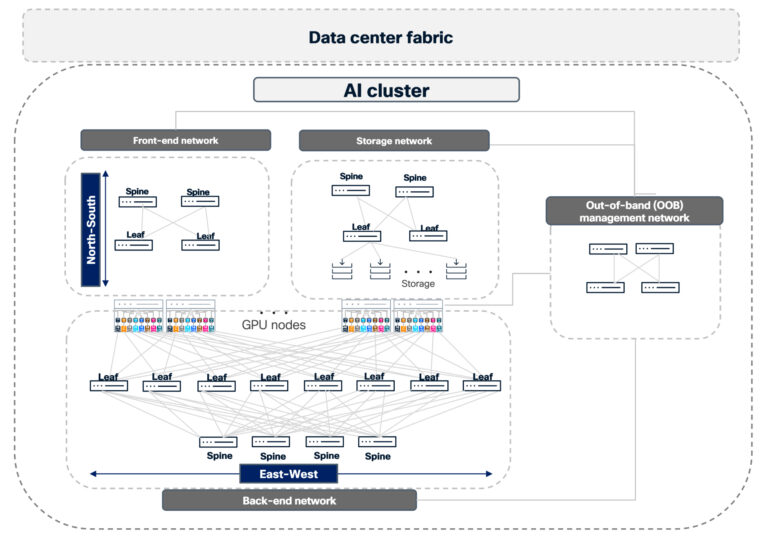

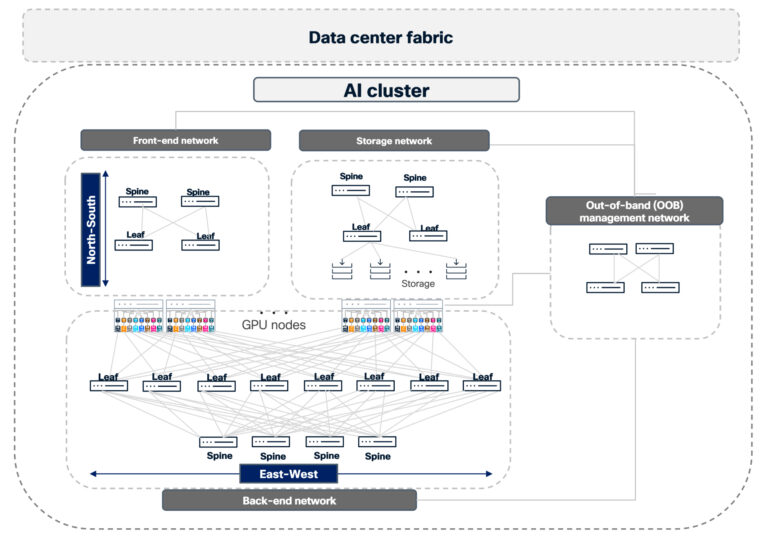

Understanding the AI cluster: a multi-network structure

It’s simple to think about an AI cluster as a single, monolithic community. In actuality, it’s a composition of a number of interconnected networks that should work collectively predictably:

- Entrance-end community connects customers, functions, and providers to the AI cluster.

- Storage community offers high-throughput storage entry.

- Again-end compute community carries GPU-to-GPU site visitors for computation.

- Out-of-band administration community for baseboard administration controller (BMC), host administration, and control-plane entry.

- Information middle material, together with border/edge, ties the cluster into the remainder of the atmosphere and the web.

Peak efficiency isn’t nearly bandwidth, it’s about how nicely your material handles congestion, failures, and operational complexity throughout all of those planes as AI demand grows.

How north-south connectivity impacts GPU effectivity

Fashionable AI depends on steady, real-time interactions between GPU clusters and the skin world. For instance:

- Fetching stay knowledge from exterior software programming interfaces (APIs) or enterprise sources and associate programs

- Excessive-speed loading of coaching units and mannequin checkpoints from converged storage materials

- Performing dynamic contextual lookups from vector databases and search indices for RAG and agent-based workflows

- Serving high-QPS inference for user-facing functions and inside providers

These patterns generate:

- Bursty, unpredictable masses: Batch/distributed inference jobs can all of the sudden eat vital bandwidth, stressing uplinks and core hyperlinks.

- Tight latency and jitter budgets: Even short-lived congestion or microbursts could cause head-of-line blocking and decelerate GPU pipelines.

- Danger of static sizzling spots: Conventional static equal-cost multi-path (ECMP) hashing can’t adapt to altering hyperlink utilization, resulting in congested paths and underutilized capability elsewhere.

To maintain your GPUs absolutely utilized, your north-south community have to be congestion-aware, resilient, and straightforward to function at scale.

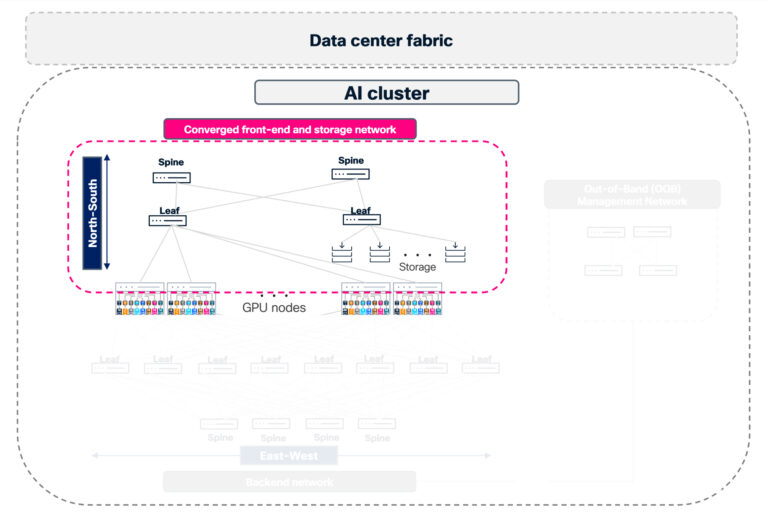

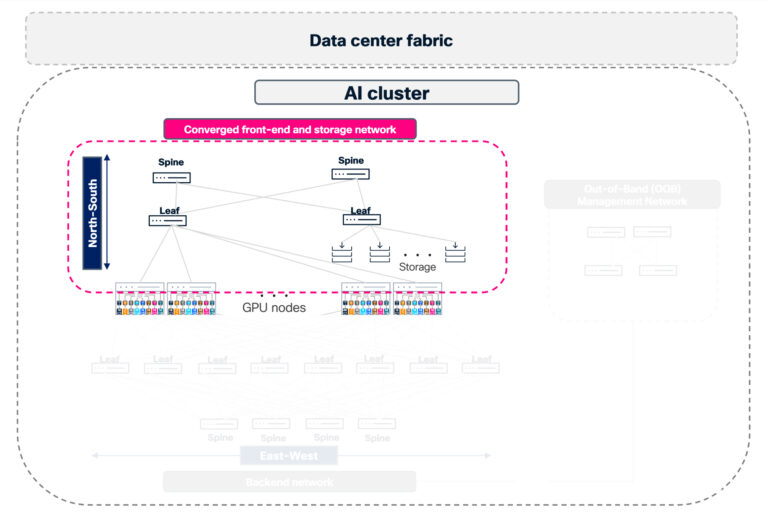

Simplifying AI infrastructure with converged front-end and storage networks

Many main AI deployments are converging front-end and storage site visitors onto a unified, high-performance Ethernet material distinct from the east-west compute community. This architectural method is pushed by each efficiency necessities and operational effectivity—permitting clients to reuse optics and cabling whereas leveraging current Clos material investments, considerably decreasing value and cabling complexity.

This converged north-south material:

- Delivers high-performance storage entry over 400G/800G leaf-spine architectures

- Carries host administration and control-plane site visitors from administration nodes to compute and storage nodes

- Connects to frame leaf or core switches for exterior connectivity and tenant ingress/egress

Cisco N9000 switches working Cisco NX-OS are purpose-built for these unified materials, delivering each the size and throughput required by fashionable AI front-end and storage networks. By combining predictable, heavy storage site visitors with lighter, latency-sensitive front-end software flows, you possibly can maximize your material’s effectivity when it’s correctly engineered.

Optimizing AI site visitors with Cisco Silicon One and Cisco NX-OS

Managing north-south AI site visitors isn’t nearly merging inference, storage, and coaching workloads on one community however will also be about addressing the challenges of converging storage networks related to completely different endpoints. It’s about optimizing for every site visitors kind to reduce latency and keep away from efficiency dips throughout congestion.

In fashionable AI infrastructure, completely different workloads demand completely different therapy:

- Inference site visitors requires low, predictable latency.

- Coaching site visitors wants most throughput.

- Storage site visitors can have completely different patterns between high-performance storage, normal storage, and shared storage.

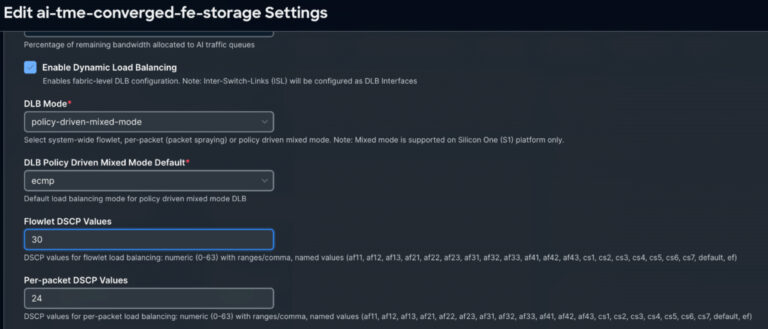

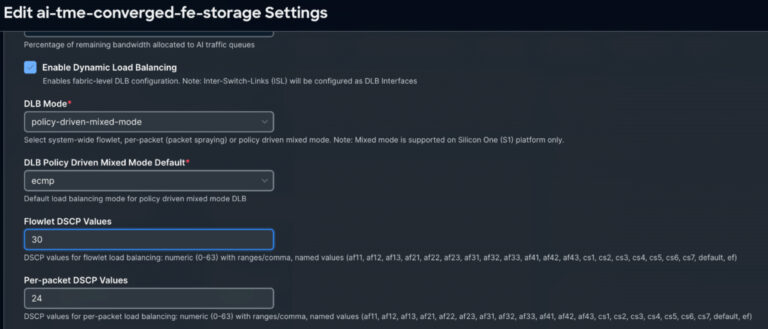

Whereas the back-end material primarily handles lossless distant direct reminiscence entry (RDMA) site visitors, the converged front-end and storage material carries a mixture of site visitors varieties. Within the absence of high quality of service (QoS) and efficient load-balancing mechanisms, sudden bursts of administration or person knowledge can result in packet loss, which is catastrophic for the strict lossless ROCEv2 necessities. That’s why Cisco Silicon One and Cisco NX-OS work in tandem, delivering dynamic load balancing (DLB) that operates in each flowlet and per-packet modes, all orchestrated by way of subtle coverage management.

Our method makes use of Cisco Silicon One application-specific built-in circuits (ASICs) paired with Cisco NX-OS intelligence to supply policy-driven, traffic-aware load balancing that adapts in actual time. This contains the next:

- Per-packet DLB: When endpoints (resembling SuperNICs) can deal with out-of-order supply, per-packet mode distributes particular person packets throughout all accessible hyperlinks in a DLB ECMP group. This maximizes hyperlink utilization and immediately relieves congestion sizzling spots—vital for bursty AI workloads.

- Flowlet-based DLB: For site visitors requiring in-order supply, flowlet-based DLB splits site visitors at pure burst boundaries. Utilizing real-time congestion and delay metrics measured by Cisco Silicon One, the system intelligently steers every burst to the least-utilized ECMP path—sustaining stream integrity whereas optimizing community sources.

- Coverage-driven preferential therapy: High quality of service (QoS) insurance policies override default habits utilizing match standards resembling differentiated providers code level (DSCP) markings or entry management lists (ACLs). This permits selective per-packet load balancing for particular high-priority or congestion-sensitive flows, guaranteeing every site visitors kind receives optimum dealing with.

- Coexistence with conventional ECMP: DLB site visitors leverages dynamic, telemetry-driven choice whereas non-DLB flows proceed utilizing conventional ECMP. This permits incremental adoption and focused optimization with out requiring a forklift improve of your total infrastructure.

This simultaneous mixed-mode method is especially helpful for north-south flows resembling storage, checkpointing, and database entry, the place congestion consciousness and even utilization straight translate into higher GPU effectivity.

Scaling AI operations utilizing Cisco Nexus One with Nexus Dashboard

Cisco Nexus One is a unified resolution that delivers community intelligence from silicon to software program—operationalized by way of Cisco Nexus Dashboard on-premises and cloud-managed Cisco Hyperfabric. It offers the intelligence required to function trusted, future-ready materials at scale with assured efficiency.

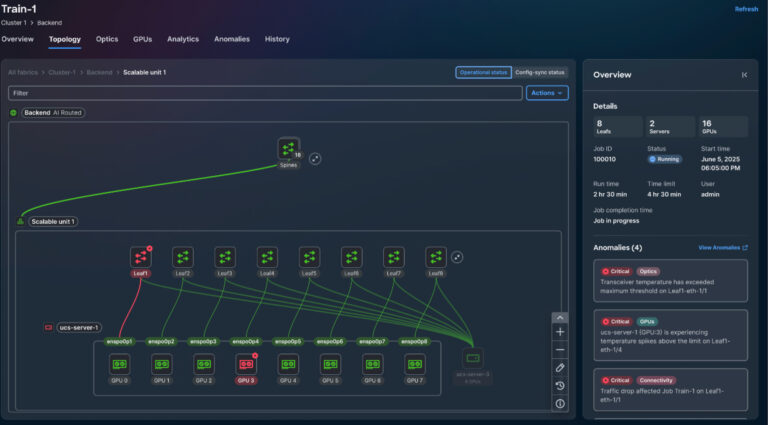

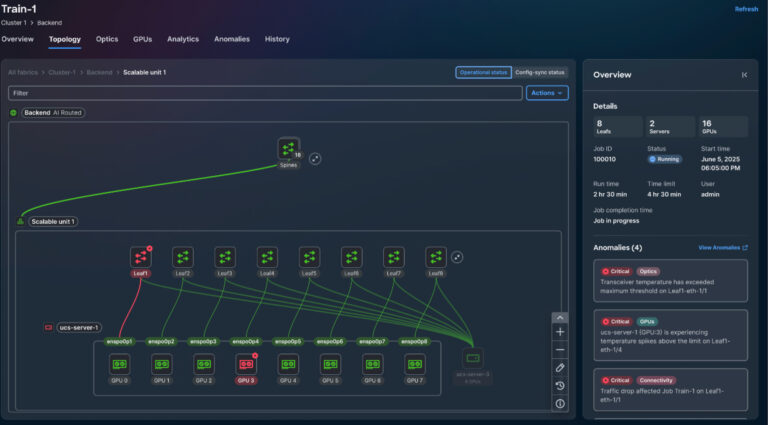

As AI clusters and community materials develop, operational simplicity turns into mission vital. With Cisco Nexus Dashboard, you acquire a unified operational layer for seamless provisioning, monitoring, and troubleshooting throughout your total multi-fabric atmosphere.

In an AI knowledge middle, this allows a unified expertise, simplified automation, and AI job observability. Utilizing Cisco Nexus Dashboard, operators can handle configurations and insurance policies for AI clusters and different materials from a single management level, considerably decreasing deployment and change-management overhead.

Nexus Dashboard simplifies automation by offering templates and policy-driven workflows to roll out best-practice express congestion notification (ECN), precedence stream management (PFC), and load-balancing configurations throughout materials, considerably decreasing handbook effort.

Utilizing Cisco Nexus Dashboard, you acquire end-to-end visibility into AI workloads throughout the total stack, enabling real-time monitoring of networks, NICs, GPUs, and distributed compute nodes.

Accelerating AI deployment with Cisco Validated Designs

Cisco Validated Designs (CVDs) and Cisco reference architectures present prescriptive, confirmed blueprints for constructing converged north-south materials which can be AI-ready, eradicating guesswork and rushing deployment.

North–south connectivity in enterprise AI—key takeaways:

- North-south efficiency is now on the vital path for enterprise AI; ignoring it may negate investments in high-end GPUs.

- Converged front-end and storage materials constructed on high-density 400G/800G-capable Cisco N9000 switches present scalable, environment friendly entry to knowledge and providers.

- Cisco NX-OS policy-based load balancing mixed-mode is a robust functionality for dealing with unpredictable site visitors in an AI cluster whereas preserving efficiency.

- Cisco Nexus Dashboard centralizes operations, visibility, and diagnostics throughout materials, which is important when many AI workloads share the identical infrastructure.

- Cisco Nexus One simplifies AI community operations from silicon to working mannequin; allows scalable knowledge middle materials; and delivers job-aware, network-to-GPU visibility for seamless telemetry correlation throughout networks.

- Cisco Validated architectures and reference designs provide confirmed patterns for safe, automated, and high-throughput north-south connectivity tailor-made to AI clusters.

Future-proofing your AI technique with a resilient community basis

On this new paradigm, north-south networks are making a comeback, rising because the decisive consider your AI journey. Profitable with AI isn’t nearly deploying the quickest GPUs; it’s about constructing a north-south community that may maintain tempo with fashionable enterprise calls for. With Cisco Silicon One, NX-OS, and Nexus Dashboard, you acquire a resilient, clever, and high-throughput basis that connects your knowledge to customers and functions on the pace your group requires, unlocking the total energy of your AI investments.