Data preprocessing removes errors, fills in missing data, and standardizes information so that algorithms can find real patterns instead of being confused by noise or inconsistencies.

Any algorithm needs properly cleaned data, organized in a structured format, before it can learn from that data. Preprocessing is therefore a fundamental step in the machine learning process: it helps models stay accurate, efficient, and dependable.

The quality of preprocessing determines whether a basic data collection turns into meaningful insights and reliable results. This article walks you through the key steps of data preprocessing for machine learning, from cleaning and transforming data to real-world tools, challenges, and tips to improve model performance.

Understanding Raw Data

Raw data is the starting point for any machine learning project, and understanding its nature is fundamental.

Working with raw data can be messy. It often comes with noise: irrelevant or misleading entries that can skew results.

Missing values are another problem, especially when sensors fail or inputs are skipped. Inconsistent formats also show up often: date fields may use different styles, or categorical data might be entered in different ways (e.g., “Yes,” “Y,” “1”).

Recognizing and addressing these issues is essential before feeding the data into any machine learning algorithm. Clean input leads to smarter output.
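To make the format problem concrete, here is a minimal sketch of mapping several spellings of the same answer, and several date styles, onto one canonical form. The column names, the sample records, and the list of accepted date formats are all assumptions made for illustration; a real dataset would need its own mapping.

```python
from datetime import datetime

import pandas as pd

# Hypothetical raw records that encode the same facts in different ways.
raw = pd.DataFrame({
    "subscribed": ["Yes", "Y", "1", "no", "N", None],
    "signup_date": ["2024-01-15", "15/01/2024", "15.01.2024", "2024-02-01", None, "01/03/2024"],
})

# Every known spelling maps to one canonical boolean; unknown spellings become missing.
YES_NO = {"yes": True, "y": True, "1": True, "no": False, "n": False, "0": False}

def to_bool(value):
    if pd.isna(value):
        return None
    return YES_NO.get(str(value).strip().lower())

# Assumed date formats, tried in order. Treating slash dates as day-first is an assumption.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y")

def to_date(value):
    if pd.isna(value):
        return None
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value, fmt).date()
        except ValueError:
            continue
    return None  # unparseable entries stay missing rather than being guessed

clean = pd.DataFrame({
    "subscribed": raw["subscribed"].map(to_bool),
    "signup_date": raw["signup_date"].map(to_date),
})
print(clean)
```

Leaving unknown values missing, instead of guessing, keeps the ambiguity visible for the cleaning step that follows.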

Data Preprocessing in Data Mining vs Machine Learning

While both data mining and machine learning rely on preprocessing to prepare data for analysis, their goals and processes differ.

In data mining, preprocessing focuses on making large, unstructured datasets usable for pattern discovery and summarization. This includes cleaning, integrating, transforming, and formatting data for querying, clustering, or association rule mining, tasks that don’t always require model training.

Unlike machine learning, where preprocessing often centers on improving model accuracy and reducing overfitting, data mining aims for interpretability and descriptive insights. Feature engineering is less about prediction and more about finding meaningful trends.

Additionally, data mining workflows may include discretization and binning more frequently, particularly for categorizing continuous variables. While ML preprocessing may stop once the training dataset is prepared, data mining may loop back into iterative exploration.

Thus, the preprocessing goal (insight extraction versus predictive performance) sets the tone for how the data is shaped in each field.

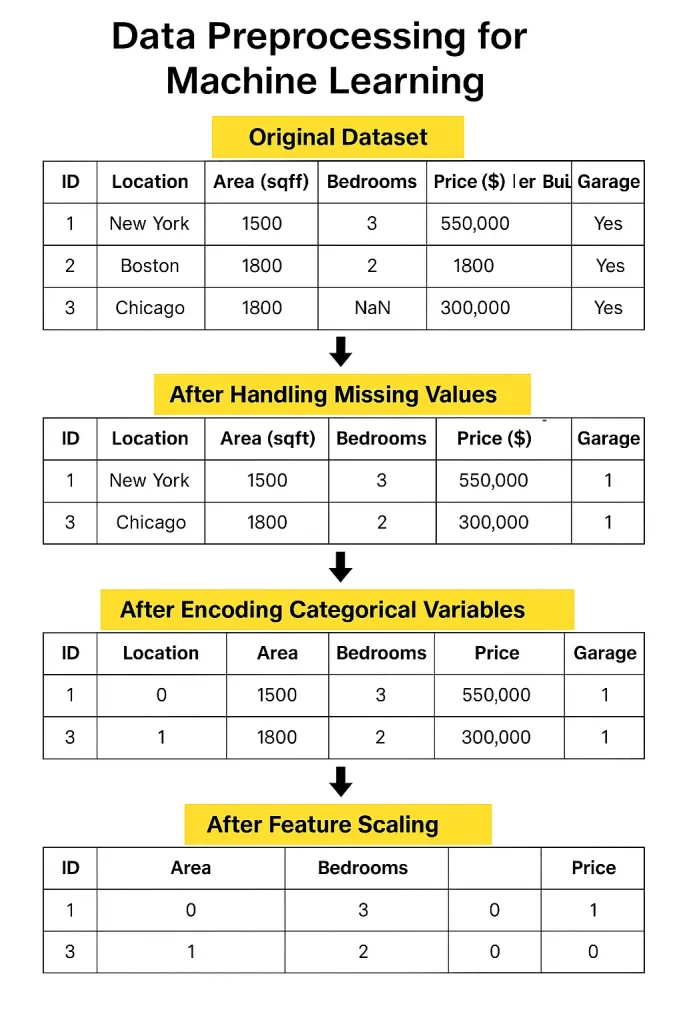

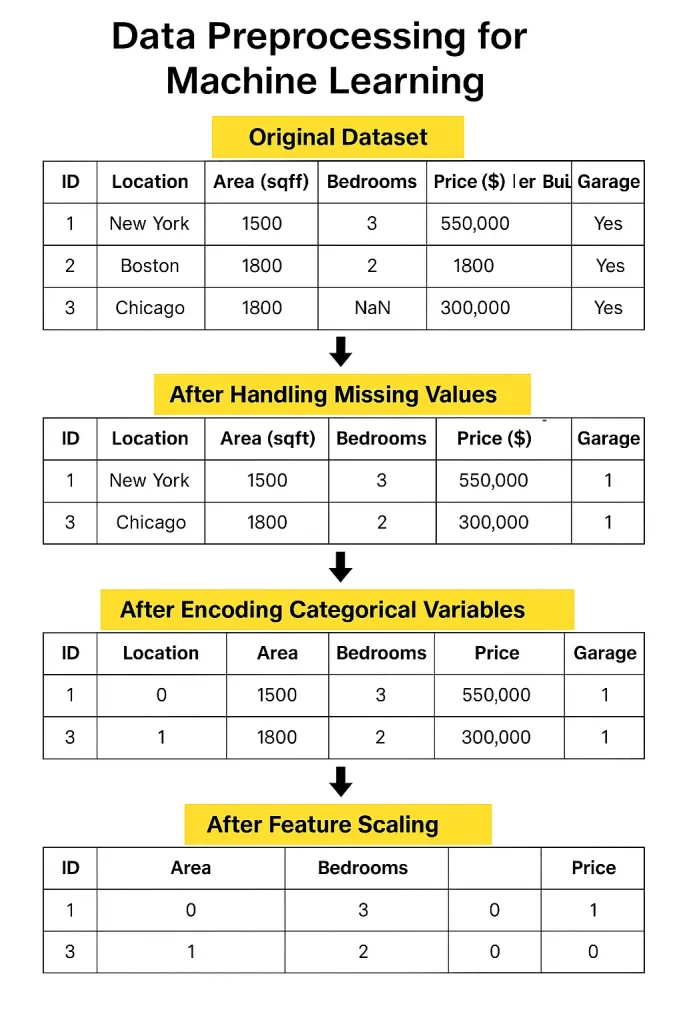

Core Steps in Data Preprocessing

1. Data Cleaning

Real-world data often comes with missing values: blanks in your spreadsheet that need to be filled or carefully removed.

Then there are duplicates, which can unfairly weight your results. And don’t forget outliers, extreme values that can pull your model in the wrong direction if left unchecked.

These can throw off your model, so you may need to cap, transform, or exclude them.
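The three cleaning operations can be sketched with pandas as follows. The toy data and column names are hypothetical, and the choices made here (median imputation, capping at 1.5 times the interquartile range) are common conventions rather than the only reasonable options.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data: one duplicate row, one missing value, one extreme outlier.
df = pd.DataFrame({
    "customer_id": [1, 2, 2, 3, 4, 5],
    "monthly_spend": [42.0, 55.0, 55.0, np.nan, 48.0, 5000.0],
})

# 1. Drop exact duplicate rows so no record is counted twice.
df = df.drop_duplicates().reset_index(drop=True)

# 2. Fill missing values with the median, which is less sensitive to outliers than the mean.
median_spend = df["monthly_spend"].median()
df["monthly_spend"] = df["monthly_spend"].fillna(median_spend)

# 3. Cap outliers at the IQR fences instead of deleting the rows.
q1, q3 = df["monthly_spend"].quantile([0.25, 0.75])
iqr = q3 - q1
df["monthly_spend"] = df["monthly_spend"].clip(lower=q1 - 1.5 * iqr, upper=q3 + 1.5 * iqr)

print(df)
```

Capping keeps the row (and its other columns) in the dataset; dropping the row or applying a log transform are alternatives when the extreme value is clearly an error or the distribution is heavily skewed.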

2. Data Transformation

Once the data is cleaned, you need to format it. If your numbers vary wildly in range, normalization or standardization helps scale them consistently.

Categorical data, like country names or product types, needs to be converted into numbers through encoding.

And for some datasets, it helps to group similar values into bins to reduce noise and highlight patterns.
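As a rough illustration of these transformations, the sketch below applies standardization, min-max normalization, one-hot encoding, and binning to a small made-up table. The column names and bin edges are assumptions, and for brevity the scalers are fit on the whole table; in a real project they would be fit on the training split only.

```python
import pandas as pd
from sklearn.preprocessing import MinMaxScaler, StandardScaler

# Hypothetical data with numeric columns on very different scales.
df = pd.DataFrame({
    "age": [22, 35, 47, 58, 63],
    "income": [28_000, 52_000, 61_000, 90_000, 120_000],
    "country": ["IN", "US", "IN", "DE", "US"],
})
numeric_cols = ["age", "income"]

# Standardization: each column ends up with zero mean and unit variance.
standardized = StandardScaler().fit_transform(df[numeric_cols])

# Normalization (min-max): each column is rescaled to the [0, 1] range.
normalized = MinMaxScaler().fit_transform(df[numeric_cols])

# One-hot encoding: one indicator column per category.
encoded = pd.get_dummies(df["country"], prefix="country")

# Binning: group ages into labelled ranges (the cut points are illustrative).
age_group = pd.cut(df["age"], bins=[0, 30, 50, 120], labels=["young", "middle", "senior"])

print(encoded.join(age_group.rename("age_group")))
```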

3. Data Integration

Often, your data will come from different places: files, databases, or online tools. Merging it all can be tricky, especially if the same piece of information looks different in each source.

Schema conflicts, where the same column has different names or formats, are common and need careful resolution.
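A small sketch of resolving one such schema conflict with pandas before merging. Both source tables and their column names are invented for the example: the same customer key appears under two names and two data types.

```python
import pandas as pd

# Two hypothetical sources describing the same customers with different schemas.
crm = pd.DataFrame({
    "CustomerID": [101, 102, 103],
    "signup": ["2024-01-05", "2024-02-10", "2024-03-15"],
})
billing = pd.DataFrame({
    "cust_id": ["101", "102", "104"],  # same key, but stored as text
    "amount_usd": [20.0, 35.5, 12.0],
})

# Resolve the conflict: one column name and one dtype for the join key.
crm = crm.rename(columns={"CustomerID": "customer_id"})
billing = billing.rename(columns={"cust_id": "customer_id"})
billing["customer_id"] = billing["customer_id"].astype(int)

# An outer join with indicator=True shows which rows matched and which did not.
merged = crm.merge(billing, on="customer_id", how="outer", indicator=True)
print(merged)
```

The `_merge` column added by `indicator=True` makes unmatched records easy to spot, which is usually the first thing to inspect after combining sources.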

4. Data Reduction

Big data can overwhelm models and increase processing time. Selecting only the most useful features, reducing dimensions with techniques like PCA, or sampling the data makes your model faster and often more accurate.
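For dimensionality reduction, a minimal PCA sketch with scikit-learn might look like this. The data is synthetic (ten features generated from three hidden factors), and the 95% variance threshold is an assumed target, not a universal rule.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic data: 200 rows, 10 features that are noisy mixtures of 3 hidden factors.
factors = rng.normal(size=(200, 3))
mixing = rng.normal(size=(3, 10))
X = factors @ mixing + 0.05 * rng.normal(size=(200, 10))

# PCA is sensitive to feature scale, so standardize first.
X_scaled = StandardScaler().fit_transform(X)

# A float n_components keeps as many components as needed to explain that share of variance.
pca = PCA(n_components=0.95)
X_reduced = pca.fit_transform(X_scaled)

print(X.shape, "->", X_reduced.shape)
print("variance explained:", round(float(pca.explained_variance_ratio_.sum()), 3))
```

Because the ten columns here are driven by only three underlying factors, a handful of components is enough; real data rarely compresses this cleanly, so the explained-variance figure is worth checking each time.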

Tools and Libraries for Preprocessing

- Scikit-learn is excellent for most basic preprocessing tasks. It has built-in functions to fill missing values, scale features, encode categories, and select important features. It’s a solid, beginner-friendly library with everything you need to start.

- Pandas is another essential library. It’s extremely helpful for exploring and manipulating data.

- TensorFlow Data Validation can be helpful if you’re working on large-scale projects. It checks for data issues and ensures your input follows the correct structure, something that’s easy to overlook.

- DVC (Data Version Control) is great when your project grows. It keeps track of the different versions of your data and preprocessing steps so you don’t lose your work or mess things up during collaboration.

Common Challenges

One of the biggest challenges today is managing large-scale data. When you have millions of rows arriving from different sources every day, organizing and cleaning them all becomes a serious task.

Another significant issue is automating preprocessing pipelines. In theory, it sounds great: just set up a flow to clean and prepare your data automatically.

But in reality, datasets vary, and rules that work for one might break down for another. You still need a human eye to check edge cases and make judgment calls. Automation helps, but it’s not always plug-and-play.

Even if you start with clean data, things change: formats shift, sources update, and errors sneak in. Without regular checks, your once-perfect data can slowly fall apart, leading to unreliable insights and poor model performance.

Tackling these challenges requires good tools, solid planning, and constant monitoring.
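One lightweight form of regular checking is a validation function that runs on every new batch before it reaches the pipeline. The sketch below is hand-rolled with pandas; the expected columns, allowed categories, and null-rate threshold are all hypothetical and would come from your own data contract.

```python
import pandas as pd

# Hypothetical expectations for an incoming batch.
REQUIRED_COLUMNS = {"customer_id", "plan", "monthly_spend"}
NUMERIC_COLUMNS = {"monthly_spend"}
ALLOWED_PLANS = {"basic", "premium"}
MAX_NULL_RATE = 0.10

def validate(df: pd.DataFrame) -> list[str]:
    """Return a list of human-readable problems; an empty list means the batch passed."""
    problems = []
    missing = REQUIRED_COLUMNS - set(df.columns)
    if missing:
        problems.append(f"missing columns: {sorted(missing)}")
    for column in sorted(NUMERIC_COLUMNS & set(df.columns)):
        if not pd.api.types.is_numeric_dtype(df[column]):
            problems.append(f"{column} is not numeric")
    for column in df.columns:
        null_rate = df[column].isna().mean()
        if null_rate > MAX_NULL_RATE:
            problems.append(f"{column} null rate {null_rate:.0%} exceeds {MAX_NULL_RATE:.0%}")
    if "plan" in df.columns:
        unexpected = set(df["plan"].dropna()) - ALLOWED_PLANS
        if unexpected:
            problems.append(f"unexpected plan values: {sorted(unexpected)}")
    return problems

good = pd.DataFrame({"customer_id": [1, 2], "plan": ["basic", "premium"], "monthly_spend": [20.0, 45.0]})
drifted = pd.DataFrame({"customer_id": [3, 4], "plan": ["basic", "Premium+"], "monthly_spend": ["20", None]})

print(validate(good))
print(validate(drifted))
```

Returning a list of problems, instead of raising on the first one, lets a human reviewer see everything that changed in a batch at once.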

Best Practices

Here are a few best practices that can make a big difference to your model’s success. Let’s break them down and examine how they play out in real-world situations.

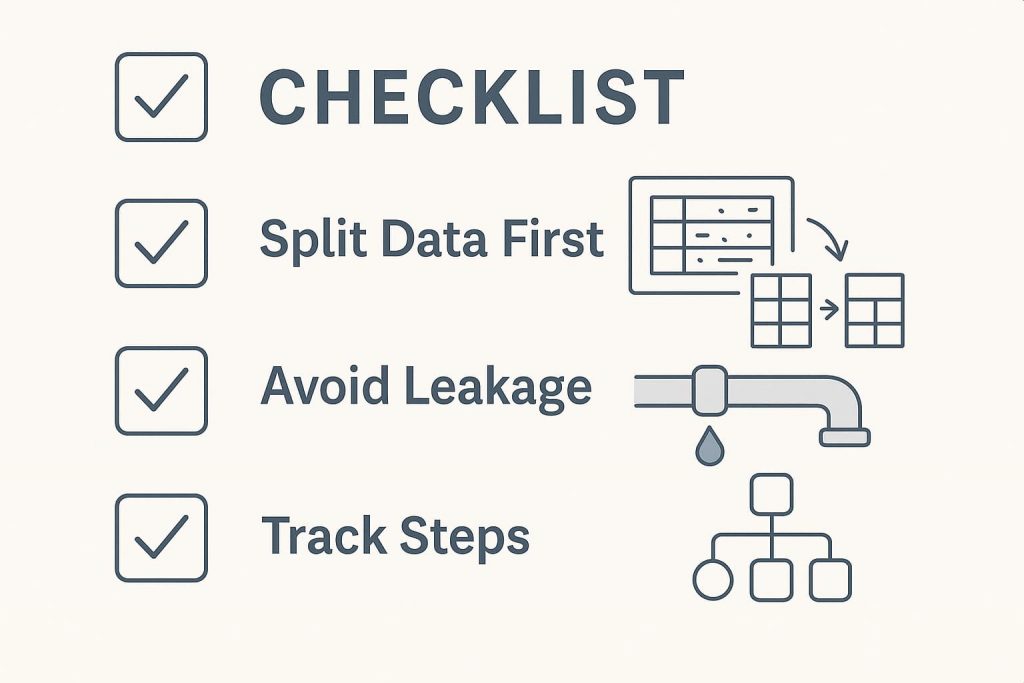

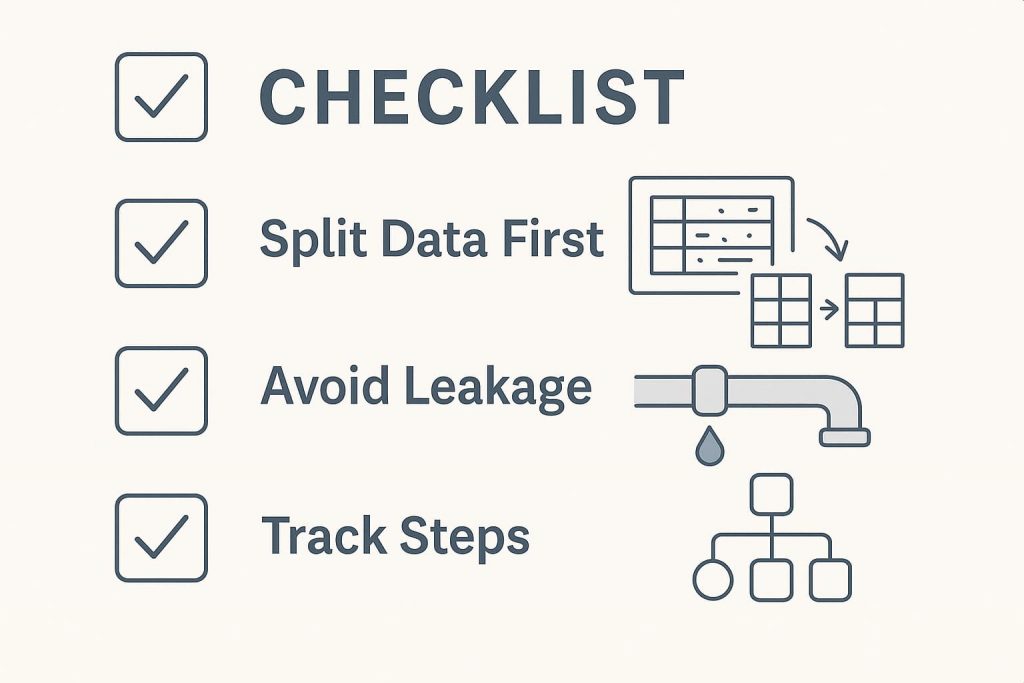

1. Start With a Proper Data Split

A mistake many beginners make is doing all the preprocessing on the full dataset before splitting it into training and test sets. But this approach can accidentally introduce bias.

For example, if you scale or normalize the entire dataset before the split, information from the test set can bleed into the training process, which is called data leakage.

Always split your data first, then fit your preprocessing on the training set only. Later, transform the test set using the same parameters (like the mean and standard deviation). This keeps things fair and ensures your evaluation is honest.
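In scikit-learn terms, the split-then-fit order looks roughly like this. The features and labels are synthetic, and the 80/20 split ratio is an assumption.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)
X = rng.normal(loc=50, scale=10, size=(100, 3))  # synthetic features
y = rng.integers(0, 2, size=100)                 # synthetic binary labels

# 1. Split first, before any statistics are computed.
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# 2. Learn the mean and standard deviation from the training set only.
scaler = StandardScaler().fit(X_train)

# 3. Apply those same parameters to both sets.
X_train_scaled = scaler.transform(X_train)
X_test_scaled = scaler.transform(X_test)

print("training means used for scaling:", scaler.mean_)
```

The scaled test set will not have exactly zero mean, and that is expected: it is being measured against the training distribution, just as unseen production data would be.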

2. Avoid Data Leakage

Data leakage is sneaky and one of the fastest ways to ruin a machine learning model. It happens when the model learns something it wouldn’t have access to in a real-world situation, which amounts to cheating.

Common causes include using target labels in feature engineering or letting future data influence current predictions. The key is to always think about what information your model would realistically have at prediction time and keep it limited to that.
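One way to make preprocessing leakage harder to introduce by accident, assuming scikit-learn, is to put the preprocessing inside a pipeline so that cross-validation refits it on each fold's training portion. The dataset below is synthetic and the choice of logistic regression is arbitrary.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic classification data, used only to make the example runnable.
X, y = make_classification(n_samples=300, n_features=8, random_state=0)

# The scaler is a pipeline step, so in every fold it is fit on the
# training portion only and then applied to the held-out portion.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
scores = cross_val_score(model, X, y, cv=5)

print("fold accuracies:", scores.round(3))
```

This guards against leakage through preprocessing statistics. It does not protect against leaky features, such as a column derived from the target or from future events; those still have to be caught by reasoning about what is known at prediction time.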

3. Track Every Step

As you move through your preprocessing pipeline (handling missing values, encoding variables, scaling features), keeping track of your actions is essential, not just for your own memory but also for reproducibility.

Documenting every step ensures others (or future you) can retrace your path. Tools like DVC (Data Version Control) or a simple Jupyter notebook with clear annotations can make this easier. This kind of tracking also helps when your model performs unexpectedly: you can go back and figure out what went wrong.
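If a dedicated tool feels like too much for a small project, even a hand-rolled step log helps. The sketch below is one hypothetical way to do it: the `tracked` and `fingerprint` helpers are invented for this example and are not part of DVC or any other library.

```python
import hashlib
import json

import pandas as pd

def fingerprint(df: pd.DataFrame) -> str:
    """Short content hash of a frame, useful for spotting silent data changes."""
    row_hashes = pd.util.hash_pandas_object(df, index=True).values
    return hashlib.sha256(row_hashes.tobytes()).hexdigest()[:12]

log = []  # one entry per preprocessing step, in execution order

def tracked(name, func, df, **params):
    """Run one preprocessing step and record what it did."""
    result = func(df, **params)
    log.append({
        "step": name,
        "params": params,
        "rows_in": len(df),
        "rows_out": len(result),
        "fingerprint": fingerprint(result),
    })
    return result

df = pd.DataFrame({"x": [1.0, None, 3.0, 3.0]})
df = tracked("drop_duplicates", lambda d: d.drop_duplicates(), df)
df = tracked("fill_missing", lambda d, value: d.fillna(value), df, value=0.0)

print(json.dumps(log, indent=2))
```

Saving the resulting JSON next to the trained model gives a minimal audit trail: which steps ran, with which parameters, and how many rows each one kept.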

Real-World Examples

To see how much of a difference preprocessing makes, consider a case study involving customer churn prediction at a telecom company. Initially, their raw dataset included missing values, inconsistent formats, and redundant features. The first model trained on this messy data barely reached 65% accuracy.

After applying proper preprocessing (imputing missing values, encoding categorical variables, normalizing numerical features, and removing irrelevant columns), the accuracy shot up to over 80%. The transformation wasn’t in the algorithm but in the data quality.

Another great example comes from healthcare. A team working on predicting heart disease used a public dataset that included mixed data types and missing fields.

They binned ages into groups, handled outliers using RobustScaler, and one-hot encoded several categorical variables. After preprocessing, the model’s accuracy improved from 72% to 87%, showing that how you prepare your data often matters more than which algorithm you choose.

In short, preprocessing is the foundation of any machine learning project. Follow best practices, keep things transparent, and don’t underestimate its impact. When done right, it can take your model from average to exceptional.

Frequently Asked Questions (FAQs)

1. Is preprocessing different for deep learning?

Yes, but only slightly. Deep learning still needs clean data, just fewer manual features.

2. How much preprocessing is too much?

If it removes meaningful patterns or hurts model accuracy, you’ve likely overdone it.

3. Can preprocessing be skipped with enough data?

No. More data helps, but poor-quality input still leads to poor results.

4. Do all models need the same preprocessing?

No. Each algorithm has different sensitivities. What works for one may not suit another.

5. Is normalization always necessary?

Often, but not always. It matters most for distance-based algorithms like KNN or SVMs, while tree-based models are largely insensitive to feature scale.

6. Can you automate preprocessing fully?

Not entirely. Tools help, but human judgment is still needed for context and validation.

7. Why track preprocessing steps?

It ensures reproducibility and helps identify what’s improving or hurting performance.

Conclusion

Data preprocessing isn’t just a preliminary step; it’s the bedrock of good machine learning. Clean, consistent data leads to models that are not only accurate but also trustworthy. From removing duplicates to choosing the right encoding, each step matters. Skipping or mishandling preprocessing often leads to noisy results or misleading insights.

And as data challenges evolve, a solid grasp of theory and tools becomes even more valuable. Many hands-on learning paths today, like those found in comprehensive data science

If you’re looking to build strong, real-world data science skills, including hands-on experience with preprocessing techniques, consider exploring the Master Data Science & Machine Learning in Python program by Great Learning. It’s designed to bridge the gap between theory and practice, helping you apply these concepts confidently in real projects.