Breaking Bad… Data Silos

We haven’t quite figured out how to avoid using relational databases. Folks have definitely tried, and while Apache Kafka® has become the standard for event-driven architectures, it still struggles to replace your everyday PostgreSQL database instance in the modern application stack. Regardless of what the future holds for databases, we need to solve data silo problems. To do this, Rockset has partnered with Confluent, the original creators of Kafka, who provide the cloud-native data streaming platform Confluent Cloud. Together, we’ve built a solution with fully managed services that unlocks relational database silos and provides a real-time analytics environment for the modern data application.

My first practical exposure to databases was in a college course taught by Professor Karen Davis, now a professor at Miami University in Oxford, Ohio. Our senior project, based on the LAMP stack (Perl in our case) and sponsored with an NSF grant, put me on a path that unsurprisingly led me to where I am today. Since then, databases have been a major part of my professional life and of modern, everyday life for most folks.

In the interest of full disclosure, it’s worth mentioning that I’m a former Confluent employee, now working at Rockset. At Confluent I talked often about the fanciful-sounding “Stream and Table Duality”. It’s an idea that describes how a table can generate a stream and a stream can be transformed into a table. The relationship is described in this order, with tables first, because that is how most folks query their data. However, even within the database itself, everything starts as an event in a log. Often this takes the form of a transaction log or journal, but regardless of the implementation, most databases internally store a stream of events and transform them into a table.
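To make the duality concrete, here is a minimal Python sketch. It is not how any particular database implements its log; the event shape `(operation, key, row)` and the function names `materialize` and `changelog` are assumptions made purely for illustration. Folding a stream of events yields a table, and diffing two table states yields a stream again.

```python
from typing import Any, Dict, Iterable, List, Optional, Tuple

# Hypothetical event shape: (operation, primary key, row or None for deletes).
Event = Tuple[str, int, Optional[Dict[str, Any]]]
Table = Dict[int, Dict[str, Any]]


def materialize(log: Iterable[Event]) -> Table:
    """Stream -> table: replay the log in order, keeping the latest row per key."""
    table: Table = {}
    for op, key, row in log:
        if op == "delete":
            table.pop(key, None)
        else:  # treat every other operation as an upsert
            table[key] = dict(row or {})
    return table


def changelog(before: Table, after: Table) -> List[Event]:
    """Table -> stream: emit the events that turn `before` into `after`."""
    events: List[Event] = []
    for key, row in after.items():
        if before.get(key) != row:
            events.append(("upsert", key, row))
    for key in before:
        if key not in after:
            events.append(("delete", key, None))
    return events


log: List[Event] = [
    ("upsert", 1, {"name": "a"}),
    ("upsert", 2, {"name": "b"}),
    ("upsert", 1, {"name": "a2"}),
    ("delete", 2, None),
]
table = materialize(log)
print(table)  # {1: {'name': 'a2'}}
```

Replaying `changelog({}, table)` through `materialize` reproduces the same table, which is the round trip the duality describes.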

If your company only has one database, you can probably stop reading now; data silos are not your problem. For everyone else, it’s important to be able to get data from one database to another. The products and tools to accomplish this task make up an almost $12 billion market, and they essentially all do the same thing in different ways. The concept of Change Data Capture (CDC) has been around for a while, but specific solutions have taken many shapes. The most recent of these, and potentially the most interesting, is real-time CDC enabled by the same internal database logging systems used to build tables. Everything else, including query-based CDC, file diffs, and full table overwrites, is suboptimal in terms of data freshness and local database impact. This is why Oracle acquired the very popular GoldenGate software company in 2009, and the core product is still used today for real-time CDC on a variety of source systems. To be a real-time CDC flow, we must be event driven; anything less is batch and changes our solution capabilities.

Real-Time CDC Is The Way

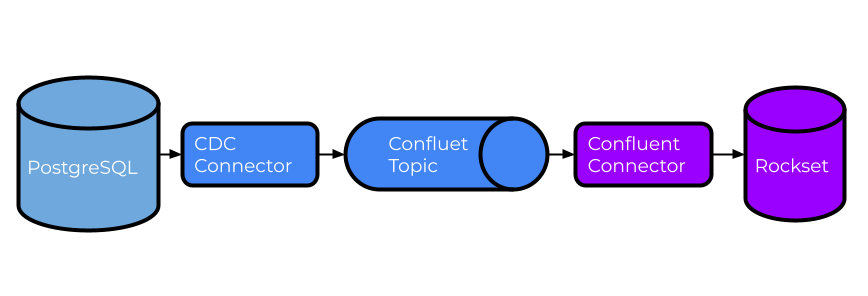

Hopefully now you’re curious how Rockset and Confluent help you break down data silos using real-time CDC. As you’d expect, it starts with your database of choice, preferably one that supports a transaction log that can be used to generate real-time CDC events. PostgreSQL, MySQL, SQL Server, and even Oracle are popular choices, but there are many others that will work fine. For our tutorial we’ll focus on PostgreSQL, but the concepts will be similar regardless of the database.

Next, we need a tool to generate CDC events in real time from PostgreSQL. There are a few options and, as you may have guessed, Confluent Cloud has a built-in and fully managed PostgreSQL CDC source connector based on Debezium’s open-source connector. This connector is specifically designed to monitor row-level changes after an initial snapshot and write the output to Confluent Cloud topics. Capturing events this way is convenient and gives you a production-quality data flow with built-in support and availability.

Confluent Cloud is also a great choice for storing real-time CDC events. While there are multiple benefits to using Confluent Cloud, the most important is the reduction in operational burden. Without Confluent Cloud, you would spend weeks getting a Kafka cluster stood up, months understanding and implementing proper security, and then dedicate several folks to maintaining it indefinitely. With Confluent Cloud, you can have all of that in a matter of minutes with a credit card and a web browser. You can learn more about Confluent vs. Kafka over on Confluent’s website.

Last, but by no means least, Rockset can be configured to read from Confluent Cloud topics and process CDC events into a collection that looks very much like our source table. Rockset brings three key features to the table when it comes to handling CDC events.

- Rockset integrates with several sources as part of the managed service (including DynamoDB and MongoDB). Similar to Confluent’s managed PostgreSQL CDC connector, Rockset has a managed integration with Confluent Cloud. With a basic understanding of your source model, like the primary key for each table, you have everything you need to process these events.

- Rockset also uses a schemaless ingestion model that allows data to evolve without breaking anything. If you are interested in the details, we’ve been schemaless since 2019, as covered in an earlier blog post. This is crucial for CDC data, as new attributes are inevitable and you don’t want to spend time updating your pipeline or postponing application changes.

- Rockset’s Converged Index™ is fully mutable, which gives Rockset the ability to handle changes to existing records in the same way the source database would, usually an upsert or delete operation. This gives Rockset a unique advantage over other highly indexed systems that require heavy lifting to make any changes, typically involving significant reprocessing and reindexing steps.

Databases and data warehouses without these features often have elongated ETL or ELT pipelines that increase data latency and complexity. Rockset generally maps 1 to 1 between source and target objects, with little or no need for complex transformations. I’ve always believed that if you can draw the architecture, you can build it. The design drawing for this architecture is both elegant and simple. Below you’ll find the design for this tutorial, which is completely production ready. I’m going to break the tutorial up into two main sections: setting up Confluent Cloud and setting up Rockset.

Streaming Things With Confluent Cloud

The first step in our tutorial is configuring Confluent Cloud to capture our change data from PostgreSQL. If you don’t already have an account, getting started with Confluent is free and easy. Additionally, Confluent already has a well-documented tutorial for setting up the PostgreSQL CDC connector in Confluent Cloud. There are a few notable configuration details to highlight:

- Rockset can process events whether “after.state.only” is set to “true” or “false”. For our purposes, the remainder of the tutorial will assume it’s “true”, which is the default.

- “output.data.format” needs to be set to either “JSON” or “AVRO”. Currently Rockset doesn’t support “PROTOBUF” or “JSON_SR”. If you are not bound to using Schema Registry and you’re just setting this up for Rockset, “JSON” is the easiest approach.

- Set “Tombstones on delete” to “false”. This will reduce noise, as we only need the single delete event to properly delete in Rockset.

- I also had to set the table’s replica identity to “full” in order for deletes to work as expected, but this might already be configured on your database.

    ALTER TABLE cdc.demo.events REPLICA IDENTITY FULL;

- If you have tables with high-frequency changes, consider dedicating a single connector to them, since “tasks.max” is limited to 1 per connector. The connector, by default, monitors all non-system tables, so make sure to use “table.includelist” if you want a subset per connector.
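The settings above can be sanity-checked before you submit a connector configuration. The following Python sketch is only an illustration: the helper `check_connector_settings` is hypothetical, and the key `tombstones.on.delete` is assumed to be the property behind the “Tombstones on delete” option, so confirm the exact names against Confluent’s connector documentation.

```python
from typing import Dict, List

# Formats this tutorial says Rockset can read from Confluent Cloud.
SUPPORTED_FORMATS = {"JSON", "AVRO"}


def check_connector_settings(cfg: Dict[str, str]) -> List[str]:
    """Return a list of human-readable problems; an empty list means no issues found.

    Hypothetical helper: it only checks the handful of settings discussed above
    and treats a missing key as "not set".
    """
    problems: List[str] = []

    fmt = cfg.get("output.data.format", "")
    if fmt.upper() not in SUPPORTED_FORMATS:
        problems.append(f"output.data.format is {fmt!r}; use JSON or AVRO")

    # Assumed property name for the "Tombstones on delete" option.
    if cfg.get("tombstones.on.delete", "").lower() != "false":
        problems.append("tombstones.on.delete should be false to reduce noise")

    if cfg.get("tasks.max", "1") != "1":
        problems.append("tasks.max is limited to 1 per connector")

    return problems


good = {
    "output.data.format": "JSON",
    "after.state.only": "true",
    "tombstones.on.delete": "false",
    "tasks.max": "1",
}
print(check_connector_settings(good))  # []
```

A check like this is cheap to run in a deployment script, and it keeps the Rockset-specific constraints in one place if you manage several connectors.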

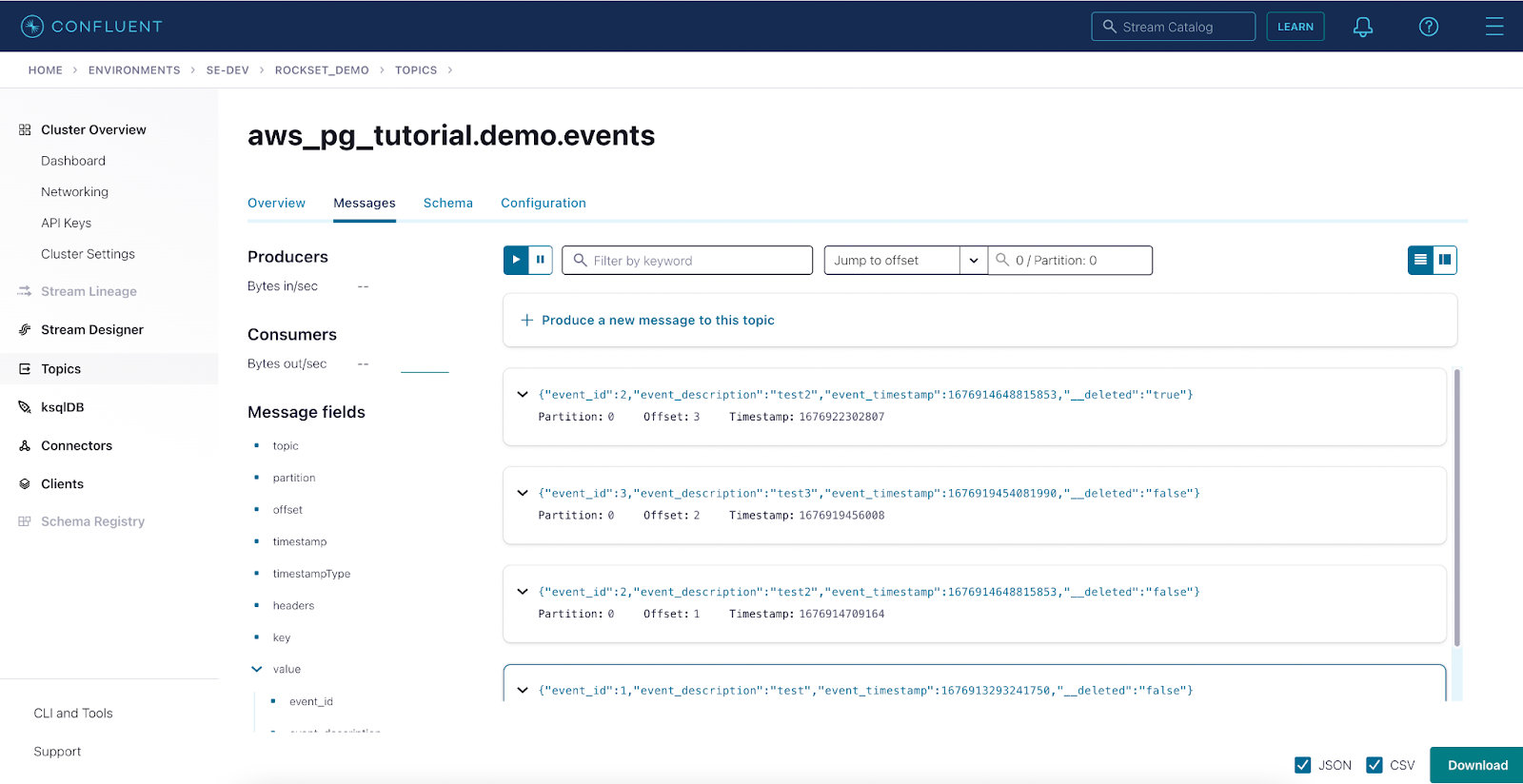

There are other settings that may be important to your environment but shouldn’t affect the interaction between Rockset and Confluent Cloud. If you do run into issues between PostgreSQL and Confluent Cloud, it’s likely either a gap in the logging setup on PostgreSQL, permissions on either system, or networking. While it’s difficult to troubleshoot via blog, my best recommendation is to review the documentation and contact Confluent support. If you have done everything correctly up to this point, you should see data like this in Confluent Cloud:

Real Time With Rockset

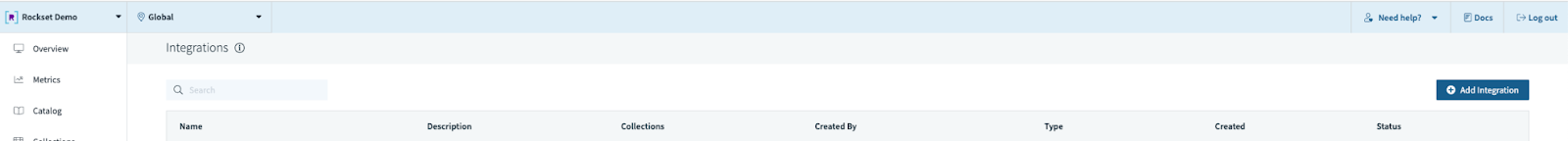

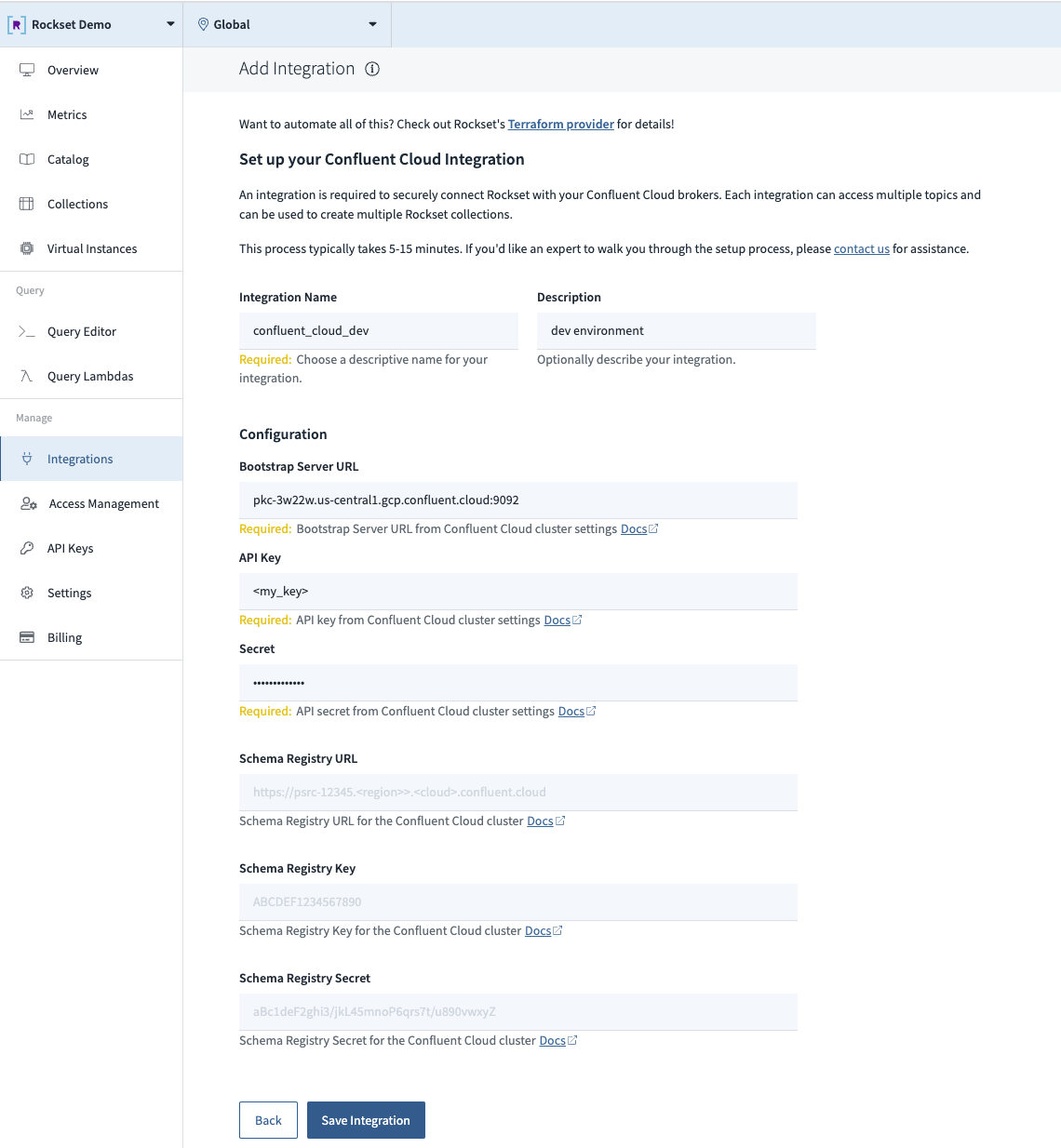

Now that PostgreSQL CDC events are flowing through Confluent Cloud, it’s time to configure Rockset to consume and process those events. The good news is that it’s just as easy to set up an integration to Confluent Cloud as it was to set up the PostgreSQL CDC connector. Start by creating a Rockset integration to Confluent Cloud using the console. This can also be done programmatically using our REST API or Terraform provider, but those examples are less visually stunning.

Step 1. Add a new integration.

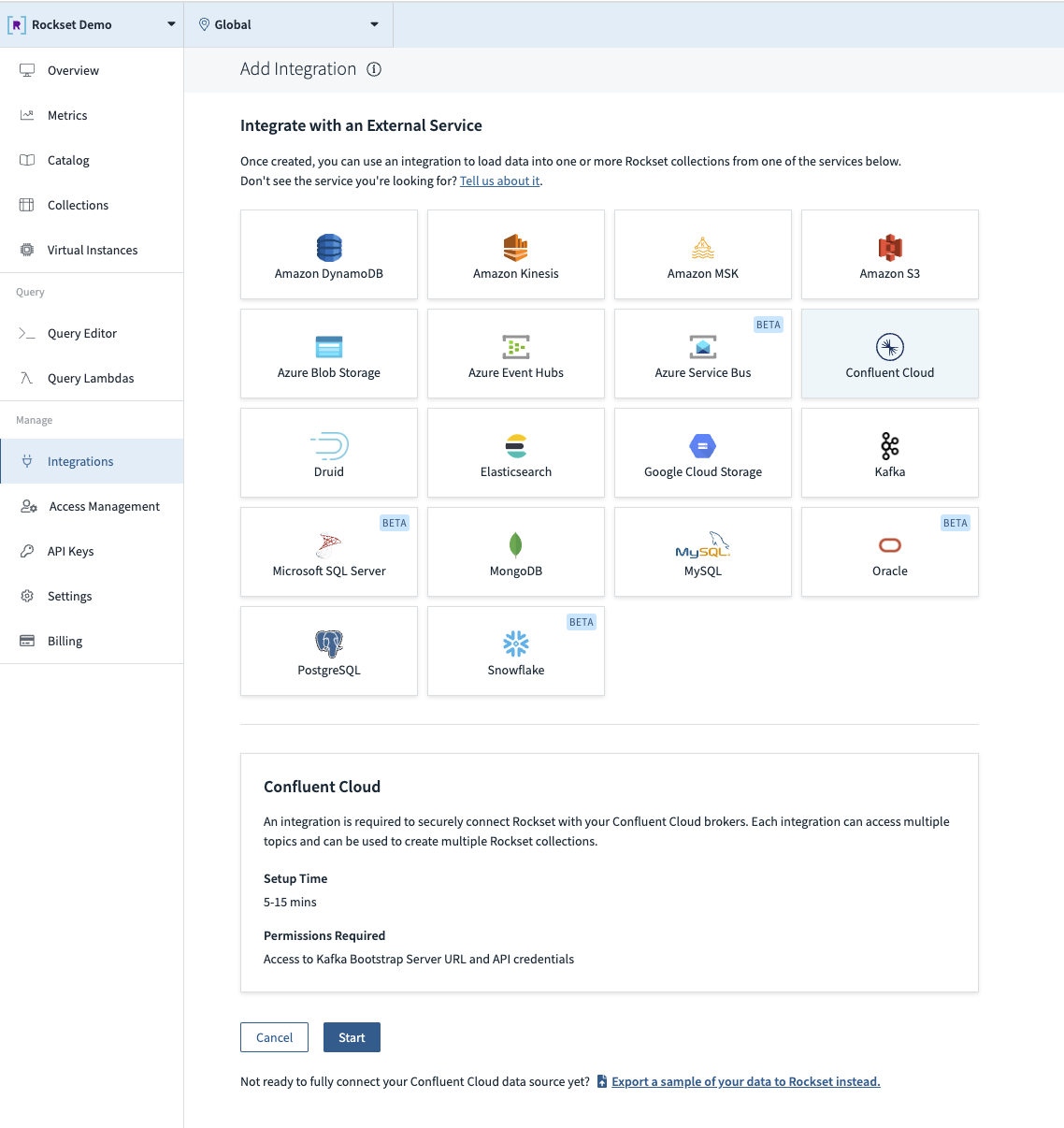

Step 2. Select the Confluent Cloud tile in the catalog.

Step 3. Fill out the configuration fields (including Schema Registry if using Avro).

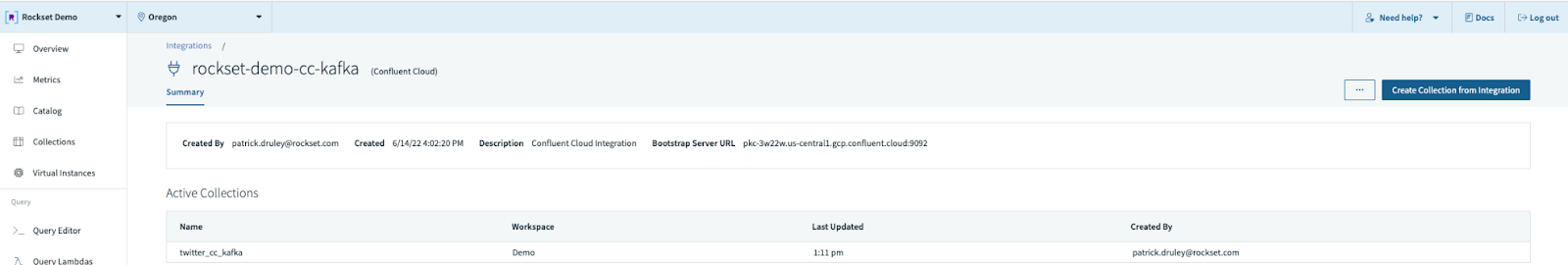

Step 4. Create a new collection from this integration.

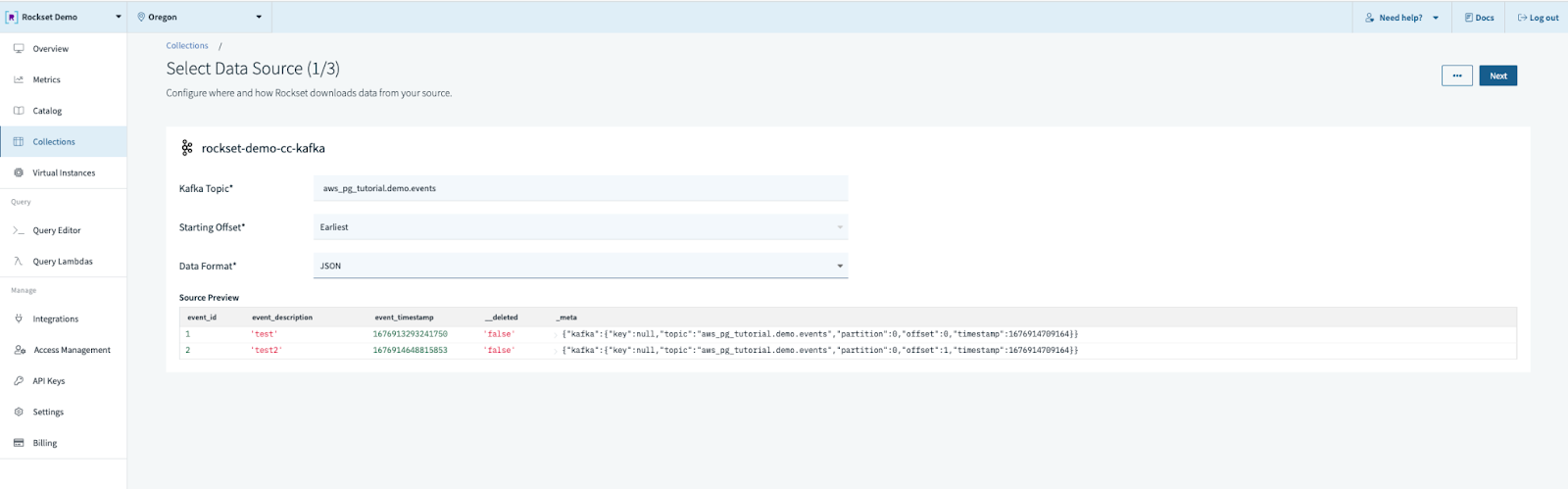

Step 5. Fill out the data source configuration.

- Topic name

- Starting offset (we recommend earliest if the topic is relatively small or static)

- Data format (ours will be JSON)

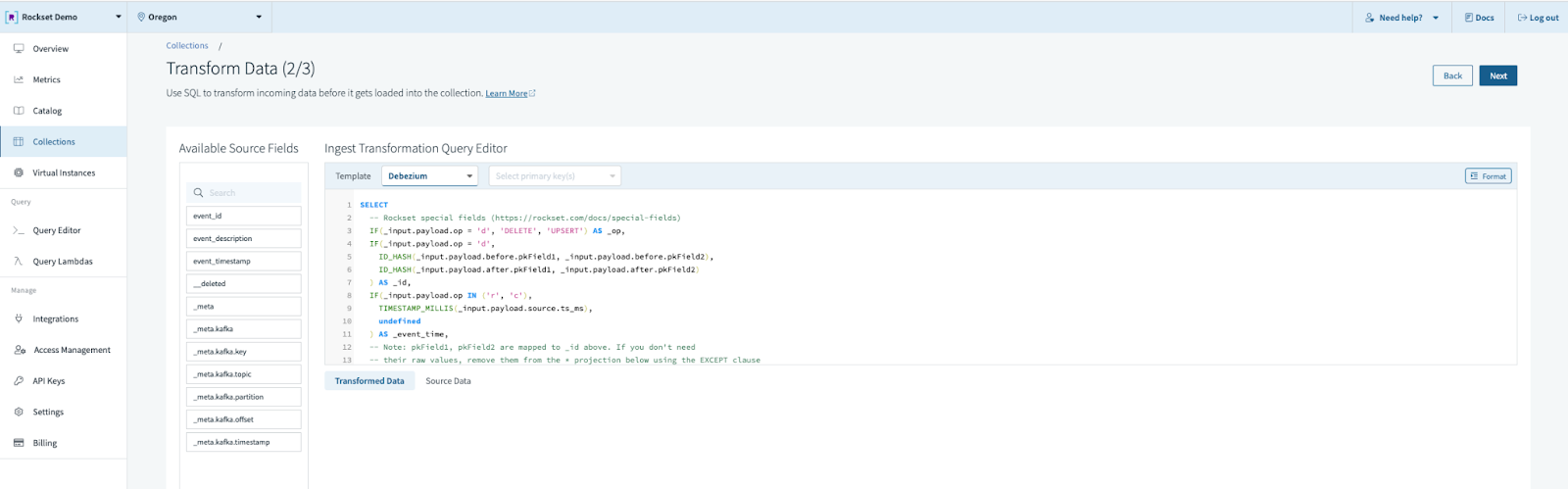

Step 6. Choose the “Debezium” template in “CDC formats” and select “primary key”. The default Debezium template assumes we have both a before and after image. In our case we don’t, so the actual SQL transformation will be similar to this:

    SELECT
      IF(_input.__deleted = 'true', 'DELETE', 'UPSERT') AS _op,
      CAST(_input.event_id AS string) AS _id,
      TIMESTAMP_MICROS(CAST(_input.event_timestamp AS int)) AS event_timestamp,
      _input.* EXCEPT(event_id, event_timestamp, __deleted)
    FROM _input

Rockset has template support for many common CDC events, and we even have specialized codes for “_op” to suit your needs. In our example we are only concerned with deletes; we treat everything else as an upsert.
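For readers who think more easily in code than in SQL, here is a rough Python emulation of what the transformation does to each event and how the resulting “_op” drives the collection. It is a sketch, not Rockset’s implementation: the functions `transform` and `apply`, the in-memory `collection` dictionary, and the `payload` field are all assumptions for illustration, while `event_id`, `event_timestamp`, and `__deleted` come from the SQL above.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
DROPPED = ("event_id", "event_timestamp", "__deleted")  # mirrors EXCEPT(...)


def transform(event: Dict[str, Any]) -> Dict[str, Any]:
    """Mirror the SELECT list: derive _op and _id, convert the timestamp, keep the rest."""
    doc = {k: v for k, v in event.items() if k not in DROPPED}
    doc["_op"] = "DELETE" if event.get("__deleted") == "true" else "UPSERT"
    doc["_id"] = str(event["event_id"])
    # event_timestamp is assumed to be microseconds since the Unix epoch.
    doc["event_timestamp"] = EPOCH + timedelta(microseconds=int(event["event_timestamp"]))
    return doc


def apply(collection: Dict[str, Dict[str, Any]], doc: Dict[str, Any]) -> None:
    """Apply one transformed document to a toy collection keyed by _id."""
    if doc["_op"] == "DELETE":
        collection.pop(doc["_id"], None)
    else:
        collection[doc["_id"]] = {k: v for k, v in doc.items() if k != "_op"}


events = [
    {"event_id": 1, "event_timestamp": 1_700_000_000_000_000, "__deleted": "false", "payload": "a"},
    {"event_id": 2, "event_timestamp": 1_700_000_001_000_000, "__deleted": "false", "payload": "b"},
    {"event_id": 1, "event_timestamp": 1_700_000_002_000_000, "__deleted": "true", "payload": "a"},
]
collection: Dict[str, Dict[str, Any]] = {}
for event in events:
    apply(collection, transform(event))
print(sorted(collection))  # ['2']
```

Because the document key is derived from the source primary key, a later event for the same row replaces or removes the earlier document, which is what keeps the collection aligned with the PostgreSQL table.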

Step 7. Fill out the workspace, name, and description, and choose a retention policy. For this style of CDC materialization we should set the retention policy to “Keep all documents”.

Once the collection state says “Ready”, you can start running queries. In just a few minutes you have set up a collection that mimics your PostgreSQL table, automatically stays updated with just 1-2 seconds of data latency, and is able to run millisecond-latency queries.

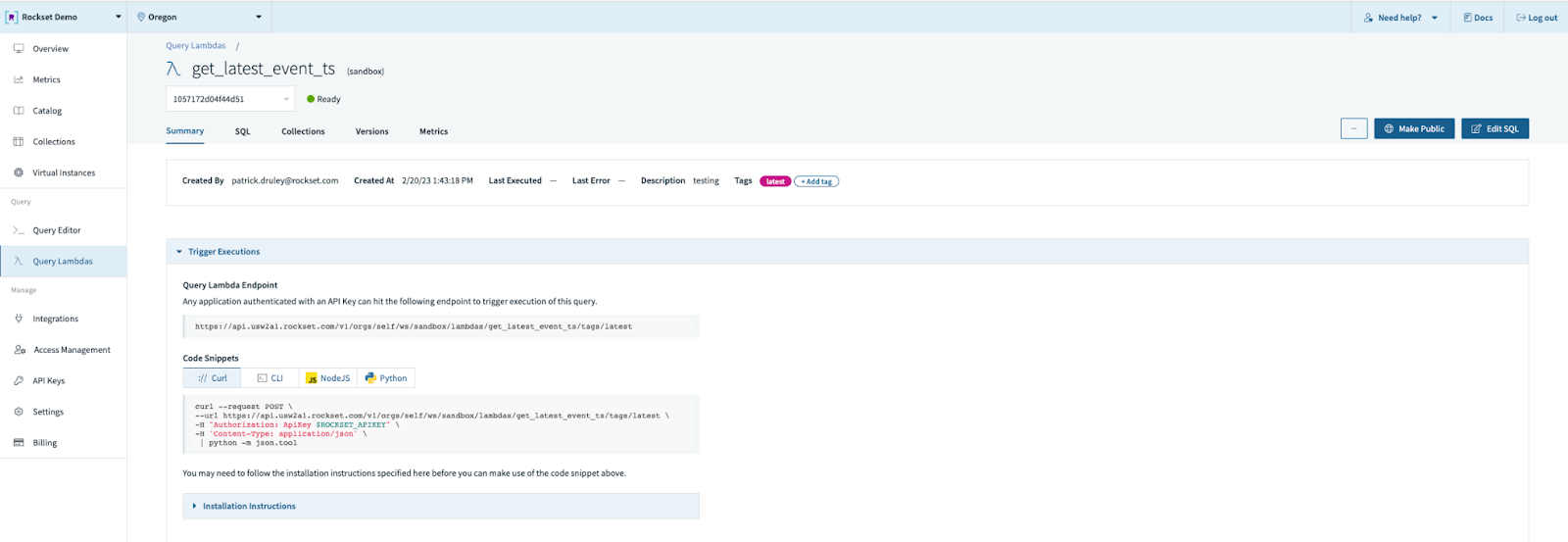

Speaking of queries, you can also turn your query into a Query Lambda, which is a managed query service. Simply write your query in the query editor, save it as a Query Lambda, and now you can run that query via a REST endpoint managed by Rockset. We’ll track changes to the query over time using versions, and even report on metrics for both frequency and latency over time. It’s a way to turn your data-as-a-service mindset into a query-as-a-service mindset without the burden of building out your own SQL generation and API layer.

The Amazing Database Race

As an amateur herpetologist and general fan of biology, I find technology follows a similar process of evolution through natural selection. Of course, in the case of things like databases, the “natural” part can sometimes seem a bit “unnatural”. Early databases were strict in terms of format and structure but quite predictable in terms of performance. Later, during the Big Data craze, we relaxed the structure and spawned a branch of NoSQL databases known for their loosey-goosey approach to data models and lackluster performance. Today, many companies have embraced real-time decision making as a core business strategy and are looking for something that combines both performance and flexibility to power their real-time decision-making ecosystem.

Fortunately, like the fish with legs that would eventually become an amphibian, Rockset and Confluent have risen from the sea of batch and onto the land of real time. Rockset’s ability to handle high-frequency ingestion, a variety of data models, and interactive query workloads makes it unique, the first in a new species of databases that will become ever more common. Confluent has become the enterprise standard for real-time data streaming with Kafka and event-driven architectures. Together, they provide a real-time CDC analytics pipeline that requires zero code and zero infrastructure to manage. This allows you to focus on the applications and services that drive your business and quickly derive value from your data.

You can get started today with a free trial for both Confluent Cloud and Rockset. New Confluent Cloud signups receive $400 to spend during their first 30 days, with no credit card required. Rockset has a similar deal: $300 in credit and no credit card required.